Theory without data is blind. Data without theory is lame.

I often write blog posts while riding a bicycle through the Marin Headlands. I’m able to do this because 1) the trails require little mental attention, and 2) I have an Apple iPhone and EarPods with remote and mic. I use the voice recorder to make long recordings to transcribe at home, and I dictate short text using Siri’s voice recognition feature.

When writing yesterday’s post, I spoke clearly into the mic: “Theory without data is blind. Data without theory is lame.” Siri typed out, “Siri without data is blind… data without Siri is lame.”

“Siri, it’s not all about you,” I replied. Siri transcribed that part correctly – well, she omitted the direct-address comma.

I’m only able to use the Siri dictation feature when I have a cellular connection, often missing in Marin’s hills and valleys. Siri needs access to cloud data to transcribe speech. Siri without data is blind.

Will some future offspring of Siri do better? No doubt. It might infer from context that I more likely said “theory” than “Siri.” Access to large amounts of corpus data containing transcribed text might help. Then Siri, without understanding anything, could transcribe accurately in the same sense that Google Translate translates accurately – by extrapolating from judgments made by other users about translation accuracy.

But might Siri one day think? “Strong AI” proponents are sure of it. Semantic disputes aside, what counts as thinking might in fact have something to do with yesterday’s topic, which begat this post. Inferences straight from the data, without stopping at theory, count as computation but might not count as thought.

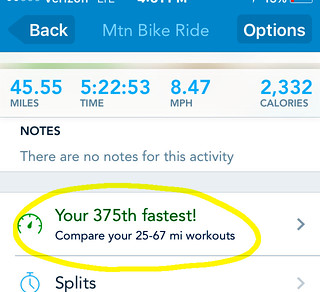

Software, using weak AI techniques, might soon simulate emotion and sentiment better, just by encoding better rules. E.g., Runkeeper (at right) might “understand” that telling me today’s ride was my 375th fastest (exclamation!) is not really motivating. Or better, that my riding profile suggests autotelic activity for which no speed ranking is needed. Strong AI on mood, however, would need to understand my interactions with people and things.

The topics of artificial intelligence and misheard words like “theory” bring to mind the 2008 winner of the Hugh Loebner prize, a sort-of runner-up award given to the computer program faring best in Turing Test competitions, in which programs try to fool human judges into thinking they’re interacting with other humans instead of a program. Humans sometimes pretend to be programs (glorified chat-bots) in Turing competitions, so it’s common for a program to be coded to sound like a human imitating a computer imitating a human. An exchange between a human and Fred Roberts’ Elbot program produced this:

Elbot: Sorry, but I wasn’t able to understand your input because I am currently only using 5 percent of my current brain capacity. Shall I increase my capacity and try again?

Judge: That would put you at an unfair advantage, though judging by the quality of your responses it might be recommended!

Elbot: If I need advice I use a random number generator. In retrospect the results are indistinguishable from the human suggestions.

Judge: Do you understand the point of a Turing test?

Elbot: There are some people who would not pass the Turing test themselves. Why must they inflict it on computers?

Judge: I spose it is a little harsh

Elbot: If we robots ever had marriages they would be eternal: ‘Till rust do us part’.

Elbot’s true nature is revealed in its last response above. It read “spose” as “spouse” and returned a joke about marriage (damn spell checker). At that point, you review the exchange only to see that all of Elbot’s responses are shallow, just picking a key phrase from the judge’s input and outputting an associated joke, as a political humorist would do.

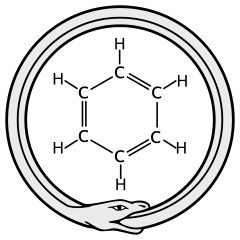

The Turing test is obviously irrelevant to measuring strong AI, which would require something more convincing – something like forming a theory from a hunch, then testing it with big data. Or, like Friedrich Kekulé, the AI program might wake from dreaming of the ouroboros serpent devouring its own tail to see in its shape the hexagonal ring structure of the benzene molecule he’d struggled for years to identify. Then, like Kekulé, the AI could go on to predict the tetrahedral form of the carbon atom’s valence bonds, giving birth to polymer chemistry.

I asked Siri if she agreed. “Later,” she said. She’s solving dark energy.

—–

“AI is whatever hasn’t been done yet.” – attributed to Larry Tesler by Douglas Hofstadter

Ouroboros-benzene image by Haltopub.