Bill Storage


“He Tied His Lace” – Rum, Grenades and Bayesian Reasoning in Peaky Blinders

Posted in Probability and Risk on August 4, 2025

“He tied his lace.” Spoken by a jittery subordinate halfway through a confrontation, the line turns a scene in Peaky Blinders from stylized gangster drama into a live demonstration of Bayesian belief update. The scene is a tightly written jewel of deadpan absurdity.

(The video clip and a script excerpt from Season 2, Episode 6 appear at the bottom of this article – rough language. “Peaky blinders,” for what it’s worth, refers to young Brits in blindingly dapper duds and peaked caps in the 1920s.)

The setup: Alfie Solomons has temporarily switched his alliance from Tommy Shelby to Darby Sabini, a rival Italian gangster, in exchange for his bookies being allowed at the Epsom Races. Alfie then betrayed Tommy by setting up Tommy’s brother Arthur and having him arrested for murder. But Sabini broke his promise to Alfie, causing Alfie to seek a new deal with Tommy. Now Tommy offers 20% of his bookie business. Alfie wants 100%. In the ensuing disagreement, Alfie’s man Ollie threatens to shoot Tommy unless Alfie’s terms are met.

Tommy then offers up a preposterous threat. He claims to have planted a grenade and wired it to explode if he doesn’t walk out the door by 7pm. The lynchpin of this claim? That he bent down to tie his shoe on the way in, thereby concealing his planting the grenade among Alfie’s highly flammable bootleg rum kegs. Ollie falls apart when, during the negotiations, he recalls seeing Tommy tie his shoe on the way in. “He tied his lace,” he mutters frantically.

In another setting, this might be just a throwaway line. But here, it’s the final evidence given in a series of Bayesian belief updates – an ambiguous detail that forces a final shift in belief. This is classic Bayesian decision theory with sequential Bayesian inference, dynamic belief updates, and cost asymmetry. Agents update their subjective probability (posterior) based on new evidence and choose an action to maximize expected utility.

By the end of the negotiation, Alfie’s offering a compromise. What changes is not the balance of lethality or legality, but this sequence of increasingly credible signals that Tommy might just carry through on the threat in response to Alfie’s demands.

As evidence accumulates – some verbal, some circumstantial – Alfie revises his belief, lowers his demands, and eventually accepts a deal that reflects the posterior probability that Tommy is telling the truth. It’s Bayesian updating with combustible rum, thick Cockney accents, and death threats delivered with stony precision.

Bayesian belief updating involves (see also *):

- Prior belief (P(H)): Initial credence in a hypothesis (e.g., “Tommy is bluffing”).

- Evidence (E): New information (e.g., a credible threat of violence, or a revealed inconsistency).

- Likelihood (P(E|H)): How likely the evidence is if the hypothesis is true.

- Posterior belief (P(H|E)): Updated belief in the hypothesis given the evidence.
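In symbols, these four pieces combine through Bayes’ theorem. The form below assumes only two competing hypotheses, H and ¬H, which is a simplification chosen here to match the bluffing/not-bluffing framing:

$$P(H \mid E) = \frac{P(E \mid H)\,P(H)}{P(E \mid H)\,P(H) + P(E \mid \neg H)\,P(\neg H)}$$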

In Peaky Blinders, the characters have beliefs about each other’s natures, e.g., ruthless, crazy, bluffing.

The Exchange as Bayesian Negotiation

Initial Offer – 20% (Tommy)

This reflects Tommy’s belief that Alfie will find the offer worthwhile given legal backing and mutual benefits (safe rum shipping). He assumes Alfie is rational and profit-oriented.

Alfie’s Counter – 100%

Alfie reveals a much higher demand with a threat attached (Ollie + gun). He’s signaling that he thinks Tommy has little to no leverage – a strong prior that Tommy is bluffing or weak.

Tommy’s Threat – Grenade

Tommy introduces new evidence: a possible suicide mission, planted grenade, anarchist partner. Alfie must now update his beliefs:

- What is the probability Tommy is bluffing?

- What’s the chance the grenade exists and is armed?

Ollie’s Confirmation – “He tied his lace…”

This is independent corroborating evidence – evidence of something anyway. Alfie now receives a report that raises the likelihood Tommy’s story is true (taking H here as “the grenade story is true,” P(E|¬H) drops, P(E|H) rises). So Alfie updates his belief in Tommy’s credibility, lowering his confidence that he can push for 100%.

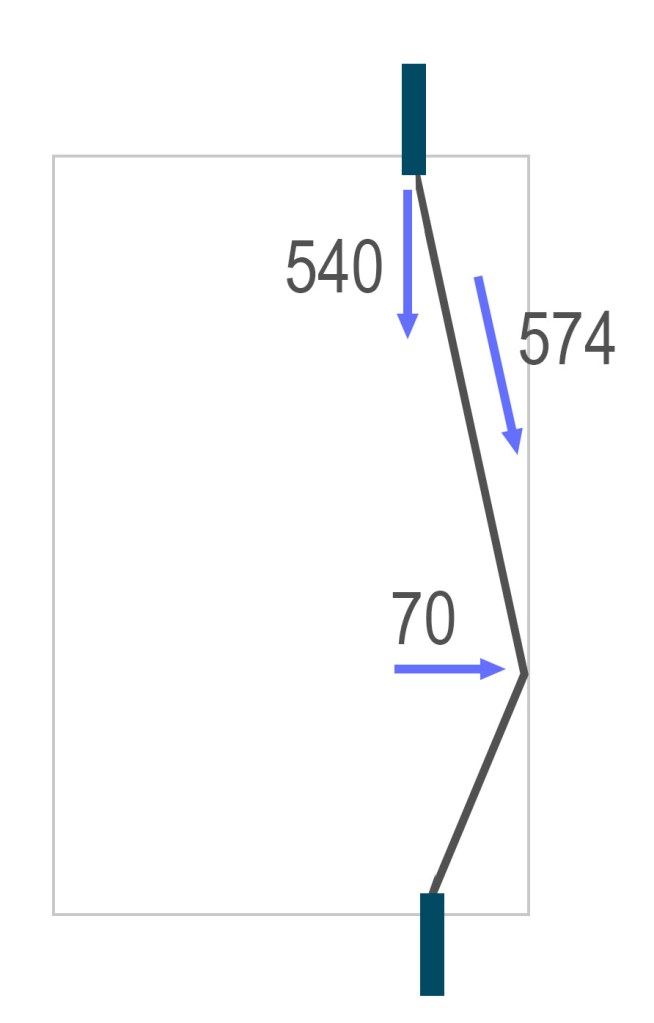

The offer history, which tracks their priors and posteriors:

- Alfie lowers from 100% → 65% (“I’ll bet 100 to 1”)

- Tommy rejects

- Alfie considers Tommy’s past form (“he blew up his own pub”)

This shifts the prior. Now P(Tommy is reckless and serious) is higher.

- Alfie: 65% → 45%

- Tommy: Counters with 30%

- Tommy adds detail: WWI tunneling expertise, same grenade kit, he blew up a mine

- Alfie checks for inconsistency (“I heard they all got buried”)

Potential Bayesian disconfirmation. Is Tommy lying?

- Tommy: “Three of us dug ourselves out” → resolves inconsistency

The model regains internal coherence. Alfie’s posterior belief in the truth of the grenade story rises again.

- Final offer: 35%

They settle, each having adjusted credence in the other’s threat profile and willingness to follow through.

Analysis

Beliefs are not static. Each new statement, action, or contradiction causes belief shifts. Updates are directional, not precise. No character says “I now assign 65% chance…” but, since they are rational actors, their offers directly encode these shifts in valuation. We see behaviorally expressed priors and posteriors. Alfie’s movement from 100 to 65 to 45 to 35% is not arbitrary. It reflects updates in how much control he believes he has.

Credibility is a Bayesian variable. Tommy’s past (blowing up his own pub) is treated as evidence relevant to present behavior. Social proof is given by Ollie. Ollie panics on recalling that Tommy tied his shoe. Alfie chastises Ollie for being a child in a man’s world and sends him out. But Alfie has already processed this Bayesian evidence for the grenade threat, and Tommy knows it. The 7:00 deadline adds urgency and tension to the scene. Crucially, from a Bayesian perspective, it limits the number of possible belief revisions, a typical constraint for bounded rationality.

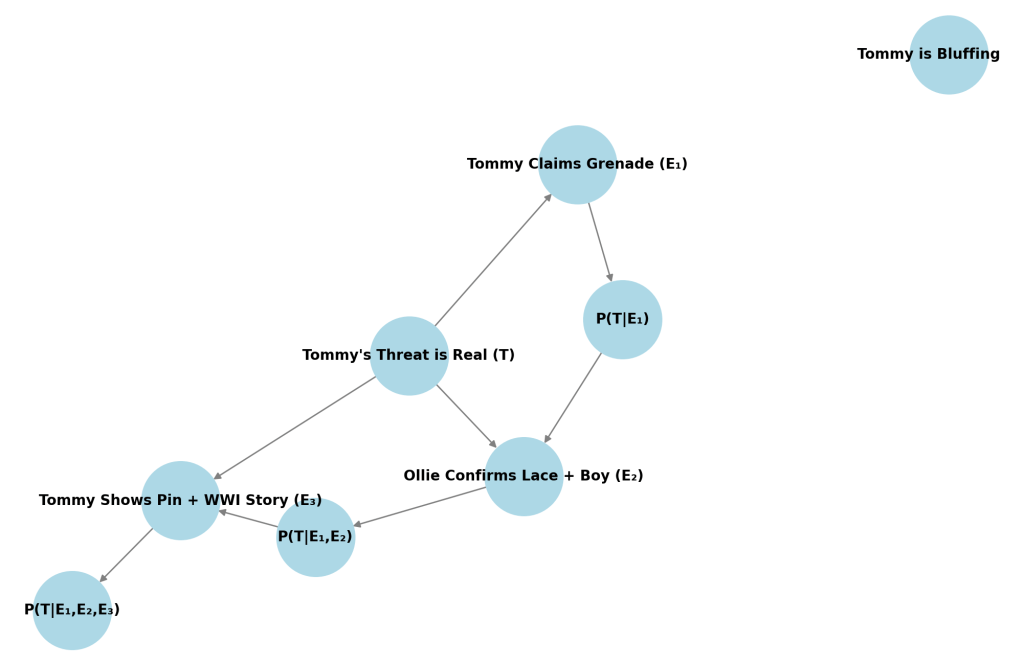

As an initial setup, let:

- T = Tommy has rigged a grenade

- ¬T = Tommy is bluffing

- P(T) = Alfie’s prior that Tommy is serious

Let’s say initially:

P(T) = 0.15, so P(¬T) = 0.85

Alfie starts with a strong prior that Tommy’s bluffing. Most people wouldn’t blow themselves up. Tommy’s a businessman, not a suicide bomber. Alfie has armed men and controls the room.

Sequence of Evidence and Belief Updates

Evidence 1: Tommy’s grenade threat

E₁ = Tommy says he planted a grenade and has an assistant with a tripwire

We assign:

- P(E₁|T) = 1 (he would say so if it’s real)

- P(E₁|¬T) = 0.7 (he might bluff this anyway)

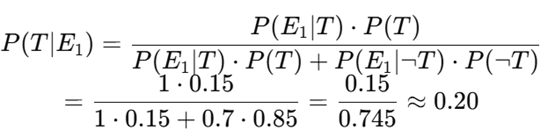

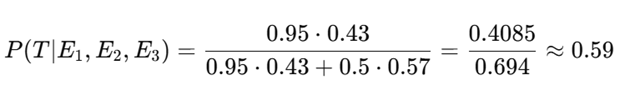

Using Bayes’ Theorem:
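Written out with the values assigned above (a sketch assuming the two-hypothesis form, T versus ¬T):

$$P(T \mid E_1) = \frac{1 \times 0.15}{1 \times 0.15 + 0.7 \times 0.85} = \frac{0.15}{0.745} \approx 0.20$$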

So now Alfie gives a 20% chance Tommy is telling the truth. Behavioral result: Alfie lowers the offer from 100% → 65%.

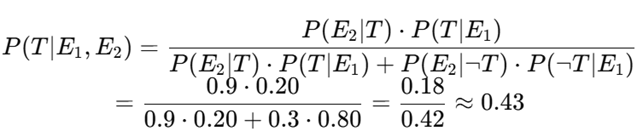

Evidence 2: Ollie confirms the lace-tying + nervousness

E₂ = Ollie confirms Tommy bent down and there’s a boy at the door

This is independent evidence supporting T.

- P(E₂|T) = 0.9 (if it’s true, this would happen)

- P(E₂|¬T) = 0.3 (could be coincidence)

Update:
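Taking the previous posterior, rounded to 0.20, as the new prior, and assuming E₂ is independent of E₁ given T or ¬T, the same formula gives roughly:

$$P(T \mid E_1, E_2) = \frac{0.9 \times 0.20}{0.9 \times 0.20 + 0.3 \times 0.80} = \frac{0.18}{0.42} \approx 0.43$$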

So Alfie now gives 43% probability that the grenade is real. Behavioral result: Offer drops to 45%.

Evidence 3: Tommy shows grenade pin + WWI tunneler claim

E₃ = Tommy drops the pin and references real tunneling experience

- P(E₃|T) = 0.95 (he’d be prepared and have a story)

- P(E₃|¬T) = 0.5 (he might fake this, but riskier)

Update:
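With 0.43 carried forward as the prior, and again assuming the new evidence is conditionally independent of what came before, the arithmetic works out to approximately:

$$P(T \mid E_1, E_2, E_3) = \frac{0.95 \times 0.43}{0.95 \times 0.43 + 0.5 \times 0.57} = \frac{0.4085}{0.6935} \approx 0.59$$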

Now Alfie believes there’s nearly a 60% chance Tommy is serious. Behavioral result: Alfie’s demand drops to 35%, the final deal.
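The three updates can also be chained in a few lines of code, with each posterior feeding in as the next prior. This is only a sketch of the arithmetic above: the names `bayes_update`, `run_updates`, and `EVIDENCE` are made up for illustration, the likelihoods are the ones assigned in the text, and the chaining assumes each piece of evidence is conditionally independent of the others.

```python
def bayes_update(prior, p_e_given_t, p_e_given_not_t):
    """Return P(T|E) from P(T), P(E|T) and P(E|not T) for a binary hypothesis."""
    numerator = p_e_given_t * prior
    evidence = numerator + p_e_given_not_t * (1.0 - prior)
    return numerator / evidence


# (label, P(E|T), P(E|not T)) using the likelihoods assigned in the text
EVIDENCE = [
    ("E1: grenade threat", 1.0, 0.7),
    ("E2: Ollie confirms the lace", 0.9, 0.3),
    ("E3: pin and tunneller story", 0.95, 0.5),
]


def run_updates(prior=0.15):
    """Apply each piece of evidence in turn; each posterior becomes the next prior."""
    beliefs = []
    belief = prior
    for _label, p_t, p_not_t in EVIDENCE:
        belief = bayes_update(belief, p_t, p_not_t)
        beliefs.append(belief)
    return beliefs


posteriors = run_updates()
for (label, _, _), p in zip(EVIDENCE, posteriors):
    print(f"{label}: P(T) = {p:.2f}")
# E1: grenade threat: P(T) = 0.20
# E2: Ollie confirms the lace: P(T) = 0.43
# E3: pin and tunneller story: P(T) = 0.59
```

Carrying the unrounded posterior forward, as the code does, lands on the same two-decimal figures as rounding at each step.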

Simplified Utility Function

Assume Alfie’s utility is:

U(percent) = percent ⋅ V − C ⋅ P(T)

Where:

- V = Value of Tommy’s export business (let’s say 100)

- C = Cost of being blown up (e.g., 1000)

- P(T) = Updated belief Tommy is serious

So for 65%, with P(T) = 0.43:

U = 65 – 1000 ⋅ 0.43 = 65 – 430 = −365

But for 35%, with P(T) = 0.59:

U = 35 – 1000 ⋅ 0.59 = 35 – 590 = −555

Here we should note that Alfie’s utility function is not particularly sensitive to the numerical values of V and C; using C = 10,000 or 500 doesn’t change the relative outcomes much. So, why does Alfie accept the lower utility? Because risk of total loss is also a factor. If the grenade is real, pushing further ends in death and no gain. Alfie’s risk appetite is negatively skewed.
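The two utility figures, and the claim about sensitivity to C, can be checked with a short script. This is a sketch under the article’s own simplifications: `utility` and `share` are illustrative names, the share is expressed as a fraction (0.65 for 65%), and the pairings of share and belief are the ones used in the two calculations above.

```python
def utility(share, p_t, v=100.0, c=1000.0):
    """U = share * V - C * P(T), with share as a fraction of the business."""
    return share * v - c * p_t


# Compare holding at 65% (belief 0.43) with settling at 35% (belief 0.59)
# for the cost values mentioned in the text.
for cost in (500.0, 1000.0, 10000.0):
    hold = utility(0.65, 0.43, c=cost)
    settle = utility(0.35, 0.59, c=cost)
    print(f"C = {cost:>7.0f}: U(65%) = {hold:9.1f}, U(35%) = {settle:9.1f}")
```

With these pairings the gap U(65%) − U(35%) equals 0.30 ⋅ V + 0.16 ⋅ C, which is positive for any positive V and C, so changing C alters the size of the gap but not the ordering.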

At the start of the negotiation, Alfie behaves like someone with low risk aversion by demanding 100%, assuming dominance, and believing Tommy is bluffing. His prior reflects extreme confidence and control. But as the conversation progresses, the downside risk becomes enormous: death, loss of business, and, likely worse, public humiliation.

The evidence increasingly supports the worst-case scenario. There’s no compensating upside for holding firm, no added reward for risking everything to get 65% instead of 35%.

This flips Alfie’s profile. He develops a sharp negative skew in risk appetite, especially under time pressure and mounting evidence. Even though 35% yields a worse expected utility than 65%, it avoids the long tail – catastrophic loss.

***

[Tommy is seated in Alfie’s office]

Alfie (to Tommy): That’ll probably be for you, won’t it?

Tommy: Hello? Arthur. You’re out.

Alfie: Right, so that’ll be your side of the street swept up, won’t it? Where’s mine? What you got for me?

Tommy: Signed by the Minister of the Empire himself. Yeah? So it is.

Tommy: This means that you can put your rum in our shipments, and no one at Poplar Docks will lift a canvas.

Alfie: You know what? I’m not even going to have my lawyer look at that.

Tommy: I know, it’s all legal.

Alfie: You know what, mate, I trust you. That’s that. Done. So, whisky… There is, uh, one thing, though, that we do need to discuss.

Tommy: What would that be?

Alfie: It says here, “20% paid to me of your export business.”

Tommy: As we agreed on the telephone…

Alfie: No, no, no, no, no. See, I’ve had my lawyer draw this up for us, just in case. It says that, here, that 100% of your business goes to me.

Tommy: I see.

Alfie: It’s there.

Tommy: Right.

Alfie: Don’t worry about it, right, because it’s totally legal binding. All you have to do is sign the document and transfer the whole lot over to me.

Tommy: Sign just here, is it?

Alfie: Yeah.

Tommy: I see. That’s funny. That is.

Alfie: What?

Tommy: No, that’s funny. I’ll give you 100% of my business.

Alfie: Yeah.

Tommy: Why?

[Ollie appears and aims a revolver at Tommy]

Alfie: Ollie, no. No, no, no. Put that down. He understands, he understands. He’s a big boy, he knows the road. Now, look, it’s just non-fucking-negotiable. That’s all you need to know. So all you have to do is sign the fucking contract. Right there.

Tommy: Just sign here?

Alfie: With your pen.

Tommy: I understand.

Alfie: Good. Get on with it.

Tommy: Well, I have an associate waiting for me at the door. I know that he looks like a choir boy, but he is actually an anarchist from Kentish Town.

Alfie: Tommy… I’m going to fucking shoot you. All right?

Tommy: Now, when I came in here, Mr. Solomons, I stopped to tie my shoelace. Isn’t that a fact? Ollie?

Tommy: I stopped to tie my shoelace. And while I was doing it, I laid a hand grenade on one of your barrels.

Tommy: Mark 15, with a wired trip. And my friend upstairs… Well, he’s like one of those anarchists that blew up Wall Street, you know? He’s a professional. And he’s in charge of the wire. If I don’t walk out that door on the stroke of 7:00, he’s going to trigger the grenade and… your very combustible rum will blow us all to hell. And I don’t care… because I’m already dead.

Ollie: He tied his lace, Alfie. And there is a kid at the door.

Tommy: From a good family, too. Ollie, it’s shocking what they become…

Alfie (to Ollie): What were you doing when this happened?

Ollie: He tied his lace, nothing else.

Alfie: Yeah, but what were you doing?

Ollie: I was marking the runners in the paper.

Alfie: What are you doing?

Tommy: Just checking the time. Carry on.

Alfie: Right, Ollie, I want you to go outside, yeah, and shoot that boy in the face – from the good family, all right?

Tommy: Anyone walks through that door except me, he blows the grenade.

Ollie: He tied his fucking lace…

Tommy: I did tie my lace.

Alfie: I bet, 100 to 1, you’re fucking lying, mate. That’s my money.

Tommy: Well, see, you’ve failed to consider the form. I did blow up me own pub… for the insurance.

Alfie: OK right… Well, considering the form, I would say 65 to 1. Very good odds. And I would be more than happy and agree if you were to sign over 65% of your business to me. Thank you.

Tommy: Sixty-five? No deal.

Alfie: Ollie, what do you say?

Ollie: Jesus Christ, Alfie. He tied his fucking lace, I saw him! He planted a grenade, I know he did. Alfie, it’s Tommy fucking Shelby…

[Alfie smacks Ollie across the face, grabs him by the collar, pulls him close and looks straight into his face.]

Alfie to Ollie: You’re behaving like a fucking child. This is a man’s world. Take your apron off, and sit in the corner like a little boy. Fuck off. Now.

Tommy: Four minutes.

Alfie: All right, four minutes. Talk to me about hand grenades.

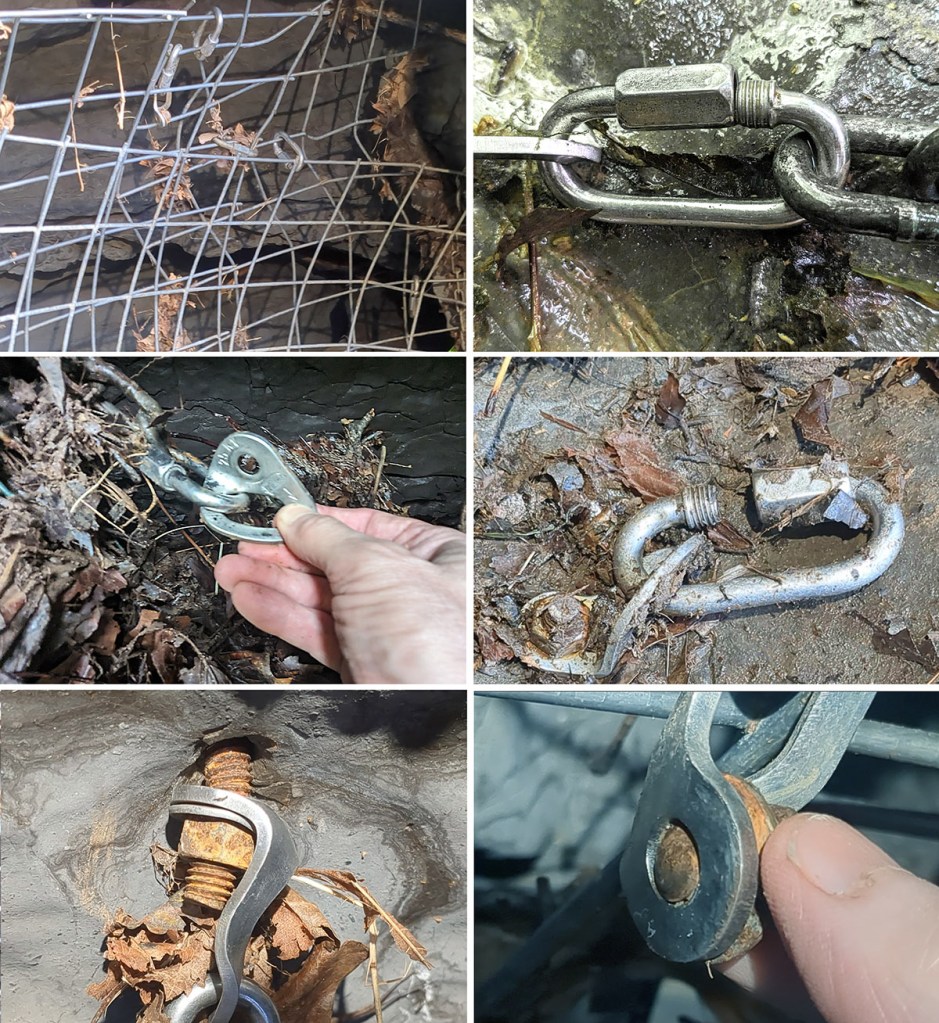

Tommy: The chalk mark on the barrel, at knee height. It’s a Hamilton Christmas. I took out the pin and put it on the wire.

[Tommy produces a pin from his pocket and drops it on the table. Alfie inspects it.]

Alfie: Based on this… forty-five percent. [of Tommy’s business]

Tommy: Thirty.

Alfie: Oh, fuck off, Tommy. That’s far too little.

Tommy: In France, Mr. Solomons, while I was a tunneller, a clay-kicker. 179. I blew up Schwabenhöhe. Same kit I’m using today.

Alfie: It’s funny, that. I do know the 179. And I heard they all got buried.

[Alfie looks at Tommy as though he has caught him in an inconsistency]

Tommy: Three of us dug ourselves out.

Alfie: Like you’re digging yourself out now?

Tommy: Like I’m digging now.

Alfie: Fuck me. Listen, I’ll give you 35%. That’s your lot.

Tommy: Thirty-five.

[Tommy and Alfie shake hands. Tommy leaves.]

Gospel of Mark: A Masterpiece Misunderstood, Part 7 – Mark Before Modernism

Posted in History of Christianity on August 3, 2025

See Part 1, Part 2, Part 3, Part 4, Part 5, Part 6

In ancient Greek theater, like Sophocles’s Oedipus Rex, dramatic irony was central. Audiences knew Oedipus’s fate while he remained ignorant. This technique was carried into Roman drama, like Seneca’s tragedies. As described earlier, Christian writers moved away from irony in the late antique period.

During the Renaissance, Shakespeare used dramatic irony heavily. In Romeo and Juliet, the audience knows Juliet’s “death” is staged, but Romeo doesn’t. Such irony remained common in 17th- and 18th-century European drama, as in Molière’s comedies, but less structurally central than in Greek tragedy. The 19th century saw it in melodrama and novels (e.g., Thomas Hardy’s Tess of the d’Urbervilles), where readers grasped fates characters couldn’t.

In the 20th century, dramatic irony shifted. Modernist works like Brecht’s epic theater used it deliberately to alienate audiences, encouraging critical reflection. O’Neill’s plays (Long Day’s Journey into Night) leaned on it for emotional weight.

The Gospel of Mark seems to anticipate literary modernism. Mark didn’t invent stream of consciousness or set his gospel in a world of urban alienation. But the instincts of modernist storytelling – deliberate ambiguity, refusal to explain, the layering of voices, the elevation of reader above character, the fragmentary sense of time – are already alive in Mark. They are what make the gospel feel so strange to readers trained on the smoother harmonies of Matthew and Luke. In literary style, Mark seems to reach both far back, to the ancient Greeks, and far ahead, to modernism. He writes more as dramatist than as evangelist, putting him in unexpected company.

Withheld Meaning: Proust’s Readers and Mark’s

Modernist literature often refuses to say what it means. It circles themes without resolving them. It trusts the reader to infer. Mark gives riddles disguised as parables, miracles that aren’t explained, and a resurrection that isn’t shown. Not glory, but silence.

In Swann’s Way, Proust captures this same dynamic, not in plot, but in psychological structure. Swann, obsessively reading the behavior of the woman he loves, becomes a figure of frustrated interpretation:

“He belonged to that class of men who… are capable of discovering in the most insignificant action a symbol, a menace, a piece of evidence, and who are no more capable of not interpreting a movement of the person they love than a believer is of not interpreting a miracle.”

There’s the reader Mark aimed for, watching every detail, looking for signs.

Beckett and the Failed Witness

Beckett’s characters, like Vladimir and Estragon in Waiting for Godot and Winnie in Happy Days, are excluded from understanding. They wait for voices that don’t explain, and they continue despite knowing the endpoint will never come.

Vladimir (Waiting for Godot): Suppose we repented.

Estragon: Repented what?

Vladimir: Oh… (He reflects.) We wouldn’t have to go into the details.

Estragon: Our being born?

In Mark, the reader continues after the characters collapse. The women flee the tomb. The disciples abandon the frame. The gospel stops, but the reader continues – because Mark has structured the story so that you see what they don’t.

Beckett once said that Joyce was always adding to his prose, and that he himself was working in the opposite direction: “I realized that my own way was in impoverishment, in lack of knowledge and in taking away.”

Mark takes away. He subtracts resurrection appearances and erases resolution. What remains is a void that insists on meaning – not through declaration, but through the reader’s isolation.

Unreliable Perception and Faulkner’s Disciples

In Faulkner’s works like The Sound and the Fury and As I Lay Dying, characters narrate their experiences through fragmented, subjective lenses, often unaware of the full scope of their stories. Their voices – Quentin Compson’s anguished stream-of-consciousness or Addie Bundren’s posthumous reflections – clash and contradict, leaving gaps that the reader must navigate. This aligns with reader-response criticism, which emphasizes the reader’s active role in interpreting and reconstructing meaning from incomplete or biased accounts. Faulkner’s narrators don’t deliver a tidy “truth”; they offer perspectives clouded by personal trauma, guilt, or limited understanding. Quentin, for instance, obsesses over time and his sister Caddy’s fall, but his mental collapse distorts his narrative, forcing the reader to piece together the Compson family’s decay from his fractured memories and those of his brothers.

Faulkner’s unreliable narrators force the reader to rise above their limitations, synthesizing disparate voices to uncover a truth that no single character fully grasps.

Mark gives us the same through the disciples. They speak, but they are not to be trusted. They fear Jesus’s passion predictions and change the subject. And unlike Luke, Mark never rehabilitates them.

As with Faulkner, their unreliability is a device. Mark lets them fall so you can rise, just as Faulkner allows Quentin’s breakdown to weave time, memory, and guilt into the fabric of the narrative. Faulkner’s chaos of competing voices reflects the human condition – fragmented, subjective, and burdened by history. In Mark, the disciples’ failures underscore the radical nature of Jesus’s mission, which defies human expectations of power and glory.

Beckett on the Death of the Subject

Samuel Beckett, writing on Proust in 1931, described the modern condition as a crisis, not of plot, but of self:

“We are not merely more weary because of yesterday, we are other, no longer what we were before the calamity of yesterday… The subject has died – and perhaps many times – on the way.”

This is the shape of Mark’s gospel. The narrator sees all but explains nothing. The disciples begin as named voices and end as absences. The final scene gives no resolution. Time, once galloping forward with Mark’s “immediately” at every step, halts in a tomb that no one enters.

The reader is left standing outside the story with a question its characters cannot answer.

Gospel of Ellipsis: Hemingway’s Surface Tension

Hemingway’s prose derives its emotional power from deliberate restraint, a technique often described as the “iceberg theory,” where the bulk of meaning lies beneath the surface of the text. In stories like Hills Like White Elephants, he employs sparse, minimalist dialogue and understated narration to convey profound emotional and thematic weight without explicitly stating the core issues. The story’s central conflict – an implied discussion about abortion between a man and a woman at a train station – is never directly named. Instead, Hemingway embeds the tension in clipped exchanges, pregnant pauses, and subtle imagery.

This restraint amplifies the emotional force by forcing readers to engage actively with the subtext. The silences between sentences – where characters avoid articulating their fears, desires, or regrets – carry the weight of unspoken truths. For example, when Jig says, “They look like white elephants,” and the man responds dismissively, the dialogue skirts the real issue, revealing their emotional disconnect and the power imbalance in their relationship. The unsaid looms larger than the said, making the reader feel the characters’ anxiety, uncertainty, and isolation.

Mark doesn’t explain the fig tree or narrate the resurrection. He doesn’t say why the women told no one. And when Jesus speaks cryptically, the narrator does not clarify. Mark doesn’t mismanage meaning, he suppresses it for effect. Like Hemingway, Mark trusts the reader to feel the weight of what isn’t said.

Kafka’s Gospel: Parable Without Answer

Kafka’s stories are often structured as parables – but not the kind that end in moral resolution. His parables frustrate the interpretive impulse. Their logic seems to point to something just beyond reach.

In Before the Law, a man spends his life trying to gain access to a door that was meant only for him. He dies without ever passing through. The priest in The Trial tells Joseph K. the parable – and then refuses to explain it.

In Mark 13:14, Jesus warns of an “abomination of desolation” and then stops mid-sentence. The narrator breaks in: “Let the reader understand.” Who is this reader? Not Peter, James, or John. You. Understand what? Mark’s narrator refuses to explain it.

Like Kafka, Mark knows the parable won’t resolve. He knows it exists to sharpen the hunger to understand. And the gospel itself becomes that hunger’s object.

Conclusion – Mark’s Gospel Came Too Soon

Even sympathetic readers struggle to see it. Because Mark says less than the other gospels say, it is nearly impossible to read him without filling in what he left out. Harmonization is a habit learned in childhood. An untrained, unbiased, innocent reading – a first reading – by a western reader is almost unavailable. And so the masterpiece goes unnoticed because the broader story has been too thoroughly absorbed for the real Mark to be seen.

By theological or historical standards, Mark has long ranked lowest by far among the gospel writers. In early Christian citation, he accounts for barely 4% of gospel references. He is by far the shortest and the roughest, some say the least theologically rich. I disagree.

By modern literary standards – those that distrust omniscient narration and place the burden of meaning on the reader – Mark might be the rhetorical master of millennia.

That achievement is easily missed. I think it a shame that readers of modern literature rarely turn to the gospels, starting with Mark. And if they do, prior convictions prevent them from imagining it could house a work this strange, this far ahead of its time. Mark wasn’t experimenting with form for its own sake. He was a storyteller – one whose narrative instincts ran far ahead of his genre.

In his world of early Christianity, stories were expected to explain, miracles to prove, and heroes to be understood. Mark resists all of that. He gives us a Messiah who is misunderstood, a story that ends in silence, and a text that refuses to explain itself.

In other words, he wrote a modernist gospel – a work of quiet fire – before modernism existed.

Postscript: The Gospel That Leaves You Standing

Mark ends with absence – with flight, silence, and a rolled-away stone. That was the final move of a writer who trusted you to finish what he started.

Across this series, I haven’t treated Mark as theology but as what it so clearly is, once you stop trying to fix it: a story designed to be misunderstood by its characters and grasped by its reader. None of that should bear on your theology, beliefs, or lack thereof; it works regardless.

That story does not yield its truth by accumulating facts. It yields by withholding enough to make you reach. And when you do, something happens. You see what others miss. You feel the silence grow louder than the speech.

Even now, twenty centuries later, the final question still hangs – not in the mouths of the women at the tomb, but in yours: What are you going to do with what you’ve seen?

Gospel of Mark, Masterpiece Misunderstood, Part 6 – Mark, Paul and James: The Silence, the Self and the Law

Posted in History of Christianity on August 2, 2025

Mark vs. Matthew and Luke: Redaction, Not Clarification

Matthew and Luke didn’t set out to clarify Mark, as many scholars have claimed. They were authors writing for different communities with different needs. They either misunderstood Mark’s rhetorical style, understood it but disliked it, or were indifferent to it altogether, merely reusing his stories and text. They took Mark’s gospel and Q as starting points, then reshaped them to fit their theological goals. In doing so, they smoothed its edges, filled in its silences, and reframed its mysteries using their own rhetorical styles.

Matthew, by most accounts, is rhetorically more refined than Mark. His Greek is more polished, and his theological framing is clearer. But Matthew and Luke lose Mark’s vividness. In my view, the most rhetorically daring gospel in Christianity was overwritten by its successors, and it is inaccurate or disingenuous to frame this as clarification.

Matthew and Luke reworked the fig tree. Mark’s fig tree vignette (11:12–14, 20–21) is famously strange, as discussed earlier: Jesus curses a tree for having no fruit out of season and Mark wraps the episode around the cleansing of the temple to enforce the metaphor.

Matthew’s version (21:18–22) changes the tempo: the tree withers immediately. The temple scene is unlinked. And the point is made explicit: it’s a lesson about faith and prayer. Luke (13:6–9) avoids the destructive miracle and cursing the tree, giving instead a parable that calls for repentance while there’s still time. A summary shows the transformation:

| Feature | Mark | Matthew | Luke |
| --- | --- | --- | --- |
| Type | miracle + symbol | miracle + moral | parable |
| Timing of Withering | next day | immediate | not applicable |
| Commentary | faith and prayer | faith and prayer | repentance and mercy |
| Relation to Temple | surrounds cleansing | follows cleansing | precedes healing on sabbath |
| Theological Emphasis | judgment, irony, failure of temple | power, faith, moral clarity | warning, grace, call for repentance |

What was rhetorical structure in Mark becomes illustrative theology in Matthew and Luke. Riddle becomes sermon; the silence is gone.

A comparison of approaches to the fig tree shows the progression toward theological evolution and loss of irony:

| Detail | Mark | Matthew | Luke |
| --- | --- | --- | --- |
| Fig tree cursed | Yes | Yes | No (parable only) |
| Disciples mentioned | Yes: “heard it”, “Peter remembered” | Yes: they “marveled” | No |
| Delayed withering | Yes | No | N/A |
| Delayed narrative payoff | Yes | No | N/A |
| Irony/suspension | Yes | No | No |

A comparison of the way Mark and Matthew mention the disciples in this story shows still more about their rhetorical mindsets. Mark (11:14) reports:

And he said to it, “May no one ever eat fruit from you again.” And his disciples heard it. (ESV)

His disciples heard it? Of course they did. But what an odd thing for Mark, given his economical prose, to include. The statement doesn’t advance the plot and interprets nothing. No, this is Mark the author signaling that he’s hung Chekhov’s gun (give a reader no false promises) on the wall. Take notice, something is going to happen, so remember what is being marked here.

What’s going to happen is that Jesus will cleanse the temple. The marker (they heard him) marks the curse and is a small, almost invisible trigger, narratively minimal, ironically loaded, and structurally strategic. Matthew and Luke steered clear. Mark delays firing Chekhov’s gun until he returns to the tree. Bang, it’s dead.

Mark ends his gospel with silence and fear. The women flee the tomb. No resurrection appearances. “They said nothing to anyone, for they were afraid.”

Matthew and Luke add resurrection appearances, dialogue, comfort, and commissions. Matthew gives us theatrical effects: guards, earthquakes, angelic speech. Luke gives us the road to Emmaus, meals, and final instructions.

These endings do more than continue the story. They close a loop Mark left open. They give theological assurance where Mark offered emotional tension. By explaining what Mark left implied, they take the burden of interpretation off the reader and place it into the narrative.

Mark’s disciples are never right. They botch the parables and miss the miracles. They sleep, flee, and deny. Mark never resolves that arc. The disciples have no epiphany. In Matthew, by contrast, Peter is given a beatitude: “Blessed are you, Simon… you are Peter, and on this rock I will build my church” (Matt. 16:17–18).

Luke dials back the disciples’ failures and paints a more stable community. By the time we reach Acts, the apostles are the theological center of gravity.

Modern scholarship tends to treat Matthew and Luke as consciously adapting Mark rather than misunderstanding him or cringing at his telling. But their treatment of the fig tree is revealing. Whether their changes stem from narrative or theological agendas, the result is a loss of Mark’s narrative complexity. In that sense, even if they didn’t misunderstand or dislike Mark’s meaning, they did dismantle his rhetorical scaffolding – and with it, the deeper tension he built into the scene.

In Mark, Jesus says explicitly that parables are designed to (in order that they) conceal, not clarify (4:11–12). It’s a shocking claim. Jesus doesn’t teach in parables to illustrate the truth, but to hide it from those unready to hear it. It’s a clear challenge to you to show your readiness.

Matthew retains many of the same parables but softens the intent. He writes:

This is why I speak to them in parables, because seeing they do not see… (Matt. 13:13)

The subtle change from “in order that” to “because” shifts the parables’ purpose from concealment to explanation. This contrast doesn’t result from translation; it’s present in the Koine manuscripts. I agree with scholars like R.T. France and Joel Marcus that Matthew must have deliberately changed Mark’s ἵνα to ὅτι to soften the implication that Jesus’s parables intentionally obscure truth. That implication was theologically problematic for Matthew. What Mark presents as rhetorical filtering, Matthew turns into compassionate pedagogy. Matthew and Luke, in moving away from literary puzzle toward religion, wrote for churches, for instruction, for catechesis. Their redactions obscured the most subversive thing Mark had done: trust the reader.

Paul vs. Mark

While the epistles – especially those commonly attributed to Paul – show formidable rhetorical skill, their style is strikingly different from Mark’s. Paul’s prose is argumentative, insistent, full of digression and appeal. He leads the reader, often with intensity, sometimes with exasperation, and always with a strong sense of his own position in the exchange. Paul’s voice dominates. There’s no narrative mask, little humble pretense. The authority of the letter comes not from its structure but from the voice behind it. Even Paul’s moments of self-deprecation – “I speak as a fool” – seem more performative than self-effacing.

In 2 Corinthians 11, Paul all but dares his audience to compare him to rival apostles, saying,

Are they Hebrews? so am I. Are they Israelites? so am I. Are they the seed of Abraham? so am I. Are they ministers of Christ? (I speak as one beside himself) I more; in labors more abundantly… (2 Cor 11:22-23 ASV)

In Galatians, Paul shows that he is the conduit. He is bound to his message; it’s his claim, his proof, his identity. He states outright that he is bypassing both tradition and community – no apostolic succession, no collective discernment. It’s just him and revelation.

For I would have you know, brothers, that the gospel that was preached by me is not man’s gospel. For I did not receive it from any man, nor was I taught it, but I received it through a revelation of Jesus Christ. (Gal 1:11–12 ESV)

In 1 Corinthians 9, Paul defends his apostleship with personal passion and rhetorical intensity:

Am I not free? Am I not an apostle? Have I not seen Jesus our Lord? Are not you my workmanship in the Lord? If to others I am not an apostle, at least I am to you… (1 Cor 9:1–2 ESV)

Here, Paul’s rhetorical command is on full display, but so is his presence. He becomes part of the message. He is its defender and its embodiment. Mark, by contrast, disappears. His narrator rarely intrudes, and when he does, it is briefly, obliquely, or through broken syntax. The reader, not the writer, is meant to emerge in command. That difference of posture – one text rhetorical to persuade, the other rhetorical to implicate the reader in the story’s meaning and cost – is perhaps the clearest sign of Mark’s literary distinctiveness.

James – Rhetoric Without a Narrator

The Epistle of James warrants a mention because its rhetoric is also shrewd. The book is famous for its assertion that “faith without works is dead.” James sets up a contrast between empty belief and active compassion:

If a brother or sister is poorly clothed and lacking in daily food… what good is that? (2:14–17 ESV)

Here, “works” clearly means acts of charity and mercy. The moral framing is universal, hard to argue with, and rhetorically effective. It appeals to shared values. But elsewhere in James, “works” may implicitly include behaviors not so obviously ethical at root:

Religion that is pure and undefiled before God… is this: to visit orphans and widows… and to keep oneself unstained from the world. (1:27)

The second clause – “unstained from the world” – is vague, but loaded. It likely gestures toward purity behaviors that are more Jewish than Christian in tone.

Do you not know that friendship with the world is enmity with God? (4:4)

Again, this moves from moral to separatist rhetoric – potentially reinforcing ethnic or cultural boundaries. We can’t be certain, but James seems to be framing his argument in terms everyone can agree on. Then he gradually broadens the meaning of “works” to smuggle in a stricter behavioral code, including Jewish law-adjacent customs. Cunning. He avoids direct confrontation with Paul’s theology, yet still answers it, implicitly but forcefully.

James often sounds like Proverbs or Sirach, surely no accident. His use of tight, balanced structures gives his writing an oracular, gnomic quality:

Let every person be quick to hear, slow to speak, slow to anger… (1:19 ESV)

From the same mouth come blessing and cursing. My brothers, these things ought not to be so. (3:10 ESV)

Do not swear, either by heaven or by earth or with any other oath; but your yes is to be yes, and your no, no (5:12 NASB – note the syntactic ellipsis between “no” and “no”, lost in many translations)

James’s imagery is concrete, unlike Mark’s and Paul’s. He compares the tongue to a spark, an uncertain man to a bobbing wave, and the rich to withering grass. His imagery persuades while bypassing formal argument.

A short comparison between Mark, Paul, and James shows:

| Writer | Narrative Presence | Rhetorical Voice | Ego/Authority | Style of Engagement |
| --- | --- | --- | --- | --- |
| Mark | Minimal, oblique | Structural, ironic | Effaced | Reader discovers meaning |
| Paul | Occasional but strongly personal | Assertive, argumentative | Central | Reader is persuaded |
| James | None | Moral, aphoristic | Neutral | Reader exhorted, corrected |

Mark is the early outlier, followed by a literary trend toward clarity and control. The text becomes the instrument of the Church, not a provocation to the reader. Tastes of the church turned institutional, doctrinal, and mass-oriented. Mark wrote for those with ears to hear (4:9). The Church wrote for those who sought a creed.

Next and final: Mark Before Modernism

The Gospel of Mark: A Masterpiece Misunderstood, Part 5 – Mark’s Interpreter Speaks

Posted in History of Christianity on August 1, 2025

See Part 1, Part 2, Part 3, Part 4

Mark’s narrator very rarely offers commentary. His most overt interpretations are tucked into parentheses or framed as almost self-effacing asides. In Mark 7:2–4, he breaks the flow of Jesus’s confrontation with the Pharisees to explain handwashing customs:

(For the Pharisees, and all the Jews, do not eat unless they wash their hands properly, holding to the tradition of the elders; and when they come from the marketplace, they do not eat unless they wash. And there are many other traditions they observe, such as the washing of cups and pots and copper vessels.) (ESV)

This form of direct exposition appears nowhere else in the gospel, and its tone is uncharacteristically anxious. The syntax is crowded and accumulative, almost list-like. There’s no attempt to link this aside tightly to the main dialogue, and it reads like a clarification added for a reader who simply wouldn’t understand the stakes of the debate without it. In that sense, it’s a breach where Mark momentarily acknowledges the gap between the world of the story and the world of the reader.

But it’s not clear who is being addressed. A Jewish narratee wouldn’t need the explanation. A Roman reader might, but Mark doesn’t frame it as such. There’s no “as you know” or direct narrative address. Instead, the narrator drops the aside in mid-stream, then promptly disappears again. The result is strangely destabilizing. It invites the reader to notice that this gospel knows it’s being read across cultural lines but doesn’t want to say so too loudly.

Scholars have long noted this passage as evidence that Mark’s intended audience may have included Gentile readers unfamiliar with Jewish purity laws. But its narrative awkwardness may be more important than its audience implications. The digression doesn’t belong to Jesus’s speech, and it isn’t integrated into the narrator’s voice. It hangs slightly askew, as if the narrator is not quite practiced in speaking outside the bounds of his scenes. And that narrative unease may be the point.

In rhetoric, dubitatio is the technique of feigning hesitation or uncertainty, often to enhance credibility. Mark’s aside in 7:2–4 isn’t classic dubitatio. It’s not self-aware enough to feel like artful hesitation, but it does feel like narrative restraint forced into speech. It overexplains in a crowded string of clauses and lacks a clear addressee. Mark’s narrator shows a kind of structural dubitation.

Mark is quiet, especially where we would most expect it to explain itself. The narrator rarely steps in to clarify, summarize, or instruct. When he does, it’s with restraint and can seem indecisive. Odd, parenthetical elements are syntactically jarring. Is Mark’s narrator hesitant to break the rhetorical spell, or is he intentionally breaking rhythm?

This piece looks at the breaches in Mark’s otherwise minimalist storytelling and argues that they are meant to highlight his indirection. Mark’s rare authorial voice is self-referential: not so much pointing to the meaning of events, but to the process of reading and interpreting them. Even when he speaks, Mark still makes you work.

Parentheses in the Wilderness: Ritual Washing

In Mark 7:2–4, the Pharisees confront Jesus about his disciples eating with unwashed hands. Notice how the narration breaks:

(For the Pharisees and all the Jews do not eat unless they wash their hands properly… and there are many other traditions that they observe.) (ESV)

On the surface, this parenthetical is meant to help the reader. But which reader? Again, a Jewish reader wouldn’t need this to be explained, assuming the statement is correct. A Gentile might. So this comment could be the voice of the narrator to the real-world reader, not the narratee within the gospel world.

But it’s not introduced formally. It adds a tangent. Its syntax is overloaded. It’s explanatory, but clunky. It breaks the flow, and it reads like an interlinear gloss that drifted into the body of the text – as some scholars argue it is.

But comparison with Mark’s other parentheses shows it to be consistent. It emphasizes his deliberate method of resisting interpretation at the narrator level.

Dramatically, in 7:19, Jesus says that nothing entering a person from the outside can defile him. It’s a provocative statement – but it’s not a formal abrogation of dietary law. Yet Mark’s narrator follows it with a striking editorial aside: “Thus he declared all foods clean.” Mark tells us that this is his narrator’s gloss, not what Jesus said. It’s what the narrator concludes – or wants the reader to conclude. This is a major theological claim, especially in a first-century Jewish context. Yet it’s not put in Jesus’s mouth but tacked onto the end. The comment is not timeless; it’s contextual. But most readers fail to notice this; they remember the story as if this were Jesus’s claim.

In Greek, this phrase is syntactically ambiguous. It’s an editorial comment, awkwardly inserted and easily overlooked. Yet it’s doing a lot of work.

If it’s Mark’s voice, then it’s one of the rare times he interprets Jesus’s meaning for the reader. But even here, he does it indirectly, after the fact, as a kind of explanatory shrug. He doesn’t say, “Here’s what Jesus meant.” His “thus” leaves us wondering.

In the healing of Jairus’s daughter, Jesus takes the child by the hand and says:

“Talitha koum”–which means, “Little girl, I say to you, arise.” (5:41 NASB)

Koine Greek has no quotation marks. It’s unclear whether this translation, internal to the text, is Jesus speaking to the girl, Jesus speaking to others in the room, or the narrator speaking to the reader. The effect is subtle: Jesus has just used Aramaic; so someone has translated it. But the grammar doesn’t make it obvious who that is.

This is one of several places where Mark’s narration blurs into character speech. It mirrors the overall strategy of the gospel, where author and narrator are not fully aligned, and where the reader is constantly asked to track perspective.

Let the Reader Misunderstand: Parentheses and Self-Reference

A curious moment where Mark breaks from letting actions and dialogue tell the story is the anointing story. Here’s the core moment:

Truly I tell you, wherever the gospel is preached in the whole world, what she has done will be told in memory of her. (14:9)

The narrator up to this point has played things relatively straight – omniscient without interpretation. But here, something unusual happens. A character (Jesus) speaks with a global-historical voice, predicting the preservation of this woman’s story. But this prediction is, ironically, already fulfilled by the gospel in which it appears.

The moment is self-aware. It feels like the author breaking through the narrator using Jesus’s words. Jesus says her story will be told wherever the gospel goes, and the truth of his prophecy is in the reader’s hands. Look, you’re reading it.

Mark 14:9 collapses narrative time and reader time. It’s a moment of reflexivity, not just a character’s prediction, but a cue from the authorial level that this story you’re reading is already enacting the prediction. Mark doesn’t break the fourth wall directly, but this is the next closest thing: Jesus’s voice carries authorial weight.

Mark asserts a form of meta-claim: this anonymous woman, unnamed by everyone in the room, is now known to you, the reader of this gospel, because this is that telling, “told in memory of her.”

The clearest and strangest example of Mark’s self-referential voice appears in Mark 13:14. Jesus is giving a long apocalyptic speech about future tribulation. He says:

When you see the abomination of desolation standing where it ought not to be… (let the reader understand) …then let those who are in Judea flee to the mountains. (ESV)

In 13:14, translators struggle not with tense but with punctuation. Who’s talking? The phrase “let the reader understand” interrupts the discourse. It’s not addressed to the disciples. It’s not part of the speech’s internal logic. It’s not “let the listener understand,” or “let him who sees understand.” It’s: ὁ ἀναγινώσκων νοείτω: “Let the one who is reading understand.”

Jesus doesn’t speak this way elsewhere. This line isn’t addressed to anyone in the story. It’s aimed past them – to the reader. Though a few evangelical scholars (William L. Lane, Craig Evans, and Robert Gundry) suggest that it could be Jesus’s voice, I think that highly unlikely. The phrase’s address to a “reader” is anachronistic for Jesus’s oral context if he spoke to disciples, not a reading audience. Its parenthetical form and alignment with Mark’s asides (e.g., Mark 7:19) suggest an editorial hand. Matthew’s clarification (“spoken of through the prophet Daniel”) and Luke’s omission (Luke 21:20) imply the phrase was seen by them as a saying of Jesus.

Instead, this is the voice of both the author and the narrator – conflated here – breaking through the frame to speak directly to the reader, not the narratee. Joel Marcus sees it as Mark’s instruction to interpret the “abomination” as the destruction of the temple by the Romans under Vespasian in 70 AD. Others suggest a reference to the more severe Roman response to the Simon bar Kokhba revolt under Hadrian in 136 AD.

Its literary significance holds regardless of the reference. It is the moment the gospel becomes unarguably self-referential. It admits it’s a text and knows it’s being read. It tells the reader to pay attention – to spot something. Remarkably, that something will not be explained.

This comes at one of the gospel’s most cryptic moments. Rather than clarify the “abomination of desolation” (reference to Daniel 9:27), Mark points directly to its ambiguity and places the burden of interpretation on you.

Ironically, this passage shows boldly that even when Mark speaks, he withholds. His parenthetical interjection is paradoxically employed to direct the reader’s gaze at the absence of explanation.

In a rhetorical move that could have been pulled straight from Samuel Beckett, Mark breaks the fourth wall to report that the fourth wall exists (let the reader understand).

Next: Strategies of Mark, Paul and James: The Silence, the Self and the Law

The Gospel of Mark: A Masterpiece Misunderstood, Part 4 – Silence and Power

Posted in History of Christianity on July 30, 2025

Silence and Prohibition as Rhetorical Trapdoor

For Mark, silence is a form of structure. His most famous silence comes at the end of the gospel, in 16:8, where the women flee the empty tomb and “said nothing to anyone, for they were afraid.” Here we have silence at the characters’ level and at the narrative level.

Mark uses silence like a line break. It isolates, heightens, and forces attention. His scenes close with hesitation. The fig tree withers, Jesus gives no explanation. Jesus heals by touch, the narrator doesn’t comment. At his trial, Jesus is silent when questioned (14:61).

In Mark 1:40–45, Jesus heals a leper and sternly warns him to tell no one. The man spreads the news widely. Jesus then retreats into desolate places. The rest is silence. There is no commentary on the man’s disobedience, no indication that Jesus is angry, no explanation of what Jesus’s withdrawal means.

These silences create enough interpretive space to lure a thoughtful reader. A key moment comes in the boat immediately after the second feeding miracle. The disciples are worried they’ve forgotten to bring bread. Jesus asks:

Do you not yet perceive or understand? Are your hearts hardened? Having eyes do you not see, and having ears do you not hear? (8:17–18 ESV)

He’s just fed thousands – twice – and they’re panicking about lunch. The moment seems to glance past the disciples and land somewhere else. The burden of understanding has been handed to the reader.

The Messianic Secret: Command as Rhetoric

Repeatedly, Mark’s Jesus performs a miracle, then demands that the characters be silent. He heals a leper, then says: “See that you say nothing to anyone” (1:44). He raises Jairus’s daughter, then “strictly charged them that no one should know” (5:43). He opens a deaf man’s ears and “charged them to tell no one” (7:36). After Peter confesses him as the Christ, Jesus “strictly charged them to tell no one about him” (8:30). The Messianic Secret refers to these repeated instructions to demons and healed individuals, prominent only in Mark’s Gospel, to keep his identity as the Messiah hidden.

Scholars offer various explanations, reflecting different approaches to the text. Some give a historical explanation: Jesus commanded secrecy to avoid arrest by Roman authorities, protecting his ministry. A theological alternative postulates that Jesus kept his identity secret to challenge Jewish expectations of a political Messiah, not the role the suffering Jesus plays in the gospels. Some see it as purely practical – a way to manage crowds to avoid interference with his teaching. This theory fits well with the healing of the leper (1:45) and of the blind man at Bethsaida (8:22) but poorly with the commands that follow the demons’ recognition of Jesus (1:23, 1:34, 3:11) and Peter’s confession (8:30).

Like William Wrede in 1901, I see it, especially in its repetition, as a literary device. Unlike Wrede, I am not concerned with the theological question of whether Jesus was the Messiah from the start, preordained since the beginning of time, as in John 1:1, or whether he became the Messiah at the point of crucifixion, as Philippians 2:6 can be read. Wrede’s argument for the messianic secret being a literary device hinged on this distinction, along with the question of Markan priority. Mine does not. Wrede’s and many other explanations of the messianic secret miss the point that is obvious in a reader-response analysis of Mark.

Mark is delaying public understanding to increase private responsibility. If the characters can’t see what happened, then the reader has to see it for them. The messianic identity remains hidden inside the story. It becomes visible to those who can read the signs.

Those reading Mark only for its theology or to judge its historicity miss the continuity between the silence and Jesus’s explanation of the parables: “…but for those outside everything is in parables…” This is blatant. Jesus isn’t hiding from everyone; he’s only hiding within the story. But Jesus, through the narrator, reveals himself directly to the reader. And Mark rewards the reader for not needing to be told.

It’s the Reader Who Sees the Pattern

Mark’s combination of rhetorical choices – the silence, the repetition, the warnings not to tell anyone – shapes an experience that forces the reader to see what the disciples do not, and to do so without the narrator confirming it. It’s why no one inside the story “gets it.” The entire gospel is a structure of discovery, designed not for the narratee, but for you, the reader.

You understand the feeding miracles. You understand the anointing. You suspect, if your rhetorical skills are sharp, that the fig tree is about the temple. You hear the Roman centurion’s words – “Truly this man was the Son of God” – and realize no one else has said anything like that through the entire gospel.

Mark’s narrator doesn’t hand insight to you. You earn it. But on another level, Mark the author, one level up, did hand it to you. Isolating the reader is Mark’s deepest rhetorical move. It’s not that he just delays meaning; he narrows its access. This narrative isolation creates a private moment of insight for the reader alone.

Mark’s positioning of the reader as sole witness is seen in the transfiguration’s muffled epiphany (9:2). Jesus takes Peter, James, and John up a mountain. They see him transfigured, his clothes radiant white, flanked by Moses and Elijah (echoing Malachi 4:5-6). Since Aquinas considered it “the greatest miracle,” we might expect it to be the clearest scene in the gospel.

But what happens in Mark’s telling? Peter blurts out something foolish. A voice from heaven addresses an unspecified listener: “This is my beloved Son: hear ye him.” Then, “suddenly looking round about, they saw no one any more.” Jesus tells the disciples “tell no man what things they had seen” (ASV).

The moment has closed on itself, the vision collapsed to silence. The disciples are clueless and are told to be silent. Who’s left to interpret Jesus’s miracle? Only you, the reader. Hear ye him.

In the garden of Gethsemane, Jesus undergoes his moment of greatest anguish. He tells his disciples to watch and pray, but they fall asleep. Three times. You, the reader, are fully awake. You are present for every word of his prayer. You see his sorrow. You watch the drops of isolation gather around him. This scene, as Mark paints it, isn’t about the disciples’ inattention; it’s about your attention.

Mark’s structure puts you in a lonely place. You are the only one who sees the pattern. You are the only one who notices the parallels, the ironies, the betrayals. You’re the only one who sees what kind of Messiah this is. Mark doesn’t want you to pity the disciples. He wants you to step over the blocks on which they’ve stumbled and keep on going.

Silence Plus Inversion

Throughout Mark, people are constantly told to be silent – and they rarely obey. The leper in chapter 1 is told to “say nothing to anyone.” He spreads the news. After Jairus’s daughter is raised, Jesus instructs them to keep quiet. They are “immediately overcome with amazement” and, presumably, do not obey. The deaf man in chapter 7 is healed. Jesus charges them to tell no one. “But the more he charged them, the more zealously they proclaimed it.”

It’s a pattern: commanded silence, followed by disobedient speech. But at the tomb, the pattern is reversed. The women are not told to be silent. In fact, they are given a clear message to deliver:

Go, tell his disciples and Peter that he is going before you to Galilee (Mark 16:7 ESV)

But this time, they say nothing.

And they went out and fled from the tomb, for trembling and astonishment had seized them, and they said nothing to anyone, for they were afraid. (Mark 16:8 ESV)

It’s the only moment in the gospel when someone actually keeps silent – despite being told to speak.

This reversal is Mark’s final irony. He has trained us to expect speech after commands for silence. But now, when the resurrection itself is announced, when the story should break open, the characters fall silent.

The women are continuing the pattern of misunderstanding and fear that runs through the entire narrative. Even here, at the resurrection, Mark offers no closure. The characters don’t overcome their limitations; they give in to them. And the reader is drawn in.

Mark’s Redefinition of Power

From the midpoint of Mark onward, the tone darkens. Jesus has healed the sick, fed the hungry, walked on water, and rebuked storms. He has astonished crowds, exorcised demons, and taught in riddles that burn their way into the mind. But once Peter names him the Messiah in Mark 8, things shift.

And he began to teach them that the Son of Man must suffer many things… (Mark 8:31 ESV)

This is the pivot. From here on, Jesus repeats the same strange message: he won’t rule as a king but will be rejected. He won’t be crowned; he’ll suffer and die and rise again. Each time he says it, the disciples, on cue, fail to understand. Mark builds his second half on this theme.

In Mark 8:29, Peter finally names Jesus as the Christ. In a rhetorically less shrewd telling, this would be framed as the breakthrough. In cinema it would be the classic zoom-out, where we are invited to consider the character Jesus and his state of mind before understanding the context around him. But here, Mark’s Jesus story tracks in rather than zooming out. Jesus, in a full-screen close-up, tells the disciples to tell no one and then says “the Son of Man must suffer.”

Peter pulls him aside and says that can’t be right. Jesus responds with the harshest tone, unparalleled in the other gospels:

Get behind Me, Satan; for you are not setting your mind on God’s purposes, but on man’s. (8:33)

This is a clash between two visions of power. Peter gets the title right but fills it with the wrong content. He imagines a crowned victor; Jesus offers a condemned servant. It’s both rebuke and reversal.

In 8:33, Mark shows us something else: Peter is not the intended reader. This isn’t a Vaudeville wink or Groucho’s fourth-wall smirk. It isn’t postmodern self-reference either. It’s something subtler – a direct address the narrator doesn’t acknowledge, but the reader feels. The Greeks called it metalepsis.

In this metalepsis Mark sets up the Christ-confession not as insight but as a foil for the insight that hasn’t happened yet. The reader is meant to notice the disjunction. The narrator doesn’t explain it. But, like a theatrically and rhetorically literate ancient Greek, you’re supposed to feel it.

Mark has three predictions of the Passion (8:31, 9:31, 10:33). In the first we learn that the Son of Man must suffer many things, in the second that he will be delivered. The third has specificity:

The Son of Man will be handed over to the chief priests… They will mock him and spit on him, and flog him and kill him. (10:33–34 NASB)

Mark uses a clear escalation in both content and tone. Each is followed by the disciples’ embarrassing descent into misapprehension. By the third, the reader is actively frustrated when James and John ask for seats of glory. They’re imagining Jesus enthroned in messianic splendor, and they want the top cabinet posts – prime minister and chief of staff. Their political expectation shows the disciples’ continued misunderstanding of what Jesus’s “kingdom” is. Mark uses their request to stage one of the gospel’s key reversals. Jesus responds (10:42–45) by redefining power entirely.

This is one of the more elegant places where inherited harmonization dulls Mark’s edge. Readers come to the scene already believing that Jesus is a spiritual king. But Mark wants us to see the disciples as tragically, almost comically mistaken. If you read Mark with fresh eyes – no John 18:36, no Pauline theology, no Sunday school overlays – it hits different. Jesus has predicted torture and death. James and John are jostling for promotions.

As a reader, you wince, as Mark intended. How can they be this obtuse? How can they hear “mocked, spat upon, killed” and respond with “Can we sit at your right and left hand?” The scene mirrors the ironic humor of Jason’s naive optimism in Euripides’ Medea, which similarly served to deepen the audience’s engagement.

Then there’s the final irony. The two men who are actually at Jesus’s right and left when he “comes into his glory” are mocking, low-life thieves, nailed up beside him. Mark explicitly states that one is on his right and one on the left. The seats coveted by James and John are occupied by the damned. Mark makes that detail land like a death knell to any political or triumphalist reading of Jesus’s kingship. Luke seems to want one last flicker of hope; one of his thieves repents and is saved. Mark leaves it dark, no repentance. Readers’ background knowledge of Luke contaminates Mark’s narrative. Harmonized memory, doctrinal catechesis, and liturgical exposure overwrite Mark’s internal logic and make readers miss Mark’s brutal wit.

Mark’s storytelling shares much with Greek tragic form, but he uses its elements with new intent. Critics have written detailed comparisons between ancient Greek literature and the books of the New Testament. Like the protagonists of Sophocles’ Oedipus or Euripides’ Hippolytus, Jesus is a noble figure with a divine mission, yet he faces suffering and betrayal. The centurion’s declaration at Jesus’ death is a standard Greek anagnorisis, a moment of recognition where a character realizes the true identity of the protagonist. Many more examples appear in Mark.

I’m not pursuing an analysis of parallels here, particularly because I’m not portraying Mark as a standard Greek author but as an innovative one. His tools clearly emerge from that tradition, but he combines them in uncommon ways to push the artform into the future, as befits the explosion of a new form of religion.

Like Euripides in Medea and in Alcestis, Mark has introduced mildly comic elements into what is nominally a tragedy. These comic elements aren’t there to lighten the mood but to embarrass you on behalf of dimwitted characters in the story. Mark, in service of Jesus’s redefinition of power, has put this device to novel use.

Mark is teaching the reader not just to reject the disciples’ response, but to reject the assumption behind it: that power is triumph, authority is dominance, and victory means avoidance of pain.

For Mark, power is something else entirely. To the disciples’ disbelief, power points downward. When James and John make their request, Jesus answers:

You do not know what you are asking. Are you able to drink the cup that I drink…? (10:38 NASB)

They say yes, because they still don’t get it. And then Jesus delivers what may be the clearest statement of power redefinition in the New Testament:

…whoever wants to be first among you shall be slave of all… For even the Son of Man did not come to be served, but to serve, and to give his life as a ransom for many. (10:43–45 NASB)

Jesus is not telling them to act humble while being powerful. He’s telling them that the act of humiliation – the path downward, through rejection, suffering, and death – is the power.

As expected, Mark does not explain this principle; he dramatizes it. The ostensibly powerful figures in Mark – Herod, Pilate, the Sanhedrin (high priests, elders, and scribes) – are all shown to be weak. They fear crowds and make cowardly decisions. The disciples, given the chance to stand with Jesus, scatter.

Jesus remains steady and silent. When accused, he does not defend himself. When struck, he doesn’t retaliate. When mocked, he gives no response. The reader is left with the realization: this is what power looks like. It doesn’t come with thunder or reach for titles. It’s patient and does not boast. It walks through pain, fearing no evil, knowing what lies beyond.

Jesus’s redefinition of power is for the reader. The disciples aren’t punished for their dullness. The story moves forward without them. They do not greet the resurrection.

But you do. You’re taken through all of it, with increasing quiet. Mark’s tone descends lower still, until finally, in the silence of the tomb, you are the only one left. Mark doesn’t conclude with a lesson, but an echo. And in the subsequent hush, the story belongs to you, the reader.

Next: Mark’s Interpreter Speaks

The Gospel of Mark: A Masterpiece Misunderstood, Part 3 – Rhetorical Strategies

Posted in History of Christianity on July 30, 2025

When Matthew rewrites Mark, he gives names to faceless characters, supplies motives, removes redundancies, and adds explanation. These edits have historically been interpreted as clarifications, refinements, or improvements, a claim rooted in late classical ideas of rhetorical polish.

I take issue with that interpretation. To call these additions clarifications is, at best, apologetic. A post-resurrection appearance doesn’t clarify Mark – it changes the story. Whether Matthew refined Mark is a theological judgment. Whether he improved him is an aesthetic one. Rhetorical skill, in the end, lies in the eye of the reader.

If we shift the criteria used to judge a gospel from didactic elegance to the ability to implicate the reader, then Mark is doing something monumental. By examining Mark’s tools, we can see his strategy at work. At the heart of that strategy is a tool most of us associate with sarcasm, but in Greek literature runs deeper: irony.

When modern readers use the word, they usually mean something like “when the opposite of what you expect happens,” or they simply mean a dry or mocking tone. For the ancient Greeks, irony was more refined. At its core, irony occurs when there’s a gap between what a character understands and what the reader understands.

In Mark, the gap is huge. The disciples repeatedly fail to understand who Jesus is or what he’s doing. Jesus will explain something directly, and they still miss the point. But you, the reader, can see it. That’s dramatic irony, and Mark uses it repeatedly. Here we’ll explore Mark’s irony and the rhetorical devices he couples with it.

In Mark’s crucifixion scene, bystanders taunt Jesus as he dies on the cross, sneering:

He saved others; He cannot save Himself! Let this Christ, the King of Israel, come down now from the cross, so that we may see and believe! (15:31–32 NASB)

The irony works on two levels. First, the mockers’ words drip with sarcasm, derisively labeling Jesus as the Christ and King of Israel (irony as the term is popularly used). Second, unbeknownst to them, their taunts actually ring true (ancient irony). You the reader, unlike the characters, accept the narrator’s firm belief that Jesus is indeed the Christ, the King of Israel, making the mockery unintentionally truthful. Then at the cross, only the Roman centurion – and you the reader – realize what just happened (15:39).

That’s Ernest Hemingway’s iceberg principle 2000 years before A Farewell to Arms. It’s Mark letting the reader rise above both the narrator and the narratee. It’s how he creates an experience of epiphany rather than exposition.

Antithesis and Parataxis

Antithesis sets opposites side by side – darkness and light, silence and speech. (That sentence uses literal antithesis.) Parataxis is this: short clauses, side by side, no hierarchy, no conjunctions. (That sentence is paratactic.)

Mark uses literal antithesis (e.g., “not to be served but to serve,” 10:45; also 7:15 and 10:34) like the other gospels do, but he does so much less often. He uses conceptual antithesis (e.g., 9:35, first and last, greatness and servanthood) at roughly the same frequency as the other gospels, but his style is less systematic. Mark’s antithesis builds narrative tension rather than highlighting explicit teaching moments.

As an example, consider the demoniac of Mark 5:1–20. He recognizes Jesus immediately, begs to stay with him, and is sent out as a witness. The disciples, who are with Jesus constantly, resist his identity and mission (Mark 4:13, 6:52). The narrative antithesis is that those who should be inside the circle of understanding are blind while the demon-possessed outsider sees clearly.

The story of the widow’s offering sets a poor widow, who gives all she has, against the temple’s lavish display and institutional grandeur. The juxtaposition does the work without a sermon. James and John ask Jesus for status (Mark 10:35–45) while Bartimaeus, a blind beggar, “sees” Jesus as Son of David and follows him immediately. The supposedly enlightened are self-seeking while the blind man has true sight.

Mark 1:32–34 shows his parataxis at work, particularly in Young’s Literal Translation. Each clause lands like a drumbeat, event after event, rough and ready, without transitions, no reflection or interiority, just stacked actions at a ballistic pace:

And evening having come, when the sun did set, they brought unto him all who were ill, and who were demoniacs, and the whole city was gathered together near the door, and he healed many who were ill of manifold diseases, and many demons he cast forth, and was not suffering the demons to speak, because they knew him.

In Mark 4:37–39, the storm escalates clause by clause, each introduced with and. No breath taken, no interpretive because. It throws the reader into the middle of the chaos and preserves the disciples’ panic. Mark phrases this in the historic present, bouncing between present and past tense, a device we’ll examine later:

“And there cometh a great storm of wind, and the waves were beating on the boat, so that it is now being filled, and he himself was upon the stern, upon the pillow sleeping, and they wake him up, and say to him, ‘Teacher, art thou not caring that we perish?’” (YLT)

If rhetorical skill is measured only by traditional standards such as formal balance, polished diction, or sermonic structure – the kind favored by W. D. Davies, B. H. Streeter, R. T. France, and Dale Allison – then Mark fares poorly. But judged by how rhetoric drives tension, irony, and narrative momentum, Mark excels. His narrative and linguistic oddities are strategies – winning ones, I think – as a comparison with Matthew makes clear:

| Passage | Mark’s Devices | Matthew’s Devices |
| --- | --- | --- |
| Messiah’s mission | parataxis, sharp antithesis, irony | polished antithesis, didactic tone |
| Demoniac healing | parataxis, symbolism, psychological depth, inversion | pacing, emphasis on danger, Jesus’s authority |
| Fig tree judgment | symbolism, narrative antithesis | explicit moralizing, longer narrative |
| Peter’s confession | irony, abruptness, parataxis | theological exposition, smoother narrative |
| Blind man healing | symbolism, narrative pacing | miracle story, immediate healing |
| Jesus’s baptism | cosmic rupture, lean narration | fulfillment formula, dialog with John |
| Temptation in the wilderness | brevity, starkness, no dialogue | extended dialogue, scriptural quotation |
| Parables of the kingdom | cryptic delivery, framing with irony | explanatory framing, allegorical expansion |
| Walking on water | parataxis, abrupt shift from fear to awe, irony | clearer theological emphasis, worship motif |
| Cleansing the temple | sudden action, compressed sequence | moral explanation, Old Testament citation |
| Passion narrative | escalating irony, silence, fractured pacing | narrative order, fulfillment citations, dramatic clarity |

Rudolf Bultmann and other early form critics dismissed Mark’s Gospel as a loose patchwork of oral traditions, lightly stitched together by a primitive eschatological scheme. In their view, Matthew provided the literary and theological coherence that Mark lacked. But further analysis of Mark’s rhetorical devices – beyond the narrow frame of late-classical Greek norms – undermines that judgment. If we assess Mark using modern literary standards, the contrast with Matthew becomes a matter of aesthetics, not competence.

Two of Mark’s favorite narrative devices are incomplete vignettes and doublets. Matthew rarely uses incomplete vignettes, and when he does they are smoothed. Mark’s are abrupt. Matthew and Mark both use doublets extensively, but in Matthew they are didactic and thematic – reinforcing ethical teachings (emphatic parallelism). Mark’s doublets increase the tension between what Jesus teaches and what the disciples want, putting the reader in cognitive competition with the disciples. Incomplete vignettes and doublets are used by the writers for very different purposes.

Incomplete Vignettes

One of Mark’s strangest and most troubling moments is an incomplete vignette that has perplexed or embarrassed some evangelists. In Mark 11:12–14, Jesus sees a fig tree in the distance. He approaches it, finds it has no fruit, and curses it. The next day, the disciples notice the tree has withered. Mark states explicitly that it wasn’t the season for figs.

This, on a simplistic reading, either makes Jesus foolish and ill-tempered or Mark a sloppy writer. Matthew “fixes” it by making the tree wither immediately and not mentioning that figs are out of season. Luke omits the story entirely.

Mark knows it’s weird, and he likes it that way. We can tell that from what Mark does next: he sandwiches the fig tree scene around another one, the cleansing of the temple. First, Jesus curses the fig tree. Then he drives out the money changers in the temple. Then they pass by the now-dead tree. Fig tree → temple → fig tree.

Mark’s execution is ruthlessly efficient. The fig tree has leaves but no fruit, just like the temple – and possibly Jerusalem itself – which looks holy but is spiritually barren. Jesus’s rage and actions in the temple are the fulfillment of the fig tree’s parable. The tree is the temple. It’s been weighed, found wanting, and marked for death.

In Mark 14:3–9, Jesus is at the house of Simon the leper in Bethany. An unnamed woman enters, breaks an alabaster jar of expensive nard, and pours it on Jesus’ head. Some present criticize her for wasting the ointment, suggesting it could have been sold with the proceeds given to the poor. Jesus defends her, saying she has done a “beautiful thing,” anointing his body for burial, and her act will be remembered wherever the gospel is preached. The woman’s identity, motives, and fate are unstated; the critics are anonymous; and the transition to the next scene (Judas’ betrayal, Mark 14:10–11) is abrupt.

Scholars have addressed the perceived incompleteness of Mark 14:3–9 through several lenses. I think Adela Yarbro Collins best accounts for the abrupt transition to Judas. It underscores Mark’s theme of misunderstanding versus true discipleship, with the woman as a model disciple. Its brevity heightens dramatic impact, focusing on the anointing as a prophetic act. The promise that the woman’s act will be remembered (14:9) serves as a meta-narrative climax, linking her deed to the gospel’s spread. A story-level climax would be both unnecessary and distracting.

In Mark 5:25–34, a woman who has suffered from a hemorrhage for twelve years, having spent all her money on ineffective physicians, hears about Jesus, touches his garment in a crowd, and is immediately healed. Mark 5:30–31 states:

And Jesus, perceiving in himself that power had gone out from him, immediately turned about in the crowd and said, “Who touched my garments?” And His disciples said to Him, “You see the crowd pressing in on You, and You say, ‘Who touched Me?’” (ESV).

The disciples question his inquiry given the crowd’s size. The woman confesses, and Jesus affirms her faith, sending her in peace. The verse begs for detail about Jesus’s perception process, his emotional state, and the crowd’s reaction to his question. The question creates cinematic-style suspense, prompting readers to anticipate the woman’s revelation. Past scholars saw the scene as inviting questions about Jesus’s human limitations, but surely gospel harmonization is behind that consideration. Mark’s gospel shows no sign of concern with Jesus’s human limitations.

While Mark 5:25–34 is narratively complete, it contains the kind of internal tension that characterizes Mark’s larger rhetorical style. Jesus does not seem to control the miracle. And while the woman’s healing is affirmed, the scene exposes threads that Mark forces the reader to notice though they are left theologically dangling.

Mark’s narrator withholds the interpretation, even when the narrator sees everything. In this sense, the assertion by past reader-response critics that Mark’s narrator is omniscient is simply too broad a brush to accurately paint this scene. Omniscient yes, but to what end, if the narrator withholds what he knows? The distance between author and narrator here is clear. Mark the author isn’t asking you to see and believe, but to notice and wonder.

Parables, Concealment, and the Reader’s Role

At first glance, Mark’s explanation of parables sounds disturbingly exclusionary. In 4:11–12, Jesus says to his disciples:

To you has been given the secret of the kingdom of God, but for those outside, everything is in parables, so that they may indeed see but not perceive… (ESV)

This seems, on its face, to make parables a kind of punishment – an obscuring of the truth. If Jesus came to proclaim good news, why deliver it in riddles that no one can understand?

The problem fades if we stop imagining Mark’s characters as real-time recipients of salvation and start understanding them as dramatic figures in a rhetorical composition. The parables are not traps laid for first-century peasants. They are tests set before the readers of this gospel. In Mark’s world, the disciples are slow, as are the crowds. But the reader sees what they don’t. The parables are there not to communicate with the characters but to reveal the reader’s insight by comparison.

If we assume the story as told exists to be interpreted, then the parables make perfect sense: they are devices that reward attention. They are rhetorical mirrors. They divide not the faithful from the wicked, but the passive from the alert. In that division, Mark shifts the focus of the gospel – from belief taken on command to perception earned by reading.

The Function of Doublets