Bill Storage


The Arch of Constantine

Posted in History of Art on October 31, 2025

This is for Mike and Andrea, on their first visit to Rome.

Some people take up gardening. I dug into the Arch of Constantine. Deep. I’ll admit it, I got a little obsessed. What started as a quick look turned into a full dig through the dust of Roman politics as seen by art historians and classicists, writers with a gift for making the obvious sound profound and the profound impenetrable. Think of a collaboration between poets, lawyers, and a Latin thesaurus. One question led to another, and before I knew it, I was knee-deep in relief panels, inscriptions, and bitter academic feuds from 1903. If this teaser does anything for you, order a pizza and head over to my long version, revised today to incorporate recent scholarship, which is making great strides.

The Arch of Constantine stands just beside the Colosseum, massive and pale against the traffic. It was dedicated in 315 CE to celebrate Emperor Constantine’s victory over his rival Maxentius at the Battle of the Milvian Bridge. On paper it’s a “triumphal arch,” but that’s not quite true. Constantine never held a formal triumph, and the monument itself was assembled partly from spare parts of older imperial projects.

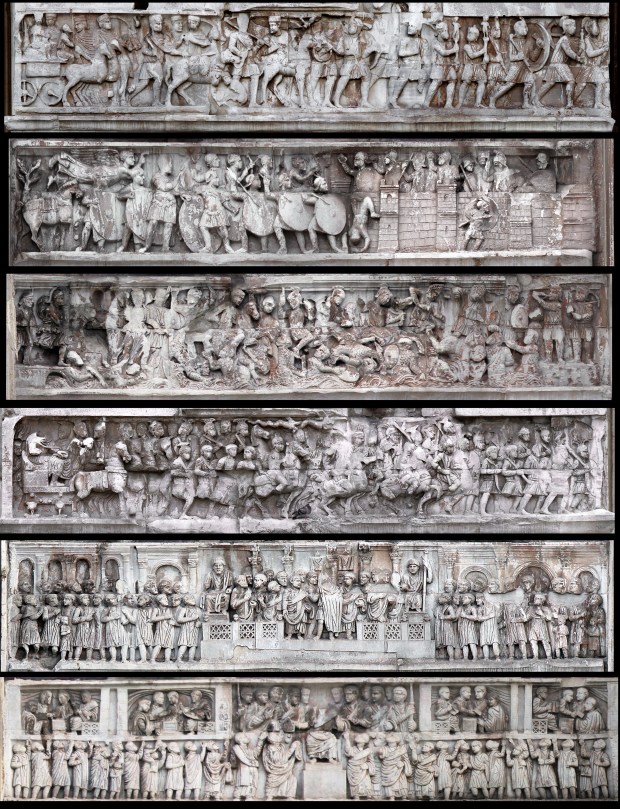

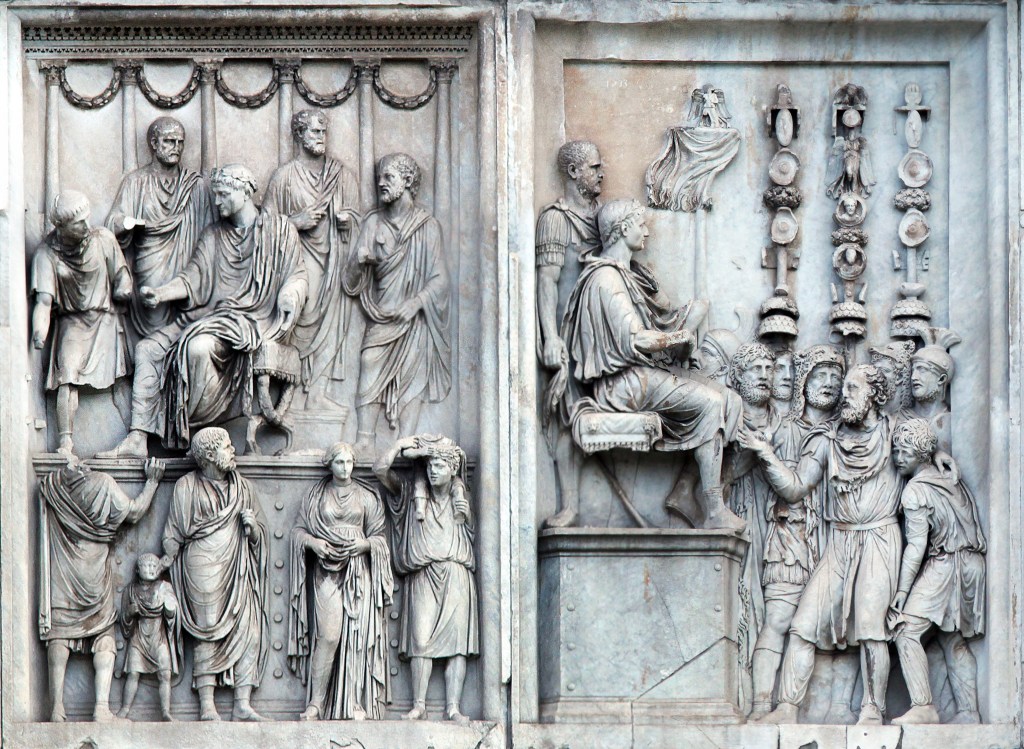

Most of what you see wasn’t made for Constantine at all. His builders raided earlier monuments – especially from the reigns of Hadrian, Trajan, and Marcus Aurelius – and grafted those sculptures onto the new structure. Look closely and you can still spot the mismatches. The heads have been recut. A scene that once showed Emperor Hadrian hunting a lion now shows Constantine doing the honors, with a few clumsy adjustments to the drapery. Other panels, taken from Marcus Aurelius’s monuments, show the emperor addressing troops or granting clemency, only now it’s Constantine’s face and Constantine’s name.

These borrowed panels aren’t just decoration. They were carefully chosen to tie Constantine to the “good emperors” of the past, especially Marcus Aurelius, the philosopher-king. By mixing their images with his own, Constantine claimed continuity with Rome’s golden age while quietly erasing the messy years between.

The long strip of carving that wraps around the lower part of the arch is the one section made entirely for Constantine’s time. It’s a running narrative of his civil war against Maxentius. Starting on the west side, you can see Constantine setting out from Milan, soldiers marching behind his chariot. Around the corner, he besieges a walled city – probably Verona – and towers over his men, twice their size, a new kind of emperor who commands by sheer presence. The next panel shows the chaotic battle at the Milvian Bridge, where Maxentius’s troops drown in the Tiber while Constantine’s army presses forward. The story ends with Constantine entering Rome and addressing the citizens from a raised platform, a ruler both human and divine.

The figures look stiff and simplified compared to the older reliefs above them, but that’s part of the shift the arch represents. Art was moving away from naturalism toward symbolism. Constantine isn’t shown as an individual man but as an idea: the chosen ruler, the earthly image of divine authority.

That message runs through the inscription carved across the top. It declares that Constantine won instinctu divinitatis – “by divine inspiration.” The phrase is unique; no one had used it before. It’s deliberately vague, as if leaving room for different gods to take the credit. For pagans, it could mean Apollo or Sol Invictus. For Christians, it sounded like the hand of the one God. Either way, it announced a new kind of emperor, one who ruled not just with the favor of the gods but through them.

The Arch of Constantine isn’t simply a monument to a battle. It’s a scrapbook of Rome’s artistic past and a statement of political legitimacy. Read carefully, it is an early sign that the empire was in for religion, hard times, and down-sizing.

Photos and text copyright 2025 by William K Storage

The End of Science Again

Posted in History of Science, Philosophy of Science on October 24, 2025

Dad says enough of this biblical exegesis and hermeneutics nonsense. He wants more science and history of science for iconoclasts and Kuhnians. I said that if prophetic exegesis was good enough for Isaac Newton – who spent most of his writing life on it – it’s good enough for me. But to keep the family together around the spectroscope, here’s another look at what’s gone terribly wrong with institutional science.

It’s been thirty years since John Horgan wrote The End of Science, arguing that fundamental discovery was nearing its end. He may have overstated the case, but his diagnosis of scientific fatigue struck a nerve. Horgan claimed that major insights – quantum mechanics, relativity, the big bang, evolution, the double helix – had already given us a comprehensive map of reality unlikely to change much. Science, he said, had become a victim of its own success, entering a phase of permanent “normal science,” to borrow Thomas Kuhn’s term. Future research, in his view, would merely refine existing paradigms, pose unanswerable questions, or spin speculative theories with no empirical anchor.

Horgan still stands by that thesis. He notes the absence of paradigm-shifting revolutions and a decline in disruptive research. A 2023 Nature study analyzed forty-five million papers and nearly four million patents, finding a sharp drop in genuinely groundbreaking work since the mid-twentieth century. Research increasingly consolidates what’s known rather than breaking new ground. Horgan also raises the philosophical point that some puzzles may simply exceed our cognitive reach – a concern with deep historical roots. Consider consciousness, quantum interpretation, or other problems that might mark the brain’s limits. Perhaps AI will push those limits outward.

Students of History of Science will think of Auguste Comte’s famous claim that we’d never know the composition of the stars. He wasn’t stupid, just cautious. Epistemic humility. He knew collecting samples was impossible. What he couldn’t foresee was spectrometry, where the wavelengths of light a star emits reveal the quantum behavior of its electrons. Comte and his peers could never have imagined that; it was data that forced quantum mechanics upon us.

The same confidence of finality carried into the next generation of physics. In 1874, Philipp von Jolly reportedly advised young Max Planck not to pursue physics, since it was “virtually a finished subject,” with only small refinements left in measurement. That position was understandable: Maxwell’s equations unified electromagnetism, thermodynamics was triumphant, and the Newtonian worldview seemed complete. Only a few inconvenient anomalies remained.

Albert Michelson, in 1894, echoed the sentiment. “Most of the grand underlying principles have been firmly established,” he said. Physics had unified light, electricity, magnetism, and heat; the periodic table was filled in; the atom looked tidy. The remaining puzzles – Mercury’s orbit, blackbody radiation – seemed minor, the way dark matter does to some of us now. He was right in one sense: he had interpreted his world as coherently as possible with the evidence he had. Or had he?

Michelson’s remark came after his own 1887 experiment with Morley – the one that failed to detect Earth’s motion through the ether and, in hindsight, cracked the door to relativity. The irony is enormous. He had already performed the experiment that revealed something was deeply wrong, yet he didn’t see it that way. The null result struck him as a puzzle within the old paradigm, not a death blow to it. The idea that the speed of light might be constant for all observers, or that time and space themselves might bend, was too far outside the late-Victorian imagination. Lorentz, FitzGerald, and others kept right on patching the luminiferous ether.

Logicians will recognize the case for pessimistic meta-induction here: past prognosticators have always been wrong about the future, and inductive reasoning says they will be wrong again. Horgan may think his case is different, but I can’t see it. He was partially right, but overconfident about completeness – treating current theories as final, just as Comte, von Jolly, and Michelson once did.

Where Horgan was most right – territory he barely touched – is in seeing that institutions now ensure his prediction. Science stagnates not for lack of mystery but because its structures reward safety over risk. Peer review, grant culture, and the fetish for incrementalism make Kuhnian normal science permanent. Scientific American canned Horgan soon after The End of Science appeared. By the mid-90s, the magazine had already crossed the event horizon of integrity.

While researching his book, Horgan interviewed Edward Witten, already the central figure in the string-theory marketing machine. Witten rejected Kuhn’s model of revolutions, preferring a vision of seamless theoretical progress. No surprise. Horgan seemed wary of Witten’s confidence. He sensed that Witten’s serene belief in an ever-tightening net of theory was itself a symptom of closure.

From a Feyerabendian perspective, the irony is perfect. Paul Feyerabend would say that when a scientific culture begins to prize formal coherence, elegance, and mathematical completeness over empirical confrontation, it stops being revolutionary. In that sense, the Witten attitude itself initiates the decline of discovery.

String theory is the perfect case study: an extraordinary mathematical construct that’s absorbed immense intellectual capital without yielding a falsifiable prediction. To a cynic (or realist), it looks like a priesthood refining its liturgy. The Feyerabendian critique would be that modern science has been rationalized to death, more concerned with internal consistency and social prestige than with the rude encounter between theory and world. Witten’s world has continually expanded a body of coherent claims – they hold together, internally consistent. But science does not run on a coherence model of truth. It demands correspondence. (Coherence vs. correspondence models of truth was a big topic in analytic philosophy in the last century.) By correspondence theory of truth, we mean that theories must survive the test against nature. The creation of coherent ideas means nothing without it. Experience trumps theory, always – the scientific revolution in a nutshell.

Horgan didn’t say – though he should have – that Witten’s aesthetic of mathematical beauty has institutionalized epistemic stasis. The problem isn’t that science has run out of mysteries, as Horgan proposed, but that its practitioners have become too self-conscious, too invested in their architectures to risk tearing them down. Galileo rolls over.

Horgan sensed the paradox but never made it central. His End of Science was sociological and cognitive; a Feyerabendian would call it ideological. Science has become the very orthodoxy it once subverted.

Mark as Midrash

Posted in Biblical Criticism on October 23, 2025

Some New Testament scholars argue that the Gospel of Mark synthesizes a Jesus narrative purely from Old Testament passages. On this view, the writer of Mark was not recounting eyewitness memory or even oral history but was constructing a narrative solely using Israel’s scriptures as template and sourcebook. The basic idea is often called scripturalization or midrashic composition, after the rabbinic tradition of midrash halakha, which seeks to uncover deeper meaning in scripture by delving into its gaps.

A quick look at the case for gospel construction starts with the direct scriptural allusions. Mark is thick with echoes of the OT that are not simply ornamental; most are structural. Mark’s baptism of Jesus echoes Exodus and Isaiah’s “prepare the way” (Mark 1:2–3 cites Malachi and Isaiah). Jesus’s wilderness temptation scene mirrors Israel’s 40 years in the desert, and also Elijah’s and Moses’s desert experiences. The feeding of the 5,000 resembles Moses feeding Israel with manna and Elisha multiplying loaves. Mark’s transfiguration scene parallels the Sinai theophany: a bright cloud, a divine voice, terrified companions. The Passion Narrative is rich with Psalmic and prophetic motifs (Psalm 22, Isaiah 53, Zechariah 13). Curiously, Mark rarely mentions his source material.

Scholars arguing for scripturalization in Mark point to typology and scripted roles. Jesus is cast as a type of multiple OT figures: Moses, David, Elijah, Elisha, Joseph, and especially the Suffering Servant. As they see it, Mark doesn’t merely reference these figures – he constructs scenes that replay their stories. The cleansing of the temple recalls prophetic critiques in Jeremiah and Malachi. The entry into Jerusalem on a colt enacts Zechariah 9:9. The cry of dereliction on the cross (Mark 15:34) is lifted straight from Psalm 22.

They also cite lack of biographical detail. Mark omits nearly everything one would expect in the life of a historical figure. There is no discussion of birth, family lineage, or youth. (If this comes as a surprise, see my deeper analysis here.) Mark takes no interest in Jesus’s appearance, habits, or daily life. Indeed, some suggest that when you remove OT source material, nothing is left of Mark’s Jesus.

Mark’s gospel moves in large, literary strokes – like a passion play or prophetic drama. This line of argument has been advanced by Thomas L. Brodie, who suggests the gospel is “a mosaic of scripture” rather than a biography, and by Randel Helms, who argues in Gospel Fictions that Mark invents Jesus’s deeds by repurposing OT texts.

Counterpoints to the argument claim that Mark used scripture as language, not as a blueprint. The ancient Jewish imagination was steeped in scripture. To narrate meaningfully was to echo scripture. But this doesn’t mean the events were invented wholesale. Modern minds separate “event” from “interpretation.” Ancient writers, including the Greeks (Mark’s author was unquestionably Greek), did not – as is evidenced by The Iliad and the Odyssey. Even if a healing story echoes Elisha, it doesn’t follow that the story was created ex nihilo.

Opponents of scripturalization also cite dissimilarities and non-scriptural details. Some episodes lack clear scriptural antecedents, e.g., the episode with the Syrophoenician woman (Mark 7), or Jesus’s use of spittle to heal a blind man. Likewise, Jesus’s family thinking he’s mad (Mark 3:21) and the dullard disciples don’t map onto Jewish scripture. Though, as I argue here, Mark’s narrative role for the disciples might operate as a higher layer on top of any scripturalization.

Many scholars, particularly those with theological perspectives, still maintain that Mark draws on oral traditions – stories shaped by community memory and theology, but not necessarily fabricated from texts. Scripture may provide the interpretive frame, but not always the content.

The same scholars often ask: if Mark is working from scratch using only OT texts, why invent such a flawed and cryptic messiah? Why depict such dense disciples and an abandoned, dying Jesus? This is the “criterion of embarrassment” (still controversial). It suggests Mark didn’t invent everything. If he had, he would have left out the embarrassing stuff. Some material looks like it had to be explained, not devised. I find this unconvincing, because I believe Mark used “embarrassing” moments as a literary device with great skill.

The strongest position may be a middle ground. I find this plausible, purely from a literary perspective, independent of any argument about the historicity of Jesus or any position on Mark’s beliefs or theological agenda. Mark likely used scripture to narrate meaning, not to fabricate events. He may have witnessed the events, heard them second hand, received them in oral tradition, or created them as literature; the text remains silent on this. He does something better than either writing history or inventing fiction, and he deserves credit for keeping us confused. He puts the reader in command. Mark produced a sacred narrative in a form recognizable to his audience – a kind of theological storytelling that blurs the line between reporting and interpreting.

_____

For those interested in Mark’s use of the OT, here’s my list. You may know of others.

| Mark Passage | OT Source | Nature of Connection | Comment |
|---|---|---|---|
| 1:2–3 | Malachi 3:1; Isaiah 40:3 | Direct quotation | Combines two texts to frame John the Baptist as forerunner; sets tone of fulfillment through re-interpretation. |
| 1:11 (Voice from heaven: “You are my beloved Son…”) | Psalm 2:7; Isaiah 42:1 | Allusion | Merges royal and Servant imagery: messianic kingship and chosen Servant. |
| 1:12–13 (Temptation in the wilderness) | Exodus 14–16; 1 Kings 19; Psalm 91 | Typological echo | Jesus relives Israel’s wilderness testing and Elijah’s exile. |
| 2:23–28 (Plucking grain on Sabbath) | 1 Samuel 21:1–6 | Narrative parallel | David’s hunger legitimates violation of ritual law; Jesus invokes same precedent. |
| 4:3–9, 14–20 (Parable of the sower) | Isaiah 6:9–10 | Quotation and thematic link | Hearing but not understanding, reinforcing prophetic pattern of rejection. |
| 4:35–41 (Calming the storm) | Psalm 107:23–30; Jonah 1 | Thematic echo | God stills storm; Jonah and Psalm depict divine power over chaos. |
| 5:1–20 (Gerasene demoniac) | Isaiah 65:1–7 | Imagistic echo | The “tombs” and unclean imagery recall Isaiah’s picture of Israel’s impurity. |
| 6:34 (“Sheep without a shepherd”) | Numbers 27:17; Ezekiel 34:5 | Quotation | Traditional prophetic critique of failed leaders. |
| 6:41; 8:6 (Feeding miracles) | Exodus 16; 2 Kings 4:42–44; Psalm 23 | Typological echo | Moses, Elisha, and the Shepherd provide bread from heaven. |
| 8:31; 9:12; 10:33–34 (Predictions of suffering) | Isaiah 50:6; 52:13–53:12; Psalm 22 | Allusion | The Servant’s suffering and the righteous sufferer of the Psalms form the template. |
| 9:12 (“How is it written… that he should suffer many things?”) | Isaiah 53 (esp. 3, 5, 12) | Indirect reference | Points to the “written” prophecy of the suffering righteous one. |
| 10:45 (“To give his life a ransom for many”) | Isaiah 53:10–12 | Conceptual allusion | “For many” mirrors “he bore the sin of many”; Servant’s life given for others. |
| 14:24 (“Blood of the covenant poured out for many”) | Exodus 24:8; Isaiah 53:12 | Typological + verbal echo | Mosaic covenant language fused with Servant’s self-sacrifice. |
| 14:27 (“I will strike the shepherd…”) | Zechariah 13:7 | Direct quotation | Predicts scattering of disciples as flock. |
| 15:24 (Casting lots for garments) | Psalm 22:18 | Direct quotation | Psalm of the suffering righteous man reframed as prophetic. |
| 15:29–32 (Mockery at the cross) | Psalm 22:7–8; Wisdom 2:13–20 | Thematic echo | Taunts of the righteous sufferer repeated verbatim. |
| 15:33 (Darkness at noon) | Amos 8:9 | Prophetic motif | Cosmic mourning over injustice. |
| 15:34 (“My God, my God, why have you forsaken me?”) | Psalm 22:1 | Direct quotation | Anchors the Passion in Israel’s lament tradition. |
| 16:5 (Young man in white) | Daniel 10:5–6 | Theophany echo | Heavenly messenger motif. |

Physics for Cold Cavers: NASA vs Hefty and Husky

Posted in Engineering & Applied Physics on October 21, 2025

In the old days, every caver I knew carried a space blanket. Many still do. They come vacuum-packed in little squares that look like tea bricks, promising to help prevent hypothermia. They were originally marketed as NASA technology – because, in fact, they were. The original Mylar “space blanket” came out of the 1960s U.S. space program, meant to keep satellites and astronauts thermally stable in the vacuum of orbit. They reflected infrared radiation superbly, but only because they were surrounded by nothing. In a cave, you’re surrounded by something – cold, wet air – and that’s a very different problem.

A current ad says:

Reflects up to 90% of body heat to help prevent hypothermia and maintain core temperature during emergencies.

Another says:

Losing body heat in emergencies can quickly lead to hypothermia. Without understanding the science behind thermal protection, you might not use these tools effectively when they’re needed most.

This is your standard science-washing, techno-fetish, physics-theater advertising. Feynman called it cargo-cult science. But even Google Gemini told me:

For a vapor barrier in an emergency, a space blanket is better than a trash bag because it reflects radiant heat.

Premise true, conclusion false – non sequitur, an ancient Roman would say. It seems likely that advertising narratives played a quiet role in Google’s large-model-based AI system’s space-blanket blunder. That’s a topic for another time I guess.

Back to cold reality. At 50 degrees and 100 percent humidity, radiative heat loss is trivial. Radiative transfer at 10–15°C delta T is a rounding error compared to where your heat really goes. What matters is conduction to damp rock and convection to moving air, both of which a shiny sheet does little to stop. Worse, a rectangle of Mylar always leaks. Every fold and corner is a draft path, and soon you’re sitting in a crinkling echo chamber, shivering and reflecting nothing but a bad decision.

The contractor-size trash bag, meanwhile, is an unsung hero of survival. Poke a face hole near the bottom, pull it over your head, and you have a passable poncho that sheds drips instead of channeling them down your collar. You can sit on it, wrap your feet, and trap a pocket of warm, humid air that actually slows heat loss. Let a little moisture vent, and it works for an hour or two without turning clammy.

The space blanket survives mostly on myth – the glamour of NASA and the gleam of techno-hype. The homely trash bag simply works – better than anything near its size will. One was made for space, the other for the world we actually live in. Losing body heat in emergencies can quickly lead to hypothermia. Without getting the basics of thermal protection, you might not pick the best tool.

—

For those who need the physics, read on…

Heat leaves the body by three routes: conduction, convection, and radiation. Conduction is direct contact – warm skin meeting cool air, damp limestone, and mud. Convection is moving air stealing that warmed boundary layer and replacing it with fresh, cold air. Warm air is lighter. You warm the nearby air. It rises and sucks in fresh, cooler air. A circulating current arises.

At 50°F in a cave, conduction and convection dominate. The air is dense and wet, which means excellent thermal coupling. Each tiny current of air wicks warmth away far more effectively than dry air would. A trash bag stops that process cold. By trapping a thin layer of air and sealing it from drafts, it cuts off convective loss almost completely. It also slows conductive loss because still air, though not as good as down, is a decent insulator.

Radiation is infrared energy emitted from your skin and clothing into the surroundings. It follows the Stefan–Boltzmann law: power scales with the fourth power of absolute temperature. That sounds dramatic until you run the numbers. Between an 85°F body (skin temperature, roughly 303 K) and a 50°F cave wall (about 283 K), the difference is about 19 K.

$q = \sigma A T^4$, where $q$ is the power radiated by an ideal emitter, $\sigma$ is the Stefan–Boltzmann constant, $T$ is the absolute temperature of the surface in kelvins, and $A$ is the radiating area. The net loss is what the body emits minus what it absorbs from the walls: $q_{net} = \sigma A \,(T_{body}^4 - T_{wall}^4)$.

Plug it in, and the radiative loss is about one percent of total heat loss – barely measurable compared to what you’re losing through air movement. The “90 percent reflection” claim is technically true in a vacuum, but in a cave or in Yosemite it’s a rounding error dressed up as science.
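For readers who want to plug in their own numbers, here is a minimal sketch of the radiative term alone. It assumes an ideal emitter (emissivity 1) and a one-square-meter surface by default; those defaults and the function names are illustrative choices, not figures from the ads or from any standard. It does not model conduction or convection, so it cannot produce a percentage of total heat loss by itself.

```python
# Net radiative heat exchange between a warm surface and cooler surroundings,
# using the Stefan-Boltzmann law. Emissivity and area defaults are
# illustrative assumptions.

SIGMA = 5.670374419e-8  # Stefan-Boltzmann constant, W/(m^2 K^4)


def fahrenheit_to_kelvin(temp_f: float) -> float:
    """Convert degrees Fahrenheit to kelvins."""
    return (temp_f - 32.0) * 5.0 / 9.0 + 273.15


def net_radiative_power(surface_k: float, surroundings_k: float,
                        area_m2: float = 1.0, emissivity: float = 1.0) -> float:
    """Net power in watts radiated from the surface to the surroundings."""
    return emissivity * SIGMA * area_m2 * (surface_k**4 - surroundings_k**4)


if __name__ == "__main__":
    body_k = fahrenheit_to_kelvin(85.0)   # skin temperature used in the text
    wall_k = fahrenheit_to_kelvin(50.0)   # cave wall temperature used in the text
    print(f"surface: {body_k:.1f} K, wall: {wall_k:.1f} K, "
          f"difference: {body_k - wall_k:.1f} K")
    print(f"net radiative flux: {net_radiative_power(body_k, wall_k):.1f} W per m^2")
```

The result is sensitive to the surface temperature you feed it. The outer face of wet clothing sits much closer to cave temperature than bare skin does, so passing a cooler `surface_k` gives a correspondingly smaller radiative term.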

So the short version: the shiny Mylar sheet reflects an irrelevant component of heat loss while ignoring the main ones. The humble and opaque trash bag attacks the real physics of staying warm. It doesn’t sparkle; it gets the job done.

I’m Only Neurotic When – Engineering Edition

Posted in Commentary, Engineering & Applied Physics on October 7, 2025

The USB Standard of Suffering

The USB standard was born in the mid-1990s from a consortium of Intel, Microsoft, IBM, DEC, NEC, Nortel, and Compaq. They formed the USB Implementers Forum to create a universal connector. The four pins for power and data were arranged asymmetrically to prevent reverse polarity damage. But the mighty consortium gave us no way to know which side was up.

The Nielsen Norman Group found that users waste ten seconds per insertion. Billions of plugs times thirty years. We could have paved Egypt with pyramids. I’m not neurotic. I just hate death by a thousand USB cuts.

The Dyson Principle

I admire good engineering. I also admire honesty in materials. So naturally, I can’t walk past a Dyson vacuum without gasping. The thing looks like it was styled by H. R. Giger after a head injury. Every surface is ribbed, scooped, or extruded as if someone bred Google Gemini with CAD software, provided the prompt “manifold mania,” and left it running overnight. Its transparent canister resembles an alien lung. There are ducts that lead nowhere, fins that cool nothing, and bright colors that imply importance. It’s all ornamental load path.

To what end? Twice the size and weight of a sensible vacuum, with eight times the polar moment of inertia. (You get the math – of course you do.) You can feel it fighting your every turn, not from friction, but from ego. Every attempt at steering carries the mass distribution of a helicopter rotor. I’m not cleaning a rug, I’m executing a ground test of a manic gyroscope.
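For anyone who would rather see the math than take it on faith, a tiny sketch. It assumes the two vacuums are geometrically similar, so that moment of inertia scales as mass times length squared and the shape factor cancels in the ratio. The function name is made up for illustration.

```python
# Scaling sketch: moment of inertia goes as mass times length squared.
# For two bodies of the same shape, the shape factor cancels in the ratio,
# so only the scale factors matter.

def inertia_ratio(mass_scale: float, length_scale: float) -> float:
    """Ratio of moments of inertia for two geometrically similar bodies."""
    return mass_scale * length_scale**2


if __name__ == "__main__":
    # Twice the weight and twice the size, as in the text: 2 * 2**2
    print(inertia_ratio(2.0, 2.0))
```

Doubling the mass contributes a factor of two and doubling the dimensions a factor of four, which is where the eight comes from.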

Dyson claims it never loses suction. Fine, but I lose patience. It’s a machine designed for showroom admiration, not torque economy. Its real vacuum is philosophical: the absence of restraint. I’m not neurotic. I just believe a vacuum should obey the same physical laws as everything else in my house. I’m told design is where art meets engineering. That may be true, but in Dyson’s case, it’s also where geometry goes to die. There’s form, there’s function, and then there’s what happens when you hire a stylist who dreams in centrifugal-manifold Borg envy.

Frank Lloyd Wright’s Physics

“No one but Frank Lloyd Wright could have designed these cantilevered concrete roof supports,” the tour guide at the Robie House intoned reverently, as though he were describing Moses with a T-square. True – and Mr. Wright couldn’t have either. The man drew poetry in concrete, but concrete does not care for poetry. It likes compression. It hates tension and bending. It’s like trying to make a violin out of breadsticks.

They say Wright’s genius was in making buildings that defied gravity. True in a sense – but only because later generations spent fifty times his budget figuring ways to install steel inside the concrete so gravity and the admirers of his genius wouldn’t notice. We have preserved his vision, yes, but only through subterfuge and eternal rebar vigilance.

Considered the “greatest American architect of all time” by people who can name but one architect, Wright made it culturally acceptable for architects to design expressive, intensely personal museums. The Guggenheim continues to thrill visitors with a unique forum for contemporary art. Until they need the bathroom – a feature more of an afterthought for Frank. Try closing the door in there without standing on the toilet. Paris hotels took a cue.

The Interface Formerly Known as Knob

Somewhere, deep in a design studio with too much brushed aluminum and not enough common sense, a committee decided that what drivers really needed was a touch screen for everything. Because nothing says safety like forcing the operator of a two-ton vehicle to navigate a software menu to adjust the defroster.

My car had a knob once. It stuck out. I could find it. I could turn it without looking. It was a miracle of tactile feedback and simple geometry. Then someone decided that physical controls were “clutter.” Now I have a 12-inch mirror that reflects my fingerprints and shame. To change the volume, I have to tap a glowing icon the size of an aspirin, located precisely where sunlight can erase it. The radio tuner is buried three screens deep, right beside the legal disclaimer that won’t go away until I hit Accept. Every time I start the thing. And the Bluetooth? It won’t connect while the car is moving, as if I might suddenly swerve off the road in a frenzy of unauthorized pairing. Design meets an army of failure-to-warn attorneys.

Human factors used to mean designing for humans. Now it means designing obstacles that test our compliance. I get neurotic when I recall a world where you could change the volume by touch instead of prayer.

Automation Anxiety

But the horror of car automation goes deeper, far beyond its entertainment center. The modern car no longer trusts me. I used to drive. Now I negotiate. Everything’s “smart” except the decisions. I rented one recently – some kind of half-electric pseudopod that smelled of despair and fresh software – and tried to execute a simple three-point turn on a dark mountain road. Halfway through, the dashboard blinked, the transmission clunked, and without warning the thing threw itself into Park and set the emergency brake.

I sat there in the dark, headlamps cutting into trees, wondering what invisible crime I’d committed. No warning lights, no chime, no message – just mutiny. When I pressed the accelerator, nothing. Had it died of fright? Then I remembered: modern problems require modern superstitions. I turned it off and back on again. Reboot – the digital age’s holy rite of exorcism. It worked.

Only later did I learn, through the owner’s manual’s runic footnotes, that the car had seen “an obstacle” in the rear camera and interpreted it as a cliff. In reality it was a clump of weeds. The AI mistook grass for death.

So now, in 2025, the same species that landed on the Moon has produced a vehicle that prevents a three-point turn for my own good. Not progress, merely the illusion of it – technology that promises safety by eliminating the user. I’m not neurotic. I just prefer my machines to ask before saving my life by freezing in place as headlights come around the bend.

The Illusion of Progress

There’s a reason I carry a torque wrench. It’s not to maintain preload. It’s to maintain standards. Torque is truth, expressed in foot-pounds. The world runs on it.

Somewhere along the way, design stopped being about function and started being about feelings. You can’t torque a feeling. You can only overdo it. Hence the rise of things that are technically advanced but spiritually stupid. Faucets that require a firmware update, refrigerators with Twitter accounts. Cars that disable half their features because you didn’t read the EULA while merging onto the interstate.

I’m told this is innovation. No, it’s entropy with a bottomless budget. After the collapse, I expect future archaeologists to find me in a fossilized Subaru, finger frozen an inch from the touchscreen that controlled the wipers.

Until then, I’ll keep my torque wrench, thank you. And I’ll keep muting TikTok’s #lifehacks tag, before another self-certified engineer shows me how to remove stripped screws with a banana. I’m not neurotic. I’ve learned to live with people who do it wrong.

I’m Only Neurotic When You Do It Wrong

Posted in Commentary on October 6, 2025

I don’t think of myself as obsessive. I think of myself as correct. Other people confuse those two things because they’ve grown comfortable in a world that tolerates sloppiness. I’m only neurotic when you do it wrong.

In Full Metal Jacket, Stanley Kubrick mocks the need for precision. Gunnery Sergeant Hartman, played by R. Lee Ermey, has a strict regimen for everything from cellular function on up. Kubrick has Hartman tell Private Pyle, “If there is one thing in this world that I hate, it is an unlocked footlocker!” Of course, Hartman hates an infinity of things, but all of them are things we secretly hate too. For those who missed the point, Kubrick has the colonel later tell Joker, “Son, all I’ve ever asked of my Marines is that they obey my orders as they would the word of God.”

The facets of life lacking due attention to detail are manifold, but since we’ve started with entertainment, let’s stay there. Entertainment budgets dwarf those of most countries. All I’ve ever asked of screenwriters is to hire historical consultants who can spell anachronism. Kubrick is credited with meticulous attention to detail. Hah. He might learn something from Sgt. Hartman. In Kubrick’s Barry Lyndon duel scene, a glance over Lord Bullingdon’s shoulder reveals a map with a decorative picture of a steam train, something not invented for another fifty years. The scene of the Lyndon family finances shows receipts bound by modern staples. Later, someone mentions the Kingdom of Belgium. Oops. Painterly cinematography and candlelit genius, yes – but the first thing that comes to mind when I hear Barry Lyndon is the Dom Pérignon bottle glaring on the desk, half a century out of place.

Soldiers carry a 13-star flag in The Patriot. Troy features zippers. Braveheart wears a kilt. Andy Dufresne hides his tunnel behind a Raquel Welch poster in Shawshank Redemption. Forrest Gump owns Apple stock. Need I go on? All I’ve ever asked of filmmakers is that they get every last detail right. I’m only neurotic when they blow it.

Take song lyrics. These are supposedly the most polished, publicly consumed lines in the English language. Entire industries depend on them. There are producers, mixers, consultants galore – whole marketing teams – and yet no one, apparently, ever said, “Hold on, Jim, that doesn’t make any sense.”

Jim Morrison, I mean. Riders on the Storm is moody and hypnotic. On first hearing I settled in for what I knew, even at twelve, was an instant classic. Until he says of the killer: “his brain is squirming like a toad.” Not the brain of a toad, not a brain that toaded. There it was – a mental image of a brain doing a toad impression. The trance was gone. Minds squirm, not toads. Toads hold still, then hop, then hold still again. Rhyming dictionaries existed in 1970. He could have found anything else. Try: “His mind was like a dark abode.” Proofreader? Editor? QA department? Peer review? Fifty years on, I still can’t hear it without reliving my early rock-crooner trauma.

Rocket Man surely ranks near Elton John’s best. But clearly Elton is better at composition than at contractor oversight. Bernie Taupin wrote, “And all this science, I don’t understand.” Fair. But then: “It’s just my job, five days a week.” So wait, you don’t understand science, but NASA gave you a five-day schedule and weekends off because of what skill profile? Maybe that explains Challenger and Columbia.

Every Breath You Take by The Police. It’s supposed to be about obsession, but Sting (Sting? – really, Gordon Sumner?) somehow thought “every move you make, every bond you break” sounded romantic. Bond? Who’s out there breaking bonds in daily life? Chemical engineers? Sting later claimed people misunderstood it, but that’s because it’s badly written. If your stalker anthem is being played at weddings, maybe you missed a comma somewhere, Gordon.

“As sure as Kilimanjaro rises like Olympus above the Serengeti,” sings Toto in Africa. Last I looked, Kilimanjaro was in Tanzania, 200 miles from the Serengeti. Olympus is in Greece. Why not “As sure as the Eiffel Tower rises above the Outback”? The lyricist admitted he wrote it based on National Geographic photos. Translation: “I’m paid to look at pictures, not read the captions.”

“Plasticine porters with looking glass ties,” wrote John Lennon in Lucy in the Sky with Diamonds. Plasticine must have sounded to John like some high-gloss super-polymer. But as the 1960s English-speaking world knew, Plasticine is a children’s modeling clay. Were these porters melting in the sun? No other psychedelic substances available that day? The smell of kindergarten fails to transport me into Lennon’s hallucinatory dream world.

And finally, Take Me Home, Country Roads. This one I take personally. John Denver, already richer than God, sat down to write a love letter to West Virginia and somehow imported the Blue Ridge Mountains and Shenandoah River from Virginia. Maybe he looked at an atlas once, diagonally. The border between WV and VA is admittedly jagged, but at least try to feign some domain knowledge. Apologists say he meant blue-ridged mountains or west(ern) Virginia – which only makes it worse. The song should have been called Almost Geographically Adjacent to Heaven.

Precision may not make art, but art that ignores precision is just noise with a budget. I don’t need perfection – only coherence, proportion, and the occasional working map. I’m not obsessive. I just want a world where the train on the wall doesn’t leave the station half a century early. I’ve learned to live among the lax, even as they do it all wrong.

The Comet, the Clipboard, and the Knife

Posted in Commentary on October 2, 2025

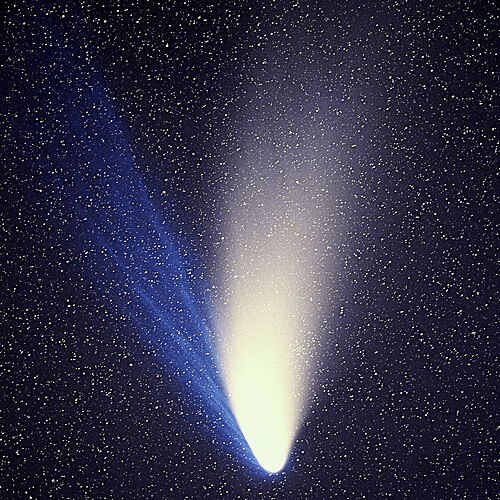

Background: My grandfather saw Comet Halley in 1910, and it was the biggest deal since the Grover Cleveland inaugural bash. We discussed it – the comet, not the inaugural – often in my grade school years. He told me of “comet pills” and kooks who killed themselves fearing cyanogens. Halley would return in 1986, an unimaginably far off date. Then out of nowhere in 1973, Luboš Kohoutek discovered a new comet, an invader from the distant Oort cloud – the flyover states of our solar system – and it was predicted to be the comet of the century. But Comet Kohoutek partied too hard somewhere near Saturn and arrived hungover, barely visible. And when Halley finally neared the sun in 1986, the earth was 180 degrees from it. Halley, like Kohoutek, was a flop. But 1996 brought Comet Hale-Bopp. Now, that was a sight even for urban stargazers. I saw it from Faneuil Hall in Boston and then bright above the Bay Bridge in San Francisco. It hung around for a year, its dual tails unforgettable. And as with anything cool, zealots stained its memory by freaking out.

A Sermon by Reverend Willie Storage, Minister of Peculiar Gospel

Brethren, we take our text today from The Book of Cybele, Chapter Knife, Verse Twenty-Three: “And lo, they danced in the street, and cut themselves, and called it joy, and their blood was upon their sandals, and the crowd applauded and took up the practice, for the crowd cannot resist a parade.”

To that we add The Epistle of Origen to the Scissors, Chapter Three, Verse Nine: “If thy member offend thee, clip it off, and if thy reason offend thee, chop that too, for what remains shall be called purity.”

These ancient admonitions are the ancestors of our story today, which begins not in Alexandria, nor the temples of Asia Minor, nor the starving castles of Languedoc, but in California, that golden land where individuality is a brand, rebellion is a style guide, and conformity is called freedom. Once it was Jesus on the clouds, then the Virgin in the sun, then a spaceship hiding behind a comet’s tail.

Thus have the ages spoken, and thus, too, spoke California in the year of our comet, 1997, when Hale-Bopp streaked across the sky like a match-head struck on the dark roof of the world. In Iowa, folk looked up and said, “Well, I’ll be damned – pass the biscuits.” In California, they looked up and said, “It conceals a spaceship,” and thirty-nine of them set their affairs in order, cut their hair to regulation style and length, pulled on black uniforms, laced up their sneakers, “prepared their vehicles for the Great Next Level,” and died at their own hands.

Now, California is the only place on God’s earth where a man can be praised for “finding himself” by joining a committee, and then be congratulated for the originality and bravery of this act. It is the land of artisan individuality in bulk: rows of identically unique coffee shops, each an altar to self-expression with the same distressed wood and imitation Edison bulbs. Rows of identically visionary cults, each one promising your personal path to the universal Next Level. Heaven’s Gate was not a freak accident of California. It was California poured into Grande-size cups and called “Enlightenment.”

Their leader, Do – once called Marshall Applewhite or something similarly Texan – explained that a spacecraft followed the comet, hiding like a pea under a mattress, ready to transport them to salvation. His co-founder, Ti, had died of cancer, inconveniently, but Do explained it in terms Homer Simpson could grasp: Ti had merely “shed her vehicle.” More like a Hertz than a hearse, and the rental period of his faithful approached its earthly terminus. His flock caught every subtle allusion. Thus did they gather, not as wild-eyed fanatics, but as the most polite of martyrs.

The priests of Cybele danced and bled. Origen of Alexandria may have cut himself off in private, so to speak, as Eusebius explains it. The Cathars starved politely in Languedoc. And the Californians, chased by their own doctrine into a corner of Rancho Santa Fe creativity, bought barbiturates at a neighborhood pharmacy, added a vodka chaser, then followed a color-coded procedure and lay down in rows like corn in a field. Their sacrament was order, procedure, and videotaped cheer. Californians, after all, enjoy their own performances.

Even the ancients were sometimes similarly inclined. Behold a relief from Ostia Antica of a stern priest nimbly handling an egg – proof, some claim, that men have long been anxious about inconvenient appendages, and that Easter’s chocolate bounty has more in common with the castrated ambitions of holy men than with springtime joy. Emperor Claudius, more clever than most, outlawed such celebrations – or tried to.

Brethren, it is not only the comet that inspires folly. Consider Sherry Shriner – a Kent State graduate of journalism and political science – who rose on the Internet just this century, a prophet armed with a megaphone, announcing that alien royalty, shadowy cabals, and cosmic paperwork dictated human destiny, and that obedience was the only path to salvation. She is a recent echo of Applewhite, of Origen, of priests of Cybele, proving that the human appetite for secret knowledge, cosmic favor, and procedural holiness only grows with new technology. Witness online alien reptile doomsday cults.

Now, California is a peculiar land which – to paraphrase Brother Richard Brautigan – draws Kent State grads like a giant Taj Mahal in the shape of a parking meter. Only there could originality be mass-produced in identical black uniforms, only there could a suicide cult be entirely standardized, only there could obedience to paperwork masquerade as freedom. The Heaven’s Gate crowd prized individuality with the same rigor that the Froot Loops factory prizes the relationship between each loop piece’s color and its flavor. And yet, in this implausible perfection, we glimpse an eternal truth: the human animal will organize itself into committees, assign heavenly responsibilities, and file for its own departure from the body with the same diligence it reserves for parking tickets.

And mark these words, it’s not finished. If the right comet comes again, some new flock will follow it, tidy as ever, clipboard in hand. Perhaps it won’t be a flying saucer but a carbon-neutral ark. Perhaps it will be the end of meat, of plastic, of children. You may call it Extinction Rebellion or Climate Redemption or Earth’s Last Stand. They may chain themselves to the rails and glue themselves to Botticelli or to Newbury Street, fast themselves to death for Mother Goddess Earth. It is a priest of Cybele in Converse high tops.

“And the children of the Earth arose, and they glued themselves to the paintings, and they starved themselves in the streets, saying, ‘We do this that life may continue.’ And a prophet among them said, ‘To save life ye must first abandon it.’”

If you must mutilate something, mutilate your credulity. Cut it down to size. Castrate your certainty. Starve your impulse to join the parade. The body may be foolish, but it has not yet led you into as much trouble as the mind.

Sing it, children.

—

Cave Bolts – 3/8″ or 8mm? – Or Wrong Question?

Posted in Engineering & Applied Physics on September 2, 2025

Three eighths inch bolts – or 8mm? You’ll hear this debate as you drift off to sleep at the Old Timers Reunion. Peter Zabrok laughs it off: quarter inch, he says, for climbing. Sure, on El Capitan, where Pete hangs out, quarter inch is justifiable – clean granite, smooth walls, long pitches. But caves – water-carved knife edges, mud, rock of wildly varying strength, and the chance of being skewered on jagged breakdown – give rise to a different calculus of bolt selection.

It’s easy to look up manufacturers’ data and see that 8mm is “super good enough.” The phrase comes from a YouTube channel that teaches – perhaps inadvertently – that ultimate strength is all that matters. I’m cursed with a background in fasteners. I’ve looked at too many failed bolts under scanning electron microscopes. I’ve been an expert witness in cases where bolts took down airplanes and killed people. From that perspective, ultimate breaking strength is a lousy measure of gear. Let’s reframe the 3/8 vs 8mm (M8) diameter question with an engineer’s eye – and then look at bolt length.

The Basics without the Fetish

Let’s keep this down to earth. I’ll mostly use English units – pounds and inches. Most cavers I know can picture 165 pounds but have no feel for a kilonewton. Physics should be relatable, not a fetish. Note: 8mm is close to 5/16 inch (0.315 vs 0.3125), but don’t mix metric drills with imperial bolts.

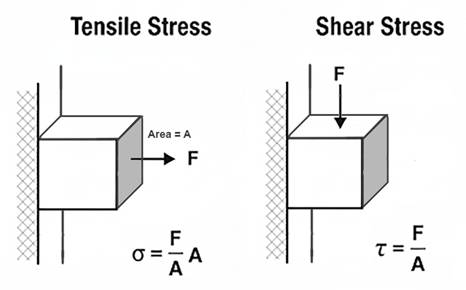

Stress = force ÷ area. Pull 10 pounds on a one-square-inch rod, you get 10 psi. Pull 100 pounds on ten square inches, you also get 10 psi. This is an example of tensile stress.

Shear stress is the sideways cousin – one part of a bolt sliding past the other, as when a hanger tries to cut it in half, to cut (shear) the bolt across its cross-section.

Ultimate stress (ultimate strength) is the max before breakage. Yield stress (yield strength) is the point where a bolt stops bouncing back and bends or stretches permanently. For metals, engineers define yield strength as 0.2% permanent deformation. Ratios of yield-to-ultimate vary wildly between alloys, which matters in picking metals. Note here that “strength” refers to an amount in pounds (or newtons) when applied to a part like a bolt but to an amount in pounds per square inch (or pascals) when applied to the material the part is made from.

Bolts in Theory, Bolts in Caves

The strength of a wedge anchor made of 304 stainless depends on 304’s ultimate tensile strength (UTS) and the effective stress area of the bolt’s threaded region. Standard numbers: UTS ≈ 515 MPa (75,000 psi). For an M8 coarse bolt, tensile area = 36.6 mm². For a 3/8-16 UNC, it’s 50 mm².

As detailed elsewhere, a properly installed (properly torqued) bolt is not loaded in shear, regardless of the bolt orientation (vertical or horizontal) or the load application direction (any combination of vertical or sideways). But most bolts installed in caves are not properly installed. So we’ll assume that vertical bolts are properly torqued (otherwise they would fall out) and that horizontal bolts are untorqued. In such cases, horizontal bolts are in fact loaded in shear; the hanger bears directly on the bolt.

We can first look at the tension case – a wedge anchor in the ceiling; you hang from it. The axial (tensile) strength is calculated as UTS × A. This formula falls out of the definition of tensile stress: σ = F / A_t, where F is the axial force and A_t is the effective area over which the tensile stress acts. Shear stress (conventionally denoted τ where tensile stress is denoted σ) is defined as τ = F / A_s, where A_s is the area over which the shear stress acts.

In a bolt, A_t and A_s would seem to be identical. In fact, they are slightly different because the shear plane often passes through the threaded section at a slight angle from the tensile plane, thereby reducing the effective area. More importantly, ductile materials like 304 stainless steel undergo plastic deformation at the microscopic scale in a way that renders the basic theoretical formula (τ = F / A_s) less applicable. In this situation, the von Mises yield criterion (aka distortion energy theory) is typically used to predict failure under combined stresses. This criterion relates shear yield strength to tensile yield strength: the maximum shear stress a material can withstand before yielding (τ_max) is approximately σ_yield / √3 ≈ 0.577 × σ_yield. For predicting ultimate shear strength (USS), theory and empirical test data show that bolts made of ductile metals like mild carbon steel or 304 stainless have an ultimate shear strength of about 0.6 × their ultimate tensile strength.

The tensile stress area (A_t) for an M8 coarse thread bolt is 36.6 mm² (0.057 in²). For a 3/8-16 UNC bolt, A_t is 50 mm² (0.078 in²).

Simple math says:

| Diameter | Tensile Stress Area | Axial Strength | Shear Strength |
|---|---|---|---|
| M8 | 36.6 mm² | 4,236 lb | 2,542 lb |
| 3/8 inch | 50 mm² | 5,798 lb | 3,479 lb |

The 3/8 inch bolt has 37% higher tensile and shear strength than the M8 bolt, due to its larger effective cross-section. These values are ultimate strengths of the bolts themselves. Actual load capacities (strengths) of the anchor placement might be lower – if a hanger breaks, if the rock breaks (a cone of rock pulls away), or if the bolt pulls out (the rock yields where the bolt’s collar presses into it).
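For anyone who wants to rerun the arithmetic, here is a minimal Python sketch of the steel-strength calculation. It uses only the numbers quoted above (UTS of 515 MPa, the two stress areas, and the 0.6 shear-to-tensile ratio); the function and constant names are my own, for illustration. Expect results within a fraction of a percent of the table, since unit conversions and rounding shift the last digits.

```python
# Steel strength of a bolt from material strength and thread stress area.
# All inputs are the values quoted in the text; names are illustrative only.
N_PER_LBF = 4.44822      # newtons per pound-force

UTS_MPA = 515.0          # 304 stainless ultimate tensile strength (from the text)
SHEAR_RATIO = 0.6        # ultimate shear ~ 0.6 x ultimate tensile (from the text)

STRESS_AREA_MM2 = {"M8": 36.6, "3/8-16 UNC": 50.0}


def steel_strengths_lb(area_mm2, uts_mpa=UTS_MPA, shear_ratio=SHEAR_RATIO):
    """Return (axial, shear) ultimate strength of the bolt in pounds.

    MPa x mm^2 gives newtons directly, because 1 MPa = 1 N/mm^2.
    """
    axial_n = uts_mpa * area_mm2
    shear_n = shear_ratio * axial_n
    return axial_n / N_PER_LBF, shear_n / N_PER_LBF


for name, area in STRESS_AREA_MM2.items():
    axial, shear = steel_strengths_lb(area)
    print(f"{name}: axial ~ {axial:,.0f} lb, shear ~ {shear:,.0f} lb")
```

Swapping in a different alloy only means changing `UTS_MPA`; the 0.6 ratio is an approximation for ductile steels, not a property of any particular bolt.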

For reasons cited above (von Mises etc.), the shear strength of each bolt size is less than its tensile strength. For the 8mm bolt, is 2500 pounds (11 kN) strong enough? That’s about a factor of 14 greater than the weight of a 180 pound (80 kg) caver. That’s 14 Gs, which is about the maximum force that humans survive in harnesses designed to prevent a person’s back from bending backward – lumbar hyperextension. Caving harnesses, because of the constraints of single rope technique (SRT), do not supply this sort of back protection. Five to eight Gs is often cited as a likely maximum for survivability in a caving harness.

So 2500 pounds of shear strength seems strong enough, though possibly not super strong enough, whatever that might mean. Is the ratio of bolt strength to working load big enough? The ratio of survivable load to bolt strength? How might a person expecting to experience only the force of his body weight suddenly experience 5Gs?

The UIAA (International Climbing and Mountaineering Federation) sets a maximum allowable impact force for ropes at 12 kN (2700 lb) for a single rope, which means roughly 6-9 Gs for an average climber (75 kg, 165 lb).

When a bolt is preloaded (tightened to a specified torque, often approaching its yield strength), it induces a compressive force in the clamped materials (the hanger, washer, and the rock) and a tensile stress of equal magnitude in the bolt. For a preloaded bolt, an externally applied load does not increase the tensile stress in the bolt until the external load approaches the preload force. This is because the external load first reduces the compressive force in the clamped materials rather than adding to the bolt’s tension. This behavior is well-documented in bolted joint mechanics (e.g., Shigley’s Mechanical Engineering Design).

For loads perpendicular to the bolt axis, preload can significantly enhance the bolted joint’s shear capacity. The improvement comes from the frictional resistance generated between the clamped surfaces (e.g., the hanger and concrete) due to the preload-induced compressive force. This friction can resist shear loads before the bolt itself is subjected to shear stress.

Basing preload on the yield strength of the bolts’ 304 stainless material (215 MPa, 31,200 psi) and the cross-sectional area of the threads used above gives the following preload forces:

M8 bolt preload: 215 MPa × 36.6 mm² ≈ 7,869 N (1,767 lb).

3/8 inch bolt preload: 215 MPa × 50 mm² ≈ 10,750 N (2,413 lb).

If we assume a coefficient of friction of 0.4 between hanger and bedrock, we can calculate the frictional forces perpendicular to horizontally placed bolts. These frictional forces can fully resist perpendicular (vertical) loads up to a limit of μ × preload (where μ is the friction coefficient and F_friction = μ × F_preload). For μ = 0.4, the shear resistance from friction alone could be:

M8: 0.4 × 7,869 N ≈ 3,148 N (707 lb).

3/8 inch: 0.4 × 10,750 N ≈ 4,300 N (966 lb).

These frictional capacities are substantial, meaning the bolt’s shear strength becomes relevant only if the frictional capacity is exceeded. The preload is highly desirable, because it prevents the rock and the bolt from “feeling” the applied load, and therefore prevents any cyclic loading of the bolt, even when cyclic loads are applied to the joint (via the hanger).

However, the frictional capacity (707 lb for M8) usually does not add to the shear capacity of the bolt, once preload is exceeded. Its shear capacity remains at 2542 lb as calculated above, because once the hanger slips relative to the rock, the bolt itself begins to bear the shear load directly.
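The preload and friction figures can be reproduced with a few lines of Python. This is a sketch under the same assumptions as the text: preload taken at the 215 MPa yield strength, μ = 0.4, and a simple either/or model in which friction carries the whole perpendicular load until it slips, after which the bolt carries it in shear. The function names are hypothetical.

```python
# Preload, friction capacity, and which element carries a perpendicular load.
# Assumptions from the text: preload at yield (215 MPa) and mu = 0.4.
N_PER_LBF = 4.44822   # newtons per pound-force

YIELD_MPA = 215.0     # 304 stainless yield strength used in the text
MU = 0.4              # assumed hanger-to-rock friction coefficient


def preload_n(area_mm2, yield_mpa=YIELD_MPA):
    """Clamping force in newtons for a bolt torqued to yield."""
    return yield_mpa * area_mm2


def friction_capacity_n(area_mm2, mu=MU):
    """Perpendicular load the clamped joint resists before the hanger slips."""
    return mu * preload_n(area_mm2)


def load_path(shear_load_n, area_mm2, mu=MU):
    """Return 'friction' while the joint holds, 'bolt shear' once it slips."""
    if shear_load_n <= friction_capacity_n(area_mm2, mu):
        return "friction"
    return "bolt shear"


for name, area in {"M8": 36.6, "3/8 inch": 50.0}.items():
    cap = friction_capacity_n(area)
    print(f"{name}: preload {preload_n(area):,.0f} N, "
          f"friction capacity {cap:,.0f} N ({cap / N_PER_LBF:,.0f} lb)")
```

The either/or split mirrors the point made above: friction does not add to the bolt's shear strength once the hanger has slipped.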

Now, with properly torqued, preloaded bolts, we can return to the main question: are M8 bolts “good enough”? Two categories of usage come to mind – aid climbing and permanent rigging. Let’s examine each, being slightly conservative. For example, we’ll assume no traction or embedding of the hanger, something that often but not always exists, which results in an effective coefficient of friction between rock and hanger of 1.0 or more. We’ll use 8Gs as a threshold of survivability and 0.4 as a coefficient of friction – though friction becomes mostly irrelevant in this worst-case analysis.

Comparative Analysis – 3/8 vs M8 (first order approximations)

For an M8 bolt, preload near yield (215 MPa × 36.6 mm² = 7.9 kN / 1,767 lb) gives a frictional capacity of 0.4 × 7.9 kN = 3.16 kN (707 lb).

For a 3/8 inch bolt (215 MPa × 50 mm² = 10.8 kN / 2,413 lb), it’s 0.4 × 10.8 kN = 4.3 kN (966 lb).

The 8 G threshold (80 kg climber, 8 × 785 N = 6.3 kN / 1,412 lb) exceeds both frictional capacities, meaning the joint slips, and the bolt bears shear stress in these high-load cases, regardless of torquing.

Once friction is exceeded, the bolt’s shear strength governs: 11.3 kN (2,542 lb) for 8mm, 15.5 kN (3,479 lb) for 3/8 inch (based on 0.6 × UTS = 309 MPa).

Both M8 and 3/8 exceed 6.3 kN, confirming that the analysis hinges on shear strength, not friction, for high-load cases. Torquing is critical to achieve the assumed preload (near yield) and to confirm placement quality (a torqued bolt indicates a successful installation). However, in high-load cases (≥6.3 kN), the frictional capacity is irrelevant once exceeded, and the analysis stands on the bolt’s shear strength and the rock integrity.

Since high-load cases (e.g., 8 G = 6.3 kN) exceed the frictional capacity of both bolt diameters (3.16 kN for 8mm, 4.3 kN for 3/8 inch), the decision rests on shear strength margin:

M8: 11.3 kN (2,542 lb) provides a ~1.8x factor of safety (see note at bottom on factors of safety) over 6.3 kN.

3/8 inch: 15.5 kN (3,479 lb) offers a ~2.5x factor, ~37% higher, giving more buffer against rock variability or slight overloads.
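The margin comparison above is simple division, sketched here in Python for anyone who wants to try a different climber mass or G threshold. The 80 kg mass, the 8 G threshold, and the shear strengths are the values used in the text; the helper names are mine.

```python
# Factor of safety of bolt shear strength over a worst-case fall load.
G = 9.81  # m/s^2


def fall_load_n(mass_kg, g_multiple):
    """Force in newtons on the anchor for a given mass and G multiple."""
    return mass_kg * G * g_multiple


def safety_factor(capacity_n, load_n):
    """Ratio of capacity to applied load."""
    return capacity_n / load_n


# Shear strengths in kN as calculated in the text.
SHEAR_KN = {"M8": 11.3, "3/8 inch": 15.5}

load = fall_load_n(mass_kg=80, g_multiple=8)
for name, shear_kn in SHEAR_KN.items():
    sf = safety_factor(shear_kn * 1000, load)
    print(f"{name}: {shear_kn} kN over {load / 1000:.1f} kN -> factor ~ {sf:.1f}")
```

This treats the 8 G figure as a static load on a single bolt, which is the conservative framing used here; it says nothing about rope stretch or rock strength.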

In some limestone (10–100 MPa), the rock will fail (e.g., pullout) well below the bolt’s shear strength. Remember that with torqued bolts the rock does not “feel” any load until the axial load exceeds preload or the perpendicular load exceeds the friction force generated by the preload. But in softer (low compressive strength) limestone, once those thresholds are exceeded, the rock often fails before the bolt fails in shear or tension. 3/8 inch’s larger diameter distributes load better, reducing rock stress (bearing stress = force / (diameter × embedment)).

Most of us use redundant anchors for permanent rigging, and you should too. A dual-anchor system with partial equalization (double figure eight, bunny-loop-knot, 1–3 inch drop limit) ensures no single failure is catastrophic. A 3-inch drop would add ~1 kN to the force felt by the surviving anchor. This is within the backup bolt’s shear capacity, making 8mm viable.

What about practical factors? M8 bolts save ~20–35% battery life and weight, critical for remote locations. M8 does not align with ASC/UIAA standards (≥3/8 inch preferred). 3/8 is obviously better for permanent anchors in marginal rock, not because the bolt is stronger, but because the contact stresses are about 35% lower – a potentially significant difference.

Effect of Bolt Length on Anchor Failure in Limestone

In typical installations of wedge bolts in limestone under axial (tensile) loading, steel failure often governs (e.g., the bolt fractures at the threads), while in shear loading, the anchor typically experiences partial pullout with bending, followed by a cone-shaped rock breakout (pry-out failure). This is consistent with industrial experience in concrete, where tensile failures are steel-dominated due to the anchor’s expansion mechanism providing sufficient grip, but shear failures involve pry-out because the load induces bending and leverages the embedment. The collar (sleeve, expansion clip) in most brands is identical for all bolt lengths of a given diameter. The gripping mechanism doesn’t change with length. The primary difference is the effective embedment depth (h_ef), which affects load distribution in the rock. Longer bolts increase the volume of rock engaged and provide better resistance to breakout, but this benefit is more pronounced in shear than tension, as preload clamping compresses a larger rock section under the hanger, distributing stresses and reducing localized crushing.

To estimate failure loads for 2.5 inch vs. 3.5 inch total lengths, we can use standard engineering formulas adapted from ACI 318* (* I won’t violate copyright by linking to outlaw PDFs, but I think standards bodies that sell specs for hundreds of dollars do the world a huge injustice) for post-installed wedge anchors, treating limestone as analogous to concrete, with adjustments for its variable strength.

The compressive strength of limestone (f_c’) varies from 1,000 psi (soft, e.g., oolitic limestone) to 10,000 psi (harder types). We’ll use 4,000 psi (27.6 MPa) based on typical Appalachian limestone values. For stronger (compressive strength) limestone (e.g., 8,000 psi / 55 MPa), capacities increase by 1.4x (proportional to the square root of f_c’).

Embedment Depth (h_ef) is the bolt length minus hanger thickness (~0.25 inch) and nut/washer (~0.375 inch). Thus, h_ef ≈ 1.875 inches for 2.5 inch bolt; h_ef ≈ 2.875 inches for 3.5 inch bolt. This assumes that a “good” hole has been drilled, allowing the collar to catch immediately as the bolt is torqued.

We’ll assume 304 stainless, ultimate tensile ~5,798 lb (25.8 kN), ultimate shear ~3,479 lb (15.5 kN), as previously calculated. 316 alloy would give similar results. We’ll assume proper torquing for preload and no edge effects, meaning the bolt is at least 10 bolt-diameters from edges and cracks.

Formulas (ACI-based, ultimate loads):

- Tensile Rock Breakout: N_cb ≈ 17 × √f_c’ × h_ef^{1.5} lb (k_c=17 for post-installed in cracked conditions; use for conservatism; f_c’ in psi, h_ef in inches).

- Axial Failure Load: Min(N_cb, steel tensile).

- Shear Pry-Out: V_cp ≈ k_cp × N_cb (k_cp=1 for h_ef < 2.5 inches; k_cp=2 for h_ef ≥ 2.5 inches, reflecting increased resistance to rotation).

- Shear Failure Load: Min(V_cp, steel shear), but with bending preceding rock failure.

- Capacities are ultimate (failure); apply safety factors (e.g., 4:1 per UIAA) for working loads.
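The formulas above translate directly into a short Python sketch. It assumes exactly what the list states: k_c = 17, the k_cp step at h_ef = 2.5 inches, embedment equal to bolt length minus 0.25 inch of hanger and 0.375 inch of nut and washer, and failure governed by whichever of rock or steel is weaker. The function names are illustrative, and results can differ from hand-rounded figures by a few pounds.

```python
import math

K_C = 17.0  # breakout coefficient quoted in the text (psi and inch units)


def embedment_in(bolt_length_in, hanger_in=0.25, nut_washer_in=0.375):
    """Effective embedment depth h_ef in inches."""
    return bolt_length_in - hanger_in - nut_washer_in


def breakout_lb(fc_psi, h_ef_in, k_c=K_C):
    """Tensile rock breakout N_cb in pounds."""
    return k_c * math.sqrt(fc_psi) * h_ef_in ** 1.5


def pryout_lb(fc_psi, h_ef_in):
    """Shear pry-out V_cp in pounds: k_cp is 1 below 2.5 in embedment, else 2."""
    k_cp = 1.0 if h_ef_in < 2.5 else 2.0
    return k_cp * breakout_lb(fc_psi, h_ef_in)


def governing(rock_lb, steel_lb):
    """Return (failure load, mode) for the weaker of rock and steel."""
    return (rock_lb, "rock") if rock_lb < steel_lb else (steel_lb, "steel")


def anchor_capacity(fc_psi, bolt_length_in, steel_tensile_lb, steel_shear_lb):
    """Ultimate axial and shear failure loads with their governing modes."""
    h_ef = embedment_in(bolt_length_in)
    return {
        "axial": governing(breakout_lb(fc_psi, h_ef), steel_tensile_lb),
        "shear": governing(pryout_lb(fc_psi, h_ef), steel_shear_lb),
    }


# Example: 3.5 inch M8 bolt in 4,000 psi limestone, steel values from the text.
print(anchor_capacity(4000, 3.5, steel_tensile_lb=4236, steel_shear_lb=2542))
```

Because `breakout_lb` scales with the square root of f_c’, doubling rock strength raises the rock-limited numbers by about 1.4x while leaving the steel limits untouched.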

With these formulas we can compare different bolt lengths in axial loading. Longer bolts increase h_ef, enlarging the breakout cone and distributing tensile stresses over greater rock volume. Preload clamping compresses the rock under the hanger (area ~0.5-1 in² depending on washer diameter), and longer bolts may slightly reduce localized stress concentrations at the surface due to better load transfer deeper in the hole. If rock breakout capacity exceeds steel strength, the bolt fractures. In weaker limestone, rock governs; in harder, steel does. The identical sleeve means expansion grip is consistent, so length primarily affects rock engagement.

So for 4000 psi limestone and 3/8 bolts in tension, axially loaded, we get:

2.5 inch (h_ef ≈ 1.875 in): N_cb ≈ 17 × 63.25 × (1.875)^{1.5} ≈ 2,765 lb (12.3 kN). Rock breakout governs (cone failure).

3.5 inch (h_ef ≈ 2.875 in): N_cb ≈ 17 × 63.25 × (2.875)^{1.5} ≈ 5,240 lb (23.3 kN). Rock breakout governs (cone-pullout).

For M8 bolts, axially loaded (2.5 in. ≈ 64mm, 3.5 in ≈ 90mm):

2.5 inch (h_ef ≈ 1.875 in): N_cb ≈ 17 × √4,000 × (1.875)^{1.5} ≈ 17 × 63.25 × 2.576 ≈ 2,765 lb (12.3 kN). Steel tensile = 4,236 lb (18.8 kN). Rock breakout governs (cone failure).

3.5 inch (h_ef ≈ 2.875 in): N_cb ≈ 17 × 63.25 × (2.875)^{1.5} ≈ 17 × 63.25 × 4.873 ≈ 5,240 lb (23.3 kN). Steel tensile = 4,236 lb (18.8 kN). Steel fracture governs (bolt breaks at threads, matching test observations).

2,765 lb (for both 3/8 and M8 bolts), particularly in redundant anchors, seems reasonable, based on the limits of human survivability and on the other gear in the chain. Nevertheless, this result surprised me. One-inch greater length doubles the effective anchor strength for axial loads.

When a shear load is large enough to exceed bolt preload (which should never happen with actual working loads), the shear force induces bending (lever arm from hanger to expansion point) and pry-out, where the bolt rotates, pulling out the back side and causing a cone breakout. Longer bolts increase h_ef, enhancing pry-out resistance by engaging more rock mass and distributing compressive stresses. If pry-out exceeds steel shear capacity, the bolt bends and shears. Industrial studies show embedment beyond 10x diameter (3.75 inches for 3/8 inch, 80mm for M8 bolts) adds minimal shear benefit.

For 4,000 psi limestone and 3/8 bolts with shear loads:

2.5 inch (h_ef ≈ 1.875 in < 2.5 in): V_cp ≈ 1 × 2,765 lb ≈ 2,765 lb (12.3 kN). Rock pry-out governs (partial pullout, bending, then cone breakout).

3.5 inch (h_ef ≈ 2.875 in > 2.5 in): V_cp ≈ 2 × 5,240 lb ≈ 10,480 lb (46.6 kN) > steel shear → Steel governs (~3,479 lb [15.5 kN], with bending preceding shear failure).

For stronger limestone (8,000 psi compressive), 3/8 bolt capacities are ~1.4x higher (e.g., 3,870 lb for 2.5 in pry-out; steel 3,479 lb for 3.5 in), emphasizing length’s role in shifting from rock to steel failure.

For 4,000 psi limestone and M8 bolts with shear loads:

2.5 inch (h_ef ≈ 1.875 in < 2.5 in): V_cp ≈ 1 × 2,765 lb ≈ 2,765 lb (12.3 kN). Steel shear = 2,542 lb (11.3 kN). Steel shear governs (barely – bolt bends, then shears, with partial pullout).

3.5 inch (h_ef ≈ 2.875 in > 2.5 in): V_cp ≈ 2 × 5,240 lb ≈ 10,480 lb (46.6 kN). Steel shear = 2,542 lb (11.3 kN). Steel shear governs (bolt bends/shears before rock pry-out).

For harder limestone (8,000 psi), M8 bolt capacities are ~1.4x higher, again emphasizing length’s role in shifting from rock to steel failure:

2.5 inch: V_cp ≈ 1 × 3,870 lb ≈ 3,870 lb (17.2 kN). Steel = 2,542 lb. Steel shear governs.

3.5 inch: V_cp ≈ 2 × 7,340 lb ≈ 14,680 lb (65.3 kN). Steel = 2,542 lb. Steel shear governs.

Summary – Failure Loads in 1,000, 4,000, and 8,000 psi Limestone

([S] indicates steel failure, [R] indicates rock failure. Loads are given in pounds, with kilonewtons in parentheses.)

| Bolt Size | 2.5 in Axial | 2.5 in Shear | 3.5 in Axial | 3.5 in Shear |
|---|---|---|---|---|
| 1000 psi limestone | | | | |
| M8 (8mm) | 1,382 (6.15) [R] | 1,382 (6.15) [R] | 2,620 (11.7) [R] | 2,542 (11.3) [S] |
| 3/8 inch | 1,382 (6.15) [R] | 1,382 (6.15) [R] | 2,620 (11.7) [R] | 3,479 (15.5) [S] |
| 4000 psi limestone | | | | |
| M8 (8mm) | 2,765 (12.3) [R] | 2,542 (11.3) [S] | 4,236 (18.8) [S] | 2,542 (11.3) [S] |
| 3/8 inch | 2,765 (12.3) [R] | 2,765 (12.3) [R] | 5,240 (23.3) [R] | 3,479 (15.5) [S] |
| 8000 psi limestone | | | | |
| M8 (8mm) | 3,870 (17.2) [R] | 2,542 (11.3) [S] | 4,236 (18.8) [S] | 2,542 (11.3) [S] |
| 3/8 inch | 3,870 (17.2) [R] | 3,479 (15.5) [S] | 5,798 (25.8) [S] | 3,479 (15.5) [S] |

Bottom Line

For me, the key insight is that shear pry-out capacity in limestone anchors scales significantly with embedment depth. Extending bolt length from 2.5 to 3.5 inches increases pry-out resistance by approximately 100–200%, driven by the deeper rock engagement and the ACI 318 k_cp factor (1 for h_ef < 2.5 inches, 2 for h_ef ≥ 2.5 inches), though it’s ultimately capped by the bolt’s steel shear strength (2,542 lb / 11.3 kN for 8mm, 3,479 lb / 15.5 kN for 3/8 inch). When rock strength governs failure, as it often does in limestone of lower compressive strength (e.g., 1,000–4,000 psi), 3/8 inch bolts offer no advantage over 8mm (M8), as both have identical rock-limited capacities (e.g., 1,382 lb in 1,000 psi, 2,765 lb in 4,000 psi at 2.5 inches). Thus, choosing a 3.5 inch bolt over a 2.5 inch bolt is typically more critical than choosing between 3/8 inch and 8mm diameters.

Most bolts, particularly wall anchors in aid climbing or permanent setups, experience perpendicular loads. These are initially resisted by friction from tensile preload (e.g., 707 lb for 8mm, 966 lb for 3/8 inch with μ = 0.4), but when loads exceed this – as in a severe 8 G fall (1,412 lb / 6.3 kN for an 80 kg climber) – shear stress initiates. In caves I visit, permanent anchors are redundant, using dual bolts with crude equalization to limit drops to 1–3 inches, ensuring no single failure is catastrophic. In aid climbing, dynamic belays and climbing methodology/technique reduce criticality of single bolt failures. While 3/8 inch bolts provide ~37% higher steel strength (e.g., 3,479 lb vs. 2,542 lb shear), this margin is not a significant safety improvement in an engineering analysis, given typical climber weights (80–100 kg) and redundant anchor systems. Few people use stainless for aid climbs, but the numbers above still roughly apply for mild-steel bolts. In weak limestone (1,000 psi), rock failure governs at low capacities (e.g., 1,382 lb), making length critical and diameter secondary. In harder limestone (8,000 psi), 3/8 inch offers a slight edge, but redundancy and proper placement outweigh diameter differences. For engineering analysis, you can substitute 5/16 inch bolts for M8 in the above; just don’t mix components from each.
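For anyone who wants to check the fall-load arithmetic, here is a small Python sketch comparing the 8 G load with the friction figures quoted above (707 lb and 966 lb). The function names are illustrative, and the standard-gravity and unit-conversion constants are my assumptions. The result lands within a pound or two of the rounded value in the text.

```python
STANDARD_GRAVITY = 9.80665      # m/s^2 (assumed constant)
NEWTONS_PER_LBF = 4.4482216     # N per pound-force


def fall_load_lbf(mass_kg, g_multiple):
    """Peak load on the anchor, in pounds-force, for a fall of g_multiple G."""
    return mass_kg * STANDARD_GRAVITY * g_multiple / NEWTONS_PER_LBF


def friction_holds(load_lbf, friction_capacity_lbf):
    """True while clamp friction alone carries the perpendicular load."""
    return load_lbf <= friction_capacity_lbf


load = fall_load_lbf(80, 8)     # roughly 1,410 lbf, about 6.3 kN
for bolt, friction_lbf in [("8mm", 707), ("3/8 inch", 966)]:
    if friction_holds(load, friction_lbf):
        state = "friction holds"
    else:
        state = "bolt goes into shear"
    print(f"{bolt}: {load:,.0f} lbf vs {friction_lbf} lbf friction -> {state}")
```

Both diameters exceed their friction capacity at 8 G, which is why the steel and rock shear figures above matter at all.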

25–28 ft-lb seems a good torque for preloading 3/8-16 304 bolts and is consistent with manufacturers’ dry-torque recommendations. For 8mm and 5/16-18 304 bolts, manufacturers’ recommendations range from 11 ft-lb (Fastenal, Engineer’s Edge, Bolt Depot) to 18 ft-lb (Allied Bolt Inc). For 304 SS (yield ~32 ksi), the tensile stress area of a 5/16-18 bolt is ~0.0524 in², so yield preload is about 1,650 lb. Most manufacturers seem a bit conservative on torque recommendations, likely because construction workers sometimes overtorque. Using T = K × D × P (K ~0.2–0.35 dry for SS, D = 0.3125 in), 11 ft-lb gives ~1,000–1,900 lb of preload (below yield), while 18 ft-lb corresponds to ~1,700–3,100 lb of preload. The latter is above yield for standard 304 stainless; Allied Bolt’s hardware appears to be a high-yield variant (ASTM F593-24) of 304. 304 can be cold-worked to achieve yield strengths above 70,000 psi. I’m using 32,000 psi for these calculations, so I’ll aim for 11–12 ft-lb of torque underground.

“Factor of Safety” Is a Crutch

We throw around “factor of safety.” It’s a crude ratio of strength to expected load. For example, M8 shear = 11.3 kN vs. 6.3 kN load → 1.8x. But that’s a false comfort. Real engineering moved past simple safety factors decades ago. Load and resistance factors, environment, materials, inspection – all matter more.

In the era of steam trains, designers would calculate the required cross section of a bolt based on design loads, and then “slap on a 3X” (factor of safety) and be done with it. The world then moved to limit-state design, damage tolerance, environment-specific factors, inspection and maintenance schedules, and probabilistic risk assessment. As a design philosophy, factor of safety is dead. As a bureaucratic metric for certification, even sometimes in aerospace, it persists.

The factor of safety, expressed as a ratio (e.g., 1.8 for an 8mm bolt’s shear strength of 11.3 kN over a 6.3 kN load), implies a simple buffer against failure. This can foster a false sense of security among non-technical users, suggesting that a bolt is “safe” as long as the ratio is greater than 1 (or pick a number). In reality, the concept oversimplifies the complexities of anchor performance in real-world conditions.

Factor of safety tends to roll up all sorts of unrelated ways that a piece of equipment or its placement might, in practice, not live up to its theory. It groups all the ways a part might degrade in use together – and groups all the ways any part in your hand might differ from the one(s) that got tested. In short, it is an overly sloppy concept that plays little part in the design of serious gear. Some parts don’t wear. Some manufacturing processes render every specimen of equal size, strength, and surface finish to a fraction of a percent. Some materials corrode like hell. Environments matter. Limestone compressive strength can range from 1 to 100 MPa in the same geologic formation. A poor placement with no preload can leave a 3 inch bolt that pulls out when the climber leans back on it. Not an exaggeration; I have seen this happen – and saw the belayer, Andrea Futrell, go skidding six feet across the floor as a result. Never raised her voice. Dynamic belay par excellence.

Overemphasizing factor of safety can lead to dangerous assumptions, such as trusting a single anchor without redundancy, regardless of its size (do we really need more half-inch bolts rusting away atop big drops?), or neglecting regular gear inspection. For bolt placement, prudence and sanity insist that no single failure can be catastrophic. As is apparent from the above, proper torquing of bolts removes a great many unknowns from the equation.

I stress that “factor of safety” is a crude talking point that often reveals a poor understanding of engineering. So let’s be clear: survivable caving isn’t about safety factors. It’s about redundancy, placement, inspection, and understanding your rock.

That’s how you prevent overconfidence – and make informed decisions about stuff that will kill you if you screw up.

Roxy Music and Forgotten Social Borders

Posted in History of Art on August 20, 2025

In the early 1970s rock culture was diverse, clannish and fiercely territorial. Musical taste usually carried with it an entire identity, including hair length and style, clothing – including shoes/boots – politics, and which record stores you could haunt. King Crimson, Yes, Pink Floyd, and Emerson, Lake & Palmer belonged to the progressive end of the spectrum.

By then, progressive rock (prog, as the shorthand began to appear in the music press) was both a musical descriptor and a social signal. Calling a band “progressive” implied a certain seriousness, technical sophistication, and intellectual ambition. It marked a listener as someone who prized virtuosity, complexity, and concept albums over pop singles. The label carried subtle class and educational connotations: prog fans were expected to appreciate classical references, odd time signatures, extended solos, and experimental studio techniques. King Crimson was often called avant-garde rock, though Henry Cow deserved the label much more. ELP was called symphonic rock, Pink Floyd was psychedelic rock, and Yes was epic rock – but they were all prog. And listening to all this stuff made you smart. Or pretentious.

Across the divide, the early ’70s saw greaser rock and the emerging ’50s nostalgia circuit. Sha Na Na, the sock-hop revival, and the idea that a gold lamé suit was a passport to a simpler age ushered in the Happy Days craze and its music. Few people straddled those camps. A Crimson devotee wouldn’t admit to liking Sha Na Na if he wanted to keep his dignity. Rock music was attitude, self-image, and worldview.

Into that landscape stepped Roxy Music in 1972, and they were utterly bewildering. Bryan Ferry came dressed like a lounge lizard from a time-warped jukebox, crooning with a sincerity that clearly wasn’t parody or caricature. Still, it was far too stylized to be mere mimicry. His band conjured a storm of dissonant non-keyboard electronics, angular rhythms, and Brian Eno’s futuristic treatments. Roxy Music embraced rather than mocked the early rock gestures of Elvis’s era. Ferry gave listeners permission to take Jerry Lee Lewis seriously, even reverently. Lewis was suddenly an avant-garde icon, pounding the keys with the same abandon that Eno applied to his electronics (witness Richard Trythall’s 1977 musique concrète: Omaggio a Jerry Lee Lewis).

That was the radicalism of early Roxy Music, which cannot be grasped retrospectively, even by the most avid young musicologist. Roxy dissolved the borders that the tribes of 1972 held sacred. They showed that ’50s rock, glam stylization, and avant-garde electronics could coexist in an unstable but persistent alloy. The shock of that is hard to grasp from today’s vantage point, when music is not tied to identity and “classic-rock” Roxy Music is remembered for Ferry’s Avalon-era suave crooning.

Oddly, and I think almost uniquely, as the band moved mainstream over the next fifteen years, the noisy, Eno-era chaos was retroactively smoothed into the same brand identity as Avalon. For later fans, there was no sharp rupture; the old chaos was domesticated and folded back into the same style sensibility.

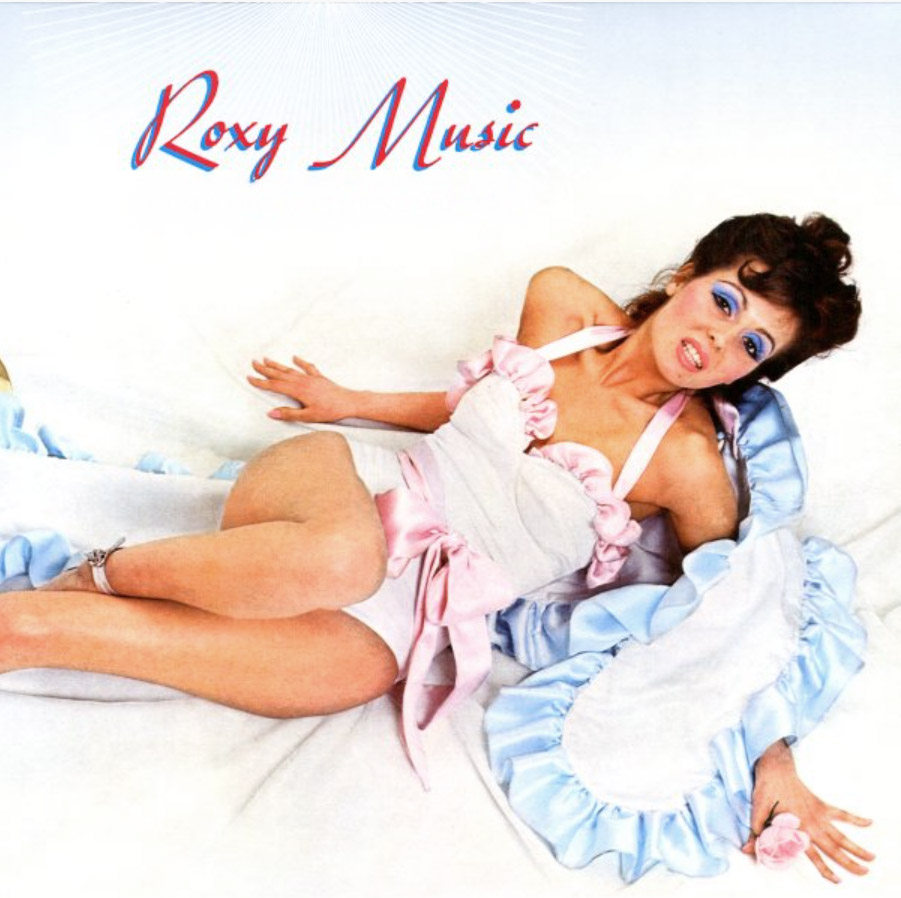

But the rupture had existed. Their cover art reinforced it. Roxy Music (1972), with Kari-Ann Muller posing like a mid-century pin-up, was tame in skin exposure compared to H.R. Giger’s biomechanical nudity on ELP’s Brain Salad Surgery. The boldness of Roxy Music’s cover lay in context, not ribaldry. The sleeve was bluntly terrestrial. For a prog listener used to studying a Roger Dean landscape on a first listen of a new Yes album, Roxy Music surely seemed an insult to seriousness.

When Fleetwood Mac reinvented themselves in 1975, new listeners treated it as rebirth. The Peter Green blues band that authored Black Magic Woman and the Buckingham–Nicks hit machine lived in separate mental compartments. Very few Rumours-era fans felt obliged to revisit Then Play On or Kiln House, and most who did saw them as curiosities. Similarly, Genesis underwent a hard split. Its listeners did not treat Foxtrot and Invisible Touch as facets of a single project.

Roxy Music’s retrospective smoothing is almost unique in rock. Their chaos was polished backward into elegance. The Velvet Underground went the other way. At first their noise was cultish, even disposable. But as the legend of Reed, Cale, and Nico grew, the past was recoded as prophecy. White Light/White Heat became the seed of punk. The Velvet Underground & Nico turned into the Bible of indie rock. Even Loaded – a deliberate grab for radio play, stripped of abrasion – was absorbed into the myth and remembered as avant-garde. It wasn’t. But the halo of the band’s legend bled forward and made every gesture look radical.

Roxy Music remains an oddity. The suave Avalon listener in 1982 could put on Virginia Plain without embarrassment and believe that those early tracks were nearby on a continuum. Ferry’s suave sound bled backward and redefined the chaos. He retroactively re-coded the Eno-era racket. The radical rupture was smoothed out beneath the gloss of brand identity.

That’s why early Roxy is so hard to hear as it was first heard. In 1972 it was unclassifiable, a collision of tribes and eras. To grasp it, you have to forget everything that came after. Imagine a listener whose vinyl shelf ended with The Yes Album, Aqualung, Tarkus, Ash Ra Tempel, Curved Air, Meddle, Nursery Cryme, and Led Zeppelin IV. Sha Na Na was a trashy novelty act recycling respected antiques – Dion and the Belmonts, Ritchie Valens, Danny and the Juniors. Disco, punk, new wave? They didn’t exist.

Now, in that silence, sit back and spin up Ladytron.

Deficient Discipleship in Environmental Science

Posted in Commentary, Philosophy of Science on October 28, 2025

Bear with me here.

Daniel Oprean’s “Portraits of Deficient Discipleship” (Kairos, 2024) argues that Matthew 8:18–27 presents three kinds of failed or immature discipleship, each corrected by Jesus’s response.

Oprean reads vv. 19–20 as discipleship without costs. The “enthusiastic scribe” volunteers to follow Jesus but misunderstands the teacher he’s addressing. His zeal lacks awareness of cost. Jesus’s lament about having “nowhere to lay his head,” Oprean says, reveals that true discipleship entails homelessness, marginalization, and suffering.

As an instance of discipleship without commitment (vv. 21–22), a second disciple hesitates. His request to bury his father provokes Jesus’s radical command: “Follow me, and let the dead bury their own dead.” Oprean takes this as divided loyalty, a failure of commitment even among genuine followers.

Finally comes discipleship without hardships (vv. 23–27). The boat-bound disciples obey but panic in the storm. Their fear shows lack of trust. Jesus rebukes their “little faith.” His calming of the sea becomes a paradigm of faith maturing only through trial.

Across these scenes, Matthew’s Jesus confronts enthusiasm without realism, religiosity without surrender, faith without endurance. Authentic discipleship, Oprean concludes, must include cost, commitment, and hardship.

Oprean’s essay is clear and perfectly conventional evangelical exegesis. The tripartite symmetry – cost, commitment, hardship – works neatly, though it imposes a moral taxonomy on what Matthew presents as narrative tension (a pale echo of Mark’s deeper ironies). Each scene may concern not moral failure but stages of revelation: curiosity, obedience, awe. By moralizing them, Oprean flattens Matthew’s literary dynamism and theological ambiguity for devotional ends.