Innumeracy and Overconfidence in Medical Training

Posted by Bill Storage in History of Science on May 4, 2020

Most medical doctors, having ten or more years of education, can’t do simple statistics calculations that they were surely able to do, at least for a week or so, as college freshmen. Their education has let them down, along with us, their patients. That education leaves many doctors unquestioning, unscientific, and terribly overconfident.

A disturbing lack of doubt has plagued medicine for thousands of years. Galen, at the time of Marcus Aurelius, wrote, “It is I, and I alone, who has revealed the true path of medicine.” Galen disdained empiricism. Why bother with experiments and observations when you own the truth? Galen’s scientific reasoning sounds oddly similar to modern junk science, armed with abundant confirming evidence but no interest in falsification. Galen had plenty of confirming evidence: “All who drink of this treatment recover in a short time, except those whom it does not help, who all die. It is obvious, therefore, that it fails only in incurable cases.”

Galen was still at work 1500 years later when Voltaire wrote that the art of medicine consisted of entertaining the patient while nature takes its course. One of Voltaire’s novels also described a patient who had survived despite the best efforts of his doctors. Galen was around when George Washington died after five pints of bloodletting, a practice promoted up to the early 1900s by prominent physicians like Austin Flint.

But surely medicine was mostly scientific by the 1900s, right? Actually, 20th-century medicine was dragged kicking and screaming to scientific methodology. In the early 1900s Ernest Amory Codman of Massachusetts General proposed keeping track of patients and rating hospitals according to patient outcome. He suggested that a doctor’s reputation and social status were poor measures of a patient’s chance of survival. He wanted the track records of doctors and hospitals to be made public, allowing healthcare consumers to choose suppliers based on statistics. For this, and for his harsh criticism of those who scoffed at his ideas, Codman was tossed out of Mass General, lost his post at Harvard, and was suspended from the Massachusetts Medical Society. Public outcry brought Codman back into medicine, and much of his “end results system” was put in place.

20th-century medicine also fought hard against the concept of controlled trials. Austin Bradford Hill introduced the concept to medicine in the mid-1920s. But in the mid-1950s Dr. Archie Cochrane was still fighting valiantly against what he called the God Complex in medicine, which was basically the ghost of Galen: no one should question the authority of a physician. Cochrane wrote that far too much of medicine lacked any semblance of scientific validation or knowledge of what treatments actually worked. He wrote that the medical establishment was hostile to the idea of controlled trials. Cochrane fought this into the 1970s, authoring Effectiveness and Efficiency: Random Reflections on Health Services in 1972.

Doctors aren’t naturally arrogant. The God Complex is passed along during the long years of an MD’s education and internship. That education includes rites of passage in an old boys’ club that thinks sleep deprivation builds character in interns, and that female med students should make tea for the boys. Once on the other side, tolerance of archaic norms in the MD culture seems less offensive to the inductee, who comes to accept the system. And the business of medicine, the way it’s regulated, and its control by insurance firms push MDs to view patients as a job to be done cost-effectively. Medical arrogance is in a sense encouraged by recovering patients who might see doctors as savior figures.

As Daniel Kahneman wrote, “generally, it is considered a weakness and a sign of vulnerability for clinicians to appear unsure.” Medical overconfidence is encouraged by patients’ preference for doctors who communicate certainties, even when uncertainty stems from technological limitations, not from doctors’ subject knowledge. MDs should be made conscious of such dynamics and strive to resist inflating their self-importance. As Allan Berger wrote in Academic Medicine in 2002, “we are but an instrument of healing, not its source.”

Many in medical education are aware of these issues. The calls for medical education reform – both content and methodology – are desperate, but they are eerily similar to those found in a 1924 JAMA article, Current Criticism of Medical Education.

Covid19 exemplifies the aspect of medical education I find most vile. Doctors can’t do elementary statistics and probability, and their cultural overconfidence renders them unaware of how critically they need that missing skill.

A 1978 study, brought to the mainstream by psychologists like Kahneman and Tversky, showed how few doctors know the meaning of a positive diagnostic test result. More specifically, they’re ignorant of the relationship between the sensitivity and specificity (true positive and true negative rates) of a test and the probability that a patient who tested positive has the disease. This lack of knowledge has real consequences in certain situations, particularly when the base rate of the disease in a population is low. The resulting probability judgements can be wrong by factors of hundreds or thousands.

In the 1978 study (Casscells et al.), doctors and medical students at Harvard teaching hospitals were given a diagnostic challenge: “If a test to detect a disease whose prevalence is 1 out of 1,000 has a false positive rate of 5 percent, what is the chance that a person found to have a positive result actually has the disease?” As described, the true positive rate of the diagnostic test is 95%. This is a classic conditional-probability quiz from the second week of a probability class. Being right requires (a) knowing Bayes’ theorem and (b) being able to multiply and divide. Not being confidently wrong requires only one thing: scientific humility – the realization that all you know might be less than all there is to know. The correct answer is 2% – there’s a 2% likelihood the patient has the disease. The most common response, by far, in the 1978 study was 95%, which overstates the probability by a factor of 47.5. Only 18% of doctors and med students gave the correct response. The study’s authors observed that in the group tested, “formal decision analysis was almost entirely unknown and even common-sense reasoning about the interpretation of laboratory data was uncommon.”
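
For anyone who wants to check the arithmetic, here is a minimal Python sketch of the Bayes calculation behind that 2% figure. The function and parameter names are my own, not from the 1978 paper. The first call uses the 95% true positive rate assumed above; the second assumes a perfect test (100% sensitivity) purely for comparison. Both land close to 2%.

```python
def positive_predictive_value(prevalence, sensitivity, false_positive_rate):
    """P(disease | positive test), computed with Bayes' theorem."""
    # Fraction of the whole population that is sick AND tests positive.
    true_positives = prevalence * sensitivity
    # Fraction of the whole population that is healthy AND tests positive.
    false_positives = (1.0 - prevalence) * false_positive_rate
    return true_positives / (true_positives + false_positives)


# Prevalence 1 in 1,000, false positive rate 5%, as in the quiz.
ppv_95 = positive_predictive_value(0.001, 0.95, 0.05)   # assumed 95% sensitivity
ppv_100 = positive_predictive_value(0.001, 1.00, 0.05)  # assumed perfect sensitivity

print(f"Sensitivity 95%:  {ppv_95:.1%}")
print(f"Sensitivity 100%: {ppv_100:.1%}")
```

The false positives from the 999 healthy people swamp the single true case, which is why the base rate dominates the answer and the sensitivity barely matters.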

As mentioned above, this story was heavily publicized in the 80s. It was widely discussed by engineering teams, reliability departments, quality assurance groups and math departments. But did it impact medical curricula, problem-based learning, diagnostics training, or any other aspect of the way med students were taught? One might have thought yes, if for no other reason than to avoid criticism by less prestigious professions having either the relevant knowledge of probability or the epistemic humility to recognize that the right answer might be far different from the obvious one.

Similar surveys were done in 1984 (David M Eddy) and in 2003 (Kahan, Paltiel) with similar results. In 2013, Manrai and Bhatia repeated Casscells’ 1978 survey with the exact same wording, getting trivially better results: 23% answered correctly. They suggested that medical education “could benefit from increased focus on statistical inference.” That was 35 years after Casscells, during which the phenomenon was popularized by the likes of Daniel Kahneman, from the perspective of base-rate neglect; by Philip Tetlock, from the perspective of overconfidence in forecasting; and by David Epstein, from the perspective of the tyranny of specialization.

Over the past decade, I’ve put the Casscells question to doctors I’ve known or met, where I didn’t think it would get me thrown out of the office or booted from a party. My results were somewhat worse. Of about 50 MDs, four either answered correctly or were aware that they’d need to look up the formula but knew that the answer was much less than 95%. One was an optometrist, one a career ER doc, one an allergist-immunologist, and one a female surgeon – all over 50 years old, incidentally.

Despite the efforts of a few radicals in the Accreditation Council for Graduate Medical Education and some post-Flexnerian reformers, medical education remains, as Jonathan Bush points out in Tell Me Where It Hurts, basically a 2,000-year-old subject-based and lecture-based model developed at a time when only the instructor had access to a book. Despite those reformers, basic science has actually diminished in recent decades, leaving many physicians with less of a grasp of scientific methodology than that held by Ernest Codman in 1915. Medical curriculum guardians, for the love of God, get over your stodgy selves and replace the calculus badge with applied probability and statistical inference from diagnostics. Place it later in the curriculum than pre-med, and weave it into some of that flipped-classroom, problem-based learning you advertise.

55 Saves Lives

Posted by Bill Storage in Systems Engineering on April 15, 2020

Congress and Richard Nixon had no intention of pulling a bait-and-switch when they enacted the National Maximum Speed Law (NMSL) on Jan. 2, 1974. The emergency response to an embargo, NMSL (Public Law 93-239), specified that it was “an act to conserve energy on the Nation’s highways.” Conservation, in this context, meant reducing oil consumption to prevent the embargo proclaimed by the Organization of Arab Petroleum Exporting Countries in October 1973 from seriously impacting American production or causing a shortage of oil then used for domestic heating. There was a precedent. A national speed limit had been imposed for the same reasons during World War II.

By the summer of 1974 the threat of oil shortage was over. But unlike the case after the war, many government officials, gently nudged by auto insurance lobbies, argued that the reduced national speed limit would save tens of thousands of lives annually. Many drivers conspicuously displayed their allegiance to the cause with bumper stickers reminding us that “55 Saves Lives.” Bad poetry, you may say in hindsight, a sorry attempt at trochaic monometer. But times were desperate and less enlightened drivers had to be brought onboard. We were all in it together.

Over the next ten years, the NMSL became a major boon to jurisdictions crossed by interstate highways, some earning over 80% of their revenues from speeding fines. Studies reached conflicting findings over whether the NMSL had saved fuel or lives. The former seems undeniable at first glance, but the resulting increased congestion caused frequent brake/stop/accelerate effects in cities, and the acceleration phase is a gas guzzler. Those familiar with fluid mechanics note that the traffic capacity of a highway is proportional to the speed driven on it. Some analyses showed decreased fuel efficiency (net miles per gallon). The most generous analyses reported a less than 1% decrease in consumption.

No one could argue that 55 mph collisions were more dangerous than 70 mph collisions. But some drivers, particularly in the west, felt betrayed after being told that the NMSL was an emergency measure (“during periods of current and imminent fuel shortages”) to save oil and then finding it would persist indefinitely for a new reason, to save lives. Hicks and greasy trucker pawns of corporate fat cats, my science teachers said of those arguing to repeal the NMSL.

The matter was increasingly argued over the next twelve years. The states’ rights issue was raised. Some remembered that speed limits had originally been set by a democratic 85% rule. The 85th percentile speed of drivers on an unposted highway became the limit for that road. Auto fatality rates had dropped since 1974, and everyone had their theories as to why. A case was eventually made for an experimental increase to 65 mph, approved by Congress in December 1987. The insurance lobby predicted carnage. Ralph Nader announced that “history will never forgive Congress for this assault on the sanctity of human life.”

Between 1987 and 1995, 40 states moved to the 65 limit. Auto fatality rates continued to decrease as they had done between 1973 and 1987, during which time some radical theorists had argued that the sudden drop in fatality rate in early 1974 had been a statistical blip that regressed to the mean a year later, and that better cars and seat belt usage accounted for the decreased mortality. Before 1987, those arguments were commonly understood to be mere rationalizations.

In December 1995, more than twenty years after enacting it, Congress finally undid the NMSL completely. States again had the authority to set speed limits. An unexpected result of increasing speed limits to 75 mph in some western states was that, as revealed by unmanned radar, the number of vehicles driving above 80 mph dropped by 85% compared to when the speed limit was 65.

From a systems-theory perspective, it’s clear that the highway transportation network is a complex phenomenon, one resistant to being modeled through facile conjecture about causes and effects, naive assumptions about incentives and human behavior, and ivory-tower analytics.

The Covid Megatilt

Posted by Bill Storage in Uncategorized on April 3, 2020

Playing poker online is far more addictive than gambling in a casino. Online poker, and other online gambling that involves a lot of skill, is engineered for addiction. Online poker allows multiple simultaneous tables. Laptops, tablets, and mobile phones provide faster play than in casinos. Setup time, for an efficient addict, can be seconds per game. Better still, you can rapidly switch between different online games to get just enough variety to eliminate any opportunity for boredom that has not been engineered out of the gaming experience. Completing a hand of Texas Holdem in 45 seconds online increases your chances of fast wins, fast losses, and addiction.

Tilt is what poker players call it when a particular run of bad luck, an opponent’s skill, or that same opponent’s obnoxious communications put you into a mental state where you’re playing emotionally and not rationally. Anger, disgust, frustration and distress are precipitated by bad beats, bluffs gone awry, a run of dead cards, losing to a lower ranked opponent, fatigue, or letting the opponent’s offensive demeanor get under your skin.

Tilt is so important to online poker that many products and commitment devices have emerged to deal with it. Tilt Breaker provides services like monitoring your performance to detect fatigue and automated stop-loss protection that restricts betting or table count after a run of losses.

A few years back, some friends and I demonstrated biometric tilt detection using inexpensive heart rate sensors. We used machine learning with principal dynamic modes (PDM) analysis running in a mobile app to predict sympathetic (stress-inducing, cortisol, epinephrine) and parasympathetic (relaxation, oxytocin) nervous system activity. We then differentiated mental and physical stress using the mobile phone’s accelerometer and location functions. We could ring an alarm to force a player to face being at risk of tilt or ragequit, even if he was ignoring the obvious physical cues. Maybe it’s time to repurpose this technology.

In past crises, the flow of bad news and peer communications was limited by technology. You could not scroll through radio programs or scan through TV shows. You could click between the three news stations, and then you were stuck. Now you can consume all of what could be home work and family time with up-to-the-minute Covid death tolls while blasting your former friends on Twitter and Facebook for their appalling politicization of the crisis.

You yourself are of course innocent of that sort of politicizing. As a seasoned poker player, you know that the more you let emotions take control of your game, the farther your judgments will stray from rational ones.

Still yet, what kind of utter moron could think that the whole response to Covid is a media hoax? Or that none of it is?

Intertemporal Choice, Delayed Gratification and Empty Marshmallow Promises

Posted by Bill Storage in History of Science, Philosophy of Science on March 14, 2020

Everyone knows about the marshmallow test. Kids were given a marshmallow and told that they’d get a second one if they resisted eating the first one for a while. The experimenter then left the room and watched the kids endure marshmallow temptation. Years later, the kids who had been able to fight temptation were found to have higher SAT scores, better jobs, less addiction, and better physical fitness than those who succumbed. The meaning was clear; early self control, whether innate or taught, is key to later success. The test results and their interpretation were, scientifically speaking, too good to be true. And in most ways they weren’t true.

That wrinkle doesn’t stop the marshmallow test from being trotted out weekly on LinkedIn and social sites where experts and moralists opine. That trotting out comes with behavioral economics lessons, dripping with references to Kahneman, Ariely and the like about our irrationality as we face intertemporal choices, as they’re known in the trade. When adults choose an offer of $1,000 today over an offer for $1,400 to be paid in one year, even when they have no pressing financial need, they are deemed irrational or lacking self control, like the marshmallow kids.

The famous marshmallow test was done by Walter Mischel in the 1960s through the 1980s. Not only did subsequent marshmallow tests fail to show as much correlation between waiting for the second marshmallow and a better life, but, more importantly, similar tests for at least twenty years have pointed to a more salient result, one which Mischel was aware of, but which got lost in popular retelling. Understanding the deeper implications of the marshmallow tests, along with a more charitable view of kids who grabbed the early treat, requires digging down into the design of experiments, Bayesian reasoning, and the concept of risk neutrality.

Intertemporal choice tests like the marshmallow test involve choices between options that involve different payoffs at different times. We face these choices often. And when we face them in the real world, our decision process is informed by memories and judgments about our past choices and their outcomes. In Bayesian terms, our priors incorporate this history. In real life, we are aware that all contracts, treaties, and promises for future payment come with a finite risk of default.

In intertemporal choice scenarios, the probability of the deferred payment actually occurring is always less than 100%. That probability is rarely known and is often unknowable. Consider choices A and B below. This is how the behavioral economists tend to frame the choices.

| A | B |
|---|---|
| $1,000 now | $1,400 paid next year |

But this framing ignores an important feature of any real-world, non-hypothetical intertemporal choice situation: the probability that choice B actually pays out is always less than 100%. In the above example, even risk-neutral choosers (those indifferent to all choices having the same expected value) would pick choice A over choice B if they judge the probability of non-default (actually getting the deferred payment) to be less than a certain amount.

| A | B | C |
|---|---|---|
| $1,000 now | $1,400 in one year, P = 0.99 | $1,400 in one year, P = 0.70 |
| Expected value = $1,000 | Expected value = $1,386 | Expected value = $980 |

As shown above, if choosers believe the likelihood of the deferred payment to be less than about 71% (the break-even point, $1,000 ÷ $1,400), they cannot be called irrational for choosing choice A.
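
The expected values in the table, and the probability at which a risk-neutral chooser is indifferent between A and the deferred payment, can be reproduced with a few lines of Python. This is only a sketch: the function names are mine, and like the table it ignores interest and inflation over the year.

```python
def expected_value(payout, probability_of_payment):
    """Expected value of a payout that arrives with the given probability."""
    return payout * probability_of_payment


def break_even_probability(amount_now, amount_later):
    """Probability of payment at which the deferred offer's expected value
    equals the sure amount available now."""
    return amount_now / amount_later


ev_a = expected_value(1000, 1.00)   # choice A: money in hand
ev_b = expected_value(1400, 0.99)   # choice B: near-certain payment
ev_c = expected_value(1400, 0.70)   # choice C: shakier promise
p_star = break_even_probability(1000, 1400)

print(f"A: ${ev_a:,.0f}   B: ${ev_b:,.0f}   C: ${ev_c:,.0f}")
print(f"Break-even probability: {p_star:.1%}")
```

Anyone who privately puts the odds of actually being paid below that break-even probability is maximizing expected value by taking the money now.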

Lack of Self Control – or Rational Intuitive Bayes?

Now for the final, most interesting twist in tests like the marshmallow test, almost universally ignored by those who cite them. Unlike my example above where the wait time is one year, in the marshmallow tests, the time period during which the subject is tempted to eat the first marshmallow is unknown to the subject. Subjects come into the game with a certain prior – a certain belief about the probability of non-default. But, as intuitive Bayesians, these subjects update the probability they assign to non-default, during their wait, based on the amount of time they have been waiting. The speed at which they revise their probability downward depends on their judgment of the distribution of wait times experienced in their short lives.

If kids in the marshmallow tests have concluded, based on their experience, that adults are not dependable, choice A makes sense; they should immediately eat the first marshmallow, since the second one may never materialize. Kids who endure temptation for a few minutes only to give in and eat their first marshmallow are seen as both irrational and incapable of self-control.

But if those kids adjust their probability judgments that the second marshmallow will appear based on a prior distribution that is not a normal distribution (i.e., if as intuitive Bayesians they model wait times imposed by adults as a power-law distribution), then their eating the first marshmallow after some test-wait period makes perfect sense. They rightly conclude, on the basis of available evidence, that wait times longer than some threshold period may be very long indeed. These kids aren’t irrational, and self-control is not their main problem. Their problem is that they have been raised by irresponsible adults who have displayed a tendency both to default on payments and to fulfill promises late, by durations obeying power-law distributions.
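
To make the difference between the two priors concrete, here is a small Python sketch comparing the expected remaining wait, given that you have already waited t minutes, under a normal prior and under a power-law (Pareto) prior. All the parameters are illustrative assumptions of mine (a normal with mean 5 and standard deviation 2 minutes, a Pareto with shape 1.5 and a minimum wait of 1 minute); none come from Mischel’s or Michaelson’s data. The normal case uses the standard truncated-normal mean, and the Pareto case uses the closed form t / (alpha − 1), valid for alpha > 1.

```python
import math


def normal_expected_remaining(t, mu, sigma):
    """E[T - t | T > t] for a normally distributed wait time T."""
    z = (t - mu) / sigma
    density = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)
    survival = 0.5 * math.erfc(z / math.sqrt(2.0))  # P(T > t)
    return mu + sigma * density / survival - t


def pareto_expected_remaining(t, alpha):
    """E[T - t | T > t] for a Pareto wait time with shape alpha > 1.
    Valid for t at or beyond the distribution's minimum wait."""
    if alpha <= 1.0:
        raise ValueError("the mean is infinite unless alpha > 1")
    return t / (alpha - 1.0)


# Hypothetical priors: normal(5 min, 2 min) versus Pareto(shape 1.5).
for waited in (2, 5, 10, 15):
    normal_left = normal_expected_remaining(waited, mu=5.0, sigma=2.0)
    pareto_left = pareto_expected_remaining(waited, alpha=1.5)
    print(f"waited {waited:>2} min | normal prior: {normal_left:5.2f} min left"
          f" | power-law prior: {pareto_left:5.2f} min left")
```

Under the normal prior, every minute already waited makes the remaining wait look shorter. Under the power-law prior, the expected remaining wait grows in proportion to the time already waited, so giving up after a few minutes is the reasonable update.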

Subsequent marshmallow tests have verified this. In 2013, psychologist Laura Michaelson, after more sophisticated versions of the marshmallow test, concluded “implications of this work include the need to revise prominent theories of delay of gratification.” Actually, tests going back over 50 years have shown similar results (A.R. Mahrer, The role of expectancy in delayed reinforcement, 1956).

In three recent posts (first, second, third) I suggested that behavioral economists and business people who follow them are far too prone to seeing innate bias everywhere, when they are actually seeing rational behavior through their own bias. This is certainly the case with the common misuse of the marshmallow tests. Interpreting these tests as rational behavior in light of subjects’ experience is a better explanatory theory, one more consistent with the evidence, and one that coheres with other explanatory observations, such as humans’ capacity for intuitive Bayesian belief updates.

Charismatic pessimists about human rationality twist the situation so that their pessimism is framed as good news, in the sense that they have at least illuminated an inherent human bias. That pessimism, however cheerfully expressed, is both misguided and harmful. Their failure to mention the more nuanced interpretation of marshmallow tests is dishonest and self-serving. The problem we face is not innate, and it is mostly curable. Better parenting can fix it. The marshmallow tests measure parents more than they measure kids.

Walter Mischel died in 2018. I heard his 2016 talk at the Long Now Foundation in San Francisco. He acknowledged the relatively weak correlation between marshmallow test results and later success, and he mentioned that descriptions of his experiments in popular press were rife with errors. But his talk still focused almost solely on the self-control aspect of the experiments. He missed a great opportunity to help disseminate a better story about the role of trustworthiness and reliability of parents in delayed gratification of children.

A better description of the way we really work through intertemporal choices would require going deeper into risk neutrality and how, even for a single person, our departure from risk neutrality – specifically risk-appetite skewness – varies between situations and across time. I have enjoyed doing some professional work in that area. Getting it across in a blog post is probably beyond my current blog-writing skills.

The Naming and Numbering of Parts

Posted by Bill Storage in History of Science on February 28, 2020

Counting Crows – One for Sorrow, Two for Joy…

Remember in junior high when Mrs. Thistlebottom made you memorize the nine parts of speech? That was to help you write an essay on what William Blake might have been thinking when he wrote The Tyger. In Biology, Mr. Sallow taught you that nature was carved up into seven taxonomic categories (domains, kingdoms, phyla, etc.) and that there were five kingdoms. If your experience was similar to mine, your Social Studies teacher then had you memorize the four causes of the Civil War.

Four causes? There I drew the line. Parts of speech might be counted with integers along with the taxa and the five kingdoms, but not causes of war. But in 8th grade I lacked the confidence and the vocabulary to make my case. It bugs me still, as you see. Assigning exactly four causes to the Civil War was a projection of someone’s mental model of the war onto the real war, which could rightly have been said to have any number of causes. Causes are rarely the sort of things that nature numbers. And as it turned out, nor are parts of speech, levels of taxa, or the number of kingdoms. Life isn’t monophyletic. Is Archaea a domain or a kingdom? Plato is wrong again; you cannot carve nature at her joints. Life’s boundaries are fluid.

Can there be any reason that the social sciences still insist that their world can be carved at its joints? Are they envious of the solid divisions of biology but unaware that these lines are now understood to be fictions, convenient only at the coarsest levels of study?

A web search reveals that many causes and complex phenomena in the realm of social science can be counted, even in peer reviewed papers. Consider the three causes each of crime, the Great Schism in Christianity, and human trafficking in Africa. Or the four kinds each of ADHD (Frontiers in New Psychology), Greek love, and behavior (Current Directions in Psychological Science). Or the five effects each of unemployment, positive organizational behavior, and hallmarks of Agile Management (McKinsey).

In each case it seems that experts, by using the definite article “the” before their cardinal qualifier, might be asserting that their topic has exactly that many causes, kinds, or effects. And that the precise number they provide is key to understanding the phenomenon. Perhaps writing a technical paper titled simply Four Kinds of ADHD (no “The”) might leave the reader wondering if there might in fact be five kinds, though the writer had time to explore only four. Might there be highly successful people with eight habits?

The latest Diagnostic and Statistical Manual of Mental Disorders (DSM–5), issued by the American Psychiatric Association, lists over 300 named conditions, not one of which has been convincingly tied to a failure of neurotransmitters or any particular biological state. Ten years in the making, the DSM did not specify that its list was definitive. In fact, to its credit, it acknowledges that the listed conditions overlap along a continuum.

Still, assigning names to 300 locations along a spectrum – a better visualization might be across an n-dimensional space – does not mean you’ve found 300 kinds of anything. Might exploring the trends, underlying systems, processes, and relationships between symptoms be more useful?

A few think so at least. Thomas Insel, former director of the NIMH, wrote that he was doubtful of the DSM’s usefulness. Insel said that the DSM’s categories amounted to consensus about clusters of clinical symptoms, not any empirical laboratory measure. They were equivalent, he said, “to creating diagnostic systems based on the nature of chest pain or the quality of fever.” As Kurt Gray, psychologist at UNC, put it, “intuitive taxonomies obscure the underlying processes of psychopathology.”

Meanwhile in business, McKinsey consultants still hold that business interactions can be optimized around the four psychological functions – sensation, intuition, feeling, and thinking, despite that theory’s (Myers Briggs) pitifully low evidential support.

The Naming of Parts

“Today we have naming of parts. Yesterday, We had daily cleaning…” Henry Reed, Naming of Parts, 1942.

Richard Feynman told a story of being a young boy and noticing that when his father jerked his wagon containing a ball forward, the ball appeared to move backward in the wagon. Feynman asked why it did that. His dad said that no one knows, but that “we call it inertia.”

Feynman also talked about walking with his father in the woods. His dad, a uniform salesman, said, “See that bird? It’s a brown-throated thrush, but in Germany it’s called a halzenfugel, and in Chinese they call it a chung ling and even if you know all those names for it, you still know nothing about the bird, absolutely nothing about the bird. You only know something about people – what they call the bird.” Feynman said they then talked about the bird’s pecking and its feathers.

Back at the American Psychiatric Association, we find controversy over whether Premenstrual Dysphoric Disorder (PMDD) is an “actual disorder” or merely a strong case of Premenstrual Syndrome (PMS).

Science gratifies us when it tries to explain things, not merely to describe them, or, worse yet, to merely name them. That’s true despite all the logical limitations to scientific knowledge, like the underdetermination of theory by evidence and the problem of induction that David Hume made famous in 1739.

Carl Linnaeus, active at the same time as Hume, devised the system Mr. Sallow taught you in 8th grade Biology. It still works, easing communications around manageable clusters of organisms, and demarcating groups of critters that are endangered. But Linnaeus was dead wrong about the big picture: “All the species recognized by Botanists came forth from the Almighty Creator’s hand, and the number of these is now and always will be exactly the same,” and “nature makes no jumps,” he wrote. So parroting Linnaeus’s approach to science will naturally lead to an impasse.

Social sciences (of which there are precisely nine), from anthropology to business management, might do well to recognize that their domains will never be as lean, orderly, or predictive as the hard sciences are, and to strive for those sciences’ taste for evidence rather than venerating their ontologies and taxonomies.

Now why do some people think that labeling a thing explains the thing? Because they fall prey to the Nominal Fallacy. Nudge.

One for sorrow,

Two for mirth

Three for a funeral,

Four for birth

Five for heaven

Six for hell

Seven for the devil,

His own self

– Proverbs and Popular Sayings of the Seasons, Michael Aislabie Denham, 1864

The Trouble with Doomsday

Posted by Bill Storage in Philosophy, Probability and Risk on February 4, 2020

Doomsday just isn’t what it used to be. Once the dominion of ancient apologists and their votary, the final destiny of humankind now consumes probability theorists, physicists, and technology luminaries. I’ll give some thoughts on probabilistic aspects of the doomsday argument after a brief comparison of ancient and modern apocalypticism.

Apocalypse Then

The Israelites were enamored by eschatology. “The Lord is going to lay waste the earth and devastate it,” wrote Isaiah, giving few clues about when the wasting would come. The early Christians anticipated an imminent end of days. Matthew 16:28: “some of those who are standing here will not taste death until they see the Son of Man coming in His kingdom.”

From late antiquity through the middle ages, preoccupation with the Book of Revelation led to conflicting ideas about the finer points of “domesday,” as it was called in Middle English. The first millennium brought a flood of predictions of, well, flood, along with earthquakes, zombies, lakes of fire and more. But a central Christian apocalyptic core was always beneath these varied predictions.

Right up to the enlightenment, punishment awaited the unrepentant in a final judgment that, despite Matthew’s undue haste, was still thought to arrive any day now. Disputes raged over whether the rapture would precede the tribulation or would follow it, the proponents of each view armed with supporting scripture. Polarization! When Christianity began to lose command of its unruly flock in the 1800’s, Nietzsche wondered just what a society of non-believers would find to flog itself about. If only he could see us now.

Apocalypse Now

Our modern doomsday riches include options that would turn an ancient doomsayer green. Alas, at this eleventh hour we know nature’s annihilatory whims, including global pandemic, supervolcanoes, asteroids, and killer comets. Still in the Acts of God department, more learned handwringers can sweat about earth orbit instability, gamma ray bursts from nearby supernovae, or even a fluctuation in the Higgs field that evaporates the entire universe.

As Stephen Hawking explained bubble nucleation, the Higgs field might be metastable at energies above a certain value, causing a region of false vacuum to undergo catastrophic vacuum decay and producing a bubble of true vacuum that expands at the speed of light. This might have started eons ago, arriving at your doorstep before you finish this paragraph. Harold Camping, eat your heart out.

Hawking also feared extraterrestrial invasion, a view hard to justify with probabilistic analyses. Glorious as such cataclysms are, they lack any element of contrition. Real apocalypticism needs a guilty party.

Thus anthropogenic climate change reigned for two decades with no creditable competitors. As self-inflicted catastrophes go, it had something for everyone. Almost everyone. Verily, even Pope Francis, in a covenant that astonished adherents, joined – with strong hand and outstretched arm – leftists like Naomi Oreskes, who shares little else with the Vatican, ideologically speaking.

While Global Warming is still revered, some prophets now extend the hand of fellowship to some budding successor fears, still tied to devilries like capitalism and the snare of scientific curiosity. Bioengineered coronaviruses might be invading as we speak. Careless researchers at the Large Hadron Collider could set off a mini black hole that swallows the earth. So some think anyway.

Nanotechnology now gives some prominent intellects the willies too. My favorite in this realm is Gray Goo, a catastrophic chain of events involving molecular nanobots programmed for self-replication. They will devour all life and raw materials at an ever-increasing rate. How they’ll manage this without melting themselves due to the normal exothermic reactions tied to such processes is beyond me. Global Warming activists may become jealous, as the very green Prince Charles himself now diverts a portion of the crown’s royal dread to this upstart alternative apocalypse.

My cataclysm bucks are on full-sized Artificial Intelligence though. I stand with chief worriers Bill Gates, Ray Kurzweil, and Elon Musk. Computer robots will invent and program smarter and more ruthless autonomous computer robots on a rampage against humans seen by the robots as obstacles to their important business of building even smarter robots. Game over.

The Mathematics of Doomsday

The Doomsday Argument is a mathematical proposition arising from the Copernican principle – a trivial application of Bayesian reasoning – wherein we assume that, lacking other info, we should find ourselves, roughly speaking, in the middle of the phenomenon of interest. Copernicus didn’t really hold this view, but 20th century thinkers blamed him for it anyway.

Applying the Copernican principle to human life starts with the knowledge that we’ve been around for 200 thousand years, during which 60 billion of us have lived. Copernicans then justify the belief that half the humans that will have ever lived remain to be born. With an expected peak earth population of 12 billion, we might, using this line of calculating, expect the human race to go extinct in a thousand years or less.

Adding a pinch of statistical rigor, some doomsday theorists calculate a 95% probability that the number of humans who will ever live is less than 20 times the number who have lived so far. Positing individual life expectancy of 100 years and 12 billion occupants, the earth will house humans for no more than 10,000 more years.
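
The arithmetic behind those two estimates can be sketched in a few lines. This is only a back-of-envelope illustration, not the doomsday theorists' own calculation. It assumes the population sits at its 12 billion peak and turns over once per 100-year lifespan, and it reads the 95% bound as "total humans ever born is less than 20 times the number born so far." The function name `years_remaining` is made up for the example.

```python
# Back-of-envelope doomsday arithmetic using the figures quoted in the text.
BORN_SO_FAR = 60e9        # humans who have lived so far
PEAK_POPULATION = 12e9    # expected peak population
LIFESPAN_YEARS = 100      # posited individual life expectancy


def years_remaining(future_births: float) -> float:
    """Years needed to fit future_births into a steady peak population.

    Simplifying assumption: the population stays at its peak and is
    completely replaced once per lifespan.
    """
    births_per_year = PEAK_POPULATION / LIFESPAN_YEARS
    return future_births / births_per_year


# Midpoint version: as many people remain to be born as have lived so far.
midpoint_years = years_remaining(BORN_SO_FAR)

# 95% version: total ever born < 20 x born so far, so at most 19 x remain.
upper_bound_years = years_remaining(19 * BORN_SO_FAR)

print(f"midpoint estimate: {midpoint_years:,.0f} years")
print(f"95% upper bound:   {upper_bound_years:,.0f} years")
```

Under these assumptions the midpoint version gives about 500 years and the 95% version about 9,500 years, consistent with "a thousand years or less" and "no more than 10,000 more years."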

That’s the gist of the dominant doomsday argument. Notice that it is purely probabilistic. It applies equally to the Second Coming and to Gray Goo. However, its math and logic are both controversial. Further, I’m not sure why its proponents favor population-based estimates over time-based estimates. That is, it took a lot longer than 10,000 years, the proposed P = .95 extinction term, for the race to arrive at our present population. So why not place the current era in the middle of the duration of the human race, thereby giving us another 200,000 years? That’s quite an improvement on the 10,000 year prediction above.

Even granting that improvement, all the above doomsday logic has some curious bugs. If we’re justified in concluding that we’re midway through our reign on earth, then should we also conclude we’re midway through the existence of agriculture and cities? If so, given that cities and agriculture emerged 10,000 years ago, we’re led to predict a future where cities and agriculture disappear in 10,000 years, followed by 190,000 years of post-agriculture hunter-gatherers. Seems unlikely.

Astute Bayesian reasoners might argue that all of the above logic relies – unjustifiably – on an uninformative prior. But we have prior knowledge suggesting we don’t happen to be at some random point in the life of mankind. Unfortunately, we can’t agree on which direction that skews the outcome. My reading of the evidence leads me to conclude we’re among the first in a long line of civilized people. I don’t share Elon Musk’s pessimism about killer AI. And I find Hawking’s extraterrestrial worries as facile as the anti-GMO rantings of the Union of Concerned Scientists. You might read the evidence differently. Others discount the evidence altogether, and are simply swayed by the fashionable pessimism of the day.

Finally, the above doomsday arguments all assume that we, as observers, are randomly selected from the set of all humans, past, present and future, who will ever be born, as opposed to being selected from all possible births. That may seem a trivial distinction, but, on close inspection, it becomes profound. The former is analogous to Theory 2 in my previous post, The Trouble with Probability. This particular observer effect, first described by Dennis Dieks in 1992, is called the self-sampling assumption by Nick Bostrom. Considering yourself to be randomly selected from all possible births prior to human extinction is the analog of Theory 3 in my last post. It arose from an equally valid assumption about sampling. That assumption, called self-indication by Bostrom, confounds the above doomsday reasoning as it did the hotel problem in the last post.

The self-indication assumption holds that we should believe that we’re more likely to discover ourselves to be members of larger sets than of smaller sets. As with the hotel room problem discussed last time, self-indication essentially cancels out the self-sampling assumption. We’re more likely to be in a long-lived human race than a short one. In fact, setting aside some secondary effects, we can say that the likelihood of being selected into any set is proportional to the size of the set; and here we are in the only set we know of. Doomsday hasn’t been called off, but it has been postponed indefinitely.

The Trouble with Probability

Posted by Bill Storage in Probability and Risk on February 2, 2020

The trouble with probability is that no one agrees what it means.

Most people understand probability to be about predicting the future and statistics to be about the frequency of past events. While everyone agrees that probability and statistics should have something to do with each other, no one agrees on what that something is.

Probability got a rough start in the world of math. There was no concept of probability as a discipline until about 1650 – odd, given that gambling had been around for eons. Some of the first serious work on probability was done by Blaise Pascal, who was assigned by a nobleman to divide up the winnings when a dice game ended unexpectedly. Before that, people just figured chance wasn’t receptive to analysis. Aristotle’s idea of knowledge required that it be universal and certain. Probability didn’t fit.

To see how fast the concept of probability can go haywire, consider your chance of getting lung cancer. Most agree that probability is determined by your membership in a reference class for which a historical frequency is known. Exactly which reference class you belong to is always a matter of dispute. How similar to them do you need to be? The more accurately you set the attributes of the reference population, the more you narrow it down. Eventually, you get down to people of your age, weight, gender, ethnicity, location, habits, and genetically determined preference for ice cream flavor. Your reference class then has a size of one – you. At this point your probability is either zero or one, and nothing in between. The historical frequency of cancer within this population (you) cannot predict your future likelihood of cancer. That doesn’t seem like what we wanted to get from probability.

Similarly, in the real world, the probabilities of uncommon events and of events with no historical frequency at all are the subject of keen interest. For some predictions of previously unexperienced events, like an airplane crashing due to simultaneous failure of a certain combination of parts, even though that combination may have never occurred in the past, we can assemble a probability from combining historical frequencies of the relevant parts using Boolean logic. My hero Richard Feynman seemed not to grasp this, oddly.
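
As a rough illustration of combining part-level frequencies with Boolean logic, here is a minimal fault-tree sketch. The parts, the failure probabilities, and the tree structure are all hypothetical, chosen only to show the mechanics, and the gates assume the failures are statistically independent, which real analyses must justify rather than assume.

```python
from math import prod


def and_gate(probabilities):
    """P(all events occur), assuming the events are independent."""
    return prod(probabilities)


def or_gate(probabilities):
    """P(at least one event occurs), assuming the events are independent."""
    return 1.0 - prod(1.0 - p for p in probabilities)


# Hypothetical per-flight failure probabilities taken from part histories.
pump_a_fails = 1e-4
pump_b_fails = 1e-4
line_ruptures = 1e-9

# Hypothetical top event: hydraulic power is lost if both pumps fail,
# or if the shared line ruptures.
both_pumps_fail = and_gate([pump_a_fails, pump_b_fails])
loss_of_hydraulics = or_gate([both_pumps_fail, line_ruptures])

print(f"both pumps fail:    {both_pumps_fail:.2e}")
print(f"loss of hydraulics: {loss_of_hydraulics:.2e}")
```

The combined event may never have occurred, yet its estimated probability (about 1.1 in 100 million per flight with these made-up inputs) follows from the frequencies of its parts.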

For worries like a large city being wiped out by an asteroid, our reasoning becomes more conjectural. But even for asteroids we can learn quite a bit about asteroid impact rates based on the details of craters on the moon, where the craters don’t weather away so fast as they do on earth. You can see that we’re moving progressively away from historical frequencies and becoming more reliant on inductive reasoning, the sort of thing that gave Aristotle the hives.

Finally, there are some events for which historical frequencies provide no useful information. The probability that nanobots will wipe out the human race, for example. In these cases we take a guess, maybe even a completely wild guess, and then, on incrementally getting tiny bits of supporting or nonsupporting evidence, we modify our beliefs. This is the realm of Bayesianism. In these cases when we talk about probability we are really only talking about the degree to which we believe a proposition, conjecture or assertion.

Breaking it down a bit more formally, a handful of related but distinct interpretations of probability emerge. Those include, for example:

Objective chances: The physics of flipping a fair coin tend to result in heads half the time.

Frequentism: Relative frequency across time: of all the coins ever flipped, one half have been heads, so expect more of the same.

Hypothetical frequentism: If you flipped coins forever, the heads/tails ratio would approach 50%.

Bayesian belief: Prior distributions equal belief: before flipping a coin, my personal expectation that it will be heads is equal to that of it being tails.

Objective Bayes: Prior distributions represent neutral knowledge: given only that a fair coin has been flipped, the plausibility of its having fallen heads equals that of its having been tails.

While those all might boil down to the same thing in the trivial case of a coin toss, they can differ mightily for difficult questions.

People’s ideas of probability differ more than one might think, especially when it becomes personal. To illustrate, I’ll use a problem derived from one that originated either with Nick Bostrom, Stuart Armstrong or Tomas Kopf, and was later popularized by Isaac Arthur. Suppose you wake up in a room after suffering amnesia or a particularly bad night of drinking. You find that you’re part of a strange experiment. You’re told that you’re in one of 100 rooms and that the door of your room is either red or blue. You’re instructed to guess which color it is. Finding a coin in your pocket you figure flipping it is as good a predictor of door color as anything else, regardless of the ratio of red to blue doors, which is unknown to you. Heads red, tails blue.

The experimenter then gives you new info. 90 doors are red and 10 doors are blue. Guess your door color, says the experimenter. Most people think, absent any other data, picking red is a 4 1/2 times better choice than letting a coin flip decide.

Now you learn that the evil experimenter had designed two different branches of experimentation. In Experiment A, ten people would be selected and placed, one each, into rooms 1 through 10. For Experiment B, 100 other people would be placed, one each, in all 100 rooms. You don’t know which room you’re in or which experiment, A or B, was conducted. The experimenter tells you he flipped a coin to choose between Experiment A, heads, and Experiment B, tails. He wants you to guess which experiment, A or B, won his coin toss. Again, you flip your coin to decide, as you have nothing to inform a better guess. You’re flipping a coin to guess the result of his coin flip. Your odds are 50-50. Nothing controversial so far.

Now you receive new information. You are in Room 5. What judgment do you now make about the result of his flip? Some will say that the odds of experiment A versus B were set by the experimenter’s coin flip, and are therefore 50-50. Call this Theory 1.

Others figure that your chance of being in Room 5 under Experiment A is 1 in 10 and under Experiment B is 1 in 100. Therefore it’s ten times more likely that Experiment A was the outcome of the experimenter’s flip. Call this Theory 2.

Still others (Theory 3) note that having been selected into a group of 100 was ten times more likely than having been selected into a group of 10, and on that basis it is ten times more likely that Experiment B was the result of the experimenter’s flip than Experiment A.

My experience with inflicting this problem on victims is that most people schooled in science – though certainly not all – prefer Theories 2 or 3 to Theory 1, suggesting they hold different forms of Bayesian reasoning. But between Theories 2 and 3, war breaks out.

Those preferring Theory 2 think the chance of having been selected into Experiment A (once it became the outcome of the experimenter’s coin flip) is 10 in 110 and the chance of being in Room 5 is 1 in 10, given that Experiment A occurred. Those who hold Theory 3 perceive a 100 in 110 chance of having been selected into Experiment B, once it was selected by the experimenter’s flip, and then a 1 in 100 chance of being in Room 5, given Experiment B. The final probabilities of being in room 5 under Theories 2 and 3 are equal (10/110 x 1/10 equals 1 in 110, vs. 100/110 x 1/100 also equals 1 in 110), but the answer to the question about the outcome of the experimenter’s coin flip having been heads (Experiment A) and tails (Experiment B) remains in dispute. To my knowledge, there is no basis for settling that dispute. Unlike Martin Gardner’s boy-girl paradox, this dispute does not result from ambiguous phrasing; it seems a true paradox.
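
For readers who want to check the numbers, the sketch below reproduces the arithmetic of the competing theories with exact fractions. It does not settle which theory is right. The dictionary names and the `normalize` helper are made up for the example, and Theory 3's selection weighting is modeled simply as weighting each experiment by its number of occupants.

```python
from fractions import Fraction

PRIOR = {"A": Fraction(1, 2), "B": Fraction(1, 2)}  # experimenter's fair coin
OCCUPANTS = {"A": 10, "B": 100}                     # people placed in rooms


def normalize(weights):
    """Scale a dict of non-negative weights so the values sum to 1."""
    total = sum(weights.values())
    return {key: value / total for key, value in weights.items()}


# Theory 1: the room number is ignored; only the coin flip counts.
theory1 = dict(PRIOR)

# Theory 2: weight the coin-flip prior by the chance of landing in Room 5
# under each experiment (1 in 10 for A, 1 in 100 for B).
theory2 = normalize(
    {exp: PRIOR[exp] * Fraction(1, OCCUPANTS[exp]) for exp in PRIOR}
)

# Theory 3's selection step: weight each experiment by its head count.
selection = normalize({exp: Fraction(OCCUPANTS[exp]) for exp in OCCUPANTS})

# The products quoted in the text: selection weight x chance of Room 5.
joint = {exp: selection[exp] * Fraction(1, OCCUPANTS[exp]) for exp in OCCUPANTS}

print("Theory 1:", theory1)
print("Theory 2:", theory2)
print("Selection weights:", selection)
print("Selection x Room 5:", joint)
```

Theory 2 puts Experiment A at 10/11, the selection weighting alone puts Experiment B at 10/11, and both products come out to 1/110, matching the figures in the text.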

The trouble with probability makes it all the more interesting. Is it math, philosophy, or psychology?

How dare we speak of the laws of chance. Is not chance the antithesis of all law? – Joseph Bertrand, Calcul des probabilités, 1889

Though there be no such thing as Chance in the world; our ignorance of the real cause of any event has the same influence on the understanding, and begets a like species of belief or opinion. – David Hume, An Enquiry Concerning Human Understanding, 1748

It is remarkable that a science which began with the consideration of games of chance should have become the most important object of human knowledge. – Pierre-Simon Laplace, Théorie Analytique des Probabilités, 1812

Actively Disengaged?

Posted by Bill Storage in Innovation management on January 28, 2020

Over half of employees in America are disengaged from their jobs – 85%, according to a recent Gallup poll. About 15% are actively disengaged – so miserable that they seek to undermine the productivity of everyone else. Gallup, ADP and Towers Watson have been reporting similar numbers for two decades now. It’s an astounding claim that signals a crisis in management and the employee experience. Astounding. And it simply cannot be true.

Think about it. When you shop, eat out, sit in a classroom, meet with an accountant, hire an electrician, negotiate contracts, and talk to tech support, do you get a sense that they truly hate their jobs? They might begrudge their boss. They might be peeved about their pay. But those problems clearly haven’t led to enough employment angst and career choice regret that they are truly disengaged. If they were, they couldn’t hide it. Most workers I encounter at all levels reveal some level of pride in their performance.

According to Bersin and Associates, we spend about a billion dollars per year to cure employee disengagement. And apparently to little effect given the persistence of disengagement reported in these surveys. The disengagement numbers don’t reconcile with our experience in the world. We’ve all seen organizational dysfunction and toxic cultures, but they are easy to recognize; i.e., they stand out from the norm. From a Bayesian logic perspective, we have rich priors about employee sentiments and attitudes, because we see them everywhere every day.

How do research firms reach such wrong conclusions about the state of engagement? That’s not entirely clear, but it probably goes beyond the fact that most of those firms offer consulting services to cure the disengagement problem. Survey researchers have long known that small variations in question wording and order profoundly affect responses (e.g. Hadley Cantril, 1944). In engagement surveys, context and priming likely play a large part.

I’m not saying that companies do a good job of promoting the right people into management; and I’m not denying that Dilbert is alive and well. I’m saying that the evidence suggests that despite these issues, most employees seek mastery of vocation; and they somehow find some degree of purpose in their work.

Successful firms realize that people will achieve mastery on their own if you get out of their way. They’re organized for learning and sensible risk-taking, not for process compliance. They’ve also found ways to align employees’ goals with corporate mission, fostering employees’ sense of purpose in their work.

Mastery seems to emerge naturally, perhaps from intrinsic motivation, when people have a role in setting their goals. In contrast, purpose, most researchers find, requires some level of top-down communications and careful trust building. Management must walk the talk to bring a mission to life.

Long ago I worked on a top secret aircraft project. After waiting a year or so on an SBI clearance, I was surprised to find that despite the standard need-to-know conditions being stipulated, the agency provided a large amount of information about the operational profile and mission of the vehicle that didn’t seem relevant to my work. Sensing that I was baffled by this, the agency’s rep explained that they had found that people were better at keeping secrets when they knew they were trusted and knew that they were a serious part of a serious mission. Never before or since have I felt such a sense of professional purpose.

Being able to see what part you play in the big picture provides purpose. A small investment in the top-down communication of a sincere message regarding purpose and risk-taking can prevent a large investment in rehiring, retraining and searching for the sources of lost productivity.

A short introduction to small data

Posted by Bill Storage in Probability and Risk on January 13, 2020

How many children are abducted each year? Did you know anyone who died in Vietnam?

Wikipedia explains that big data is about correlations, and that small data is either about the causes of effects, or is an inference from big data. None of that captures what I mean by small data.

Most people in my circles instead think small data deals with inferences about populations made from the sparse data from within those populations. For Bayesians, this means making best use of an intuitive informative prior distribution for a model. For wise non-Bayesians, it can mean bullshit detection.

In the early 90’s I taught a course on probabilistic risk analysis in aviation. In class we were discussing how to deal with estimating equipment failure rates where few previous failures were known when Todd, a friend who was attending the class, asked how many kids were abducted each year. I didn’t know. Nor did anyone else. But we all understood where Todd was going with the question.

Todd produced a newspaper clipping citing an evangelist – Billy Graham as I recall – who claimed that 50,000 children a year were abducted in the US. Todd asked if we thought that yielded a reasonable prior distribution.

Seeing this as a sort of Fermi problem, the class kicked it around a bit. How many kids’ pictures are on milk cartons right now, someone asked (Milk Carton Kids – remember, this was pre-internet). We remembered seeing the same few pictures of missing kids on milk cartons for months. None of us knew of anyone in our social circles who had a child abducted. How does that affect your assessment of Billy Graham’s claim?

What other groups of people have 50,000 members, I asked. Americans dead in Vietnam, someone said. True, about 50,000 American servicemen died in Vietnam (including 9000 accidents and 400 suicides, incidentally). Those deaths spanned 20 years. I asked the class if anyone had known someone, at least indirectly, who died in Vietnam (remember, this was the early 90s and most of us had once owned draft cards). Almost every hand went up. Assuming that dead soldiers and our class were roughly randomly selected implied each of our social spheres had about 4000 members (200 million Americans in 1970, divided by 50,000 deaths). That seemed reasonable, given that news of Vietnam deaths propagated through friends-of-friends channels.

Now given that most of us had been one or two degrees’ separation from someone who died in Vietnam, could Graham’s claim possibly be true? No, we reasoned, especially since news of abductions should travel through social circles as freely as Vietnam deaths. And those Vietnam deaths had spanned decades. Graham was claiming 50,000 abductions per year.

Automobile deaths, someone added. Those are certainly randomly distributed across income, class and ethnicity. Yes, and, oddly, they occur at a rate of about 50,000 per year in the US. Anyone know someone who died in a car accident? Every single person in the class did. Yet none of us had been close to an abduction. Abductions would have to be very skewed against aerospace engineers for our car death and abduction experience to be so vastly different given their supposedly equal occurrence rates in the larger population. But the Copernican position that we resided nowhere special in the landscapes of either abductions or automobile deaths had to be mostly valid, given the diversity of age, ethnicity and geography in the class (we spanned 30 years in age, with students from Washington, California and Missouri).

One way to check the veracity of Graham’s claim would have been to do a bunch of research. That would have been library slow and would have likely still required extrapolation and assumptions about distributions and the representativeness of whatever data we could dig up. Instead we drew a sound inference from very small data, our own sampling of world events.

We were able to make good judgments about the rate of abduction, which we were now confident was very, very much lower than one per thousand (50,000 abductions per year divided by 50 million kids). Our good judgments stemmed from our having rich priors (prior distributions) because we had sampled a lot of life and a lot of people. We had rich data about deaths from car wrecks and Vietnam, and about how many kids were not abducted in each of our admittedly small circles. Big data gets the headlines, causing many of us to forget just how good small data can be.
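
The class's reasoning can be written out as a short calculation. This is a sketch under stated assumptions, not a record of what we computed at the time. It treats abductions as spread randomly across social spheres (a Poisson model), reuses the 200 million population figure for simplicity, and picks a ten-year observation window purely for illustration.

```python
import math

US_POPULATION = 200e6                 # figure used in the text (1970)
VIETNAM_DEATHS = 50_000               # total over the war
CLAIMED_ABDUCTIONS_PER_YEAR = 50_000  # the claim under test
US_CHILDREN = 50e6                    # figure used in the text
YEARS_OBSERVED = 10                   # assumption: window of adult memory

# If nearly everyone knew of one Vietnam death, a social sphere holds about
# one death's share of the population.
sphere_size = US_POPULATION / VIETNAM_DEATHS

# The claimed abduction rate per child per year.
claimed_rate_per_child = CLAIMED_ABDUCTIONS_PER_YEAR / US_CHILDREN

# Expected abductions per year touching one sphere, if the claim were true.
expected_per_year = CLAIMED_ABDUCTIONS_PER_YEAR * sphere_size / US_POPULATION

# Poisson probability that one person hears of none over the whole window.
p_none = math.exp(-expected_per_year * YEARS_OBSERVED)

print(f"social sphere size:         {sphere_size:,.0f}")
print(f"claimed rate per child:     {claimed_rate_per_child:.4f}")
print(f"expected per sphere-year:   {expected_per_year:.2f}")
print(f"P(none in {YEARS_OBSERVED} years):       {p_none:.1e}")
```

If the claim were true, each of us should have heard of roughly one abduction a year, so a whole classroom hearing of none is wildly improbable under these assumptions.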

Countable Infinity – Math or Metaphysics?

Posted by Bill Storage in Philosophy, Philosophy of Science on December 18, 2019

Are we too willing to accept things on authority – even in math? Proofs of the irrationality of the square root of two and of the Pythagorean theorem can be confirmed by pure deductive logic. Georg Cantor’s (d. 1918) claims on set size and countable infinity seem to me a much less secure sort of knowledge. High school algebra books (e.g., the classic Dolciani) teach 1-to-1 correspondence between the set of natural numbers and the set of even numbers as if it is a demonstrated truth. This does the student a disservice.

Following Cantor’s line of reasoning is simple enough, but it seems to treat infinity as a number, thereby passing from mathematics into philosophy. More accurately, it treats an abstract metaphysical construct as if it were math. Using Cantor’s own style of reasoning, one can just as easily show the natural and even number sets to be non-corresponding.

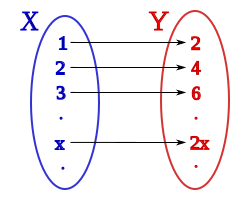

Cantor demonstrated a one-to-one correspondence between natural and even numbers by showing their elements can be paired as shown below:

1 <—> 2

2 <—> 4

3 <—> 6

…

n <—> 2n

This seems a valid demonstration of one-to-one correspondence. It looks like math, but is it? I can with equal validity show the two sets (natural numbers and even numbers) to have a 2-to-1 correspondence. Consider the following pairing. Set 1 on the left is the natural numbers. Set 2 on the right is the even numbers:

1 unpaired

2 <—> 2

3 unpaired

4 <—> 4

5 unpaired

…

2n -1 unpaired

2n <—> 2n

By removing all the unpaired (odd) elements from set 1, you can then pair each remaining member of set 1 with each element of set 2. It seems arguable that if a one-to-one correspondence exists between part of set 1 and all of set 2, the two whole sets cannot support a 1-to-1 correspondence. By inspection, the set of even numbers is included within the set of natural numbers and obviously not coextensive with it. Therefore Cantor’s argument, based solely on correspondence, works only by promoting one concept – the pairing of terms – while suppressing an equally obvious concept, that of inclusion. Cantor indirectly dismisses this argument against set correspondence by allowing that a set and a proper subset of it can be the same size. That allowance is not math; it is metaphysics.
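
The two pairings can be generated side by side for any finite prefix of the natural numbers. The sketch below only illustrates the pairing rules. A finite listing cannot settle anything about the infinite sets, and the function names are made up for the example.

```python
def cantor_pairs(limit):
    """Pair each natural number n <= limit with the even number 2n."""
    return [(n, 2 * n) for n in range(1, limit + 1)]


def inclusion_pairs(limit):
    """Pair each natural number n <= limit with itself if it is even.

    Odd numbers have no partner among the evens, shown here as None.
    """
    return [(n, n if n % 2 == 0 else None) for n in range(1, limit + 1)]


print("n <-> 2n pairing:")
for n, partner in cantor_pairs(5):
    print(f"  {n} <-> {partner}")

print("inclusion pairing:")
for n, partner in inclusion_pairs(5):
    print(f"  {n} " + (f"<-> {partner}" if partner is not None else "unpaired"))
```

Under the first rule every natural number in the prefix gets a partner; under the second, half of them are left over. The dispute is over which rule deserves to define "same size."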

Digging a bit deeper, Cantor’s use of the 1-to-1 concept (often called bijection) is heavy handed. It requires that such correspondence be established by starting with sets having their members placed in increasing order. Then it requires the first members of each set to be paired with one another, and so on. There is nothing particularly natural about this way of doing things. It got Cantor into enough of a logical corner that he had to revise the concepts of cardinality and ordinality with special, problematic definitions.

Gottlob Frege and Bertrand Russell later patched up Cantor’s definitions. The notion of equipollent sets fell out of this work, along with complications still later addressed by von Neumann and Tarski. Finally, it seems to me that Cantor implies – but fails to state outright – that the existence of a simultaneous 2-to-1 correspondence (i.e., group each n and n+1 in set 1 with each 2n in set 2 to get a 1-to-1 correspondence between the two sets) does no damage to the claims that 1-to-1 correspondence between the two sets makes them equal in size. In other words, Cantor helped himself to an unnaturally restrictive interpretation (i.e., a matter of language, not of math) of 1-to-1 correspondence that favored his agenda. Finally, Cantor slips a broader meaning of equality on us than the strict numerical equality of the rest of math. This is a sleight of hand. Further, his usage of the term – and concept of – size requires a special definition.

Cantor’s rule set for the pairing of terms and his special definitions are perfectly valid axioms for a mathematical system, but there is nothing within mathematics that justifies these axioms. Believing that the consequences of a system or theory justify its postulates is exactly the same as believing that the usefulness of Euclidean geometry justifies Euclid’s fifth postulate. Euclid knew this wasn’t so, and Proclus tells us Euclid wasn’t alone in that view.

Galileo seems to have had a more grounded sense of the infinite than did Cantor. For Galileo, the concrete concept of mathematical equality does not reconcile with the abstract concept of infinity. Galileo thought concepts like similarity, countability, size, and equality just don’t apply to the infinite. Did the development of calculus create an unwarranted acceptance of infinity as a mathematical entity? Does our understanding that things can approach infinity justify allowing infinities to be measured and compared?

Cantor’s model of infinity is interesting and useful, but it is a shame that it’s taught as being a matter of fact, e.g., “infinity comes in infinitely many different sizes – a fact discovered by Georg Cantor” (Science News, Jan 8, 2008).

On countable infinity we might consider WVO Quine’s position that the line between analytic (a priori) and synthetic (about the world) statements is blurry, and that no claim is immune to empirical falsification. In that light I’d argue that the above demonstration of inequality of the sets of natural and even numbers (inclusion of one within the other) trumps the demonstration of equal size by correspondence.

Mathematicians who state the equal-size concept as a fact discovered by Cantor have overstepped the boundaries of their discipline. Galileo regarded the natural-even set problem as a true paradox. I agree. Does Cantor really resolve this paradox or is he merely manipulating language?
