Posts Tagged big data

A short introduction to small data

Posted by Bill Storage in Probability and Risk on January 13, 2020

How many children are abducted each year? Did you know anyone who died in Vietnam?

Wikipedia explains that big data is about correlations, and that small data is either about the causes of effects, or is an inference from big data. None of that captures what I mean by small data.

Most people in my circles instead think small data deals with inferences about populations made from the sparse data from within those populations. For Bayesians, this means making best use of an intuitive informative prior distribution for a model. For wise non-Bayesians, it can mean bullshit detection.

In the early 90’s I taught a course on probabilistic risk analysis in aviation. In class we were discussing how to deal with estimating equipment failure rates where few previous failures were known when Todd, a friend who was attending the class, asked how many kids were abducted each year. I didn’t know. Nor did anyone else. But we all understood where Todd was going with the question.

Todd produced a newspaper clipping citing an evangelist – Billy Graham as I recall – who claimed that 50,000 children a year were abducted in the US. Todd asked if we thought that yielded a reasonable prior distribution.

Seeing this as a sort of Fermi problem, the class kicked it around a bit. How many kids’ pictures are on milk cartons right now, someone asked (Milk Carton Kids – remember, this was pre-internet). We remembered seeing the same few pictures of missing kids on milk cartons for months. None of us knew of anyone in our social circles who had a child abducted. How does that affect your assessment of Billy Graham’s claim?

What other groups of people have 50,000 members, I asked. Americans dead in Vietnam, someone said. True, about 50,000 American servicemen died in Vietnam (including 9000 accidents and 400 suicides, incidentally). Those deaths spanned 20 years. I asked the class if anyone had known someone, at least indirectly, who died in Vietnam (remember, this was the early 90s and most of us had once owned draft cards). Almost every hand went up. Assuming that dead soldiers and our class were roughly randomly selected implied each of our social spheres had about 4000 members (200 million Americans in 1970, divided by 50,000 deaths). That seemed reasonable, given that news of Vietnam deaths propagated through friends-of-friends channels.
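The arithmetic behind that social-sphere figure is short enough to write out. This is only a sketch of the back-of-envelope step, using the round numbers quoted above; the function name is made up for illustration.

```python
# Round figures quoted in the post, not researched values.
US_POPULATION_1970 = 200_000_000
VIETNAM_DEATHS = 50_000


def implied_sphere_size(population: int, events: int) -> float:
    """People per event in the population.

    If nearly everyone has heard of at least one such event through
    friends of friends, each person's extended circle must hold roughly
    this many people (assuming events and people mix at random).
    """
    return population / events


sphere = implied_sphere_size(US_POPULATION_1970, VIETNAM_DEATHS)
print(f"Implied social sphere: about {sphere:,.0f} people")
```

The random-mixing assumption does all the work here; a sphere of a few thousand people is only plausible because news of a death travels well beyond one's close friends.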

Now given that most of us had been one or two degrees’ separation from someone who died in Vietnam, could Graham’s claim possibly be true? No, we reasoned, especially since news of abductions should travel through social circles as freely as Vietnam deaths. And those Vietnam deaths had spanned decades. Graham was claiming 50,000 abductions per year.
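To put a rough number on that reasoning, here is a minimal sketch. It assumes abductions would reach us through the same 4000-person sphere, that they arrive as a Poisson process, and that we would remember ten years of such news. All three are assumptions added for illustration, as is reusing the 200 million population figure.

```python
import math


def prob_no_known_event(events_per_year: float, population: float,
                        sphere_size: float, years: float) -> float:
    """Chance that none of the events falls inside one person's sphere.

    Assumes events are spread at random over the population and counts
    inside a sphere follow a Poisson distribution.
    """
    expected_in_sphere = events_per_year * years * sphere_size / population
    return math.exp(-expected_in_sphere)


# Graham's claimed rate, the post's round population figure, the sphere
# size inferred from Vietnam deaths, and an assumed 10-year memory window.
p_none = prob_no_known_event(
    events_per_year=50_000,
    population=200_000_000,
    sphere_size=4_000,
    years=10,
)
print(f"Chance one person has heard of no abduction: {p_none:.1e}")
```

Under these assumptions each of us should have heard of about ten abductions, so a whole class with none between them is very hard to square with the claimed rate.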

Automobile deaths, someone added. Those are certainly randomly distributed across income, class and ethnicity. Yes, and, oddly, they occur at a rate of about 50,000 per year in the US. Anyone know someone who died in a car accident? Every single person in the class did. Yet none of us had been close to an abduction. Abductions would have to be very skewed against aerospace engineers for our car death and abduction experience to be so vastly different given their supposedly equal occurrence rates in the larger population. But the Copernican position that we resided nowhere special in the landscapes of either abductions or automobile deaths had to be mostly valid, given the diversity of age, ethnicity and geography in the class (we spanned 30 years in age, with students from Washington, California and Missouri).

One way to check the veracity of Graham’s claim would have been to do a bunch of research. That would have been library slow and would have likely still required extrapolation and assumptions about distributions and the representativeness of whatever data we could dig up. Instead we drew a sound inference from very small data, our own sampling of world events.

We were able to make good judgments about the rate of abduction, which we were now confident was very, very much lower than one per thousand (50,000 abductions per year divided by 50 million kids). Our good judgments stemmed from our having rich priors (prior distributions) because we had sampled a lot of life and a lot of people. We had rich data about deaths from car wrecks and Vietnam, and about how many kids were not abducted in each of our admittedly small circles. Big data gets the headlines, causing many of us to forget just how good small data can be.

Wisdom and Madness of the Yelp Crowd

Posted by Bill Storage in Crowd wisdom, Multidisciplinarians, Probability and Risk on April 20, 2012

I’ve been digging deep into Yelp and other sites that collect crowd ratings lately, and I’ve discovered wondrous and fascinating things. I’ve been doing this to learn more about when and how crowds are wise. Potential inferences about “why” are alluring too. I looked at two main groups of reviews, those for doctors and medical services, and reviews for restaurants and entertainment.

As doctors, dentists and those in certain other service categories are painfully aware, Yelp ratings do not follow the expected distribution of values. This remains true despite Yelp’s valiant efforts to weed out shills, irate one-offs and spam.

Just how skewed are Yelp ratings when viewed in the aggregate? I took a fairly deep look and concluded that big bias lurks in the big data of Yelp. I’ll get to some hard numbers and take a crack at some analysis. First a bit of background.

Yelp data comes from a very non-random sample of a population. One likely source of this adverse selection is that those who are generally satisfied with service tend not to write reviews. Many who choose to write reviews want their ratings to be important, so they tend to avoid ratings near the mean value. Another source of selection bias stems from Yelp’s huge barrier – in polling terms anyway – to voting. Yelp users have to write a review before they can rate, and most users can’t be bothered. Further, those who vote are Yelp members who have (hopefully) already used the product or service, which means there’s a good chance they read other reviews before writing theirs. This brings up the matter of independence of members.

Plenty of tests – starting with Francis Galton’s famous ox-weighing study in 1906 – have shown that the median value of answers to quantitative questions in a large random crowd is often more accurate than answers by panels of experts. Crowds do very well at judging the number of jellybeans in the jar and reasonably well at guessing the population of Sweden, the latter if you take the median value rather than the mean. But gross misapplications of this knowledge permeate the social web. Fans of James Surowiecki’s “The Wisdom of Crowds” very often forget that independence is an essential condition of crowd wisdom. Without that essential component, crowds can do things like burning witches and running up stock prices during the dot com craze. Surowiecki acknowledges the importance of this from the start (page 5):

There are two lessons to be drawn from the experiments. In most of them the members of the group were not talking to each other or working on a problem together.

Influence and communication love connections; but crowd wisdom relies on independence of its members, not collaboration between them. Surowiecki also admits, though rather reluctantly, that crowds do best in a subset of what he calls cognition problems – specifically, objective questions with quantitative answers. Surowiecki has great hope for use of crowds in subjective cognition problems along with coordination and cooperation problems. I appreciate his optimism, but don’t find his case for these very convincing.

In Yelp ratings, the question being answered is far from objective, despite the discrete star ratings. Subjective questions (quality of service) cannot be made objective by constraining answers to numerical values. Further, there is no agreement on what quality is really being measured. For doctors, some users rate bedside manner, some the front desk, some the outcome of ailment, and some billing and insurance handling. Combine that with self-selection bias and non-independence of users and the wisdom of the crowd – if present – can have difficulty expressing itself.

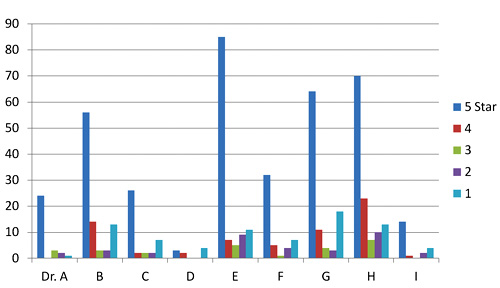

Two doctors on my block have mean Yelp ratings of 3.7 and 3.0 stars on a scale of 1 to 5. Their sample standard deviations are 1.7 and 1.9 (mean absolute deviations: 1.2 and 1.8). Since the maximum possible population standard deviation for a doctor on Yelp is 2.0, everything about this doctor data should probably be considered next to useless; its mean and even median aren’t reliable. The distribution of ratings isn’t merely skewed; it’s bimodal in these two cases and for half of the doctors in San Francisco. That means the rating survey yields highly conflicting results for doctors. Here are the Yelp scores of doctors in my neighborhood.

Yelp rating distribution for 9 nearby doctors
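The spread measures quoted for the two doctors can be reproduced for any list of star ratings. The sketch below uses a made-up bimodal set of ratings (not the actual data for either doctor) to show how close such a set sits to the 2.0 ceiling, which is the standard deviation of a set split evenly between 1 and 5 stars.

```python
from statistics import mean, median, pstdev, stdev

# Hypothetical ratings, invented for illustration: mostly 1-star and
# 5-star reviews with very little in between.
ratings = [1, 1, 1, 1, 2, 4, 5, 5, 5, 5, 5, 5]

avg = mean(ratings)
sample_sd = stdev(ratings)                      # n - 1 in the denominator
mean_abs_dev = mean(abs(r - avg) for r in ratings)
mid = median(ratings)

# The ceiling: half the ratings at 1 star, half at 5 stars.
max_sd = pstdev([1, 5])

print(f"mean {avg:.2f}, median {mid}, sample sd {sample_sd:.2f}, "
      f"mean abs dev {mean_abs_dev:.2f}, ceiling {max_sd:.1f}")
```

With ratings like these, neither the mean nor the median describes a typical review, because almost no reviewer gave a rating near either value.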

I’ve been watching the doctor ratings over the last few years. A year ago, Dr. E’s ratings looked rather like Dr. I’s ratings look today. Unlike restaurants, which experience a rating warm-start on Yelp, the 5-star ratings of doctors grow over time at a higher rate than their low ratings. Doctors, some having been in business for decades, appear to get better as Yelp gets more popular. Three possible explanations come to mind. The first deals with competition. The population of doctors, like any provider in a capitalist system, is not fixed. Those who fare poorly in ratings are likely to get fewer customers and go out of business. The crowd selects doctors for quality, so in a mature system, most doctors, restaurants, and other businesses will have above-average ratings.

The second possible explanation for the change in ratings over time deals with selection, not in the statistics sense (not adverse selection) but in the social-psychology sense (clan or community formation). This would seem more likely to apply to restaurants than to doctors, but the effect on urban doctors may still be large. People tend to select friends or communities of people like themselves – ethnic, cultural, political, or otherwise. Referrals by satisfied customers tend to bring in more customers who are more likely to be satisfied. Businesses end up catering to the preferences of a group, which pre-selects customers more likely to be satisfied and give high ratings.

A third reason for the change over time could be a social-influence effect. People may form expectations based on the dominant mood of reviews they read before their visit. So later reviews might greatly exaggerate any preferences visible in early reviews.

Automotive services don’t fare much better on Yelp than doctors and dentists. But rating distributions for music venues, hotels and restaurants, though skewed toward high ratings, aren’t bimodal like the doctor data. The two reasons given above for positive skew in doctors’ ratings are likely both at work in restaurants and hotels. Yelp ratings for restaurants give clues about those who contribute them.

I examined about 10,000 of my favorite California restaurants, excluding fast food chains. I was surprised to find that the standard deviation of ratings for each restaurant increased – compared to theoretical maximum values – as average ratings increased. If that’s hard to follow in words, the scatter plot below will drive the point home. It shows average rating vs. standard deviation for each of 10,000 restaurants. Ratings are concentrated at the right side of the plot, and are clustered fairly near the theoretical maximum standard deviation (the gray elliptical arc enclosing the data points) for any given average rating. Colors indicate rough total rating counts contributing to each spot on the plot – yellow for restaurants with 5 or fewer ratings, red for those having 40 or fewer, and blue for those with more than 40 ratings. (Some points are outside the ellipse because it represents maximum population deviations and the points are sample standard deviations.)

The second scatter shows average rating vs. standard deviation for the Yelp users who rated these restaurants, with the same color scheme. Similarly, it shows that most raters rate high on average, but each voter still tends to rate at the extreme ends possible to yield his average value. For example, many raters whose average rating is 4 stars use far more 3 and 5-star ratings than nature would expect.

Scatter plot of standard deviation vs. average Yelp rating for about 10,000 restaurants

Scatter plot of standard deviation vs. average rating for users who rated 10,000 restaurants
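The gray arc in the restaurant plot can be computed directly. For ratings confined to 1 through 5 stars with a given average, the population standard deviation is largest when every rating is either 1 or 5, which gives the bound sqrt((average - 1)(5 - average)). The sketch below assumes that bound is what the arc represents; the function names are mine, not anything from Yelp.

```python
import math

LOW, HIGH = 1, 5  # Yelp's star scale


def max_population_sd(avg: float, low: float = LOW, high: float = HIGH) -> float:
    """Largest population std dev for ratings in [low, high] with mean avg."""
    return math.sqrt((avg - low) * (high - avg))


def relative_spread(sd: float, avg: float) -> float:
    """Observed std dev as a fraction of the maximum for that average."""
    limit = max_population_sd(avg)
    return sd / limit if limit > 0 else 0.0


def sample_sd_from_population(pop_sd: float, n: int) -> float:
    """Convert a population std dev to the sample (n - 1) version."""
    return pop_sd * math.sqrt(n / (n - 1))


for avg in (3.0, 3.5, 4.0, 4.5):
    print(f"average {avg}: max population sd {max_population_sd(avg):.2f}")

# Two ratings, one star and five stars: the sample std dev lands outside
# the arc even though the population std dev sits exactly on it.
print(sample_sd_from_population(max_population_sd(3.0), n=2))
```

The last line shows why a few points fall outside the arc: with very few ratings, the n - 1 correction pushes the sample standard deviation above the population maximum.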

Next I looked at the rating behavior of users who rate restaurants. The first thing that jumps out of Yelp user data is that the vast majority of Yelp restaurant ratings are made by users who have rated only one to five restaurants. A very small number have rated more than twenty.

Rating counts of restaurant raters by activity level

A look at the comparative distribution of the three activity levels (1 to 5, 6 to 20, and over 20) as percentages of category total shows that those who rate least are much more likely to give extreme ratings. This is a considerable amount of bias, throughout 100,000 users making half a million ratings. In a 2009 study of Amazon users, Vassilis Kostakos found similar results in their ratings to what we’re seeing here for Bay Area restaurants.

Normalized rating counts of restaurant raters by activity level
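For anyone wanting to repeat this kind of comparison, the normalization can be sketched as follows: bucket each user by how many restaurants they have rated, pool the ratings in each bucket, and express each star value as a share of that bucket's total. The user names and ratings below are invented for illustration, and the data layout is an assumption, not Yelp's format.

```python
from collections import Counter, defaultdict


def activity_level(n_ratings: int) -> str:
    """Bucket a user by number of restaurants rated."""
    if n_ratings <= 5:
        return "1 to 5"
    if n_ratings <= 20:
        return "6 to 20"
    return "over 20"


def normalized_counts(ratings_by_user: dict) -> dict:
    """Map each activity level to {stars: share of that level's ratings}."""
    counts = defaultdict(Counter)
    for stars_list in ratings_by_user.values():
        counts[activity_level(len(stars_list))].update(stars_list)
    shares = {}
    for level, counter in counts.items():
        total = sum(counter.values())
        shares[level] = {stars: counter[stars] / total for stars in range(1, 6)}
    return shares


# Made-up ratings: three occasional raters and one moderately active rater.
ratings_by_user = {
    "user_a": [5],
    "user_b": [1, 5],
    "user_c": [5, 1, 5],
    "user_d": [3, 4, 4, 3, 4, 5, 2, 4],
}

shares = normalized_counts(ratings_by_user)
for level, by_stars in shares.items():
    print(level, {s: round(share, 2) for s, share in by_stars.items()})
```

Normalizing within each bucket matters because the occasional raters contribute most of the ratings; raw counts would bury the other two groups.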

Can any practical wisdom be applied to this observation of crowd bias? Perhaps a bit. For those choosing doctors based on reviews, we can suggest that doctors with low rating counts, having both very high and very low ratings, will likely look better a year from now. Restaurants with low rating counts (count of ratings, not value) are likely to be more average than their average rating values suggest (no negative connotation to average here). Yelp raters should refrain from hyperbole, especially in their early days of rating. Those putting up rating/review sites should be aware that seemingly small barriers to the process of rating may be important, since the vast majority of raters only rate a few items.

This data doesn’t really give much insight into the contribution of social influence to the crowd bias we see here. That fascinating and important topic is at the intersection of crowdsourcing and social technology. More on that next time.