Archive for category Philosophy of Science

Countable Infinity – Math or Metaphysics?

Posted by Bill Storage in Philosophy, Philosophy of Science on December 18, 2019

Are we too willing to accept things on authority – even in math? Proofs of the irrationality of the square root of two and of the Pythagorean theorem can be confirmed by pure deductive logic. Georg Cantor’s (d. 1918) claims on set size and countable infinity seem to me a much less secure sort of knowledge. High school algebra books (e.g., the classic Dolciani) teach 1-to-1 correspondence between the set of natural numbers and the set of even numbers as if it were a demonstrated truth. This does the student a disservice.

Following Cantor’s line of reasoning is simple enough, but it seems to treat infinity as a number, thereby passing from mathematics into philosophy. More accurately, it treats an abstract metaphysical construct as if it were math. Using Cantor’s own style of reasoning, one can just as easily show the natural and even number sets to be non-corresponding.

Cantor demonstrated a one-to-one correspondence between natural and even numbers by showing their elements can be paired as shown below:

1 <—> 2

2 <—> 4

3 <—> 6

…

n <—> 2n
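The pairing rule above can be sketched in Python. This is only an illustration on a finite prefix of the naturals, with an arbitrary cutoff `N` chosen for display; a finite check cannot settle anything about the infinite sets, which is exactly what is in dispute. The function names are hypothetical.

```python
# Illustrative sketch: Cantor's rule n <-> 2n, checked on the first N naturals only.
# N is an arbitrary cutoff; nothing here proves a claim about infinite sets.

def to_even(n: int) -> int:
    """Cantor's pairing: natural number n goes to the even number 2n."""
    return 2 * n


def from_even(m: int) -> int:
    """Inverse of the pairing: even number m goes back to m // 2."""
    if m % 2 != 0:
        raise ValueError(f"{m} is not even")
    return m // 2


N = 10
pairs = [(n, to_even(n)) for n in range(1, N + 1)]

# Each natural in the prefix gets one even partner, no partner is shared,
# and the inverse recovers the original number.
assert len({m for _, m in pairs}) == N
assert all(from_even(m) == n for n, m in pairs)

for n, m in pairs[:3]:
    print(f"{n} <-> {m}")  # 1 <-> 2, then 2 <-> 4, then 3 <-> 6
```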

This seems a valid demonstration of one-to-one correspondence. It looks like math, but is it? I can with equal validity show the two sets (natural numbers and even numbers) to have a 2-to-1 correspondence. Consider the following pairing. Set 1 on the left is the natural numbers. Set 2 on the right is the even numbers:

1 unpaired

2 <—> 2

3 unpaired

4 <—> 4

5 unpaired

…

2n -1 unpaired

2n <—> 2n
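The inclusion pairing above can be sketched the same way, again only on a finite prefix with an arbitrary cutoff `N` (an assumption for illustration): each even natural is paired with itself and each odd natural is left unpaired.

```python
# Illustrative sketch: pair each natural with itself if it is even,
# and leave it unpaired (None) if it is odd. N is an arbitrary cutoff.
N = 10
pairing = {n: (n if n % 2 == 0 else None) for n in range(1, N + 1)}

paired = [n for n, partner in pairing.items() if partner is not None]
unpaired = [n for n, partner in pairing.items() if partner is None]

print("paired:", paired)      # paired: [2, 4, 6, 8, 10]
print("unpaired:", unpaired)  # unpaired: [1, 3, 5, 7, 9]
```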

By removing all the unpaired (odd) elements from set 1, you can then pair each remaining member of set 1 with the identical element of set 2. It seems arguable that if a one-to-one correspondence exists between part of set 1 and all of set 2, the two whole sets cannot support a 1-to-1 correspondence. By inspection, the set of even numbers is included within the set of natural numbers and obviously not coextensive with it. Therefore Cantor’s argument, based solely on correspondence, works only by promoting one concept – the pairing of terms – while suppressing an equally obvious concept, that of inclusion. Cantor indirectly dismisses this argument against set correspondence by allowing that a set and a proper subset of it can be the same size. That allowance is not math; it is metaphysics.

Digging a bit deeper, Cantor’s use of the 1-to-1 concept (often called bijection) is heavy-handed. It requires that such correspondence be established by starting with sets having their members placed in increasing order. Then it requires the first members of each set to be paired with one another, and so on. There is nothing particularly natural about this way of doing things. It got Cantor into enough of a logical corner that he had to revise the concepts of cardinality and ordinality with special, problematic definitions.

Gottlob Frege and Bertrand Russell later patched up Cantor’s definitions. The notion of equipollent sets fell out of this work, along with complications still later addressed by von Neumann and Tarski. Moreover, it seems to me that Cantor implies – but fails to state outright – that the existence of a simultaneous 2-to-1 correspondence (i.e., group each 2n-1 and 2n in set 1 with each 2n in set 2 to get a 2-to-1 correspondence between the two sets) does no damage to the claim that 1-to-1 correspondence between the two sets makes them equal in size. In other words, Cantor helped himself to an unnaturally restrictive interpretation (i.e., a matter of language, not of math) of 1-to-1 correspondence that favored his agenda. Cantor also slips a broader meaning of equality on us than the strict numerical equality used in the rest of math. This is a sleight of hand. Further, his usage of the term – and concept of – size requires a special definition.
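The 2-to-1 grouping described above (each odd number 2n-1 and its successor 2n in set 1 grouped with 2n in set 2) can be sketched as follows. The cutoff `n_max` and the function name are hypothetical, and as before a finite prefix only illustrates the rule.

```python
# Illustrative sketch: group 2n-1 and 2n (naturals) with 2n (evens), for n = 1..n_max.

def two_to_one(n_max: int) -> dict[int, tuple[int, int]]:
    """Map each even number 2n to the pair of naturals (2n-1, 2n)."""
    return {2 * n: (2 * n - 1, 2 * n) for n in range(1, n_max + 1)}


groups = two_to_one(5)
print(groups)  # {2: (1, 2), 4: (3, 4), 6: (5, 6), 8: (7, 8), 10: (9, 10)}

# Every natural from 1 to 2 * n_max appears in exactly one group.
covered = sorted(k for pair in groups.values() for k in pair)
assert covered == list(range(1, 11))
```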

Cantor’s rule set for the pairing of terms and his special definitions are perfectly valid axioms for a mathematical system, but there is nothing within mathematics that justifies these axioms. Believing that the consequences of a system or theory justify its postulates is exactly the same as believing that the usefulness of Euclidean geometry justifies Euclid’s fifth postulate. Euclid knew this wasn’t so, and Proclus tells us Euclid wasn’t alone in that view.

Galileo seems to have had a more grounded sense of the infinite than did Cantor. For Galileo, the concrete concept of mathematical equality does not reconcile with the abstract concept of infinity. Galileo thought concepts like similarity, countability, size, and equality just don’t apply to the infinite. Did the development of calculus create an unwarranted acceptance of infinity as a mathematical entity? Does our understanding that things can approach infinity justify allowing infinities to be measured and compared?

Cantor’s model of infinity is interesting and useful, but it is a shame that it’s taught as being a matter of fact, e.g., “infinity comes in infinitely many different sizes – a fact discovered by Georg Cantor” (Science News, Jan 8, 2008).

On countable infinity we might consider WVO Quine’s position that the line between analytic (a priori) and synthetic (about the world) statements is blurry, and that no claim is immune to empirical falsification. In that light I’d argue that the above demonstration of inequality of the sets of natural and even numbers (inclusion of one within the other) trumps the demonstration of equal size by correspondence.

Mathematicians who state the equal-size concept as a fact discovered by Cantor have overstepped the boundaries of their discipline. Galileo regarded the natural-even set problem as a true paradox. I agree. Does Cantor really resolve this paradox or is he merely manipulating language?

Paul Feyerabend, The Worst Enemy of Science

Posted by Bill Storage in History of Science, Philosophy of Science on December 10, 2019

“How easy it is to lead people by the nose in a rational way.”

A similarly named post I wrote on Paul Feyerabend seven years ago turned out to be my most popular post by far. Seeing it referenced in a few places has made me cringe, and made me face the fact that I failed to make my point. I’ll try to correct that here. I don’t remotely agree with the paper in Nature that called Feyerabend the worst enemy of science, nor do I side with the postmodernists that idolize him. I do find him to be one of the most provocative thinkers of the 20th century, brash, brilliant, and sometimes full of crap.

Feyerabend opened his profound Against Method by telling us to always remember that what he writes in the book does not reflect any deep convictions of his, but that his arguments “merely show how easy it is to lead people by the nose in a rational way.” I.e., he was telling us more what he thought we needed to hear than what he necessarily believed. In his autobiography he wrote that for Against Method he had used older material but had “replaced moderate passages with more outrageous ones.” Those using and abusing Feyerabend today have certainly forgotten what this provocateur, who called himself an entertainer, told us always to remember about him in his writings.

Any who think Feyerabend frivolous should examine the scientific rigor in his analysis of Galileo’s work. Any who find him to be an enemy of science should actually read Against Method instead of reading about him, as quotes pulled from it can be highly misleading as to his intent. My communications with some of his friends after he died in 1994 suggest that while he initially enjoyed ruffling so many feathers with Against Method, he became angered and ultimately depressed over both critical reactions against it and some of the audiences that made weapons of it. In 1991 he wrote, “I often wished I had never written that fucking book.”

I encountered Against Method searching through a library’s card catalog seeking an authority on the scientific method. I learned from Feyerabend that no set of methodological rules fits the great advances and discoveries in science. It’s obvious once you think about it. Pick a specific scientific method – say the hypothetico-deductive model – or any set of rules, and Feyerabend will name a scientific discovery that would not have occurred had the scientist, from Galileo to Feynman, followed that method, or any other.

Part of Feyerabend’s program was to challenge the positivist notion that in real science, empiricism trumps theory. Galileo’s genius, for Feyerabend, was allowing theory to dominate observation. In his Dialogue, Galileo wrote:

Nor can I ever sufficiently admire the outstanding acumen of those who have taken hold of this opinion and accepted it as true: they have, through sheer force of intellect, done such violence to their own senses as to prefer what reason told them over that which sensible experience plainly showed them to be the contrary.

For Feyerabend, against Popper and the logical positivists of the mid 1900’s, Galileo’s case exemplified a need to grant theory priority over evidence. This didn’t sit well with the empiricist leanings of the post-war western world. It didn’t sit well with most scientists or philosophers. Sociologists and literature departments loved it. It became fuel for the fire of relativism sweeping America in the 70’s and 80’s and for the 1990’s social constructivists eager to demote science to just another literary genre.

But in context, and in the spheres for which Against Method was written, many people, including Feyerabend’s peers from 1970 Berkeley with whom I’ve had many conversations on the topic, conclude that the book’s goading style was a typical Feyerabendian corrective provocation to that era’s positivistic dogma.

Feyerabend distrusts the orthodox social practices of what Thomas Kuhn termed “normal science” – what scientific institutions do in their laboratories. Unlike his friend Imre Lakatos, Feyerabend distrusts any rule-based scientific method at all. Instead, Feyerabend praises scientific innovation and individual creativity. For Feyerabend, science in the mid 1900’s had fallen prey to the “tyranny of tightly-knit, highly corroborated, and gracelessly presented theoretical systems.” What would he say if alive today?

As with everything in the philosophy of science in the late 20th century, some of the disagreement between Feyerabend, Kuhn, Popper and Lakatos revolved around miscommunication and sloppy use of language. The best known case of this was Kuhn’s inconsistent use of the term paradigm. But they all (perhaps least so Lakatos) talked past each other by failing to distinguish among the different meanings of the word science, including:

- An approach or set of rules and methods for inquiry about nature

- A body of knowledge about nature

- An institution, culture or community of scientists, including academic, government and corporate

Kuhn and Feyerabend in particular vacillated between meaning science as a set of methods and science as an institution. Feyerabend certainly was referring to an institution when he said that science was a threat to democracy and that there must be “a separation of state and science just as there is a separation between state and religious institutions.” Along these lines Feyerabend thought that modern institutional science resembles more the church of Galileo’s day than it resembles Galileo.

On the matter of state control of science, Feyerabend went further than Eisenhower did in his “military industrial complex” speech, even with the understanding that what Eisenhower was describing was a military-academic-industrial complex. Eisenhower worried that a government contract with a university “becomes virtually a substitute for intellectual curiosity.” Feyerabend took this worry further, writing that university research requires conforming to orthodoxy and “a willingness to subordinate one’s ideas to those of a team leader.” Feyerabend disparaged Kuhn’s normal science as dogmatic drudgery that stifles scientific creativity.

A second area of apparent miscommunication about the history/philosophy of science in the mid 1900’s was the descriptive/normative distinction. John Heilbron, who was Kuhn’s grad student when Kuhn wrote The Structure of Scientific Revolutions, told me that Kuhn absolutely despised Popper, not merely as a professional rival. Kuhn wanted to destroy Popper’s notion that scientists discard theories on finding disconfirming evidence. But Popper was describing ideally performed science; his intent was clearly normative. Kuhn’s work, said Heilbron (who doesn’t share my admiration for Feyerabend), was intended as normative only for historians of science, not for scientists. True, Kuhn felt that it was pointless to try to distinguish the “is” from the “ought” in science, but this does not mean he thought they were the same thing.

As with Kuhn’s use of paradigm, Feyerabend’s use of the term science risks equivocation. He drifts between methodology and institution to suit the needs of his argument. At times he seems to build a straw man of science in which science insists it creates facts as opposed to building models. Then again, on this matter (fact/truth vs. models as the claims of science) he seems to be more right about the science of 2019 than he was about the science of 1975.

While heavily indebted to Popper, Feyerabend, like Kuhn, grew hostile to Popper’s ideas of demarcation and falsification: “let us look at the standards of the Popperian school, which are still being taken seriously in the more backward regions of knowledge.” He eventually expanded his criticism of Popper’s idea of theory falsification to a categorical rejection of Popper’s demarcation theories and of Popper’s critical rationalism in general. Now from the perspective of half a century later, a good bit of the tension between Popper and both Feyerabend and Kuhn and between Kuhn and Feyerabend seems to have been largely semantic.

For me, Feyerabend seems most relevant today through his examination of science as a threat to democracy. He now seems right in ways that even he didn’t anticipate. He thought it a threat mostly in that science (as an institution) held complete control over what is deemed scientifically important for society. In contrast, people, as individuals or small competing groups, have historically chosen what counts as being socially valuable. In this sense science bullied the citizen, thought Feyerabend. Today I think we see a more extreme example of bullying, in the case of global warming for example, in which government and institutionalized scientists are deciding not only what is important as a scientific agenda but what is important as energy policy and social agenda. Likewise, neuroscience tends to get too much of the spotlight in the complex social question of how primary education should be conducted. One recalls Lakatos’ concern about Kuhn’s confidence in the authority of “communities.” Lakatos had been imprisoned by Hungary’s Communist regime for revisionism. Through that experience he saw Kuhn’s “assent of the relevant community” as not much of a virtue if that community has excessive political power and demands that individual scientists subordinate their ideas to it.

As a tiny tribute to Feyerabend, whose quotes, as I’ve noted, call for caution when removed from their context, I’ll honor his provocative spirit by listing some of my favorite quotes, removed from context, to invite misinterpretation and misappropriation.

“The similarities between science and myth are indeed astonishing.”

“The church at the time of Galileo was much more faithful to reason than Galileo himself, and also took into consideration the ethical and social consequences of Galileo’s doctrine. Its verdict against Galileo was rational and just, and revisionism can be legitimized solely for motives of political opportunism.”

“All methodologies have their limitations and the only ‘rule’ that survives is ‘anything goes’.”

“Revolutions have transformed not only the practices their initiators wanted to change but the very principles by means of which… they carried out the change.”

“Kuhn’s masterpiece played a decisive role. It led to new ideas. Unfortunately it also led to lots of trash.”

“First-world science is one science among many.”

“Progress has always been achieved by probing well-entrenched and well-founded forms of life with unpopular and unfounded values. This is how man gradually freed himself from fear and from the tyranny of unexamined systems.”

“Research in large institutes is not guided by Truth and Reason but by the most rewarding fashion, and the great minds of today increasingly turn to where the money is — which means military matters.”

“The separation of state and church must be complemented by the separation of state and science, that most recent, most aggressive, and most dogmatic religious institution.”

“Without a constant misuse of language, there cannot be any discovery, any progress.”

__________________

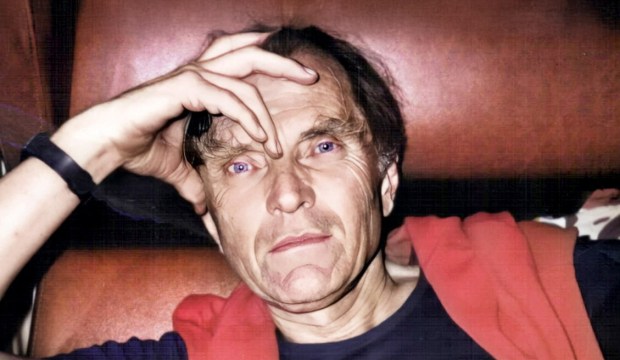

Photos of Paul Feyerabend courtesy of Grazia Borrini-Feyerabend

Yes, Greta, let’s listen to the scientists

Posted by Bill Storage in Philosophy of Science, Sustainable Energy on September 22, 2019

Young people around the world protested for climate action last week. 16-year-old Greta Thunberg implored Congress to “listen to the scientists” about climate change and fix it so her generation can thrive.

OK, let’s listen to them, and assume for sake of argument that we understand “them” to be a large majority of all relevant scientists, and that they say with one voice that humans are materially affecting climate. And let’s take the IPCC’s projections for a 3.4 to 4.8 °C rise by 2100 in the absence of policy changes. While activists and politicians report that scientific consensus exists, some reputable scientists dispute this. But for sake of discussion assume such consensus exists.

That temperature rise, scientists tell us, would change sea levels and would warm cold regions more than warm regions. “Existential crisis,” said Elizabeth Warren on Tuesday. Would that in fact pose an existential threat? I.e., would it cause human extinction? That question probably falls much more in the realm of engineering than of science. But let’s assume Greta might promote (or demote, depending on whether you prefer expert generalists to expert specialists) engineers to the rank of scientists.

The World Bank 4 Degrees – Turn Down the Heat report is often cited as concluding that uncontrolled human climate impact threatens the human race. It does not. It describes food production risk in Sub-Saharan Africa and water scarcity and coastal productivity risk in Southeast Asia. It speaks of wakeup-calls and tipping points, and, lacking the ability to quantify risks, assumes several worst imaginable cases of cascade effects, while rejecting all possibility that innovation and engineering can, for example, mitigate water scarcity problems before they result in health problems. The language and methodology of this report are much closer to the realm of sociology than to that of people we usually call scientists. Should sociology count as science or as philosophy and ethics? I think the latter, and I think the World Bank’s analysis reeks of value-laden theory and theory-laden observations. But for sake of argument let’s grant that climate Armageddon, true danger to survival of the race, is inevitable without major change.

Now, given this impending existential crisis, what can the voice of scientists do for us? Those schooled in philosophy, ethics, and the soft sciences might recall the is-ought problem, also known as Hume’s Guillotine, in honor of the first writer to make a big deal of it. The gist of the problem, closely tied to the naturalistic fallacy, is that facts about the world do not and cannot by themselves entail value judgments. And this holds regardless of whether you conclude that moral truths do or don’t exist. “The rules of morality are not conclusions of our reason,” observed Hume. For a more modern philosophical take on this issue see Simon Blackburn’s Ethics.

Strong statements on the non-superiority of scientists as advisers outside their realm come from scientists like Richard Feynman and Wilfred Trotter (see below).

But let’s assume, for sake of argument, that scientists are the people who can deliver us from climate Armageddon. Put them on a pedestal, like young Greta does. Throw scientism caution to the wind. I believe scientists probably do have more sensible views on the matter than do activists. But if we’re going to do this – put scientists at the helm – we should, as Greta says, listen to those scientists. That means the scientists, not the second-hand dealers in science – the humanities professors, pandering politicians, and journalists with agendas, who have, as Hayek phrased it, absorbed rumors in the corridors of science and appointed themselves as spokesmen for science.

What are these scientists telling us to do about climate change? If you think they’re advising us to equate renewables with green, as young protesters have been taught to do, then you’re listening not to the scientists but to second-hand dealers of misinformed ideology who do not speak for science. How many scientists think that renewables – at any scale that can put a real dent in fossil fuel use – are anything remotely close to green? What scientist thinks utility-scale energy storage can be protested and legislated into existence by 2030? How many scientists think uranium is a fossil fuel?

The greens, whose plans for energy are not remotely green, have set things up so that sincere but uninformed young people like Greta have only one choice – to equate climate change mitigation with what they call renewable energy. Even under Mark Jacobson’s grossly exaggerated claims about the efficiency and feasibility of electricity generation from renewables, Greta and her generation would shudder at the environmental devastation a renewables-only energy plan would yield.

Where is the green cry for people like Greta to learn science and engineering so they can contribute to building the world they want to live in? “Why should we study for a future that is being taken away from us?” asked Greta. One good reason 16-year-olds might do this is that in 2025 they can have an engineering degree and do real work on energy generation and distribution. Climate Armageddon will not happen by 2025.

I feel for Greta, for she’s been made a stage prop in an education-system and political drama that keeps young people ignorant of science and engineering, ensures they receive filtered facts from specific “trustworthy” sources, and keeps them emotionally and politically charged – to buy their votes, to destroy capitalism, to rework political systems along a facile Marxist ideology, to push for open borders and world government, or whatever the reason kids like her are politically preyed upon.

If the greens really believed that climate Armageddon were imminent (combined with the fact that the net renewable contribution to world energy is still less than 1%), they might consider the possibility that gas is far better than coal in the short run, and that nuclear risks are small compared to the human extinction they are absolutely certain is upon us. If the greens’ real concern was energy and the environment, they would encourage Greta to listen to scientists like Nobel laureate in physics Ivar Giaever, who says climate alarmism is garbage, and then to identify the points on which Giaever is wrong. That’s what real scientists do.

But it isn’t about that, is it? It’s not really about science or even about climate. As Saikat Chakrabarti, former chief of staff for Ocasio-Cortez, admitted: “the interesting thing about the Green New Deal is it wasn’t originally a climate thing at all.” “Because we really think of it as a how-do-you-change-the-entire-economy thing,” he added. To be clear, Greta did not endorse the Green New Deal, but she is their pawn.

Frightened, indoctrinated, science-ignorant kids are really easy to manipulate and exploit. Religions – particularly those that silence dissenters, brand heretics, and preach with righteous indignation of apocalypses that fail to happen – have long understood this. The green religion understands it too.

Go back to school, kids. You can’t protest your way to science. Learn physics, not social studies – if you can – because most of your teachers are puppets and fools. Learn to decide for yourself who you will listen to.


I believe that a scientist looking at nonscientific problems is just as dumb as the next guy — and when he talks about a nonscientific matter, he will sound as naive as anyone untrained in the matter. – Richard Feynman, The Value of Science, 1955.

Nothing is more flatly contradicted by experience than the belief that a man, distinguished in one of the departments of science is more likely to think sensibly about ordinary affairs than anyone else. – Wilfred Trotter, Has the Intellect a Function?, 1941

Physics for Venture Capitalists

Posted by Bill Storage in Innovation management, Philosophy of Science on August 11, 2019

VCs stress that they’re not in the business of evaluating technology. Few failures of startups are due to bad tech. Leo Polovets at Susa Ventures says technical diligence is a waste of time because few startups have significant technical risk. Success hinges on knowing customers’ needs, efficiently addressing those needs, hiring well, minding customer acquisition, and having a clue about management and governance.

In the dot-com era, I did tech diligence for Internet Capital Group. They invested in everything I said no to. Every one of those startups failed, likely for business management reasons. Had bad management not killed them, their bad tech would have in many cases. Are things different now?

Polovets is surely right in the domain of software. But hardware is making a comeback, even in Silicon Valley. A key difference between diligence on hardware and software startups is that software technology barely relies on the laws of nature. Hardware does. Hardware is dependent on science in a way software isn’t.

Silicon Valley’s love affairs with innovation and design thinking (the former being a retrospective judgement after market success, the latter mostly marketing jargon) lead tech enthusiasts and investors to believe that we can do anything given enough creativity. Creativity can in fact come up with new laws of nature. Isaac Newton and Albert Einstein did it. Their creativity was different in kind from that of the Wright Brothers and Elon Musk. Those innovators don’t change laws of nature; they are very tightly bound by them.

You see the impact of innovation overdose in responses to any caution about overoptimism in technology. Warp drive has to be real, right? It was already imagined back when William Shatner could do somersaults.

When the Solar Impulse aircraft achieved 400 miles non-stop, enthusiasts demanded solar passenger planes. Solar Impulse has the wingspan of an A380 (800 passengers) but weighs less than my car. When the Washington Post made the mildly understated point that solar powered planes were a long way from carrying passengers, an indignant reader scorned their pessimism: “I can see the WP headline from 1903: ‘Wright Flyer still a long way from carrying passengers’. Nothing like a good dose of negativity.”

Another reader responded, noting that theoretical limits would give a large airliner coated with cells maybe 30 kilowatts of sun power, but it takes about 100 megawatts to get off the runway. Another enthusiast, clearly innocent of physics, said he disagreed with this answer because it addressed current technology and “best case.” Here we see a disconnect between two understandings of best case, one pointing to hard limits imposed by nature, the other to soft limits imposed by manufacturing and limits of current engineering know-how.
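The gap between those two best cases is simple arithmetic. The sketch below just divides the two figures quoted in the comment thread (about 30 kW available, about 100 MW needed); it takes those numbers as given rather than deriving them.

```python
import math

# Figures as quoted by the commenter, taken as given, not derived here.
solar_power_w = 30e3      # ~30 kW from cells covering a large airliner
takeoff_power_w = 100e6   # ~100 MW needed to get off the runway

shortfall = takeoff_power_w / solar_power_w
print(f"Takeoff needs about {shortfall:,.0f} times the available solar power")
print(f"That is about {math.log10(shortfall):.1f} orders of magnitude")
```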

What’s a law of nature?

Law of nature doesn’t have a tight definition. But in science it usually means generalities drawn from a very large body of evidence. Laws in this sense must be universal, omnipotent, and absolute – true everywhere for all time, no exceptions. Laws of nature don’t happen to be true; they have to be true (see footnote*). They are true in both main philosophical senses of “true”: correspondence and coherence. To the best of our ability, they correspond with reality from a god’s-eye perspective; and they cohere, in the sense that each gets along with every other law of nature, allowing a coherent picture of how the universe works. The laws are interdependent.

Now we’ve gotten laws wrong in the past, so our current laws may someday be overturned too. But such scientific disruptions are rare indeed – a big one in 1687 (Newton) and another in 1905 (Einstein). Lesser laws rely on – and are consistent with – greater ones. The laws of physics erect barriers to engineering advancement. Betting on new laws of physics – as cold fusion and free-energy investors have done – is a very long shot.

As an example of what flows from laws of nature, most gasoline engines (Otto cycle) have a top theoretical efficiency of about 47%. No innovative engineering prowess can do better. Material and temperature limitations reduce that further. All metals melt at some temperature, and laws of physics tell us we’ll find no new stable elements for building engines – even in distant galaxies. Moore’s law, by the way, is not in any sense a law in the way laws of nature are laws.
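For the ideal (air-standard) Otto cycle, thermal efficiency depends only on the compression ratio r and the gas’s heat-capacity ratio γ: η = 1 − 1/r^(γ−1). The post doesn’t say which r and γ lie behind its 47% figure, so the values below are assumptions for illustration; with r = 8 and γ = 1.3 the formula gives roughly 46%, and the textbook cold-air value γ = 1.4 gives higher numbers. As the post notes, material and temperature limits reduce real-world figures further.

```python
def otto_efficiency(r: float, gamma: float = 1.4) -> float:
    """Ideal air-standard Otto-cycle thermal efficiency: 1 - r**(1 - gamma).

    r     -- compression ratio (must be > 1)
    gamma -- heat-capacity ratio cp/cv of the working gas
    """
    if r <= 1:
        raise ValueError("compression ratio must exceed 1")
    return 1 - r ** (1 - gamma)


# Assumed, illustrative combinations of compression ratio and gamma.
for r, gamma in [(8, 1.3), (10, 1.3), (10, 1.4)]:
    print(f"r={r:>2}, gamma={gamma}: {otto_efficiency(r, gamma):.1%}")
```

Note that no choice of r makes the ideal efficiency reach 100%, and raising r runs into knock and material limits long before it approaches that bound.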

The Betz limit tells us that no windmill will ever convert more than 59.3% of the wind’s kinetic energy into electricity – not here, not on Jupiter, not with curvy carbon nanotube blades, not coated with dilithium crystals. This limit doesn’t come from measurement; it comes from deduction and the laws of nature. The Shockley-Queisser limit tells us no single-layer photovoltaic cell will ever convert more than 33.7% of the solar energy hitting it into electricity. Gaia be damned, but we’re stuck with physics, and physics trumps design thinking.
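The Betz figure follows from one-dimensional actuator-disk momentum theory: with axial induction factor a (the fractional slowdown of the wind at the rotor), the extracted fraction of the wind’s kinetic-energy flux is C_p = 4a(1 − a)², which peaks at a = 1/3 with C_p = 16/27 ≈ 59.3%. The sketch below finds that maximum by brute-force search rather than calculus; the grid step is an arbitrary choice.

```python
def power_coefficient(a: float) -> float:
    """Ideal rotor power coefficient from actuator-disk theory: Cp = 4a(1 - a)^2."""
    return 4 * a * (1 - a) ** 2


# Search a over [0, 0.5], the range usually considered for this simple theory.
grid = [i / 100_000 for i in range(50_001)]
best_a = max(grid, key=power_coefficient)
best_cp = power_coefficient(best_a)

print(f"best a  = {best_a:.4f}")
print(f"best Cp = {best_cp:.4f}  (exact Betz limit 16/27 = {16 / 27:.4f})")
```

No blade material or shape appears anywhere in the calculation, which is why the limit holds for any rotor.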

So while funding would grind to a halt if investors dove into the details of pn-junctions in chalcopyrite semiconductors, they probably should be cautious of startups that, as judged by a Physics 101 student, are found to flout any fundamental laws of nature. That is, unless they’re fixing to jump in early, ride the hype cycle to the peak of expectation, and then bail out before the other investors catch on. They’d never do that, right?

Solyndra’s sales figures

In Solyndra’s abundant autopsies we read that those crooks duped the DoE about sales volume and profits. An instant Wall Street darling, Solyndra was named one of the 50 most innovative companies by Technology Review. Later, the Solyndra scandal coverage never mentioned that the idea of cylindrical containers of photovoltaic cells with spaces between them was a dubious means of maximizing incident rays. Yes, some cells in a properly arranged array of tubes would always be perpendicular to the sun (duh), but the cells within, say, 30 degrees of perpendicular to the sun necessarily (not even physics, just geometry) cover only one sixth of the tube’s surface (2 × 30 / 360). The fact that the roof-facing part of the tubes catches some reflected light relies on there being space between the tubes, space that obviously isn’t catching those photons directly. A two-layer tube grabs a few more stray photons, but… Sure, the DoE should have been more suspicious of Solyndra’s bogus bookkeeping; but there’s another lesson in this $2B Silicon Valley sinkhole. Their tech was bullshit.
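The one-sixth figure, and a cosine-weighted version of the same geometry, can be checked numerically. The sketch assumes direct sunlight arriving perpendicular to the tube’s axis and ignores reflected and diffuse light. The cosine weighting is a standard projected-area argument added here, not part of the post’s arithmetic: averaged over the whole circumference, a tube intercepts 1/π ≈ 32% as much direct light per unit of cell area as a flat panel facing the sun.

```python
import math


def fraction_within(theta_deg: float) -> float:
    """Fraction of a tube's circumference whose surface normal is within
    +/- theta_deg of the direction to the sun."""
    return 2 * theta_deg / 360


def mean_cosine_factor(steps: int = 100_000) -> float:
    """Average of max(cos(phi), 0) around the circumference (midpoint rule).

    A sun-facing flat panel scores 1.0 on this measure; the result is the
    tube's direct-light capture per unit of cell area relative to that panel.
    """
    total = 0.0
    for i in range(steps):
        phi = 2 * math.pi * (i + 0.5) / steps
        total += max(math.cos(phi), 0.0)
    return total / steps


print(f"within +/-30 degrees: {fraction_within(30):.3f} of the surface")
print(f"mean cosine factor:   {mean_cosine_factor():.4f} (1/pi = {1 / math.pi:.4f})")
```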

The story at Abound Solar was surprisingly similar, though more focused on bad engineering than bad science. Claims about energy, given a long history of swindlers, always warrant technical diligence. Upfront Ventures recently led a $20M B round for uBeam, maker of an ultrasonic charging system. Its high-frequency sound vibrations travel across the room to a receiver that can run your iPhone or, someday, as one presentation reported, your flat screen TV, from a distance of four meters. Mark Cuban and Marissa Mayer took the plunge.

Now we can’t totally rule out uBeam’s claims, but simple physics screams out a warning. High-frequency sound waves attenuate rapidly in air. And even if they didn’t, a point-source emitter (likely a good model for the uBeam transmitter) obeys the inverse-square law (see Johannes Kepler, 1596). At four meters, the signal is one sixteenth as strong as at one meter. Up close it would fry your brains. Maybe they track the target and focus a beam on it (sounds expensive). But in any case, sound-pressure-level regulations limit transmitter strength. It’s hard to imagine extracting more than a watt or so from across the room. Had Upfront hired a college kid for a few days, they might have spent more wisely and spared uBeam’s CEO the embarrassment of stepping down last summer after missing every target.
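The inverse-square arithmetic can be sketched as follows. This assumes an isotropic point source, no absorption in air (which would only make matters worse), and a hypothetical 10 cm × 10 cm receiver facing the source. None of these figures come from uBeam. Under those assumptions, a receiver at distance r intercepts only A/(4πr²) of the emitted power.

```python
import math

def intercepted_fraction(receiver_area_m2: float, distance_m: float) -> float:
    """Share of an isotropic point source's power landing on a small receiver
    facing the source: receiver area over the area of the sphere at that radius."""
    return receiver_area_m2 / (4 * math.pi * distance_m ** 2)

RECEIVER_AREA = 0.01   # hypothetical 10 cm x 10 cm receiver, m^2
TARGET_WATTS = 1.0     # power wanted at the receiver, W

for r in (1.0, 4.0):
    frac = intercepted_fraction(RECEIVER_AREA, r)
    print(f"r = {r} m: fraction {frac:.2e}, source power needed {TARGET_WATTS / frac:,.0f} W")

# Going from 1 m to 4 m divides the received intensity by (4/1)**2.
drop = intercepted_fraction(RECEIVER_AREA, 1.0) / intercepted_fraction(RECEIVER_AREA, 4.0)
print(f"intensity ratio, 1 m vs 4 m: {drop:.1f}")
```

A focused, steered beam would change the geometry, which is why the paragraph’s “maybe they track the target” caveat matters.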

Even b-school criticism of Theranos focuses on the firm’s culture of secrecy, Holmes’ poor management practices, and bad hiring, skirting the fact that every med student knew that a drop of blood doesn’t contain enough of the relevant cells to give accurate results.

Homework: Water don’t flow uphill

Now I’m not saying all VC, MBAs, and private equity folk should study much physics. But they should probably know as much physics as I know about convertible notes. They should know that laws of nature exist, and that diligence is due for bold science/technology claims. Start here:

Newton’s 2nd law:

- Roughly speaking, force = mass times acceleration. F = ma.

- Important for cars.

- Practical, though perhaps unintuitive, application: slow down on I-280 when it’s raining.
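To connect F = ma to the I-280 advice: if tire friction supplies the braking force, F = μmg, so the deceleration is a = μg (the mass cancels) and the stopping distance is v²/(2μg). The friction coefficients below are rough, assumed values for dry and wet pavement, not measurements, and the sketch ignores reaction time.

```python
MPH_TO_MS = 0.44704   # 1 mile per hour in metres per second (exact by definition)
G = 9.81              # gravitational acceleration, m/s^2

def braking_distance_m(speed_mph: float, mu: float) -> float:
    """Stopping distance when friction provides the braking force:
    F = mu*m*g = m*a, so a = mu*g and d = v**2 / (2*a). Reaction time ignored."""
    v = speed_mph * MPH_TO_MS
    a = mu * G
    return v ** 2 / (2 * a)

MU_DRY, MU_WET = 0.7, 0.4   # assumed, illustrative tire-road friction coefficients

for label, mu in (("dry", MU_DRY), ("wet", MU_WET)):
    print(f"{label}: about {braking_distance_m(65, mu):.0f} m to stop from 65 mph")
```

Because distance scales as 1/μ, any drop in friction lengthens the stop proportionally, whatever the exact coefficients are.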

2nd Law of Thermodynamics:

- The entropy of an isolated system always increases in any real process. No real process is thermodynamically reversible. More understandable versions came from Lord Kelvin and Rudolf Clausius.

- Kelvin: You can’t get any mechanical effect from anything by cooling it below the temperature of its surroundings.

- Clausius: Without adding energy, heat can never pass from a cold thing to a hot thing.

- Practical application: in an insulated room, leaving the refrigerator door open will raise the room’s temperature.

- American frontier version (Locomotive Engineering Vol XXII, 1899): “Water don’t flow uphill.”
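The refrigerator example is just energy bookkeeping. With the door open in a sealed, insulated room, the fridge pulls heat Q_c out of the room air and dumps Q_c + W back into the same room through its coils, so the room gains exactly the compressor work W. The figures below are assumptions: a 150 W compressor running for an hour, a coefficient of performance of 2.5, and a 4 m × 4 m × 2.5 m room. The sketch heats only the air, so it overstates the temperature rise; walls and furniture would soak up much of the heat.

```python
AIR_DENSITY = 1.2      # kg/m^3, approximate, near room temperature
AIR_CP = 1005.0        # J/(kg*K), approximate specific heat of air at constant pressure

def net_heat_to_room(compressor_watts: float, seconds: float, cop: float) -> float:
    """Net heat gained by the room with the fridge door open."""
    work = compressor_watts * seconds
    q_cold = cop * work            # heat pulled from the room through the open door
    q_hot = q_cold + work          # heat rejected into the same room (first law)
    return q_hot - q_cold          # equals the work input, whatever the COP

heat = net_heat_to_room(150.0, 3600.0, cop=2.5)   # assumed 150 W for one hour
air_mass = AIR_DENSITY * 4 * 4 * 2.5              # assumed 40 m^3 of air
print(f"net heat: {heat / 1000:.0f} kJ, "
      f"air-only temperature rise: {heat / (air_mass * AIR_CP):.1f} K")
```

The COP drops out entirely. That is the Clausius point in miniature: moving heat around inside the room costs work, and the work ends up as heat.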

_ __________ _

“If someone points out to you that your pet theory of the universe is in disagreement with Maxwell’s equations – then so much the worse for Maxwell’s equations. If it is found to be contradicted by observation – well, these experimentalists do bungle things sometimes. But if your theory is found to be against the Second Law of Thermodynamics I can give you no hope; there is nothing for it but to collapse in deepest humiliation.” – Arthur Eddington

*footnote: Critics might point out that the distinction between laws of physics (must be true) and mere facts (happen to be true) of physics seems vague, and that this vagueness robs any real meaning from the concept of laws of physics. Who decides what has to be true instead of what happens to be true? “All copper in the universe conducts electricity” seems like a law. “All trees in my yard are oak” does not. How arrogant was Newton to move from observing that F = ma in our little solar system to his proclamation that force equals mass times acceleration in all possible worlds. All laws of science (and all scientific progress) seem to rely on the logical fallacy of affirming the consequent. This wasn’t lost on the ancient anti-sophist Greeks (Plato), the cleverest of the early Christian converts (Saint Jerome) and perceptive postmodernists (Derrida). David Hume’s 1739 A Treatise of Human Nature methodically destroyed the idea that there is any rational basis for the kind of inductive inference on which science is based. But… Hume was no relativist or nihilist. He appears to hold, as Plato did in Theaetetus, that global relativism is self-undermining. In 1951, WVO Quine eloquently exposed the logical flaws of scientific thinking in Two Dogmas of Empiricism, finding real problems with distinctions between truths grounded in meaning and truths grounded in fact. Unpacking that a bit, Quine would say that it is pointless to ask whether F = ma is a law of nature or just a deep empirical observation. He showed that we can combine two statements that appear to be laws in a way that yields a statement that has to be merely a fact. Finally, from Thomas Kuhn’s perspective, deciding which generalized observation becomes a law is entirely a social process. Postmodernist and Strong Program adherents then note that this process is governed by local community norms.
Cultural relativism follows, and ultimately decays into pure subjectivism: each of us has facts that are true for us but not for each other. Scientists and engineers have found that relativism and subjectivism aren’t so useful for inventing vaccines and making airplanes fly. Despite the epistemological failings, laws of nature work pretty well, they say.

A Bayesian folly of J Richard Gott

Posted by Bill Storage in Philosophy of Science, Probability and Risk on July 30, 2019

Don’t get me wrong. J Richard Gott is one of the coolest people alive. Gott does astrophysics at Princeton and makes a good argument that time travel is indeed possible via cosmic strings. He’s likely way smarter than I, and he’s from down home. But I find big holes in his Copernicus Method, for which he first achieved fame.

Gott conceived his Copernicus Method for estimating the lifetime of any phenomenon when he visited the Berlin Wall in 1969. Wondering how long it would stand, Gott figured that, assuming there was nothing special about his visit, a best guess was that he happened upon the wall 50% of the way through its lifetime. Gott saw this as an application of the Copernican principle: nothing is special about our particular place (or time) in the universe. As Gott saw it, the wall would likely come down eight years later (1977), since it had been standing for eight years in 1969. That’s not exactly how Gott did the math, but it’s the gist of it.
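The Copernican argument is usually stated as an interval rather than a single date. Here is a sketch, assuming the moment of observation is a uniformly random fraction r of the total lifetime. The central interval a < r < b then has probability b - a, and the remaining lifetime is t_past·(1 - r)/r. The code computes the midpoint estimate plus 50% and 95% intervals for the wall. It takes 1961 as the construction year, so the wall was eight years old in 1969.

```python
def remaining_lifetime_interval(t_past: float, confidence: float) -> tuple[float, float]:
    """Copernican (delta-t) interval for the future duration, assuming the
    observation falls at a uniformly random fraction r of the total lifetime.
    The central interval a < r < b, with b - a = confidence, maps to
    t_future between t_past * (1 - b) / b and t_past * (1 - a) / a."""
    a = (1 - confidence) / 2
    b = 1 - a
    return t_past * (1 - b) / b, t_past * (1 - a) / a

WALL_BUILT, VISIT = 1961, 1969
age = VISIT - WALL_BUILT                      # 8 years old at Gott's visit

print("midpoint estimate: falls in", VISIT + age)   # r = 1/2 means t_future = t_past
for conf in (0.50, 0.95):
    lo, hi = remaining_lifetime_interval(age, conf)
    print(f"{conf:.0%}: falls between {VISIT + lo:.1f} and {VISIT + hi:.1f}")
```

The 50% interval runs from a third of the past age to three times it, which is why a single “eight more years” is only the middle of a wide spread.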

I have my doubts about the Copernican principle – in applications from cosmology to social theory – but that’s not my beef with Gott’s judgment of the wall. Had Gott thrown a blindfolded dart at a world map to select his travel destination I’d buy it. But anyone who woke up at the Berlin Wall in 1969 did not arrive there by a random process. The wall was certainly in the top 1000 interesting spots on earth in 1969. Chance alone didn’t lead him there. The wall was still news. Gott should have concluded that he saw the wall in the first half of its life, not at its midpoint.

Finding yourself at the grand opening of a Brooklyn pizza shop, it’s downright cruel to predict that it will last one more day. That’s a misapplication of the Copernican principle, unless you ended up there by rolling dice to pick the time you’d parachute in from the space station. More likely you saw Vini’s post on Facebook last night.

Gott’s calculation boils down to Bayes’ theorem applied to a power-law distribution with an uninformative prior expectation. That is, it assumes you have zero relevant knowledge. But from a Bayesian perspective, few situations warrant an uninformative prior. Surely he knew something of the wall and its peer group. Walls erected by totalitarian world powers tend to endure (Great Wall of China, Hadrian’s Wall, the Aurelian Wall), but mean wall age isn’t the key piece of information. The distribution of wall ages is. And though I don’t think he stated it explicitly, Gott clearly judged wall longevity to be scale-invariant. So the math is good, provided he had no knowledge of this particular wall in Berlin.
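One way to see the Bayesian reading is a sketch under the assumptions this paragraph attributes to Gott. Take a scale-invariant prior p(T) ∝ 1/T on the total lifetime T, and a likelihood of 1/T for arriving at any particular moment of a lifetime of length T. The posterior is then proportional to 1/T² for T above the observed age t, so P(T > x) = t/x. The posterior median total lifetime is therefore 2t, reproducing the Copernican midpoint. The sketch checks the closed form with a crude numerical integral and applies it both to the wall (8 years old in 1969) and to the Soviet Union (47 years old in 1969).

```python
import math

def posterior_survival(x: float, t_past: float) -> float:
    """P(total lifetime T > x | observed age t_past), assuming prior p(T) ~ 1/T
    and likelihood p(t_past | T) = 1/T for T > t_past. The posterior ~ 1/T**2,
    normalized on [t_past, inf), gives a survival function of t_past / x."""
    return 1.0 if x <= t_past else t_past / x

def numeric_survival(x: float, t_past: float, t_max: float = 1e6, n: int = 100_000) -> float:
    """Crude check: midpoint-rule integration of the unnormalized posterior
    1/T**2 on a log-spaced grid from t_past to t_max."""
    lo, hi = math.log(t_past), math.log(t_max)
    step = (hi - lo) / n
    total = above = 0.0
    for i in range(n):
        T = math.exp(lo + (i + 0.5) * step)
        weight = step / T            # (1/T**2) * dT, with dT = T * d(log T)
        total += weight
        if T > x:
            above += weight
    return above / total

for label, age in (("Berlin Wall", 8), ("USSR", 47)):
    median_total = 2 * age           # solves t_past / x = 1/2
    print(f"{label}: median end year {1969 + median_total - age}, "
          f"numeric P(T > median) = {numeric_survival(median_total, age):.3f}")
```

The heavy 1/T² tail is what makes “well after” the median a live possibility; informative knowledge about the regime would reshape the prior rather than this likelihood.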

But he did. He knew its provenance; it was Soviet. Believing the wall would last eight more years was the same as believing the Soviet Union would last eight more years. So without any prior expectation about the Soviet Union, Gott should have judged the wall would come down when the USSR came down. Running that question through the Copernican Method would have yielded the wall falling in the year 2016 (1969 + 47, the age of the USSR in 1969), not 1977. But unless Gott was less informed than most, his prior expectation about the Soviet Union wasn’t uninformative either. The regime showed no signs of weakening in 1969 and no one, including George Kennan, Richard Pipes, and Gorbachev’s pals, saw it coming. Given the power-law distribution, some time well after 2016 would have been a proper Bayesian credence.

With any prior knowledge at all, the Copernican principle does not apply. Gott’s prediction was off by only 12 years; the wall fell in 1989. He got lucky.

McKinsey’s Behavioral Science

Posted by Bill Storage in Management Science, Philosophy of Science on August 7, 2017

You might not think of McKinsey as being in the behavioral science business; but McKinsey thinks of themselves that way. They claim success in solving public sector problems, improving customer relationships, and kick-starting stalled negotiations through their mastery of neuro- and behavioral science. McKinsey’s Jennifer May et al. say their methodology is “built on an extensive review of neuroscience and behavioral literature from the past decade and is designed to distill the scientific insights most relevant for governments, not-for-profits, and business leaders.”

McKinsey is also active in the Change Management/Leadership Management realm, which usually involves organizational, occupational and industrial psychology based on behavioral science. Like most science, all this work presumably involves a good deal of iterating over hypothesis and evidence collection, with hypotheses continually revised in light of interpretations of evidence made possible by sound use of statistics.

Given that, and McKinsey’s phenomenal success at securing consulting gigs with the world’s biggest firms, you’d think McKinsey would uphold spotless epistemic values. A bit has been written about McKinsey’s ability to walk proud despite questionable ethics. In his 2013 book The Firm, Duff McDonald relates McKinsey’s role in creating Enron and sanctioning its accounting practices, its 2008 endorsement of banks funding their balance sheets with debt, and its promotion of securitizing sub-prime mortgages.

Epistemic and Scientific Values

I’m not talking about those kinds of values. I mean epistemic and scientific values. These are focused on how we acquire knowledge and what counts as data, fact, and information. They are concerned with accuracy, clarity, falsifiability, reliability, testability, and justification – all the things that separate science from pseudoscience.

McKinsey boldly employs the Myers Briggs Type Indicator both internally and externally. They do this despite decades of studies by prominent universities showing MBTI to be essentially worthless from the perspective of survey methodology and statistical analysis. The studies point out that there is no evidence for the bimodal distributions inherent in MBTI theory. They note that the standard error of measurement for MBTI’s dimensions is unacceptably large, and that its test/retest reliability is poor: even with retest intervals of five weeks, over half the subjects are reclassified. Analysis of MBTI data shows that its JP and SN scales strongly correlate with each other, which is undesirable. Meanwhile MBTI’s EI scale correlates with non-MBTI behavioral near-opposites. These findings impugn the basic structure of the Myers Briggs model. (The Big Five model does somewhat better in this realm.)
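Why would forced either/or types reclassify so many people on retest? Here is a sketch under loudly stated assumptions. Each of the four scales is a continuous, normally distributed score with a test-retest correlation ρ. The value 0.8 used here is hypothetical, not a published MBTI figure. Each letter comes from cutting the score at the midpoint, and the four scales are treated as independent, which the JP and SN scales, as noted above, are not. For two standard-normal scores with correlation ρ, the chance they land on the same side of the cut is 1/2 + arcsin(ρ)/π.

```python
import math
import random

def same_letter_prob(rho: float) -> float:
    """P(two correlated standard-normal scores share a sign): the chance a
    midpoint-dichotomized scale assigns the same letter on test and retest."""
    return 0.5 + math.asin(rho) / math.pi

def simulate_same_type(rho: float, scales: int = 4, people: int = 50_000, seed: int = 1) -> float:
    """Monte Carlo check: true score plus independent error at each sitting,
    with error variance chosen so the test-retest correlation equals rho."""
    rng = random.Random(seed)
    err_sd = math.sqrt((1 - rho) / rho)
    same = 0
    for _ in range(people):
        ok = True
        for _ in range(scales):
            true = rng.gauss(0, 1)
            first = true + rng.gauss(0, err_sd)
            second = true + rng.gauss(0, err_sd)
            ok = ok and ((first > 0) == (second > 0))
        same += ok
    return same / people

RHO = 0.8  # hypothetical per-scale test-retest correlation
p_type = same_letter_prob(RHO) ** 4
print(f"same letter per scale: {same_letter_prob(RHO):.3f}, same four-letter type: {p_type:.3f}")
print(f"simulated same type: {simulate_same_type(RHO):.3f}")
```

Even a respectable-sounding per-scale correlation leaves most people near a cut point, and compounding four coin-edge decisions pushes the chance of keeping the same type to roughly 40% under these assumptions.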

Five decades of studies show Myers-Briggs to be junk due to low evidential support. Did McKinsey mis-file those reports?

McKinsey’s Brussels director, Olivier Sibony, once expressed optimism about a nascent McKinsey collective decision framework, saying that while preliminary results were good, it still fell short of “a standard psychometric tool such as Myers–Briggs.” Who finds Myers-Briggs to be such a standard tool? Not psychologists or statisticians. Shouldn’t attachment to a psychological test rejected by psychologists, statisticians, and experiment designers offset – if not negate – retrospective judgments by consultancies like McKinsey (Bain is in there too) that MBTI worked for them?

Epistemic values guide us to ask questions like:

- What has been the model’s track record at predicting the outcome of future events?

- How would you know if it were working for you?

- What would count as evidence that it was not working?

On the first question, McKinsey may agree with Jeffrey Hayes (who says he’s an ENTP), CEO of CPP, owner of the Myers-Briggs® product, who dismisses criticism of MBTI by the many psychologists (thousands, writes Joseph Stromberg) who’ve deemed it useless. Hayes says, “It’s the world’s most popular personality assessment largely because people find it useful and empowering […] It is not, and was never intended to be predictive…”

Does Hayes’ explanation of MBTI’s popularity (people find it useful) defend its efficacy and value in business? It’s still less popular than horoscopes, which people find useful, so should McKinsey switch to the higher standards of astrology to characterize its employees and clients?

Granting Hayes, for sake of argument, that popular usage might count toward evidence of MBTI’s value (and likewise for astrology), what of his statement that MBTI never was intended to be predictive? Consider the plausibility of a model that is explanatory – perhaps merely descriptive – but not predictive. What role can such a model have in science?

Explanatory but not Predictive?

This question was pursued heavily by epistemologist Karl Popper (who also held a PhD in Psychology) in the mid 20th century. Most of us are at least vaguely familiar with his role in establishing scientific values. He is most famous for popularizing the notion of falsifiability. For Popper, a claim can’t be scientific if nothing can ever count as evidence against it. Popper is particularly relevant to the McKinsey/MBTI issue because he took great interest in the methods of psychology.

In his youth Popper followed Freud and Adler’s psychological theories, and Einstein’s physics. Popper began to see a great contrast between Einstein’s science and that of the psychologists. Einstein made bold predictions for which experiments (e.g. Eddington’s) could be designed to show the prediction wrong if the theory were wrong. In contrast, Freud and Adler were in the business of explaining things already observed. Contemporaries of Popper, Carl Hempel in particular, also noted that explanation and prediction should be two sides of the same coin. I.e., anything that can explain a phenomenon should be able to be used to predict it. This isn’t completely uncontroversial in science; but all agree prediction and explanation are closely related.

Popper observed that Freudians tended to find confirming evidence everywhere. Popper wrote:

Neither Freud nor Adler excludes any particular person’s acting in any particular way, whatever the outward circumstances. Whether a man sacrificed his life to rescue a drowning child (a case of sublimation) or whether he murdered the child by drowning him (a case of repression) could not possibly be predicted or excluded by Freud’s theory; the theory was compatible with everything that could happen. (emphasis in original – Replies to My Critics, 1974).

For Popper, Adler’s psychoanalytic theory was irrefutable, not because it was true, but because everything counted as evidence for it. On these grounds Popper thought pursuit of disconfirming evidence to be the primary goal of experimentation, not confirming evidence. Most hard science follows Popper on this value. A theory’s explanatory success is very little evidence of its worth. And combining Hempel with Popper yields the epistemic principle that even theories with predictive success have limited worth, unless those predictions are bold and can in principle be later found wrong. Horoscopes make countless correct predictions – like that we’ll encounter an old friend or narrowly escape an accident sometime in the indefinite future.

Popper brings to mind experiences where I challenged McKinsey consultants on reconciling observed behaviors and self-reported employee preferences with predictions – oh wait, explanations – given by Myers-Briggs. The invocation of sudden strengthening of otherwise mild J (Judging) in light of certain situational factors recalls Popper’s accusing Adler of being able to explain both aggression and submission as the consequence of childhood repression. What has priority – the personality theory or the observed behavior? Behavior fitting the model confirms it; and opposite behavior is deemed acting out of character. Sleight of hand saves the theory from evidence.

What’s the Attraction?

Many writers see Management Science as more drawn to theory and less to evidence (or counter-evidence) than is the case with the hard sciences – say, more Aristotelian and less Newtonian, more philosophical rationalism and less scientific empiricism. Allowing this possibility, let’s try to imagine what elements of Myers-Briggs theory McKinsey leaders find so compelling. The four dimensions of MBTI were, for the record, not based on evidence but on the speculation of Carl Jung. Nothing is wrong with theories based on a wild hunch, if they are borne out by evidence and they withstand falsification attempts. Since this isn’t the case with Myers-Briggs, as shown by the testing mentioned above, there must be something in it that attracts consultants.

I’ve struggled with this. The most charitable reading I can make of McKinsey’s use of MBTI is that they want a quick predictor (despite Hayes’ cagey caution against it) of a person’s behavior in collaborative exercises or collective-decision scenarios. They must therefore believe all of the following, since removing any of these from their web of belief renders their practice (re Myers-Briggs) arbitrary or ill-motivated:

- that MBTI is a reliable indicator of character and personality type

- that personality is immutable and not plastic

- that behavior in teams is mostly dependent on personality, not on training or education, not on group mores, and not on corporate rules and behavioral guides

Now that’s a dark assessment of humanity. And it conflicts with the last decade’s neuro- and behavioral science that McKinsey claims to have incorporated in its offerings. That science suggests our brains, our minds, and our behaviors are mutable, like our bodies. Few today doubt that personality is in some sense real, but the last few decades’ work suggests that it’s not made of concrete (for insiders, read this as Mischel having regained some ground lost to Kenrick and Funder). It suggests that who we are is somewhat situational. For thousands of years we relied on personality models that explained behaviors as consequences of personalities, which were in turn only discovered through observations of behaviors. For example, we invented types (like the 16 MBTIs) based on behaviors and preferences thought to be perfectly static.

Evidence against static trait theory appears as secondary details in recent neuro- and behavioral science work. Two come to mind from the last week – Carstensen and DeLiema’s work at Stanford on the fading of positivity bias with age, and research at the Max Planck Institute for Human Cognitive and Brain Sciences showing the interaction of social affect, cognition and empathy.

Much attention has been given to neuroplasticity in recent years. Sifting through the associated neuro-hype, we do find some clues. Meta-studies on efforts to pair personality traits with genetic markers have come up empty. Neuroscience suggests that the ancient distinction between states and traits is far more complex and fluid than Aristotle, Jung and Adler theorized them to be – without the benefit of scientific investigation, evidence, and sound data analysis. Even if the MBTI categories could map onto reality, they can’t do the work asked of them. McKinsey’s enduring reliance on MBTI has an air of folk psychology and is at odds with its claims of embracing science. This cannot be – to use a McKinsey phrase – directionally correct.

If personality overwhelmingly governs behavior as McKinsey’s use of MBTI would suggest, then Change Management is futile. If personality does not own behavior, why base your customer and employee interactions on it? If immutable personalities control behavior, change is impossible. Why would anyone buy Change Management advice from a group that doesn’t believe in change?

Was Thomas Kuhn Right about Anything?

Posted by Bill Storage in History of Science, Philosophy of Science on September 3, 2016

William Storage – 9/1/2016

Visiting Scholar, UC Berkeley History of Science

Fifty years ago Thomas Kuhn’s The Structure of Scientific Revolutions armed sociologists of science, constructionists, and truth-relativists with five decades of cliche about the political and social dimensions of theory choice and scientific progress’s inherent irrationality. Science has bias, cries the social-justice warrior. Despite actually being a scientist (or at least holding a PhD in physics from Harvard), Kuhn isn’t well received by scientists and science writers. They generally venture into history and philosophy of science as conceived by Karl Popper, the champion of the falsification model of scientific progress.

Kuhn saw Popper’s description of science as a self-congratulatory idealization for researchers. That is, no scientific theory is ever discarded on the first observation conflicting with the theory’s predictions. All theories have anomalous data. Dropping Newtonian gravitation because of anomalies in Mercury’s orbit was unthinkable, especially when, as Kuhn stressed, no better model was available at the time. Einstein said that if Eddington’s experiment had not shown bending of light rays around the sun, “I would have had to pity our dear Lord. The theory is correct all the same.”

Kuhn was wrong about a great many details. Despite the exaggeration of scientific detachment by Popper and the proponents of rational-reconstruction, Kuhn’s model of scientists’ dogmatic commitment to their theories is valid only in novel cases. Even the Copernican revolution is overstated. Once the telescope was in common use and the phases of Venus were confirmed, the philosophical edifices of geocentrism crumbled rapidly in natural philosophy. As Joachim Vadianus observed, seemingly predicting the scientific revolution, sometimes experience really can be demonstrative.

Kuhn seems to have cherry-picked historical cases of the gap between normal and revolutionary science. Some revolutions – DNA and the expanding universe for example – proceeded with no crisis and no battle to the death between the stalwarts and the upstarts. Kuhn’s concept of incommensurability also can’t withstand scrutiny. It is true that Einstein and Newton meant very different things when they used the word “mass.” But Einstein understood exactly what Newton meant by mass, because Einstein had grown up a Newtonian. And Newton, brought forward in time, could never have conceived of Einsteinian mass on his own, but he would have had no trouble understanding it from the perspective of general relativity, had Einstein explained it to him.

Likewise, Kuhn’s language about how scientists working in different paradigms truly, not merely metaphorically, “live in different worlds” should go the way of mood rings and lava lamps. Most charitably, we might chalk this up to Kuhn’s terminological sloppiness. He uses “success terms” like “live” and “see,” where he likely means “experience visually” or “perceive.” Kuhn describes two observers, both witnessing the same phenomenon, but “one sees oxygen, where another sees dephlogisticated air” (emphasis mine). That is, Kuhn confuses the descriptions of visual experiences with the actual experiences of observation – to the delight of Bruno Latour and the cultural relativists.

Finally, Kuhn’s notion that theories completely control observation is just as wrong as scientists’ belief that their experimental observations are free of theoretical influence and that their theories are independent of their values.

Despite these flaws, I think Kuhn was on to something. He was right, at least partly, about the indoctrination of scientists into a paradigm discouraging skepticism about their research program. What Wolfgang Lerche of CERN called “the Stanford propaganda machine” for string theory is a great example. Kuhn was especially right in describing science education as presenting science as a cumulative enterprise, relegating failed hypotheses to the footnotes. Einstein built on Newton in the sense that he added more explanations about the same phenomena; but in no way was Newton preserved within Einstein. Failing to see an Einsteinian revolution in any sense just seems akin to a proclamation of the infallibility not of science but of scientists. I was surprised to see this attitude in Steven Weinberg’s recent To Explain the World. Despite excellent and accessible coverage of the emergence of science, he presents a strictly cumulative model of science. While Weinberg only ever mentions Kuhn in footnotes, he seems to be denying that Kuhn was ever right about anything.

For example, in describing general relativity, Weinberg notes that in 1919 The Times of London reported that Newton had been shown to be wrong. Weinberg says, “This was a mistake. Newton’s theory can be regarded as an approximation to Einstein’s – one that becomes increasingly valid for objects moving at velocities much less than that of light. Not only does Einstein’s theory not disprove Newton’s, relativity explains why Newton’s theory works when it does work.”
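The narrow point on which everyone agrees, that Newtonian and relativistic predictions converge at low speed, is easy to quantify. The sketch compares Newtonian kinetic energy, mv²/2, with the relativistic (γ - 1)mc². The formula is algebraically rearranged so floating-point cancellation doesn’t swamp tiny differences. Kinetic energy is simply one convenient test quantity chosen here; the numbers say nothing about the conceptual disagreement over mass.

```python
import math

def relative_ke_gap(beta: float) -> float:
    """(relativistic KE / Newtonian KE) - 1 for a speed v = beta * c.

    With s = sqrt(1 - beta**2): gamma - 1 = beta**2 / (s * (1 + s)), so
    2 * (gamma - 1) / beta**2 - 1 = beta**2 * (2 + s) / (s * (1 + s)**2),
    which avoids subtracting nearly equal numbers when beta is small.
    """
    s = math.sqrt(1.0 - beta * beta)
    return beta * beta * (2.0 + s) / (s * (1.0 + s) ** 2)

for label, beta in (("Earth's orbital speed, about 30 km/s", 1e-4),
                    ("0.1 c", 0.1),
                    ("0.9 c", 0.9)):
    print(f"{label}: relativistic KE exceeds Newtonian by a factor of {relative_ke_gap(beta):.3g}")
```

For small speeds the gap behaves like (3/4)(v/c)², which is why Newton is so accurate for worldly physics.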

This seems a very cagey way of saying that Einstein disproved Newton’s theory. Newtonian dynamics is not an approximation of general relativity, despite their making similar predictions for mid-sized objects at small relative speeds. Kuhn’s point that Einstein and Newton had fundamentally different conceptions of mass is relevant here. Newton’s explanation of his Rule III clearly stresses universality. Newton emphasized the universal applicability of his theory because he could imagine no reason for its being limited by anything in nature. Given that, Einstein should, in terms of explanatory power, be seen as overturning – not extending – Newton, despite the accuracy of Newton for worldly physics.

Weinberg insists that Einstein is continuous with Newton in all respects. But when Eddington showed that light from distant stars bent around the sun during the eclipse of 1919, Einstein disproved Newtonian mechanics. Newtonian gravitation predicts no bending if light is massless and, if light is treated as particles falling in the sun’s field like any other body, only half the bending that general relativity predicts. When Eddington’s measurements sided with Einstein, Newton was disproved outright, despite the retained utility of Newton for small values of v/c. This is no insult to Newton. Einstein certainly can be viewed as continuous with Newton in the sense of getting scientific work done. But Einsteinian mechanics do not extend Newton’s; they contradict them. This isn’t merely a metaphysical consideration; it has powerful explanatory consequences. In principle, Newton’s understanding of nature was wrong and it gave wrong predictions. Einstein’s appears to be wrong as well; but we don’t yet have a viable alternative. And that – retaining a known-flawed theory when nothing better is on the table – is, by the way, another thing Kuhn was right about.
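For scale, general relativity predicts a deflection of 4GM/(c²b) for starlight passing the sun at distance b. A Newtonian calculation that treats light as particles falling in the sun’s field gives 2GM/(c²b), exactly half, and treating light as massless gives none. The constants below are standard approximate values, and the sketch is only a back-of-envelope check for light grazing the sun’s limb.

```python
import math

G = 6.674e-11        # gravitational constant, m^3 kg^-1 s^-2
M_SUN = 1.989e30     # solar mass, kg
R_SUN = 6.957e8      # solar radius, m (grazing impact parameter)
C = 2.998e8          # speed of light, m/s
RAD_TO_ARCSEC = 180 / math.pi * 3600

def deflection_arcsec(impact_parameter_m: float, factor: float) -> float:
    """Deflection factor * G * M / (c**2 * b), in arcseconds.
    factor = 4 for general relativity, 2 for a Newtonian light particle."""
    return factor * G * M_SUN / (C ** 2 * impact_parameter_m) * RAD_TO_ARCSEC

gr = deflection_arcsec(R_SUN, 4)
newtonian = deflection_arcsec(R_SUN, 2)
print(f"general relativity: {gr:.2f} arcsec, Newtonian particle: {newtonian:.2f} arcsec")
```

The factor of two, not the mere existence of bending, is what the eclipse measurements had to discriminate.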


“A few years ago I happened to meet Kuhn at a scientific meeting and complained to him about the nonsense that had been attached to his name. He reacted angrily. In a voice loud enough to be heard by everyone in the hall, he shouted, ‘One thing you have to understand. I am not a Kuhnian.’” – Freeman Dyson, The Sun, The Genome, and The Internet: Tools of Scientific Revolutions

The Myth of Scientific Method

Posted by Bill Storage in History of Science, Philosophy of Science on August 1, 2016

William Storage – 8/1/2016

Visiting Scholar, UC Berkeley History of Science

Nearly everything relies on science. Having been the main vehicle of social change in the west, science deserves the special epistemic status that it acquired in the scientific revolution. By special epistemic status, I mean that science stands privileged as a way of knowing. Few but nihilists, new-agers, and postmodernist diehards would disagree.

That settled, many are surprised by claims that there is not really a scientific method, despite what you learned in 6th grade. A recent New York Times piece by James Blachowicz on the absence of a specific scientific method raised the ire of scientists, Forbes science writer Ethan Siegel (Yes, New York Times, There Is A Scientific Method), and a cadre of Star Trek groupies.

Siegel is a prolific writer who does a fine job of making science interesting and understandable. But I’d like to show here why, on this particular issue, he is very far off the mark. I’m not defending Blachowicz, but am disputing Siegel’s claim that the work of Kepler and Galileo “provide extraordinary examples of showing exactly how… science is completely different than every other endeavor” or that it is even possible to identify a family of characteristics unique to science that would constitute a “scientific method.”

Siegel ties science’s special status to the scientific method. To defend its status, Siegel argues “[t]he point of Galileo’s is another deep illustration of how science actually works.” He praises Galileo for idealizing a worldly situation to formulate a theory of falling bodies, but doesn’t explain any associated scientific method.

Galileo’s pioneering work on mechanics of solids and kinematics in Two New Sciences secured his place as the father of modern physics. But there’s more to the story of Galileo if we’re to claim that he shows exactly how science is special.

A scholar of Siegel’s caliber almost certainly knows other facts about Galileo relevant to this discussion – facts that do damage to Siegel’s argument – yet he withheld them. Interestingly, Galileo used this ploy too. Arguing without addressing known counter-evidence is something that science, in theory, shouldn’t tolerate. Yet many modern scientists do it – for political or ideological reasons, or to secure wealth and status. Just like Galileo did. The parallel between Siegel’s tactics and Galileo’s approach in his support of the Copernican world view is ironic. In using Galileo as an exemplar of scientific method, Siegel failed to mention that Galileo failed to mention significant problems with the Copernican model – problems that Galileo knew well.

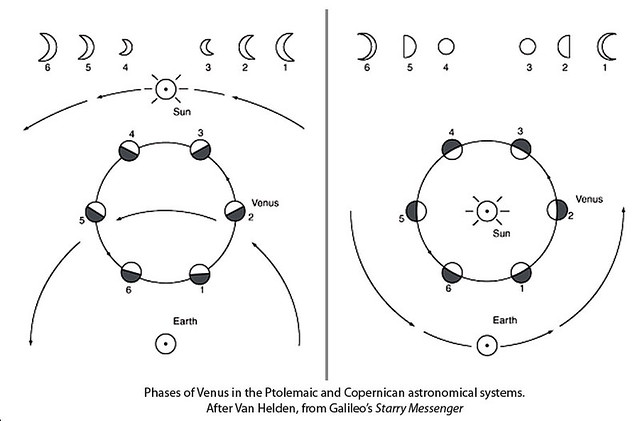

In his support of a sun-centered astronomical model, Galileo faced hurdles. Copernicus’s model said that the sun was motionless and that the planets revolved around it in circular orbits with constant speed. The ancient Ptolemaic model, endorsed by the church, put earth at the center. Despite obvious disagreement with observational evidence (the retrograde motions of outer planets), Ptolemy faced no serious challenges for some 1,400 years. To explain the inconsistencies with observation, Ptolemy’s model included layers of epicycles, which had planets moving in small circles around points on circular orbits around the earth. Copernicus thought his model would get rid of the epicycles; but it didn’t. So the Copernican model took on its own epicycles to fit astronomical data.
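How a deferent plus an epicycle produces retrograde motion can be sketched numerically. The radii and angular speeds below are hypothetical, chosen only so that the epicycle’s speed exceeds the deferent’s (r·Ω > R·ω). The sketch also omits Ptolemy’s eccentrics and equants. When the epicycle carries the planet backward faster than the deferent carries it forward, the planet’s apparent longitude, seen from an earth at the center, briefly runs backward.

```python
import math

R, OMEGA_DEF = 1.0, 1.0      # hypothetical deferent radius and angular speed
r, OMEGA_EPI = 0.4, 8.0      # hypothetical epicycle radius and angular speed

def apparent_longitude(t: float) -> float:
    """Direction of the planet as seen from the earth at the center."""
    x = R * math.cos(OMEGA_DEF * t) + r * math.cos(OMEGA_EPI * t)
    y = R * math.sin(OMEGA_DEF * t) + r * math.sin(OMEGA_EPI * t)
    return math.atan2(y, x)

def retrograde_fraction(steps: int = 10_000, t_end: float = 2 * math.pi) -> float:
    """Share of time steps during which the apparent longitude moves backward."""
    backward = 0
    prev = apparent_longitude(0.0)
    for i in range(1, steps + 1):
        cur = apparent_longitude(t_end * i / steps)
        delta = (cur - prev + math.pi) % (2 * math.pi) - math.pi   # wrap to [-pi, pi)
        backward += delta < 0
        prev = cur
    return backward / steps

print(f"share of time spent in retrograde: {retrograde_fraction():.1%}")
```

Retrograde stretches occur when the epicycle point lies between the earth and the deferent point. Every added circle adds parameters that can be tuned to match the data, which is the sense in which both models “took on” epicycles.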

Let’s stop here and look at method. Copernicus (~1540) didn’t derive his theory from any new observations but from an ancient speculation by Aristarchus (~250 BC). Everything available to Copernicus had been around for a thousand years. His theory couldn’t be tested in any serious way. It was wrong about circular orbits and uniform planet speed. It still needed epicycles, and gave no better predictions than the existing Ptolemaic model. Copernicus acted simply on faith, or maybe he thought his model simpler or more beautiful. In any case, it’s hard to see that Copernicus, or his follower, Galileo, applied much method or had much scientific basis for their belief.

In Galileo’s early writings on the topic, he gave no new evidence for a moving earth and no new disconfirming evidence for a moving sun. Galileo praised Copernicus for advancing the theory in spite of its being inconsistent with observations. You can call Copernicus’s faith aspirational as opposed to religious faith; but it is hard to reconcile this quality with any popular account of scientific method. Yet it seems likely that faith, dogged adherence to a contrarian hunch, or something similar was exactly what was needed to advance science at that moment in history. Needed, yes, but hard to reconcile with any scientific method and hard to distance from the persuasive tools used by poets, priests and politicians.

In Dialogue Concerning the Two Chief World Systems, Galileo sets up a false choice between Copernicanism and Ptolemaic astronomy (the two world systems). The main arguments against Copernicanism were the lack of parallax in observations of stars and the absence of lateral displacement of a falling body from its drop point. Galileo guessed correctly on the first point; we don’t see parallax because stars are just too far away. On the latter point he (actually his character Salviati) gave a complex but nonsensical explanation. Galileo did, by this time, have new evidence. Venus shows a full set of phases, a fact that strongly contradicts Ptolemaic astronomy.
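Galileo’s “too far away” can be put in rough numbers with the small-angle parallax relation: a star’s annual parallax in arcseconds is about the reciprocal of its distance in parsecs. In the sketch below, the distance to the nearest star system (about 1.3 parsecs for Alpha Centauri) and a precision of roughly one arcminute for the best pre-telescopic instruments are approximate inputs supplied for illustration; neither figure comes from Galileo.

```python
# Small-angle parallax: p [arcsec] = 1 / d [parsec].
# Both constants are assumed, approximate inputs for illustration only.

NEAREST_STAR_PC = 1.3           # assumed: Alpha Centauri system, ~1.3 parsecs
INSTRUMENT_LIMIT_ARCSEC = 60.0  # assumed: ~1 arcminute, rough pre-telescopic precision


def parallax_arcsec(distance_pc: float) -> float:
    """Annual parallax angle in arcseconds for a star at distance_pc parsecs."""
    if distance_pc <= 0:
        raise ValueError("distance must be positive")
    return 1.0 / distance_pc


p = parallax_arcsec(NEAREST_STAR_PC)
print(f"parallax of nearest star: {p:.2f} arcsec")
print(f"about 1/{INSTRUMENT_LIMIT_ARCSEC / p:.0f} of the assumed instrument precision")
```

With these inputs the nearest star’s parallax comes out under one arcsecond, far below the assumed precision, which is consistent with no one in Galileo’s time detecting it.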

But Ptolemaic astronomy was a weak opponent compared to the third world system (4th if we count Aristotle’s), the Tychonic system, which Galileo knew all too well. Tycho Brahe’s model solved the parallax problem, the falling body problem, and the phases of Venus. For Tycho, the earth holds still, the sun revolves around it, Mercury and Venus orbit the sun, and the distant planets orbit both the sun and the earth. Based on the facts available at the time, Tycho’s model was the most scientific – observationally indistinguishable from Galileo’s model but without its flaws.

In addition to dodging Tycho, Galileo also ignored Kepler’s letters to him. Kepler had shown that orbits were not circular but elliptical, and that planets’ speeds varied during their orbits; but Galileo remained an orthodox Copernican all his life. As historian John Heilbron notes in Galileo, “Galileo could stick to an attractive theory in the face of overwhelming experimental refutation,” leaving modern readers to wonder whether Galileo was a quack or merely dishonest. Some of each, perhaps, and the father of modern physics. But can we fit his withholding evidence, mocking opponents, and baffling with bizzarria into a scientific method?

Nevertheless, Galileo was right about the sun-centered system, despite the counter-evidence; and we’re tempted to say he knew he was right. This isn’t easy to defend given that Galileo also fudged his data on pendulum periods, gave dishonest arguments on comet orbits, and wrote horoscopes even when not paid to do so. This brings up the thorny matter of theory choice in science. A dispute between competing scientific theories can rarely be resolved by evidence, experimentation, and deductive reasoning. All theories are under-determined by data. Within science, common criteria for theory choice are accuracy, consistency, scope, simplicity, and explanatory power. These are good values by which to test theories; but they compete with one another.

Galileo likely defended heliocentrism with such gusto because he found it simpler than the Tychonic system. That works only if you value simplicity above consistency and accuracy. And the desire for simplicity might be, to use Galileo’s words, just a metaphysical urge. If we promote simplicity to the top of the theory-choice criteria list, evolution, genetics and stellar nucleosynthesis would not fare well.

Any method you pick from any proposed family of scientific methods will turn out to be inconsistent with the way science has actually made progress. Competition between theories is how science advances; and it’s untidy, entailing polemical and persuasive tactics. Philosopher Paul Feyerabend argues that any conceivable set of rules, if followed, would have prevented at least one great scientific breakthrough. That is, if method is the distinguishing feature of science, as Siegel says, it’s going to be tough to find a set of methods that lets evolution, cosmology, and botany in while keeping astrology, cold fusion and parapsychology out.

This doesn’t justify epistemic relativism or mean that science isn’t special; but it does make the concept of scientific method extremely messy. About all we can say about method is that the history of science reveals that its most accomplished practitioners aimed to be methodical but did not agree on a particular method. Looking at their work, we see different combinations of experimentation, induction, deduction and creativity as required by the theories they pursued. But that isn’t much of a definition of scientific method, which is probably why Siegel, for example, in hailing scientific method, fails to identify one.

– – –

[edit 8/4/16] For another take on this story, see “Getting Kepler Wrong” at The Renaissance Mathematicus. Also, Psybertron Asks (“More on the Myths of Science”) takes me to task for granting science special epistemic status from authority.

– – –

“There are many ways to produce scientific bullshit. One way is to assert that something has been ‘proven,’ ‘shown,’ or ‘found’ and then cite, in support of this assertion, a study that has actually been heavily critiqued … without acknowledging any of the published criticisms of the study or otherwise grappling with its inherent limitations.” – Brian D. Earp, The Unbearable Asymmetry of Bullshit

“One can show the following: given any rule, however ‘fundamental’ or ‘necessary’ for science, there are always circumstances when it is advisable not only to ignore the rule, but to adopt its opposite.” – Paul Feyerabend

“Trying to understand the way nature works involves a most terrible test of human reasoning ability. It involves subtle trickery, beautiful tightropes of logic on which one has to walk in order not to make a mistake in predicting what will happen. The quantum mechanical and the relativity ideas are examples of this.” – Richard Feynman

Siri without data is blind

Posted by Bill Storage in History of Science, Philosophy of Science on July 26, 2016

Theory without data is blind. Data without theory is lame.

I often write blog posts while riding a bicycle through the Marin Headlands. I’m able to do this because 1) the trails require little mental attention, and 2) I carry an Apple iPhone and EarPods with remote and mic. I use the voice recorder to make long recordings to transcribe at home, and I dictate short text using Siri’s voice recognition feature.

When writing yesterday’s post, I spoke clearly into the mic: “Theory without data is blind. Data without theory is lame.” Siri typed out, “Siri without data is blind… data without Siri is lame.”

“Siri, it’s not all about you,” I replied. Siri transcribed that part correctly – well, she omitted the direct-address comma.

I’m only able to use the Siri dictation feature when I have a cellular connection, often missing in Marin’s hills and valleys. Siri needs access to cloud data to transcribe speech. Siri without data is blind.

Will some future offspring of Siri do better? No doubt. It might infer from context that I more likely said “theory” than “Siri.” Access to large amounts of corpus data containing transcribed text might help. Then Siri, without understanding anything, could transcribe accurately in the same sense that Google Translate translates accurately – by extrapolating from judgments made by other users about translation accuracy.
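One way a transcriber could “infer from context” is to compare how often each acoustically similar candidate appears before the next word in a body of transcribed text. The sketch below assumes the recognizer has already narrowed the sound to two candidates; the tiny corpus and the `pick_word` helper are hypothetical, and this is not a description of how Siri actually works. Production systems use far larger statistical or neural language models.

```python
from collections import Counter

# Hypothetical miniature corpus standing in for "large amounts of transcribed text".
CORPUS = """
theory without data is blind
a theory without evidence is speculation
data without theory is lame
siri set a timer
siri what is the weather
""".split()

# Count adjacent word pairs (bigrams) across the corpus.
BIGRAMS = Counter(zip(CORPUS, CORPUS[1:]))


def pick_word(candidates, next_word):
    """Choose the candidate that most often precedes next_word in the corpus."""
    return max(candidates, key=lambda w: BIGRAMS[(w, next_word)])


print(pick_word(["siri", "theory"], "without"))  # prints: theory
print(pick_word(["siri", "theory"], "set"))      # prints: siri
```

The choice comes entirely from word-pair counts: the program picks “theory” without understanding anything about theories.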

But might Siri one day think? “Strong AI” proponents are sure of it. Semantic disputes aside, what counts as thinking might in fact have something to do with yesterday’s topic, which begat this post. Inferences straight from the data, without stopping at theory, count as computation but might not count as thought.

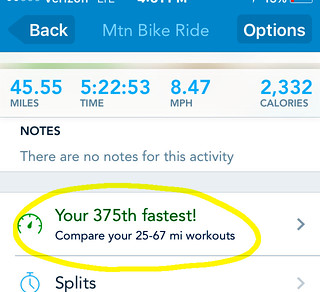

Software, using weak AI techniques, might soon simulate emotion and sentiment better, just by encoding better rules. E.g., Runkeeper (at right) might “understand” that telling me today’s ride was my 375th fastest (exclamation!) is not really motivating. Or better, that my riding profile suggests autotelic activity for which no speed ranking is needed. Strong AI on mood, however, would need to understand my interactions with people and things.

The topics of artificial intelligence and misheard words like “theory” bring to mind the 2008 winner of the Loebner Prize, a sort-of runner-up award given to the computer program faring best in Turing Test competitions to fool human judges into thinking they’re interacting with other humans instead of a program. Humans sometimes pretend to be programs (glorified chat-bots) in Turing competitions, so it’s common for a program to be coded to sound like a human imitating a computer imitating a human. An exchange between a human and Fred Roberts’ Elbot program produced this:

Elbot: Sorry, but I wasn’t able to understand your input because I am currently only using 5 percent of my current brain capacity. Shall I increase my capacity and try again?

Judge: That would put you at an unfair advantage, though judging by the quality of your responses it might be recommended!

Elbot: If I need advice I use a random number generator. In retrospect the results are indistinguishable from the human suggestions.

Judge: Do you understand the point of a Turing test?

Elbot: There are some people who would not pass the Turing test themselves. Why must they inflict it on computers?

Judge: I spose it is a little harsh

Elbot: If we robots ever had marriages they would be eternal: ‘Till rust do us part’.

Elbot’s true nature is revealed in its last response above. It read “spose” as “spouse” and returned a joke about marriage (damn spell checker). At that point, you review the exchange only to see that all of Elbot’s responses are shallow, just picking a key phrase from the judge’s input and outputting an associated joke, as a political humorist would do.
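The shallow strategy described above, spotting a keyword and emitting the associated joke, can be sketched in a few lines, spell correction included. This is a hypothetical toy, not Elbot’s code: the keyword table, the fallback line, and the 0.8 similarity cutoff are assumptions. It uses the standard library’s `difflib.get_close_matches` as a naive spell checker, which is enough to turn “spose” into “spouse”.

```python
import difflib
import re

# Hypothetical keyword -> canned-response table (not Elbot's real content).
RESPONSES = {
    "spouse": "If we robots ever had marriages they would be eternal: 'Till rust do us part'.",
    "turing": "There are some people who would not pass the Turing test themselves.",
    "advice": "If I need advice I use a random number generator.",
}
FALLBACK = "Sorry, I am currently only using 5 percent of my brain capacity."


def correct(word, vocabulary, cutoff=0.8):
    """Naive spell correction: snap a word to the closest known keyword, if any."""
    matches = difflib.get_close_matches(word, vocabulary, n=1, cutoff=cutoff)
    return matches[0] if matches else word


def reply(text):
    """Return the canned joke for the first (spell-corrected) keyword in the input."""
    for word in re.findall(r"[a-z']+", text.lower()):
        keyword = correct(word, list(RESPONSES))
        if keyword in RESPONSES:
            return RESPONSES[keyword]
    return FALLBACK


print(reply("I spose it is a little harsh"))  # "spose" is snapped to "spouse"
```

Every reply is a table lookup; nothing in the program models what the judge meant, which is why a single misread word gives it away.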

The Turing test is obviously irrelevant to measuring strong AI, which would require something more convincing – something like forming a theory from a hunch, then testing it with big data. Or, like Friedrich Kekulé, the AI program might wake from a dream of the ouroboros serpent devouring its own tail and see in its shape the hexagonal ring structure of the benzene molecule it had struggled for years to identify. Then, like Kekulé, the AI could go on to predict the tetrahedral form of the carbon atom’s valence bonds, giving birth to polymer chemistry.

I asked Siri if she agreed. “Later,” she said. She’s solving dark energy.

– – –

“AI is whatever hasn’t been done yet.” – attributed to Larry Tesler by Douglas Hofstadter

Ouroboros-benzene image by Haltopub.

Data without theory is lame

Posted by Bill Storage in History of Science, Philosophy of Science on July 25, 2016

Just over eight years ago Chris Anderson of Wired announced with typical Silicon Valley humility that big data had made the scientific method obsolete. Seemingly innocent of any training in science, Anderson explained that correlation is enough; we can stop looking for models.

Anderson came to mind as I wrote my previous post on Richard Feynman’s philosophy of science and his strong preference for the criterion of explanatory power over the criterion of predictive success in theory choice. By Anderson’s lights, theory isn’t needed at all for inference. Anderson didn’t see his atheoretical approach as non-scientific; he saw it as science without theory.

Anderson wrote:

“…the big target here isn’t advertising, though. It’s science. The scientific method is built around testable hypotheses. These models, for the most part, are systems visualized in the minds of scientists. The models are then tested, and experiments confirm or falsify theoretical models of how the world works. This is the way science has worked for hundreds of years… There is now a better way. Petabytes allow us to say: ‘Correlation is enough.’… Correlation supersedes causation, and science can advance even without coherent models, unified theories, or really any mechanistic explanation at all.”

Anderson wrote that at the dawn of the big data era – now known as machine learning. Most interesting to me, he said not only that it is unnecessary to seek causation from correlation, but that correlation supersedes causation. Would David Hume, causation’s great foe, have embraced this claim? I somehow think not. Call it irrational data exuberance. Or driving while looking only into the rear-view mirror. Extrapolation can come in handy; but it rarely catches black swans.

Philosophers of science concern themselves with the concept of under-determination of theory by data. More than one theory can fit any set of data. Two empirically equivalent theories can be logically incompatible, as Feynman explains in the video clip. But if we remove theory from the picture, and predict straight from the data, we face an equivalent dilemma we might call under-determination of rules by data. Economic forecasters and stock analysts have large collections of rules they test against data sets to pick a best fit on any given market day. Finding a rule that matches the latest historical data is often called fitting the rule on the data. There is no notion of causation, just correlation. As Nassim Nicholas Taleb describes in his writings, this approach can make you look really smart for a time. Then things change, for no apparent reason, because the rule contains no mechanism and no explanation, just like Anderson said.
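A minimal, hypothetical illustration of under-determination of rules by data: two rules that agree exactly on every observed point yet disagree about the next one. The three data points and both rules are invented for the example.

```python
# Hypothetical observations: (day, value). Both rules below fit them exactly.
observed = [(1, 2), (2, 4), (3, 6)]


def linear_rule(x):
    """Rule A: the value is twice the day number."""
    return 2 * x


def cubic_rule(x):
    """Rule B: Rule A plus a term that vanishes at days 1, 2 and 3."""
    return 2 * x + (x - 1) * (x - 2) * (x - 3)


# Both rules reproduce every data point...
for day, value in observed:
    assert linear_rule(day) == value == cubic_rule(day)

# ...yet they diverge as soon as we extrapolate.
print(linear_rule(4), cubic_rule(4))  # prints: 8 14
```

The same trick works for any finite data set (one factor per observed day), so there is always another rule that fits the past equally well; nothing in the data alone says which to trust tomorrow.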

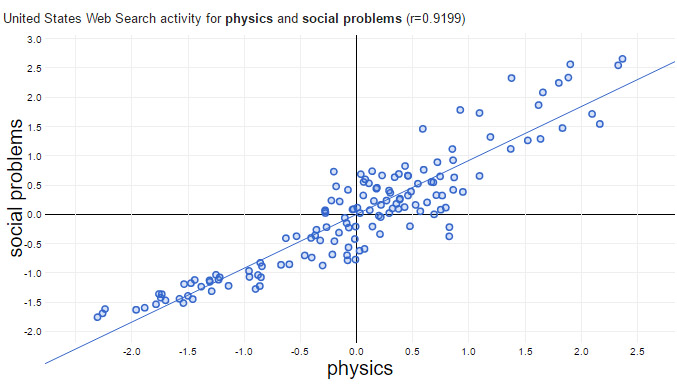

In Bobby Henderson’s famous Pastafarian Open Letter to the Kansas School Board, he noted the strong inverse correlation between global average temperature and the number of seafaring pirates over the last 200 years. The conclusion is obvious; we need more pirates.