Posts Tagged Philosophy of Science

What ‘Project Hail Mary’ Gets Right about Science

Posted by Bill Storage in History of Science on February 11, 2026

Most reviews of Project Hail Mary focus on the science, the plot, or the plausibility of first contact. This one asks a different question: what does the story assume science is?

Andy Weir’s novel, and the upcoming film adaptation, treats science not as individual brilliance but as a coordination technology, a way fallible minds synchronize their guesses about the world. That framing quietly explains why an alien civilization could master interstellar travel without ever discovering radiation, and why human weakness turns out to be an epistemic strength.

This review looks at Project Hail Mary as a rare piece of science fiction where epistemology is central. It touches on:

- Science as method rather than facts

- Individual intelligence vs collective knowledge

- Why discovery depends on social structure, not genius

- Rocky’s cognition and epistemic blind spots

- Why humans “stumble” into deep structure

Most people think science is something smart individuals discover. Project Hail Mary argues the opposite: science works because none of us is very smart alone. This idea is the structure that holds the whole story together.

Science is not a property of brains. It’s a coordination technology we built to synchronize our predictions about nature. Very few novels even notice this distinction. Project Hail Mary, a 2021 novel by Andy Weir and a 2026 film starring Ryan Gosling, puts it at the center of the story. The question here isn’t whether Weir gets the science right, but what the story assumes science is.

I’m going to give you a philosopher-of-science take on why Hail Mary works when so much science fiction doesn’t.

Most science fiction forgets about epistemology, the theory of knowledge. How do we know? What counts as evidence? What methods justify belief? Epistemology sounds abstract, but it’s basic enough that it could be taught to sixth graders, and once was. Project Hail Mary never uses the word, and its characters never discuss it explicitly. Instead, epistemology is the plot – which is oddly refreshing.

Every observation and every conclusion in the book flows from astronaut Ryland Grace’s constrained first-person perspective. Weir keeps epistemology inside the story rather than lecturing about it. Walter Miller gestured at something similar in his 1959 novel A Canticle for Leibowitz, where the complementary mental habits of Michael Faraday and James Clerk Maxwell are mirrored without ever being named. Insiders catch it, outsiders don’t need to. Weir pushes that technique much further. Epistemology becomes the engine that moves the story forward. I hope the movie retains this aspect of the book. Weir’s early praise of the movie is a good sign.

From a literary standpoint, science fiction has mostly lagged behind other genres in abandoning omniscient reporting of mental states. Weir avoids such reporting almost to a fault. Grace knows only what he can operationalize. As he awakens from a coma, even his own memories arrive like experimental results rather than introspection. This feels less like literary minimalism than engineering discipline. Knowledge is revealed through constrained interaction with apparatus, not through authorial mind-reading. Bradbury told us what characters thought because he was taught that was realism. Weir understands that realism in science is procedural.

Reactions to Hail Mary are mixed but mostly positive. Many readers praise its ingenuity while criticizing its thin prose, quippy dialogue, and engineered optimism. Weir has admitted that scientific accuracy takes priority over literary polish. Grace can feel like a bundle of dad jokes attached to a physics degree. But that tone does more work than it seems. We are, after all, inside the head of a physics nerd solving problems under extreme constraint.

The novel openly teaches science: pendulums, gravitation, momentum. Less openly, it teaches philosophy of science. That second lesson is never announced. It’s embedded.

Grace encounters an extraterrestrial engineer named Rocky. Rocky evolved in an ammonia atmosphere far denser and hotter than Earth’s. His blood is mercury. He has no eyes, five legs, speaks in chords, is the size of a dog but weighs 400 pounds, and can only interact with Grace across physical barriers. The differences pile up gradually.

Rocky is astonishingly capable. His memory is perfect. His computation is nearly instantaneous. And yet his civilization never discovered radiation. It’s a blind spot with lethal consequences. They developed interstellar travel without any theory of relativity. Rocky is not inferior to humans. He is orthogonal. Weir refuses to treat language, vision, or the ability to abstract as universal yardsticks. Rocky’s cognition is constrained by temperature, pressure, materials science, acoustics, and survival heuristics that are alien in the literal sense.

Interstellar travel without knowledge of relativity sounds implausible until you think like a historian of science. Discovery is path-dependent. Humans built steam engines before thermodynamics, radios before quantum mechanics, and turbochargers without a general solution to the Navier–Stokes equations. In fact, general relativity was understood faster, with fewer people and fewer unknowns, than modern turbomachinery. Intelligence does not guarantee theoretical completeness.

We often talk as if engineering is applied science, as though scientists discover laws and engineers merely execute them. Historically, it’s mostly the reverse. Engineering drove hydrostatics, thermodynamics, and much of electromagnetism. Science condensed out of practice. Rocky shows us a civilization that pushed engineering heuristics to extraordinary limits without building the meta-theory we associate with modern physics.

Weir shows us that ignorance has consequences. Rocky’s civilization has blind spots, not just gaps. They solve problems locally, not universally. That matches real scientific history, which is full of “how did they not notice that?” moments. Epistemic humility matters.

The deeper point is easy to miss. Rocky’s raw intelligence is overwhelming, yet Weir shows how insufficient that is. Computational power is not the same thing as epistemic traction.

Humans compensate for limited individual cognition by externalizing thought. Books, instruments, equations, replication, argument, peer irritation. Science is not what smart people know. It’s what happens when disagreement is preserved instead of suppressed.

Consider the neutron lifetime puzzle. Isolated neutrons decay in about fifteen minutes. Bottle experiments and beam experiments both work, both are careful, and their measurements disagree by nearly ten seconds. That discrepancy feeds directly into Big Bang nucleosynthesis and cosmology. No one is happy about it. That discomfort is the system working. Science as a council of experts would smooth it over. Science as a messy coordination technology will not.

Rocky’s science advances by heroic individual problem-solving. Human science advances by distributed skepticism. His civilization seems optimized for survival and local success, not for epistemic reach. Humans stumble into deep structure because we are bad enough at thinking alone that we are forced to think together.

Relativity illustrates this point. Einstein is often treated as a counterexample, the lone genius who leapt beyond intuition. But strip away the myth and the leap shrinks. Maxwell’s equations had already broken classical time and space. Michelson–Morley refused to go away. Lorentz supplied transformations that worked but felt evasive. Einstein inherited the problem fully formed. His leap was short because the runway was long. What made it remarkable was not distance but direction. He was willing to look where others would not. No one is epistemically self-sufficient. Not Einstein, not Rocky, not us.

There’s another evolutionary angle Weir hints at. Vision didn’t just give humans data. It gave easily shared data. You can point. You can draw on a cave wall. You can argue over the same thing in space. In Rocky’s sightless world, translating private perception into communal objects is harder. That alone could delay theoretical physics by centuries.

The book’s real claim is stronger than “different minds think differently.” Scientific knowledge depends on social failure modes as much as on cognitive gifts. Progress requires tolerance for being wrong in public and for wasting effort on anomalies.

Thankfully, Weir doesn’t sermonize. Rocky saves the mission by being smarter. Humanity saves itself by having invented a way for dull humans to coordinate across centuries. It’s a quietly anti-heroic view of intelligence.

Project Hail Mary treats science as failure analysis rather than genius theater. Something breaks. What do we test next? That may be why it succeeds where so much science fiction fails.

Here’s my video review, shot with an action cam as I wander the streets of ancient and Renaissance Rome.

From Aqueducts to Algorithms: The Cost of Consensus

Posted by Bill Storage in History of Science on July 9, 2025

The Scientific Revolution, we’re taught, began in the 17th century – a European eruption of testable theories, mathematical modeling, and empirical inquiry from Copernicus to Newton. Newton was the first scientist, or rather, the last magician, many historians say. That period undeniably transformed our understanding of nature.

Historians increasingly question whether a discrete “scientific revolution” ever happened. Floris Cohen called the label a straitjacket. It’s too simplistic to explain why modern science, defined as the pursuit of predictive, testable knowledge by way of theory and observation, emerged when and where it did. The search for “why then?” leads to Protestantism, capitalism, printing, rediscovered Greek texts, scholasticism, even weather. That’s mostly just post hoc theorizing.

Still, science clearly gained unprecedented momentum in early modern Europe. Why there? Why then? Good questions, but what I wonder is why not earlier – even much earlier.

Europe had intellectual fireworks throughout the medieval period. In 1320, Jean Buridan nearly articulated inertia. His anticipation of Newton, three centuries early, is uncanny:

“When a mover sets a body in motion he implants into it a certain impetus, that is, a certain force enabling a body to move in the direction in which the mover starts it, be it upwards, downwards, sidewards, or in a circle. The implanted impetus increases in the same ratio as the velocity. It is because of this impetus that a stone moves on after the thrower has ceased moving it. But because of the resistance of the air (and also because of the gravity of the stone) … the impetus will weaken all the time. Therefore the motion of the stone will be gradually slower, and finally the impetus is so diminished or destroyed that the gravity of the stone prevails and moves the stone towards its natural place.”

Robert Grosseteste, in 1220, proposed the experiment-theory iteration loop. In his commentary on Aristotle’s Posterior Analytics, he describes what he calls “resolution and composition”, a method of reasoning that moves from particulars to universals, then from universals back to particulars to make predictions. Crucially, he emphasizes that both phases require experimental verification.

In 1360, Nicole Oresme gave explicit medieval support for a rotating Earth:

“One cannot by any experience whatsoever demonstrate that the heavens … are moved with a diurnal motion… One can not see that truly it is the sky that is moving, since all movement is relative.”

He went on to say that the air moves with the Earth, so no wind results. He challenged astrologers:

“The heavens do not act on the intellect or will… which are superior to corporeal things and not subject to them.”

Even if one granted some influence of the stars on matter, Oresme wrote, their effects would be drowned out by terrestrial causes.

These were dead ends, it seems. Some blame the Black Death, but the plague left surprisingly few marks in the intellectual record. Despite mass mortality, history shows politics, war, and religion marching on. What waned was interest in reviving ancient learning. The cultural machinery required to keep the momentum going stalled. Critical, collaborative, self-correcting inquiry didn’t catch on.

A similar “almost” occurred in the Islamic world between the 10th and 16th centuries. Ali al-Qushji and al-Birjandi developed sophisticated models of planetary motion and even toyed with Earth’s rotation. A layperson would struggle to distinguish some of al-Birjandi’s thought experiments from Galileo’s. But despite a wealth of brilliant scholars, there were few institutions equipped or allowed to convert knowledge into power. The idea that observation could disprove theory or override inherited wisdom was socially and theologically unacceptable. That brings us to a less obvious candidate – ancient Rome.

Rome is famous for infrastructure – aqueducts, cranes, roads, concrete, and central heating – but not scientific theory. The usual story is that Roman thought was too practical, too hierarchical, uninterested in pure understanding.

One text complicates that story: De Architectura, a ten-volume treatise by Marcus Vitruvius Pollio, written during the reign of Augustus. Often described as a manual for builders, De Architectura is far more than a how-to. It is a theoretical framework for knowledge, part engineering handbook, part philosophy of science.

Vitruvius was no scientist, but his ideas come astonishingly close to the scientific method. He describes devices like the Archimedean screw or the aeolipile, a primitive steam engine. He discusses acoustics in theater design, and cosmological models passed down from the Greeks. He seems to describe vanishing point perspective, something seen in some Roman art of his day. Most importantly, he insists on a synthesis of theory, mathematics, and practice as the foundation of engineering. He describes something remarkably similar to what we now call science:

“The engineer should be equipped with knowledge of many branches of study and varied kinds of learning… This knowledge is the child of practice and theory. Practice is the continuous and regular exercise of employment… according to the design of a drawing. Theory, on the other hand, is the ability to demonstrate and explain the productions of dexterity on the principles of proportion…”

“Engineers who have aimed at acquiring manual skill without scholarship have never been able to reach a position of authority… while those who relied only upon theories and scholarship were obviously hunting the shadow, not the substance. But those who have a thorough knowledge of both… have the sooner attained their object and carried authority with them.”

This is more than just a plea for well-rounded education. He gives a blueprint for a systematic, testable, collaborative knowledge-making enterprise. If Vitruvius and his peers glimpsed the scientific method, why didn’t Rome take the next step?

The intellectual capacity was clearly there. And Roman engineers, like their later European successors, had real technological success. The problem, it seems, was societal receptiveness.

Science, as Thomas Kuhn famously brought to our attention, is a social institution. It requires the belief that man-made knowledge can displace received wisdom. It depends on openness to revision, structured dissent, and collaborative verification. These were values that Roman elite culture distrusted.

When Vitruvius was writing, Rome had just emerged from a century of brutal civil war. The Senate and Augustus were engaged in consolidating power, not questioning assumptions. Innovation, especially social innovation, was feared. In a political culture that prized stability, hierarchy, and tradition, the idea that empirical discovery could drive change likely felt dangerous.

We see this in Cicero’s conservative rhetoric, in Seneca’s moralism, and in the correspondence between Pliny and Trajan, where even mild experimentation could be viewed as subversive. The Romans could build aqueducts, but they wouldn’t fund a lab.

Like the Islamic world centuries later, Rome had scholars but not systems. Knowledge existed, but the scaffolding to turn it into science – collective inquiry, reproducibility, peer review, invitations for skeptics to refute – never emerged.

Vitruvius’s De Architectura deserves more attention, not just as a technical manual but as a proto-scientific document. It suggests that the core ideas behind science were not exclusive to early modern Europe. They’ve flickered into existence before, in Alexandria, Baghdad, Paris, and Rome, only to be extinguished by lack of institutional fit.

That science finally took root in the 17th century had less to do with discovery than with a shift in what society was willing to do with discovery. The real revolution wasn’t in Newton’s laboratory, it was in the culture.

Rome’s Modern Echo?

It’s worth asking whether we’re becoming more Roman ourselves. Today, we have massively resourced research institutions, global scientific networks, and generations of accumulated knowledge. Yet, in some domains, science feels oddly stagnant or brittle. Dissenting views are sometimes dismissed rather than engaged, not for lack of evidence, but for failing to fit a prevailing narrative.

We face a serious, maybe existential question. Does increasing ideological conformity, especially in academia, foster or hamper science?

Obviously, some level of consensus is essential. Without shared standards, peer review collapses. Climate models, particle accelerators, and epidemiological studies rely on a staggering degree of cooperation and shared assumptions. Consensus can be a hard-won product of good science. And it can run perilously close to dogma. In the past twenty years we’ve seen consensus increasingly enforced by legal action, funding monopolies, and institutional ostracism.

String theory may (or may not) be physics’ great white whale. It’s mathematically exquisite but empirically elusive. For decades, critics like Lee Smolin and Peter Woit have argued that string theory has enjoyed a monopoly on prestige and funding while producing little testable output. Dissenters are often marginalized.

Climate science is solidly evidence-based, but responsible scientists point to constant revision of old evidence. Critics like Judith Curry or Roger Pielke Jr. have raised methodological or interpretive concerns, only to find themselves publicly attacked or professionally sidelined. Their critique is labeled denial. Scientific American called Curry a heretic. Lawsuits, like Michael Mann’s long battle with critics, further signal a shift from scientific to pre-scientific modes of settling disagreement.

Jonathan Haidt, Lee Jussim, and others have documented the sharp political skew of academia, particularly in the humanities and social sciences, but increasingly in hard sciences too. When political assumptions are embedded deeply enough, they become invisible. Dissent is called heresy in an academic monoculture. This constrains the range of questions scientists are willing to ask, a problem that affects both research and teaching. If the only people allowed to judge your work must first agree with your premises, then peer review becomes a mechanism of consensus enforcement, not knowledge validation.

When Paul Feyerabend argued that “the separation of science and state” might be as important as the separation of church and state, he was pushing back against conservative technocratic arrogance. Ironically, his call for epistemic anarchism now resonates more with critics on the right than the left. Feyerabend warned that uniformity in science, enforced by centralized control, stifles creativity and detaches science from democratic oversight.

Today, science and the state, including state-adjacent institutions like universities, are deeply entangled. Funding decisions, hiring, and even allowable questions are influenced by ideology. This alignment with prevailing norms creates a kind of soft theocracy of expert opinion.

The process by which scientific knowledge is validated must be protected from both politicization and monopolization, whether that comes from the state, the market, or a cultural elite.

Science is only self-correcting if its institutions are structured to allow correction. That means tolerating dissent, funding competing views, and resisting the urge to litigate rather than debate. If Vitruvius teaches us anything, it’s that knowing how science works is not enough. Rome had theory, math, and experimentation. What it lacked was a social system that could tolerate what those tools would eventually uncover. We do not yet lack that system, but we are testing the limits.

Grains of Truth: Science and Dietary Salt

Posted by Bill Storage in History of Science, Philosophy of Science on June 29, 2025

Science doesn’t proceed in straight lines. It meanders, collides, and battles over its big ideas. Thomas Kuhn’s view of science as cycles of settled consensus punctuated by disruptive challenges is a great way to understand this messiness, though later approaches, like Imre Lakatos’s structured research programs, Paul Feyerabend’s radical skepticism, and Bruno Latour’s focus on science’s social networks, have added their worthwhile spins. This piece takes a light look, using Kuhn’s ideas with nudges from Feyerabend, Lakatos, and Latour, at the ongoing debate over dietary salt, a controversy that’s nuanced and long-lived. I’m not looking for “the truth” about salt, just watching science in real time.

Dietary Salt as a Kuhnian Case Study

The debate over salt’s role in blood pressure shows how science progresses, especially when viewed through the lens of Kuhn’s philosophy. It highlights the dynamics of shifting paradigms, consensus overreach, contrarian challenges, and the nonlinear, iterative path toward knowledge. This case reveals much about how science grapples with uncertainty, methodological complexity, and the interplay between evidence, belief, and rhetoric, even when relatively free from concerns about political and institutional influence.

In The Structure of Scientific Revolutions, Kuhn proposed that science advances not steadily but through cycles of “normal science,” where a dominant paradigm shapes inquiry, and periods of crisis that can result in paradigm shifts. The salt–blood pressure debate, though not as dramatic in consequence as Einstein displacing Newton or as ideologically loaded as climate science, exemplifies these principles.

Normal Science and Consensus

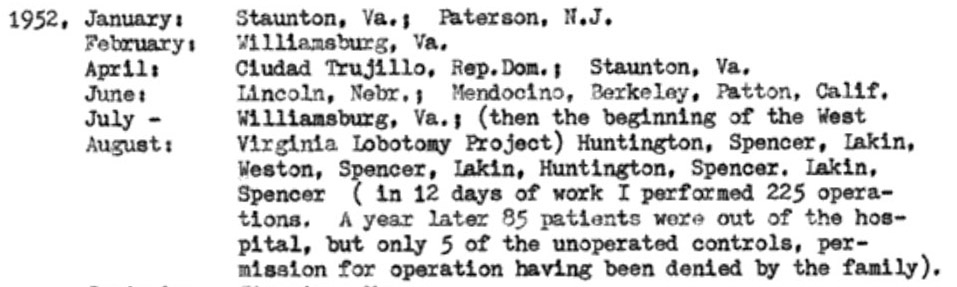

Since the 1970s, medical authorities like the World Health Organization and the American Heart Association have endorsed the view that high sodium intake contributes to hypertension and thus increases cardiovascular disease (CVD) risk. This consensus stems from clinical trials such as the 2001 DASH-Sodium study, which demonstrated that reducing salt intake significantly (from 8 grams per day to 4) lowered blood pressure, especially among hypertensive individuals. This, in Kuhn’s view, is the dominant paradigm.

This framework – “less salt means better health” – has guided public health policies, including government dietary guidelines and initiatives like the UK’s salt reduction campaign. In Kuhnian terms, this is “normal science” at work. Researchers operate within an accepted model, refining it with meta-analyses and randomized controlled trials (RCTs), seeking data to reinforce it, and treating contradictory findings as anomalies or errors. Public health campaigns, like the AHA’s recommendation of less than 2.3 g/day of sodium, reflect this consensus. Governments’ involvement embodies institutional support.
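The figures in this debate mix two units: the DASH-Sodium comparison is stated in grams of salt, while the AHA limit and the Mente thresholds are stated in grams of sodium. Salt is sodium chloride, and only about 39% of its mass is sodium. Here is a minimal Python sketch of the conversion, assuming the 8 g and 4 g DASH figures refer to salt rather than sodium; the function names are illustrative only.

```python
# Convert between grams of salt (NaCl) and grams of sodium (Na).
# Standard atomic weights, rounded to two decimals.
NA_G_PER_MOL = 22.99
CL_G_PER_MOL = 35.45

# Fraction of salt's mass that is sodium: about 0.393.
SODIUM_FRACTION = NA_G_PER_MOL / (NA_G_PER_MOL + CL_G_PER_MOL)


def salt_to_sodium(salt_g: float) -> float:
    """Grams of sodium contained in salt_g grams of sodium chloride."""
    return salt_g * SODIUM_FRACTION


def sodium_to_salt(sodium_g: float) -> float:
    """Grams of sodium chloride that supply sodium_g grams of sodium."""
    return sodium_g / SODIUM_FRACTION


if __name__ == "__main__":
    # DASH-Sodium figures as stated in the text (assumed to be grams of NaCl).
    for salt_g in (8.0, 4.0):
        print(f"{salt_g:.1f} g salt -> {salt_to_sodium(salt_g):.2f} g sodium")
    # The AHA sodium ceiling, expressed as salt.
    print(f"2.3 g sodium -> {sodium_to_salt(2.3):.2f} g salt")
```

By this arithmetic, 8 g and 4 g of salt carry roughly 3.1 g and 1.6 g of sodium, and the 2.3 g sodium ceiling corresponds to a little under 6 g of salt.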

Anomalies and Contrarian Challenges

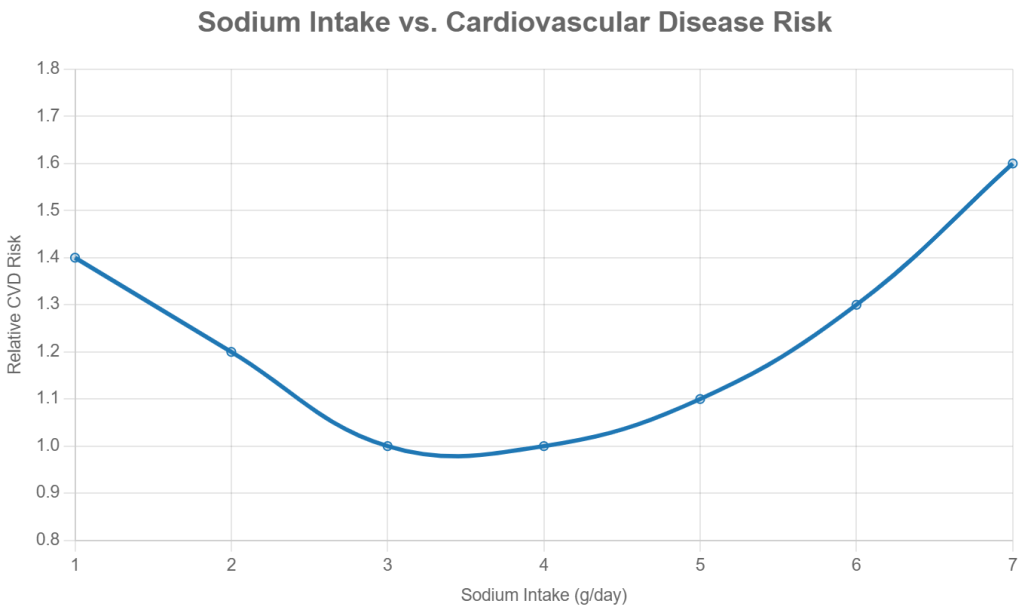

However, anomalies have emerged. For instance, a 2016 study by Mente et al. in The Lancet reported a U-shaped curve; both very low (less than 3 g/day) and very high (more than 5 g/day) sodium intakes appeared to be associated with increased CVD risk. This challenged the linear logic (“less salt, better health”) of the prevailing model. Although the differences in intake were not vast, the implications questioned whether current sodium guidelines were overly restrictive for people with normal blood pressure.

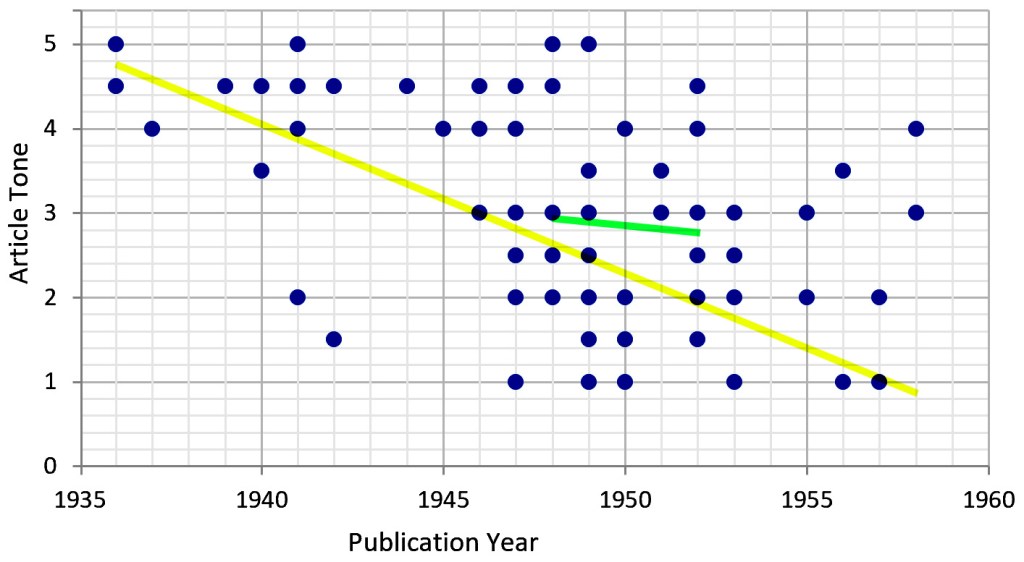

The video Salt & Blood Pressure: How Shady Science Sold America a Lie mirrors Galileo’s rhetorical flair, using provocative language such as “shady science” to challenge the establishment. Like Galileo’s defense of heliocentrism, contrarians in the salt debate (researchers like Mente) amplify anomalies to question dogma, sometimes exaggerating flaws in early studies (e.g., Lewis Dahl’s rat experiments) or alleging conspiracies (e.g., pharmaceutical influence). More in Feyerabend’s view than in Kuhn’s, this exaggeration and rhetoric might be desirable. It’s useful. It provides the challenges that the paradigm should be able to overcome to remain dominant.

These challenges haven’t led to a paradigm shift yet, as the consensus remains robust, supported by RCTs and global health data. But they highlight the Kuhnian tension between entrenched views and emerging evidence, pushing science to refine its understanding.

Framing the issue as a contrarian challenge might go something like this:

Evidence-based medicine sets treatment guidelines, but evidence-based medicine has not translated into evidence-based policy. Governments advise lowering salt intake, but that advice is supported by little robust evidence for the general population. Randomized controlled trials have not strongly supported the benefit of salt reduction for average people. Indeed, we see evidence that low salt might pose as great a risk.

Methodological Challenges

The question “Is salt bad for you?” is ill-posed. Evidence and reasoning say this question oversimplifies a complex issue: sodium’s effects vary by individual (e.g., salt sensitivity, genetics), diet (e.g., processed vs. whole foods), and context (e.g., baseline blood pressure, activity level). Science doesn’t deliver binary truths. Modern science gives probabilistic models, refined through iterative testing.

While randomized controlled trials (RCTs) have shown that reducing sodium intake can lower blood pressure, especially in sensitive groups, observational studies show that extremely low sodium is associated with poor health. This association may reflect reverse causality: the data may simply reveal that sicker people eat less, not that they are harmed by low salt. Reading the association as harm from low salt would then be an error in reasoning. This complexity reflects the limitations of study design and the challenges of isolating causal relationships in real-world populations. The above graph is a fairly typical dose-response curve for any nutrient.
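Reverse causality is easy to produce on purpose. The toy simulation below is hypothetical throughout: the 20% illness rate, the intake distributions, the event risks, and the 3 g/day cutoff are invented for illustration and are not taken from any salt study. In it, illness lowers sodium intake and raises cardiovascular risk, while sodium itself has no effect at all. A crude comparison still shows more events at low intake; comparing within each illness stratum makes the gap largely disappear.

```python
import random


def simulate(n: int = 50_000, seed: int = 1):
    """Toy population in which illness drives both low sodium intake and events.

    Sodium has NO causal effect on events here. All parameters are hypothetical.
    """
    rng = random.Random(seed)
    rows = []
    for _ in range(n):
        sick = rng.random() < 0.2  # hypothetical 20% prevalence of illness
        # Sicker people eat less, so their sodium intake (g/day) is shifted down.
        sodium = rng.gauss(2.5 if sick else 3.5, 0.6)
        # Event risk depends only on illness, never on sodium.
        event = rng.random() < (0.15 if sick else 0.05)
        rows.append((sodium, sick, event))
    return rows


def event_rate(rows, low_sodium: bool, sick=None, cutoff: float = 3.0) -> float:
    """Event rate among people below (low_sodium=True) or at/above the cutoff.

    If sick is True or False, restrict to that illness stratum; None means everyone.
    """
    group = [
        event
        for sodium, is_sick, event in rows
        if (sodium < cutoff) == low_sodium and (sick is None or is_sick == sick)
    ]
    return sum(group) / len(group)


if __name__ == "__main__":
    rows = simulate()
    # Crude comparison: low intake looks dangerous.
    print("crude     low:", round(event_rate(rows, True), 3),
          "high:", round(event_rate(rows, False), 3))
    # Comparison within each illness stratum: the gap largely disappears.
    for stratum in (False, True):
        print(f"sick={stratum}", "low:", round(event_rate(rows, True, stratum), 3),
              "high:", round(event_rate(rows, False, stratum), 3))
```

In real cohorts the hidden cause is rarely measured this cleanly, so stratifying cannot fully rule it out.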

The salt debate also underscores the inherent difficulty of studying diet and health. Total caloric intake, physical activity, genetic variation, and compliance all confound the relationship between sodium and health outcomes. Few studies look at salt intake as a fraction of body weight. If sodium recommendations were expressed as sodium density (mg/kcal), it might help accommodate individual energy needs and eating patterns more effectively.
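Sodium density is a simple ratio of sodium to food energy. The sketch below is a minimal Python illustration, not an official method; the 2,000 kcal/day reference diet used to translate the 2,300 mg cap into a density is an assumption for illustration, and the function names are hypothetical.

```python
def sodium_density(sodium_mg: float, energy_kcal: float) -> float:
    """Sodium density: milligrams of sodium per kilocalorie of food energy."""
    if energy_kcal <= 0:
        raise ValueError("energy intake must be positive")
    return sodium_mg / energy_kcal


def sodium_allowance(density_mg_per_kcal: float, energy_kcal: float) -> float:
    """Daily sodium (mg) implied by a density target at a given energy intake."""
    return density_mg_per_kcal * energy_kcal


if __name__ == "__main__":
    # Hypothetical: treat a 2,300 mg/day cap as if it were set for a 2,000 kcal/day diet.
    target = sodium_density(2300, 2000)
    print(f"density target: {target:.2f} mg/kcal")
    for kcal in (1600, 2000, 3000):
        print(f"{kcal} kcal/day -> {sodium_allowance(target, kcal):.0f} mg sodium/day")
```

Under that assumption the target is 1.15 mg/kcal, which scales to 1,840 mg for someone eating 1,600 kcal and 3,450 mg for someone eating 3,000 kcal, instead of one fixed number for everyone.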

Science as an Iterative Process

Despite flaws in early studies and the polemics of dissenters, the scientific community continues to refine its understanding. For example, Japan’s national sodium reduction efforts since the 1970s have coincided with significant declines in stroke mortality, suggesting real-world benefits to moderation, even if the exact causal mechanisms remain complex.

Through a Kuhnian lens, we see a dominant paradigm shaped by institutional consensus and refined by accumulating evidence. But we also see the system’s limits: anomalies, confounding variables, and methodological disputes that resist easy resolution.

Contrarians, though sometimes rhetorically provocative or methodologically uneven, play a crucial role. Like the “puzzle-solvers” and “revolutionaries” in Kuhn’s model, they pressure the scientific establishment to reexamine assumptions and tighten methods. This isn’t a flaw in science; it’s the process at work.

Salt isn’t simply “good” or “bad.” The better scientific question is more conditional: How does salt affect different individuals, in which contexts, and through what mechanisms? Answering this requires humility, robust methodology, and the acceptance that progress usually comes in increments. Science moves forward not despite uncertainty, disputation and contradiction but because of them.

After the Applause: Heilbron Rereads Feyerabend

Posted by Bill Storage in History of Science, Philosophy of Science on June 4, 2025

A decade ago, in a Science, Technology and Society (STS) roundtable, I brought up Paul Feyerabend, who was certainly familiar to everyone present. I said that his demand for a separation of science and state – his call to keep science from becoming a tool of political authority – seemed newly relevant in the age of climate science and policy entanglement. Before I could finish the thought, someone cut in: “You can’t use Feyerabend to support republicanism!”

I hadn’t made an argument. Feyerabend was being claimed as someone who belonged to one side of a cultural war. His ideas were secondary. That moment stuck with me, not because I was misunderstood, but because Feyerabend was. And maybe he would have loved that. He was ambiguous by design. The trouble is that his deliberate opacity has hardened, over time, into distortion.

Feyerabend survives in fragments and footnotes. He’s the folk hero who overturned Method and danced on its ruins. He’s a cautionary tale: the man who gave license to science denial, epistemic relativism, and rhetorical chaos. You’ll find him invoked in cultural studies and critiques of scientific rationality, often with little more than the phrase “anything goes” as evidence. He’s also been called “the worst enemy of science.”

Against Method is remembered – or reviled – as a manifesto for intellectual anarchy. But “manifesto” doesn’t fit at all. It didn’t offer a vision, a list of principles, or a path forward. It has no normative component. It offered something stranger: a performance.

Feyerabend warned readers in the preface that the book would contradict itself, that it wasn’t impartial, and that it was meant to persuade, not instruct. He said – plainly and explicitly – that later parts would refute earlier ones. It was, in his words, a “tendentious” argument. And yet neither its admirers nor its critics have taken that warning seriously.

Against Method has become a kind of Rorschach test. For some, it’s license; for others, sabotage. Few ask what Feyerabend was really doing – or why he chose that method to attack Method. A few of us have long argued that Against Method has been misread. It was never meant as a guidebook or a threat, but as a theatrical critique staged to provoke and destabilize something that badly needed destabilizing.

That, I was pleased to learn, is also the argument made quietly and precisely in the last published work of historian John Heilbron. It may be the most honest reading of Feyerabend we’ve ever had.

John once told me that, unlike Kuhn, he had “the metabolism of a historian,” a phrase that struck me later as a perfect self-diagnosis: patient, skeptical, and slow-burning. He’d been at Berkeley when Feyerabend was still strutting the halls in full flair – the accent, the dramatic pronouncements, the partying. John didn’t much like him. He said so over lunch, on walks, at his house or mine. Feyerabend was hungry for applause, and John disapproved of his personal appetites and the way he flaunted them.

And yet… John’s recent piece on Feyerabend – the last thing he ever published – is microscopically delicate, charitable, and clear-eyed. John’s final chapter in Stefano Gattei’s recent book, Feyerabend in Dialogue, contains no score-settling, no demolition. Just a forensic mind trained to separate signal from noise. If Against Method is a performance, Heilbron doesn’t boo it offstage. He watches it again, closely, and tells us how it was done. Feyerabend through Heilbron’s lens is a performance reframed.

If anyone was positioned to make sense of Feyerabend, rhetorically, philosophically, and historically, it was Heilbron – Thomas Kuhn’s first graduate student, a lifelong physicist-turned-historian, and an expert on both early modern science and quantum theory’s conceptual tangles. His work on Galileo, Bohr, and the Scientific Revolution was always precise, occasionally sly, and never impressed by performance for performance’s sake.

That care is clearest in his treatment of Against Method’s most famous figure: Galileo. Feyerabend made Galileo the centerpiece of his case against scientific method – not as a heroic rationalist, but as a cunning rhetorician who won not because of superior evidence, but because of superior style. He compared Galileo to Goebbels, provocatively, to underscore how persuasion, not demonstration, drove the acceptance of heliocentrism. In Feyerabend’s hands, Galileo became a theatrical figure, a counterweight to the myth of Enlightenment rationality.

Heilbron dismantles this with the precision of someone who has lived in Galileo’s archives. He shows that while Galileo lacked a modern theory of optics, he was not blind to his telescope’s limits. He cross-checked, tested, and refined. He triangulated with terrestrial experiments. He understood that instruments could deceive, and worked around that risk with repetition and caution. The image of Galileo as a showman peddling illusions doesn’t hold up. Galileo, flaws acknowledged, was a working proto-scientist, attentive to the fragility of his tools.

Heilbron doesn’t mythologize Galileo; his 2010 Galileo makes that clear. But he rescues Galileo from Feyerabend’s caricature. In doing so, he models something Against Method never offered: a historically grounded, philosophically rigorous account of how science proceeds when tools are new, ideas unstable, and theory underdetermined by data.

To be clear, Galileo was no model of transparency. He framed the Dialogue as a contest between Copernicus and Ptolemy, though he knew Tycho Brahe’s hybrid system was the more serious rival. He pushed his theory of tides past what his evidence could support, ignoring counterarguments – even from Cardinal Bellarmine – and overstating the case for Earth’s motion.

Heilbron doesn’t conceal these. He details them, but not to dismiss. For him, these distortions are strategic flourishes – acts of navigation by someone operating at the edge of available proof. They’re rhetorical, yes, but grounded in observation, subject to revision, and paid for in methodological care.

That’s where the contrast with Feyerabend sharpens. Feyerabend used Galileo not to advance science, but to challenge its authority. More precisely, to challenge Method as the defining feature of science. His distortions – minimizing Galileo’s caution, questioning the telescope, reimagining inquiry as theater – were made not in pursuit of understanding, but in service of a larger philosophical provocation. This is the line Heilbron quietly draws: Galileo bent the rules to make a case about nature; Feyerabend bent the past to make a case about method.

In his final article, Heilbron makes four points. First, that the Galileo material in Against Method – its argumentative keystone – is historically slippery and technically inaccurate. Feyerabend downplays empirical discipline and treats rhetorical flourish as deception. Heilbron doesn’t call this dishonest. He calls it stagecraft.

Second, that Feyerabend’s grasp of classical mechanics, optics, and early astronomy was patchy. His critique of Galileo’s telescope rests on anachronistic assumptions about what Galileo “should have” known. He misses the trial-based, improvisational reasoning of early instrumental science. Heilbron restores that context.

Third, Heilbron credits Feyerabend’s early engagement with quantum mechanics – especially his critique of von Neumann’s no-hidden-variables proof and his alignment with David Bohm’s deterministic alternative. Feyerabend’s philosophical instincts were sharp.

And fourth, Heilbron tracks how Feyerabend’s stance unraveled – oscillating between admiration and disdain for Popper, Bohr, and even his earlier selves. He supported Bohm against Bohr in the 1950s, then defended Bohr against Popper in the 1970s. Heilbron doesn’t call this hypocrisy. He calls it instability built into the project itself: Feyerabend didn’t just critique rationalism – he acted out its undoing. If this sounds like a takedown, it isn’t. It’s a reconstruction – calm, slow, impartial. The rare sort that shows us not just what Feyerabend said, but where he came apart.

Heilbron reminds us what some have forgotten and many more never knew: that Feyerabend was once an insider. Before Against Method, he was embedded in the conceptual heart of quantum theory. He studied Bohm’s challenge to Copenhagen while at LSE, helped organize the 1957 Colston symposium in Bristol, and presented a paper there on quantum measurement theory. He stood among physicists of consequence – Bohr, Bohm, Podolsky, Rosen, Dirac, and Pauli – at a time when several of them were struggling to articulate alternatives to an orthodoxy, the Copenhagen interpretation, that they found inadequate.

With typical wit, Heilbron notes that von Neumann’s no-hidden-variables proof “was widely believed, even by people who had read it.” Feyerabend saw that dogma was hiding inside the math – and tried to smoke it out.

Late in life, Feyerabend’s provocations would ripple outward in unexpected directions. In a 1990 lecture at Sapienza University, Cardinal Joseph Ratzinger – later Pope Benedict XVI – quoted Against Method approvingly. He cited Feyerabend’s claim that the Church had been more reasonable than Galileo in the affair that defined their rupture. When Ratzinger’s 2008 return visit was canceled due to protests about that quotation, the irony was hard to miss. The Church, once accused of silencing science, was being silenced by it, and stood accused of quoting a philosopher who spent his life telling scientists to stop pretending they were priests.

We misunderstood Feyerabend not because he misled us, but because we failed to listen the way Heilbron did.

Anarchy and Its Discontents: Paul Feyerabend’s Critics

Posted by Bill Storage in History of Science, Philosophy of Science on June 3, 2025

(For and against Against Method)

Paul Feyerabend’s 1975 Against Method and his related works made bold claims about the history of science, particularly the Galileo affair. He argued that science progressed not because of adherence to any specific method, but through what he called epistemological anarchism. He said that Galileo’s success was due in part to rhetoric, metaphor, and politics, not just evidence.

Some critics, especially physicists and historically rigorous philosophers of science, have pointed out technical and historical inaccuracies in Feyerabend’s treatment of physics. Here are some examples of the alleged errors and distortions:

Misunderstanding Inertial Frames in Galileo’s Defense of Copernicanism

Feyerabend argued that Galileo’s arguments for heliocentrism were not based on superior empirical evidence, and that Galileo used rhetorical tricks to win support. He claimed that Galileo simply lacked any means of distinguishing heliocentric from geocentric models empirically, so his arguments were no more rational than those of Tycho Brahe and other opponents.

His critics responded by saying that Galileo’s arguments based on the phases of Venus and Jupiter’s moons were empirically decisive against the Ptolemaic model. This is unarguable, though whether Galileo had empirical evidence to overthrow Tycho Brahe’s hybrid model is a much more nuanced matter.

Critics like Ronald Giere, John Worrall, and Alan Chalmers (What Is This Thing Called Science?) argued that Feyerabend underplayed how strong Galileo’s observational case actually was. They say Feyerabend confused the issue of whether Galileo had a conclusive argument with whether he had a better argument.

This warrants some unpacking. Specifically, what makes an argument – a model, a theory – better? Criteria might include:

- Empirical adequacy – Does the theory fit the data? (Bas van Fraassen)

- Simplicity – Does the theory avoid unnecessary complexity? (Carl Hempel)

- Coherence – Is it internally consistent? (Paul Thagard)

- Explanatory power – Does it explain more than rival theories? (Wesley Salmon)

- Predictive power – Does it generate testable predictions? (Karl Popper, Hempel)

- Fertility – Does it open new lines of research? (Lakatos)

Some argue that Galileo’s model (Copernicanism, heliocentrism) was obviously simpler than Brahe’s. But simplicity opens another can of philosophical worms. What counts as simple? Fewer entities? Fewer laws? More symmetry? Copernicus had simpler planetary order but required a moving Earth. And Copernicus still relied on epicycles, so heliocentrism wasn’t empirically simpler at first. Given the evidence of the time, a static Earth can be seen as simpler; you don’t need to explain the lack of wind and the “straight” path of falling bodies. Ultimately, this point boils down to aesthetics, not math or science. Galileo and later Newtonians valued mathematical elegance and unification. Aristotelians, the church, and Tychonians valued intuitive compatibility with observed motion.

Feyerabend also downplayed Galileo’s use of the principle of inertia, which was a major theoretical advance and central to explaining why we don’t feel the Earth’s motion.

Misuse of Optical Theory in the Case of Galileo’s Telescope

Feyerabend argued that Galileo’s use of the telescope was suspect because Galileo had no good optical theory and thus no firm epistemic ground for trusting what he saw.

His critics say that while Galileo didn’t have a fully developed theory of optics (he had no wave theory of light, for example), his empirical testing and calibration of the telescope were rigorous by the standards of the time.

Feyerabend is accused of anachronism – judging Galileo’s knowledge of optics by modern standards and therefore misrepresenting the robustness of his observational claims. Historians like Mario Biagioli and Stillman Drake point out that Galileo cross-verified telescope observations with the naked eye and used repetition, triangulation, and replication by others to build credibility.

Equating All Theories as Rhetorical Equals

Feyerabend in some parts of Against Method claimed that rival theories in the history of science were only judged superior in retrospect, and that even “inferior” theories like astrology or Aristotelian cosmology had equal rational footing at the time.

Historians like Steven Shapin (How to Be Antiscientific) and David Wootton (The Invention of Science) say that this relativism erases real differences in how theories were judged even in Galileo’s time. Though they did not state them in today’s language, Galileo and his rivals clearly saw predictive power, coherence, and observational support as fundamental criteria for choosing between theories.

Feyerabend’s polemical, theatrical tone often flattened the epistemic distinctions that working scientists and philosophers actually used, especially during the Scientific Revolution. His analysis of “anything goes” often ignored the actual disciplinary practices of science, especially in physics.

Failure to Grasp the Mathematical Structure of Physics

Scientists – those broad enough to know who Feyerabend was – often claim that he misunderstood or ignored the role of mathematics in theory-building, especially in Newtonian mechanics and post-Galilean developments. In Against Method, Feyerabend emphasizes metaphor and persuasion over mathematics. That emphasis is valuable when aimed at the rhetorical and political sides of science, but it underrates the internal mathematical constraints that shape physical theories, even for Galileo.

Imre Lakatos, his friend and critic, called Feyerabend’s work a form of “intellectual sabotage”, arguing that he distorted both the history and logic of physics.

Misrepresenting Quantum Mechanics

Feyerabend wrote about Bohr and Heisenberg in Philosophical Papers and later essays. Critics like Abner Shimony and Mario Bunge charge that Feyerabend misrepresented or misunderstood Bohr’s complementarity as relativist, when Bohr’s position was more subtle and aimed at objective constraints on language and measurement.

Feyerabend certainly fails to understand the mathematical formalism underpinning quantum mechanics. This weakens his broader claims about theory incommensurability.

Feyerabend’s erroneous critique of Niels Bohr is seen in his 1958 paper “Complementarity”:

“Bohr’s point of view may be introduced by saying that it is the exact opposite of [realism]. For Bohr the dual aspect of light and matter is not the deplorable consequence of the absence of a satisfactory theory, but a fundamental feature of the microscopic level. For him the existence of this feature indicates that we have to revise … the [realist] ideal of explanation.” (more on this in an upcoming post)

Epistemic Complaints

Beyond criticisms that he failed to grasp the relevant math and science, Feyerabend is accused of selectively reading or distorting historical episodes to fit the broader rhetorical point that science advances by breaking rules, and that no consistent method governs progress. Feyerabend’s claim that in science “anything goes” can be seen as epistemic relativism, leaving no rational basis to prefer one theory over another or to prefer science over astrology, myth, or pseudoscience.

Critics say Feyerabend blurred the distinction between how theories are argued (rhetoric) and how they are justified (epistemology). He is accused of conflating persuasive strategy with epistemic strength, thereby undermining the very principle of rational theory choice.

Some take this criticism to imply that methodological norms are the sole basis for theory choice. Feyerabend’s “anarchism” may demolish authority, but is anything left in its place except a vague appeal to democratic or cultural pluralism? Norman Levitt and Paul Gross, especially in Higher Superstition: The Academic Left and Its Quarrels with Science (1994), argue this point, along with saying Feyerabend attacked a caricature of science.

Personal note/commentary: In my view, Levitt and Gross did some great work, but Higher Superstition isn’t it. I bought the book shortly after its release because I was disgusted with weaponized academic anti-rationalism, postmodernism, relativism, and anti-science tendencies in the humanities, especially those that claimed to be scientific. I was sympathetic to Higher Superstition’s mission but, on reading it, was put off by its oversimplifications and lack of philosophical depth. Their arguments weren’t much better than those of the postmodernists. Critics of science in the humanities overreached and argued poorly, but they were responding to legitimate concerns in the philosophy of science. Specifically:

- Underdetermination – Two incompatible theories often fit the same data. Why do scientists prefer one over another? As Kuhn argued, social dynamics play a role.

- Theory-laden Observations – Observations are shaped by prior theory and assumptions, so science is not just “reading the book of nature.”

- Value-laden Theories – Choices to prioritize public health metrics like life expectancy and morbidity (as opposed to autonomy or quality of life) are value judgments that trickle into epidemiology.

- Historical Variability of Consensus – What’s considered rational or obvious changes over time (phlogiston, luminiferous ether, miasma theory).

- Institutional Interest and Incentives – String theory’s share of limited research funding, climate science in service of energy policy and social agenda.

- The Problem of Reification – IQ as a measure of intelligence has been reified in policy and education, despite deep theoretical and methodological debates about what it measures.

- Political or Ideological Capture – Marxist-Leninist science and eugenics were cases where ideology shaped what counted as science.

Higher Superstition and my unexpected negative reaction to it are what brought me to the discipline of History and Philosophy of Science.

Conclusion

Feyerabend exaggerated the uncertainty of early modern science, downplayed the empirical gains Galileo and others made, and misrepresented or misunderstood some of the technical content of physics. His mischievous rhetorical style made it hard to tell where serious argument ended and performance began. Rather than offering a coherent alternative methodology, Feyerabend’s value lay in exposing the fragility and contingency of scientific norms. He made it harder to treat methodological rules as timeless or universal by showing how easily they fracture under the pressure of real historical cases.

In a following post, I’ll review the last piece John Heilbron wrote before he died, Feyerabend, Bohr and Quantum Physics, which appeared in Stefano Gattei’s Feyerabend in Dialogue, a set of essays marking the 100th anniversary of Feyerabend’s birth.

Paul Feyerabend. Photo courtesy of Grazia Borrini-Feyerabend.

Statistical Reasoning in Healthcare: Lessons from Covid-19

Posted by Bill Storage in History of Science, Philosophy of Science, Probability and Risk on May 6, 2025

For centuries, medicine has navigated the tension between science and uncertainty. The Covid pandemic exposed this dynamic vividly, revealing both the limits and possibilities of statistical reasoning. From diagnostic errors to vaccine communication, the crisis showed that statistics is not just a technical skill but a philosophical challenge, shaping what counts as knowledge, how certainty is conveyed, and who society trusts.

Historical Blind Spot

Medicine’s struggle with uncertainty has deep roots. In antiquity, Galen’s reliance on reasoning over empirical testing set a precedent for overconfidence insulated by circular logic. If his treatments failed, it was because the patient was incurable. Enlightenment physicians, like those who bled George Washington to death, perpetuated this resistance to scrutiny. Voltaire wrote, “The art of medicine consists in amusing the patient while nature cures the disease.” The scientific revolution and the Enlightenment inverted Galen’s hierarchy, yet the importance of that reversal is often neglected, even by practitioners. Even in the 20th century, pioneers like Ernest Codman faced ostracism for advocating outcome tracking, highlighting a medical culture that prized prestige over evidence. While evidence-based practice has since gained traction, a statistical blind spot persists, rooted in training and tradition.

The Statistical Challenge

Physicians often struggle with probabilistic reasoning, as shown in a 1978 Harvard study where only 18% correctly applied Bayes’ Theorem to a diagnostic test scenario (a disease with 1/1,000 prevalence and a 5% false positive rate yields a ~2% chance of disease given a positive test). A 2013 follow-up showed marginal improvement (23% correct). Medical education, which prioritizes biochemistry over probability, is partly to blame. Abusive lawsuits, cultural pressures for decisiveness, and patient demands for certainty further discourage embracing doubt, as Daniel Kahneman’s work on overconfidence suggests.
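The arithmetic behind the ~2% figure is a direct application of Bayes’ Theorem. The worked equation below assumes, as the classic version of the problem does, that the test detects every true case (100% sensitivity). Here D denotes having the disease and + a positive result.

```latex
P(D \mid +) = \frac{P(+ \mid D)\,P(D)}{P(+ \mid D)\,P(D) + P(+ \mid \neg D)\,P(\neg D)}
            = \frac{1 \times 0.001}{1 \times 0.001 + 0.05 \times 0.999}
            = \frac{0.001}{0.05095} \approx 0.0196
```

Put in counts: among 1,000 people tested, about one has the disease, while roughly 50 of the 999 healthy people test positive falsely. A positive result therefore leaves only about a 2% chance of disease.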

Neil Ferguson and the Authority of Statistical Models

Epidemiologist Neil Ferguson and his team at Imperial College London produced a model in March 2020 predicting up to 500,000 UK deaths without intervention. The US figure could top 2 million. These weren’t forecasts in the strict sense but scenario models, conditional on various assumptions about disease spread and response.

Ferguson’s model was extraordinarily influential, shifting the UK and US from containment to lockdown strategies. It also drew criticism for opaque code, unverified assumptions, and the sheer weight of its political influence. His eventual resignation from the UK’s Scientific Advisory Group for Emergencies (SAGE) over a personal lockdown violation further politicized the science.

From the perspective of history of science, Ferguson’s case raises critical questions: When is a model scientific enough to guide policy? How do we weigh expert uncertainty under crisis? Ferguson’s case shows that modeling straddles a line between science and advocacy. It is, in Kuhnian terms, value-laden theory.

The Pandemic as a Pedagogical Mirror

The pandemic was a crucible for statistical reasoning. Successes included the clear communication of mRNA vaccine efficacy (95% relative risk reduction) and data-driven ICU triage using the SOFA score, though both had limitations. Failures were stark: clinicians misread PCR test results by ignoring pre-test probability, echoing the Harvard study’s findings, while policymakers fixated on case counts over deaths per capita. The “6-foot rule,” based on outdated droplet models, persisted despite disconfirming evidence, reflecting resistance to updating models, inability to apply statistical insights, and institutional inertia. Specifics of these issues are revealing.

Mostly Positive Examples:

- Risk Communication in Vaccine Trials (1)

The early mRNA vaccine announcements in 2020 offered clear statistical framing by emphasizing a 95% relative risk reduction in symptomatic COVID-19 for vaccinated individuals compared to placebo, sidelining raw case counts for a punchy headline. While clearer than many public health campaigns, this focus omitted absolute risk reduction and uncertainties about asymptomatic spread, falling short of the full precision needed to avoid misinterpretation.

- Clinical Triage via Quantitative Models (2)

During peak ICU shortages, hospitals adopted the SOFA score, originally a tool for assessing organ dysfunction, to guide resource allocation with a semi-objective, data-driven approach. While an improvement over ad hoc clinical judgment, SOFA faced challenges like inconsistent application and biases that disadvantaged older or chronically ill patients, limiting its ability to achieve fully equitable triage.

- Wastewater Epidemiology (3)

Public health researchers used viral RNA in wastewater to monitor community spread, reducing the sampling biases of clinical testing. This statistical surveillance, conducted outside clinics, offered high public health relevance but faced biases and interpretive challenges that tempered its precision.
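The gap between relative and absolute risk reduction in the vaccine-trial example is easiest to see with numbers. The Python sketch below uses hypothetical trial arms of 20,000 participants each, with 8 symptomatic cases among the vaccinated and 160 among placebo recipients. These counts are illustrative assumptions chosen to produce a 95% relative reduction, not the published trial data, and the helper names (ArmCounts, risk_summary) are likewise hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ArmCounts:
    """Symptomatic cases and participants in one trial arm (hypothetical numbers)."""
    cases: int
    participants: int

    @property
    def risk(self) -> float:
        # Fraction of the arm that developed symptomatic disease during the trial
        return self.cases / self.participants


def risk_summary(vaccine: ArmCounts, placebo: ArmCounts) -> dict:
    """Return relative risk reduction, absolute risk reduction, and number needed to vaccinate."""
    rrr = 1 - vaccine.risk / placebo.risk  # the "95%" style headline figure
    arr = placebo.risk - vaccine.risk      # difference in raw risk between arms
    nnv = 1 / arr                          # people vaccinated per symptomatic case prevented
    return {
        "relative_risk_reduction": rrr,
        "absolute_risk_reduction": arr,
        "number_needed_to_vaccinate": nnv,
    }


if __name__ == "__main__":
    # Hypothetical arms of 20,000 each; not the actual trial figures
    summary = risk_summary(ArmCounts(8, 20_000), ArmCounts(160, 20_000))
    print(f"RRR: {summary['relative_risk_reduction']:.0%}")
    print(f"ARR: {summary['absolute_risk_reduction']:.2%}")
    print(f"NNV: {summary['number_needed_to_vaccinate']:.0f}")
```

With these assumed counts, the same result reads as a 95% relative risk reduction but only a 0.76 percentage-point absolute reduction, or about 132 people vaccinated to prevent one symptomatic case over the trial window. The headline framing reported the first number and left out the other two.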

Mostly Negative Examples:

- Misinterpretation of Test Results (4)

Early in the COVID-19 pandemic, many clinicians and media figures misunderstood diagnostic test accuracy, misreading PCR and antigen test results by overlooking pre-test probability. This caused false reassurance or unwarranted alarm, though some experts mitigated errors with Bayesian reasoning. This was precisely the type of mistake highlighted in the Harvard study decades earlier.

- Cases vs. Deaths (5)

One of the most persistent statistical missteps during the pandemic was the policy focus on case counts, devoid of context. Case numbers ballooned or dipped not only due to viral spread but due to shifts in testing volume, availability, and policies. COVID deaths per capita rather than case count would have served as a more stable measure of public health impact. Infection fatality rates would have been better still.

- Shifting Guidelines and Aerosol Transmission (6)

The “6-foot rule” was based on outdated models of droplet transmission. When evidence of aerosol spread emerged, guidance failed to adapt. Critics pointed out the statistical conservatism in the risk modeling and its impact on mental health and the economy. Institutional inertia and politics prevented vital course corrections.
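The test-interpretation failure in example (4) comes down to pre-test probability. This Python sketch applies Bayes’ Theorem to one test at two different pre-test probabilities. The sensitivity (70%) and specificity (99%) are assumed round numbers for illustration, not measured values for any particular PCR or antigen assay, and the function name post_test_probabilities is hypothetical.

```python
def post_test_probabilities(pretest: float, sensitivity: float, specificity: float) -> tuple[float, float]:
    """Return (P(infected | positive), P(infected | negative)) using Bayes' theorem."""
    # Overall chance of a positive result, across infected and uninfected people
    p_positive = sensitivity * pretest + (1 - specificity) * (1 - pretest)
    # Positive predictive value: share of positives who are truly infected
    ppv = sensitivity * pretest / p_positive
    # Share of negatives who are nonetheless infected (missed cases)
    p_negative = 1 - p_positive
    infected_given_negative = (1 - sensitivity) * pretest / p_negative
    return ppv, infected_given_negative


if __name__ == "__main__":
    # Assumed, illustrative test characteristics (not data for a real assay)
    SENSITIVITY, SPECIFICITY = 0.70, 0.99
    # Hypothetical pre-test probabilities for two clinical situations
    scenarios = {
        "asymptomatic screening, low prevalence": 0.001,
        "symptomatic close contact": 0.50,
    }
    for label, pretest in scenarios.items():
        ppv, missed = post_test_probabilities(pretest, SENSITIVITY, SPECIFICITY)
        print(f"{label}: P(infected | +) = {ppv:.1%}, P(infected | -) = {missed:.1%}")
```

Under these assumptions, a positive result at a 0.1% pre-test probability means only about a 6.5% chance of infection (unwarranted alarm). A negative result at a 50% pre-test probability still leaves about a 23% chance of infection (false reassurance). The test is identical in both cases. Only the prior differs.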

(I’ll defend these six examples in another post.)

A Philosophical Reckoning

Statistical reasoning is not just a mathematical tool – it’s a window into how science progresses, how it builds trust, and how it earns its special epistemic status. In Kuhnian terms, the pandemic exposed the fragility of our current normal science. We should expect methodological chaos and pluralism within medical knowledge-making. Science during COVID-19 was messy, iterative, and often uncertain – and that’s in some ways just how science works.

This doesn’t excuse failures in statistical reasoning. It suggests that training in medicine should not only include formal biostatistics, but also an eye toward history of science – so future clinicians understand the ways that doubt, revision, and context are intrinsic to knowledge.

A Path Forward

Medical education must evolve. First, integrate Bayesian philosophy into clinical training, using relatable case studies to teach probabilistic thinking. Second, foster epistemic humility, framing uncertainty as a strength rather than a flaw. Third, incorporate the history of science – figures like Codman and Cochrane – to contextualize medicine’s empirical evolution. These steps can equip physicians to navigate uncertainty and communicate it effectively.

Conclusion

Covid was a lesson in the fragility and potential of statistical reasoning. It revealed medicine’s statistical struggles while highlighting its capacity for progress. By training physicians to think probabilistically, embrace doubt, and learn from history, medicine can better manage uncertainty – not as a liability, but as a cornerstone of responsible science. As John Heilbron might say, medicine’s future depends not only on better data – but on better historical memory, and the nerve to rethink what counts as knowledge.

______

All who drink of this treatment recover in a short time, except those whom it does not help, all of whom die. It is obvious, therefore, that it fails only in incurable cases. – Galen

Extraordinary Popular Miscarriages of Science, Part 6 – String Theory

Posted by Bill Storage in History of Science, Philosophy of Science on May 3, 2025

Introduction: A Historical Lens on String Theory

In 2006, I met John Heilbron, widely credited with turning the history of science from an emerging idea into a professional academic discipline. While James Conant and Thomas Kuhn laid the intellectual groundwork, it was Heilbron who helped build the institutions and frameworks that gave the field its shape. Through John I came to see that the history of science is not about names and dates – it’s about how scientific ideas develop, and why. It explores how science is both shaped by and shapes its cultural, social, and philosophical contexts. Science progresses not in isolation but as part of a larger human story.

The “discovery” of oxygen illustrates this beautifully. In the 18th century, Joseph Priestley, working within the phlogiston theory, isolated a gas he called “dephlogisticated air.” Antoine Lavoisier, using a different conceptual lens, reinterpreted it as a new element – oxygen – ushering in modern chemistry. This was not just a change in data, but in worldview.

When I met John, Lee Smolin’s The Trouble with Physics had just been published. Smolin, a physicist, critiques string theory not from outside science but from within its theoretical tensions. Smolin’s concerns echoed what I was learning from the history of science: that scientific revolutions often involve institutional inertia, conceptual blind spots, and sociopolitical entanglements.

My interest in string theory wasn’t about the physics. It became a test case for studying how scientific authority is built, challenged, and sustained. What follows is a distillation of 18 years of notes – string theory seen not from the lab bench, but from a historian’s desk.

A Brief History of String Theory

Despite its name, string theory is more accurately described as a theoretical framework – a collection of ideas that might one day lead to testable scientific theories. This alone is not a mark against it; many scientific developments begin as frameworks. Whether we call it a theory or a framework, it remains subject to a crucial question: does it offer useful models or testable predictions – or is it likely to in the foreseeable future?

String theory originated as an attempt to understand the strong nuclear force. In 1968, Gabriele Veneziano introduced a mathematical formula – the Veneziano amplitude – to describe the scattering of strongly interacting particles such as protons and neutrons. In 1971, Pierre Ramond incorporated supersymmetry into this approach, giving rise to superstrings that could account for both fermions and bosons. In 1974, Joël Scherk and John Schwarz discovered that the theory predicted a massless spin-2 particle with the properties of the hypothetical graviton. This led them to propose string theory not as a theory of the strong force, but as a potential theory of quantum gravity – a candidate “theory of everything.”

Around the same time, however, quantum chromodynamics (QCD) successfully explained the strong force via quarks and gluons, rendering the original goal of string theory obsolete. Interest in string theory waned, especially given its dependence on unobservable extra dimensions and lack of empirical confirmation.

That changed in 1984 when Michael Green and John Schwarz demonstrated that superstring theory could be anomaly-free in ten dimensions, reviving interest in its potential to unify all fundamental forces and particles. Researchers soon identified five mathematically consistent versions of superstring theory.

To reconcile ten-dimensional theory with the four-dimensional spacetime we observe, physicists proposed that the extra six dimensions are “compactified” into extremely small, curled-up spaces – typically represented as Calabi-Yau manifolds. This compactification allegedly explains why we don’t observe the extra dimensions.

In 1995, Edward Witten introduced M-theory, showing that the five superstring theories were different limits of a single 11-dimensional theory. By the early 2000s, researchers like Leonard Susskind and Shamit Kachru began exploring the so-called “string landscape” – a space of perhaps 10^500 (1 followed by 500 zeros) possible vacuum states, each corresponding to a different compactification scheme. This introduced serious concerns about underdetermination – the idea that available empirical evidence cannot determine which among many competing theories is correct.

Compactification introduces its own set of philosophical problems. Critics Lee Smolin and Peter Woit argue that compactification is not a prediction but a speculative rationalization: a move designed to save a theory rather than derive consequences from it. The enormous number of possible compactifications (each yielding different physics) makes string theory’s predictive power virtually nonexistent. The related challenge of moduli stabilization – specifying the size and shape of the compact dimensions – remains unresolved.

Despite these issues, string theory has influenced fields beyond high-energy physics. It has informed work in cosmology (e.g., inflation and the cosmic microwave background), condensed matter physics, and mathematics (notably algebraic geometry and topology). How deep and productive these connections run is difficult to assess without domain-specific expertise that I don’t have. String theory has, in any case, produced impressive mathematics. But mathematical fertility is not the same as scientific validity.

The Landscape Problem

Perhaps the most formidable challenge string theory faces is the landscape problem: the theory allows for an enormous number of solutions – on the order of 10^500. Each solution represents a possible universe, or “vacuum,” with its own physical constants and laws.

Why so many possibilities? The extra six dimensions required by string theory can be compactified in myriad ways. Each compactification, combined with possible energy configurations (called fluxes), gives rise to a distinct vacuum. This extreme flexibility means string theory can, in principle, accommodate nearly any observation. But this comes at the cost of predictive power.

Critics argue that if theorists can forever adjust the theory to match observations by choosing the right vacuum, the theory becomes unfalsifiable. On this view, string theory looks more like metaphysics than physics.

Some theorists respond by embracing the multiverse interpretation: all these vacua are real, and our universe is just one among many. The specific conditions we observe are then attributed to anthropic selection – we could only observe a universe that permits life like us. This view aligns with certain cosmological theories, such as eternal inflation, in which different regions of space settle into different vacua. But eternal inflation can exist independent of string theory, and none of this has been experimentally confirmed.

The Problem of Dominance

Since the 1980s, string theory has become a dominant force in theoretical physics. Major research groups at Harvard, Princeton, and Stanford focus heavily on it. Funding and institutional prestige have followed. Prominent figures like Brian Greene have elevated its public profile, helping transform it into both a scientific and cultural phenomenon.

This dominance raises concerns. Critics such as Smolin and Woit argue that string theory has crowded out alternative approaches like loop quantum gravity or causal dynamical triangulations. These alternatives receive less funding and institutional support, despite offering potentially fruitful lines of inquiry.

In The Trouble with Physics, Smolin describes a research culture in which dissent is subtly discouraged and young physicists feel pressure to align with the mainstream. He worries that this suppresses creativity and slows progress.

Estimates suggest that between 1,000 and 5,000 researchers work on string theory globally – a significant share of theoretical physics resources. Reliable numbers are hard to pin down.

Defenders of string theory argue that it has earned its prominence. They note that theoretical work is relatively inexpensive compared to experimental research, and that string theory remains the most developed candidate for unification. Still, the issue of how science sets its priorities – how it chooses what to fund, pursue, and elevate – remains contentious.

Wolfgang Lerche of CERN once called string theory “the Stanford propaganda machine working at its fullest.” As with climate science, 97% of string theorists agree that they don’t want to be defunded.

Thomas Kuhn’s Perspective

The logical positivists and Karl Popper would almost certainly dismiss string theory as unscientific due to its lack of empirical testability and falsifiability – core criteria in their respective philosophies of science. Thomas Kuhn would offer a more nuanced interpretation. He wouldn’t label string theory unscientific outright, but would express concern over its dominance and the marginalization of alternative approaches. In Kuhn’s framework, such conditions resemble the entrenchment of a paradigm during periods of normal science, potentially at the expense of innovation.

Some argue that string theory fits Kuhn’s model of a new paradigm, one that seeks to unify quantum mechanics and general relativity – two pillars of modern physics that remain fundamentally incompatible at high energies. Yet string theory has not brought about a Kuhnian revolution. It has not displaced existing paradigms, and its mathematical formalism is often incommensurable with traditional particle physics. From a Kuhnian perspective, the landscape problem may be seen as a growing accumulation of anomalies. But a paradigm shift requires a viable alternative – and none has yet emerged.

Lakatos and the Degenerating Research Program

Imre Lakatos offered a different lens, seeing science as a series of research programs characterized by a “hard core” of central assumptions and a “protective belt” of auxiliary hypotheses. A program is progressive if it predicts novel facts; it is degenerating if it resorts to ad hoc modifications to preserve the core.

For Lakatos, string theory’s hard core would be the idea that all particles are vibrating strings and that the theory unifies all fundamental forces. The protective belt would include compactification schemes, flux choices, and moduli stabilization – all adjusted to fit observations.

Critics like Sabine Hossenfelder argue that string theory is a degenerating research program: it absorbs anomalies without generating new, testable predictions. Others note that it is progressive in the Lakatosian sense because it has led to advances in mathematics and provided insights into quantum gravity. Historians of science are divided. Johansson and Matsubara (2011) argue that Lakatos would likely judge it degenerating; Cristin Chall (2019) offers a compelling counterpoint.

Perhaps string theory is progressive in mathematics but degenerating in physics.

The Feyerabend Bomb

Paul Feyerabend, whom Lee Smolin knew from his time at Harvard, was the iconoclast of 20th-century philosophy of science. Feyerabend would likely have dismissed string theory as a dogmatic, aesthetic fantasy. He might write something like:

“String theory dazzles with equations and lulls physics into a trance. It’s a mathematical cathedral built in the sky, a triumph of elegance over experience. Science flourishes in rebellion. Fund the heretics.”

Even if this caricature overshoots, Feyerabend’s tools offer a powerful critique:

- Untestability: String theory’s predictions remain out of reach. Its core claims – extra dimensions, compactification, vibrational modes – cannot be tested with current or even foreseeable technology. Feyerabend challenged the privileging of untested theories (e.g., Copernicanism in its early days) over empirically grounded alternatives.

- Monopoly and suppression: String theory dominates intellectual and institutional space, crowding out alternatives. Eric Weinstein recently said, in Feyerabendian tones, “its dominance is unjustified and has resulted in a culture that has stifled critique, alternative views, and ultimately has damaged theoretical physics at a catastrophic level.”

- Methodological rigidity: Progress in string theory is often judged by mathematical consistency rather than by empirical verification – an approach reminiscent of scholasticism. Feyerabend would point to Johannes Kepler’s early attempt to explain planetary orbits using a purely geometric model based on the five Platonic solids. Kepler devoted 17 years to this elegant framework before abandoning it when observational data proved it wrong.

- Sociocultural dynamics: The dominance of string theory stems less from empirical success than from the influence and charisma of prominent advocates. Figures like Brian Greene, with their public appeal and institutional clout, help secure funding and shape the narrative – effectively sustaining the theory’s privileged position within the field.

- Epistemological overreach: The quest for a “theory of everything” may be misguided. Feyerabend would favor many smaller, diverse theories over a single grand narrative.

Historical Comparisons

Proponents point out that other landmark theories emerged from mathematics well before their experimental confirmation, and they compare string theory to these historical cases. Examples include:

- Planet Neptune: Predicted by Urbain Le Verrier based on irregularities in Uranus’s orbit, observed in 1846.

- General Relativity: Einstein predicted the bending of light by gravity in 1915, confirmed by Arthur Eddington’s 1919 solar eclipse measurements.

- Higgs Boson: Predicted in 1964 by Peter Higgs and others, later incorporated into the Standard Model, observed at the Large Hadron Collider in 2012.

- Black Holes: Predicted by general relativity, first direct evidence from gravitational waves observed in 2015.

- Cosmic Microwave Background: Predicted from Big Bang cosmology by Ralph Alpher and Robert Herman (1948), discovered in 1965.

- Gravitational Waves: Predicted by general relativity, detected in 2015 by the Laser Interferometer Gravitational-Wave Observatory (LIGO).

But these examples differ in kind. Their predictions were always testable in principle and ultimately tested. String theory, in contrast, operates at the Planck scale (~10^19 GeV), far beyond what current or foreseeable experiments can reach.

Special Concern Over Compactification

A concern I have not seen discussed elsewhere – even among critics like Smolin or Woit – is the epistemological status of compactification itself. Would the idea ever have arisen apart from the need to reconcile string theory’s ten dimensions with the four-dimensional spacetime we experience?

Compactification appears ad hoc, lacking grounding in physical intuition. It asserts that dimensions themselves can be small and curled, yet concepts like “small” and “curled” are defined within dimensions, not of them. Saying a dimension is small is like saying that time – not a moment in time, but time itself – can be “soon” or short in duration. It misapplies the very conceptual framework through which such properties are understood. At best, it’s a strained metaphor; at worst, it’s a category mistake.

This conceptual inversion reflects a logical gulf that proponents overlook or ignore. They say compactification is a mathematical consequence of the theory, not a contrivance. But without grounding in physical intuition – a deeper concern than empirical support – compactification remains a fix, not a forecast.

Conclusion

String theory may well contain a correct theory of fundamental physics. But without any plausible route to identifying it, string theory as practiced is bad science. It absorbs talent and resources, marginalizes dissent, and stifles alternative research programs. It is extraordinarily popular – and a miscarriage of science.

Extraordinary Popular Miscarriages of Science, Part 5 – Climate Science

Posted by Bill Storage in History of Science on April 6, 2025

NASA reports that ninety-seven percent of climate scientists agree that human-caused climate change is happening.

As with earlier posts on popular miscarriages of science, I look at climate science through the lens of 20th-century historians and philosophers of science and conclude that climate science is epistemically thin.

To elaborate a bit, most sensible folk accept that climate science addresses a potentially critical concern and that it has many earnest and talented practitioners. Even so, it can be critiqued as bad science. We can do that without delving into the layers of claims, disputations, and counterarguments about the relationships among ice cores, CO₂ concentrations, and temperature. We can instead use the perspectives of prominent 20th-century historians and philosophers of science, including the Logical Positivists in general, positivist Carl Hempel in particular, Karl Popper, Thomas Kuhn, Imre Lakatos, and Paul Feyerabend. Each offers a distinct philosophical lens that highlights shortcomings in climate science’s methodologies and practices. I’ll explain each of those perspectives and why I think they’re important, and I’ll explore the critiques they would likely advance. These critiques don’t invalidate climate science as a field of inquiry, but they highlight serious logical and philosophical concerns about its methodologies, practices, and epistemic foundations.

The historians and philosophers invoked here were fundamentally concerned with the demarcation problem: how to distinguish good science from bad science and from pseudoscience on methodological grounds. They didn’t necessarily agree with each other. In some cases, like Kuhn versus Popper, they outright despised each other. All were flawed, but they were giants who shone brightly and presented systematic visions of how science works and what good science is.

Carnap, Ayer and the Positivists: Verification

The early Logical Positivists, particularly Rudolf Carnap and A.J. Ayer, saw empirical verification as the cornerstone of scientific claims. To be meaningful, a claim must be testable through observation or experiment. Climate science, while rooted in empirical data, struggles with verifiability because of its focus on long-term, global phenomena. Predictions about future consequences like sea level change, crop yield, hurricane frequency, and average temperature are not easily verifiable within a human lifespan or with current empirical methods. That might merely suggest that climate science is hard, not that it is bad. But decades of past predictions and retrodictions have been notoriously poor. Consequently, theories have been continuously revised in light of failed predictions. The reliance on indirect evidence – proxy data and computer simulations – rather than controlled experiments (which would be impossible or unethical) would not satisfy the positivists’ demand for direct, observable confirmation. Climatologist Michael Mann (originator of the “hockey stick” graph) often refers to climate simulation results as data. It is not – not in any sense that a positivist would use the term data. Positivists would see these difficulties and predictive failures as falling short of their strict criteria for scientific legitimacy.

Carl Hempel: Absence of Appeal to Universal Laws

The philosophy of Carl Hempel centered on the deductive-nomological model (aka covering-law model), which holds that a scientific explanation should be a deductive argument from universal, timeless laws of nature together with statements of specific observed conditions (empirical data). For Hempel, explanation and prediction were two sides of the same coin: if you can’t predict, then you cannot explain. For Hempel to judge a scientific explanation valid, deductive logic applied to laws of nature must confer nomic expectability upon the phenomenon being explained.
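For readers who want the structure at a glance, the covering-law model is often written as a schema, roughly following Hempel and Paul Oppenheim’s 1948 presentation. General laws and statements of antecedent conditions sit above the line, and the explanandum, the phenomenon to be explained, sits below it. The labels below are the conventional ones, offered as a sketch rather than a quotation:

```latex
\[
\begin{array}{ll}
L_1, L_2, \ldots, L_r & \text{(general laws of nature)} \\
C_1, C_2, \ldots, C_k & \text{(statements of antecedent conditions)} \\
\hline
E & \text{(description of the phenomenon to be explained)}
\end{array}
\]
```

Read after E is observed, the argument is an explanation. Read before E is observed, the same argument is a prediction. That is the symmetry behind Hempel’s claim that you cannot explain what you could not have predicted.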